## Diagram: Canonical RAG vs. Agentic/Multi-Agent RAG

### Overview

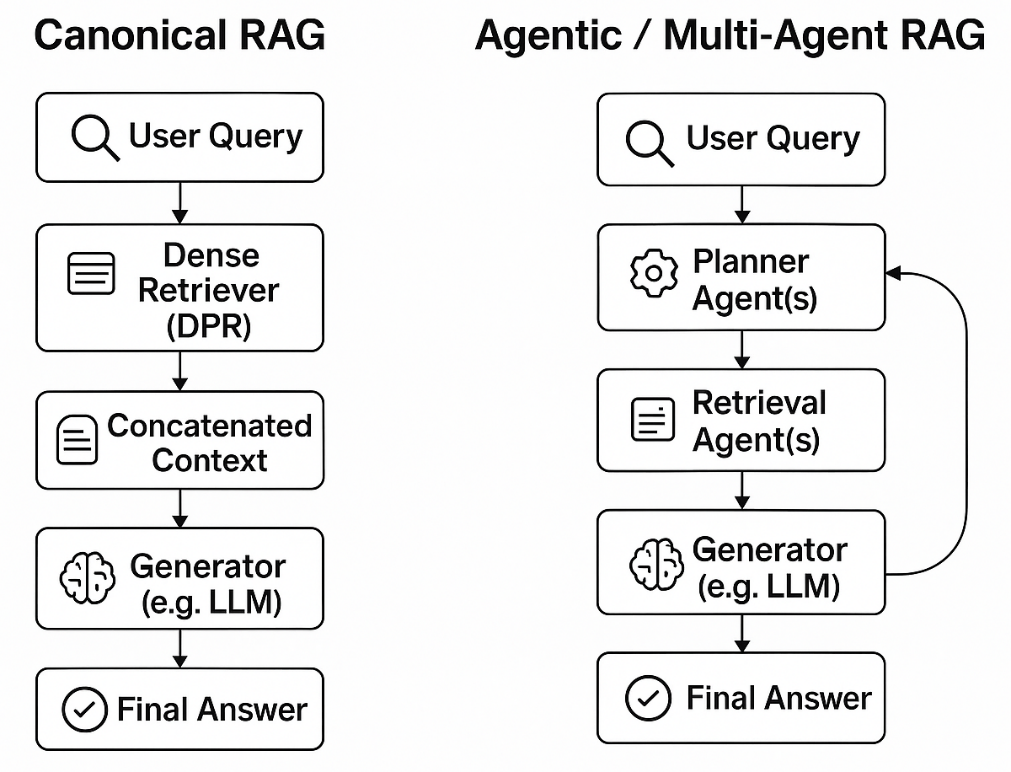

The figure shows two diagrams contrasting approaches to Retrieval-Augmented Generation (RAG): Canonical RAG and Agentic/Multi-Agent RAG. Both start with a user query and end with a final answer; they differ in the intermediate steps and in overall complexity.

### Components

**Left Diagram: Canonical RAG**

* **Title:** Canonical RAG (positioned at the top)

* **Nodes:**

    * User Query (top) - Rounded rectangle containing the text "User Query" and a magnifying glass icon.

    * Dense Retriever (DPR) - Rounded rectangle containing the text "Dense Retriever (DPR)" and a document icon.

    * Concatenated Context - Rounded rectangle containing the text "Concatenated Context" and a document icon.

    * Generator (e.g. LLM) - Rounded rectangle containing the text "Generator (e.g. LLM)" and a brain icon.

    * Final Answer (bottom) - Rounded rectangle containing the text "Final Answer" and a checkmark icon.

* **Flow:** The nodes are connected by downward-pointing arrows, indicating the flow of information from the User Query to the Final Answer.

**Right Diagram: Agentic / Multi-Agent RAG**

* **Title:** Agentic / Multi-Agent RAG (positioned at the top)

* **Nodes:**

    * User Query (top) - Rounded rectangle containing the text "User Query" and a magnifying glass icon.

    * Planner Agent(s) - Rounded rectangle containing the text "Planner Agent(s)" and a gear icon.

    * Retrieval Agent(s) - Rounded rectangle containing the text "Retrieval Agent(s)" and a document icon.

    * Generator (e.g. LLM) - Rounded rectangle containing the text "Generator (e.g. LLM)" and a brain icon.

    * Final Answer (bottom) - Rounded rectangle containing the text "Final Answer" and a checkmark icon.

* **Flow:** The nodes are connected by downward-pointing arrows, indicating the flow of information. There is also a curved arrow looping back from the "Generator (e.g. LLM)" to the "Planner Agent(s)", indicating a feedback loop.

### Detailed Analysis

**Canonical RAG Flow:**

1. **User Query:** The process begins with a user submitting a query.

2. **Dense Retriever (DPR):** A dense retriever, labeled DPR (Dense Passage Retrieval) in the diagram, takes the query and fetches the most relevant documents or passages.

3. **Concatenated Context:** The retrieved passages are concatenated into a single context.

4. **Generator (e.g. LLM):** The concatenated context is fed into a generator, such as a Large Language Model (LLM), to generate an answer.

5. **Final Answer:** The generated answer is presented as the final output.
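
The linear flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not an implementation of DPR: the function names (`embed`, `retrieve`, `build_context`, `generate`, `canonical_rag`) are hypothetical, a bag-of-words cosine similarity stands in for DPR's learned encoders, and `generate` is a stub where a real system would call an LLM.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a learned dense encoder: a bag-of-words count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: list[str], k: int = 2) -> list[str]:
    """Step 2: rank passages by similarity to the query and keep the top k."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda p: similarity(q, embed(p)), reverse=True)
    return ranked[:k]


def build_context(passages: list[str]) -> str:
    """Step 3: concatenate the retrieved passages into one context string."""
    return "\n\n".join(passages)


def generate(query: str, context: str) -> str:
    """Step 4: hypothetical generator stub; a real system would call an LLM."""
    return f"[stub answer to {query!r} using {len(context)} context characters]"


def canonical_rag(query: str, corpus: list[str], k: int = 2) -> str:
    """Steps 1 to 5 as a single pass with no feedback."""
    passages = retrieve(query, corpus, k)
    context = build_context(passages)
    return generate(query, context)
```

Each stage runs exactly once and passes its output to the next, which is what the downward-only arrows in the left diagram depict.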

**Agentic / Multi-Agent RAG Flow:**

1. **User Query:** The process begins with a user submitting a query.

2. **Planner Agent(s):** The query is processed by one or more planner agents, which determine the best strategy for answering the query.

3. **Retrieval Agent(s):** One or more retrieval agents fetch relevant documents or passages based on the planner's strategy.

4. **Generator (e.g. LLM):** The retrieved context is fed into a generator, such as a Large Language Model (LLM), to generate an answer.

5. **Feedback Loop:** The generator's output is fed back to the planner agent(s), which can revise the strategy and repeat steps 2 to 4 before an answer is finalized.

6. **Final Answer:** The generated answer is presented as the final output.
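
The loop described in these steps can be sketched as follows. This is a rough illustration only: `RagState`, `plan`, `retrieval_agent`, `generate`, and `agentic_rag` are hypothetical names, the planner is a trivial stub that issues one sub-query per query term, and `max_rounds` is an assumed safeguard that the diagram does not show. A real planner would typically be an LLM that inspects the previous draft.

```python
import re
from dataclasses import dataclass, field


@dataclass
class RagState:
    """Shared state passed around the plan -> retrieve -> generate loop."""
    query: str
    tried: list = field(default_factory=list)     # sub-queries already issued
    evidence: list = field(default_factory=list)  # passages gathered so far
    draft: str = ""                               # latest generator output


def plan(state: RagState):
    """Hypothetical planner: pick the next query term not yet searched for.

    Returns None when nothing is left to try. A real planner would also
    inspect state.draft; this stub only tracks which terms were tried.
    """
    for term in re.findall(r"\w+", state.query.lower()):
        if term not in state.tried:
            return term
    return None


def retrieval_agent(sub_query: str, corpus: list) -> list:
    """Hypothetical retrieval agent: simple substring match over the corpus."""
    return [p for p in corpus if sub_query in p.lower()]


def generate(state: RagState) -> str:
    """Hypothetical generator stub; a real system would call an LLM."""
    return f"Draft answer to {state.query!r} from {len(state.evidence)} passage(s)."


def agentic_rag(query: str, corpus: list, max_rounds: int = 5) -> RagState:
    state = RagState(query=query)
    for _ in range(max_rounds):
        sub_query = plan(state)           # planner sees the state so far
        if sub_query is None:             # planner has nothing left to try
            break
        state.tried.append(sub_query)
        for passage in retrieval_agent(sub_query, corpus):
            if passage not in state.evidence:
                state.evidence.append(passage)
        state.draft = generate(state)     # output feeds the next planning round
    return state
```

The final answer is whatever `state.draft` holds when the planner stops or the round limit is reached. Bounding the loop is a design choice made for this sketch so that an unsatisfied planner cannot iterate indefinitely.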

### Key Observations

* Canonical RAG is a linear, single-pass pipeline with no feedback.

* Agentic/Multi-Agent RAG adds a planning stage and a feedback loop, making it more complex and potentially more adaptable.

* Both approaches use a generator (e.g., LLM) to produce the final answer.

* Agentic RAG assigns planning and retrieval to separate "agents", which suggests a modular architecture; the "Agent(s)" labels leave room for several agents per stage, possibly running in parallel, though the diagram does not show this explicitly.

### Interpretation

Canonical RAG is a straightforward retrieve-then-generate process, while Agentic/Multi-Agent RAG adds a planning layer and a feedback loop from the generator back to the planner. That loop allows the retrieval strategy to be refined iteratively, which could help with challenging or nuanced queries, at the cost of added complexity. The "Agent(s)" labels suggest a system in which planning and retrieval can each be handled by several specialized agents, potentially in parallel, although the diagram does not specify how they are coordinated.