## Diagram: Comparison of Canonical RAG and Agentic/Multi-Agent RAG Systems

### Overview

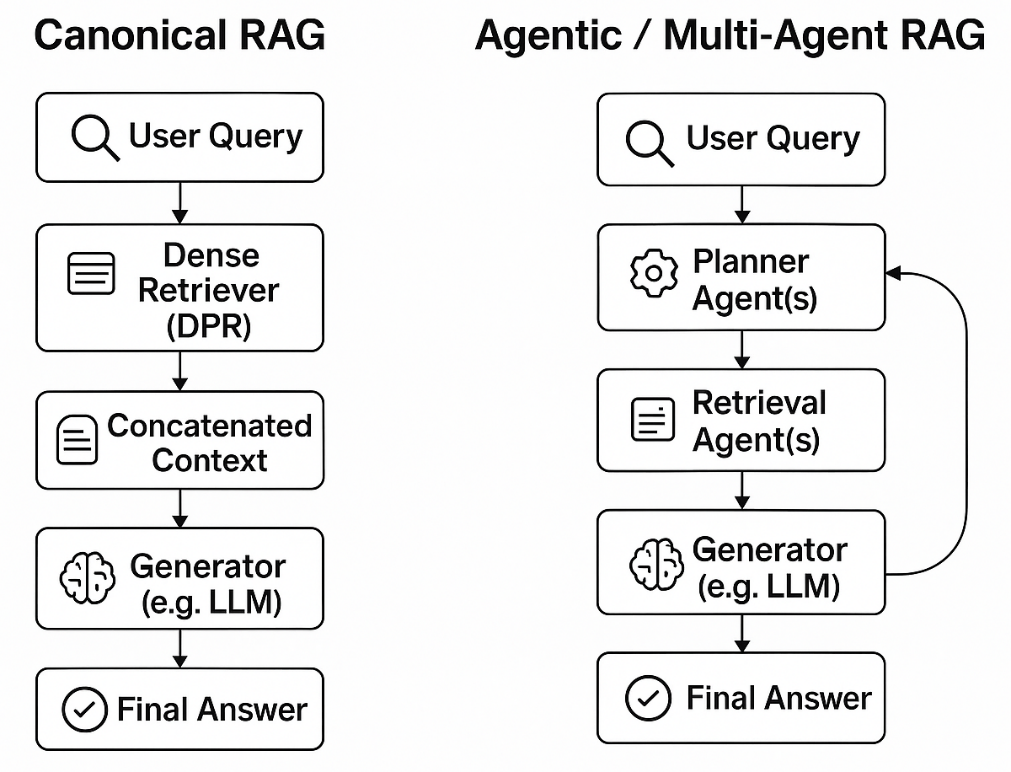

The diagram compares two architectures for Retrieval-Augmented Generation (RAG) systems. On the left, **Canonical RAG** follows a linear pipeline; on the right, **Agentic/Multi-Agent RAG** uses a modular, iterative design built from specialized agents. Both systems begin with a **User Query** and produce a **Final Answer**, but the steps in between differ significantly.

---

### Components

#### Canonical RAG (Left Side)

1. **User Query** (Input)

2. **Dense Retriever (DPR)**

   - Icon: Document with lines (text representation)

   - Function: Converts queries into dense vectors for semantic search.

3. **Concatenated Context**

   - Icon: Document with lines

   - Function: Combines retrieved documents into a single context.

4. **Generator (e.g., LLM)**

   - Icon: Brain

   - Function: Processes context to generate a response.

5. **Final Answer** (Output)
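The linear sequence above can be sketched in a few lines of Python. This is a minimal illustration, not the system shown in the diagram: `DOCS`, `embed`, `retrieve`, `build_context`, `generate`, and `canonical_rag` are hypothetical names, the bag-of-words `embed` is only a stand-in for a learned dense encoder such as DPR, and `generate` returns the assembled prompt instead of calling an LLM.

```python
import math
import re
from collections import Counter

# Toy corpus; the contents are illustrative only.
DOCS = [
    "RAG combines a retriever with a generator.",
    "Dense retrievers embed queries and documents as vectors.",
    "Bananas are rich in potassium.",
]


def embed(text: str) -> Counter:
    """Stand-in for a dense encoder: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Step 2: rank documents by similarity to the query and keep the top k."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_context(docs: list[str]) -> str:
    """Step 3: concatenate the retrieved documents into one context string."""
    return "\n".join(docs)


def generate(query: str, context: str) -> str:
    """Step 4: placeholder for an LLM call; returns the prompt it would receive."""
    return f"Context:\n{context}\n\nQuestion: {query}"


def canonical_rag(query: str) -> str:
    """Query -> Retrieval -> Context -> Generation -> Answer, in a single pass."""
    return generate(query, build_context(retrieve(query, DOCS)))


if __name__ == "__main__":
    print(canonical_rag("How do dense retrievers embed queries?"))
```

Each stage runs exactly once and feeds the next, which is what makes the pipeline easy to reason about but unable to revisit a poor retrieval.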

#### Agentic/Multi-Agent RAG (Right Side)

1. **User Query** (Input)

2. **Planner Agent(s)**

   - Icon: Gear

   - Function: Decomposes the query into sub-tasks or retrieval strategies.

3. **Retrieval Agent(s)**

   - Icon: Document with lines

   - Function: Specialized agents for targeted document retrieval.

4. **Generator (e.g., LLM)**

   - Icon: Brain

   - Function: Synthesizes retrieved information into a response.

5. **Final Answer** (Output)

   - Feedback loop: The Generator’s output may inform the Planner for iterative refinement.
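The planner, retrieval, and feedback steps listed above can be sketched as a small loop. Everything here is an assumption made for illustration: the `KNOWLEDGE` and `ALIASES` tables, the function names, the rule of splitting the query on " and ", and the alias-based rewrite used for refinement. A real system would typically back each of these roles with an LLM or a search index.

```python
# Toy knowledge base keyed by topic; purely illustrative.
KNOWLEDGE = {
    "dpr": "DPR encodes queries and passages as dense vectors.",
    "planner": "A planner splits a query into sub-tasks.",
}

# Hypothetical rewrite table the planner uses when a sub-query finds nothing.
ALIASES = {"dense passage retrieval": "dpr"}


def plan(query: str, feedback: list[str]) -> list[str]:
    """Planner: decompose on the first pass, rewrite failed sub-queries afterwards."""
    if not feedback:
        return [part.strip() for part in query.lower().split(" and ")]
    refined = []
    for sub_query in feedback:
        for phrase, alias in ALIASES.items():
            sub_query = sub_query.replace(phrase, alias)
        refined.append(sub_query)
    return refined


def retrieve(sub_query: str) -> list[str]:
    """Retrieval agent: return entries whose topic key appears in the sub-query."""
    return [text for key, text in KNOWLEDGE.items() if key in sub_query]


def agentic_rag(query: str, max_rounds: int = 3) -> tuple[str, int]:
    """Run plan -> retrieve -> generate, feeding unanswered parts back to the planner."""
    evidence: list[str] = []
    feedback: list[str] = []
    answer = ""
    for round_no in range(1, max_rounds + 1):
        unanswered = []
        for sub_query in plan(query, feedback):
            hits = retrieve(sub_query)
            if hits:
                evidence.extend(h for h in hits if h not in evidence)
            else:
                unanswered.append(sub_query)
        answer = " ".join(evidence)  # generator stub: joins the evidence
        if not unanswered:
            return answer, round_no
        feedback = unanswered  # feedback loop back to the planner
    return answer, max_rounds


if __name__ == "__main__":
    print(agentic_rag("What is dense passage retrieval and what does the planner do"))
```

In this sketch the first round answers only the planner sub-query; the second round succeeds after the planner rewrites the failed sub-query, so the loop ends after two rounds. The `max_rounds` cap bounds the extra work that the feedback loop can add.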

---

### Detailed Analysis

- **Canonical RAG** follows a strict sequence: Query → Retrieval → Context → Generation → Answer.

- **Agentic RAG** introduces modularity:

  - The **Planner Agent** orchestrates the process, potentially splitting complex queries into subtasks.

  - **Retrieval Agent(s)** handle domain-specific or multi-step retrieval.

  - The **Generator** integrates outputs from retrieval agents, with optional feedback to the Planner for refinement.

- Both systems end in a **Generator**, typically an LLM (Large Language Model), but the Agentic approach adds planning and specialized retrieval stages in front of it.

---

### Key Observations

1. **Modularity vs. Simplicity**:

   - Canonical RAG is simpler but less flexible.

   - Agentic RAG’s modular design allows for dynamic task decomposition and iterative refinement.

2. **Feedback Loop**:

   - The Agentic system includes a feedback mechanism (Generator → Planner), enabling adaptive query handling.

3. **Specialization**:

   - Retrieval agents in the Agentic system may focus on specific domains or retrieval strategies (e.g., keyword vs. semantic search).
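As a rough illustration of the specialization point, the sketch below routes a query to one of two retrieval agents. The routing rule (queries containing a digit go to keyword search), the `DOCS` corpus, and all function names are assumptions, and the Jaccard token overlap in `semantic_agent` is only a stand-in for embedding-based similarity.

```python
import re

# Toy corpus; the contents are illustrative only.
DOCS = [
    "Error E1042 means the index is corrupted.",
    "Rebuilding the index fixes most corruption problems.",
    "Vector search finds passages with similar meaning.",
]


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens of a string."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def keyword_agent(query: str) -> list[str]:
    """Exact match on identifier-like tokens (those containing a digit)."""
    ids = {t for t in tokens(query) if any(c.isdigit() for c in t)}
    return [doc for doc in DOCS if ids & tokens(doc)]


def semantic_agent(query: str, k: int = 1) -> list[str]:
    """Stand-in for semantic search: rank documents by Jaccard token overlap."""
    q = tokens(query)

    def score(doc: str) -> float:
        t = tokens(doc)
        return len(q & t) / len(q | t)

    return sorted(DOCS, key=score, reverse=True)[:k]


def route(query: str) -> tuple[str, list[str]]:
    """Hypothetical router: identifiers go to keyword search, the rest to semantic."""
    if any(c.isdigit() for c in query):
        return "keyword", keyword_agent(query)
    return "semantic", semantic_agent(query)


if __name__ == "__main__":
    print(route("What does E1042 mean?"))
    print(route("how to find passages with similar meaning"))
```

In an agentic system the routing decision would more plausibly come from the Planner than from a fixed rule; the fixed rule is used here only to keep the example deterministic.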

---

### Interpretation

The diagram highlights a trade-off between simplicity and sophistication:

- **Canonical RAG** is ideal for straightforward, single-step retrieval tasks.

- **Agentic RAG** excels in complex scenarios requiring multi-step reasoning, domain-specific retrieval, or iterative refinement.

The feedback loop in the Agentic system suggests potential for self-correction, which could improve answer accuracy at the cost of additional computation, since each refinement pass repeats planning, retrieval, and generation. This architecture is consistent with a broader trend in AI systems toward modular, adaptable designs over rigid pipelines.