## Diagram: PathHD Hyperdimensional Encoding for Question Answering

### Overview

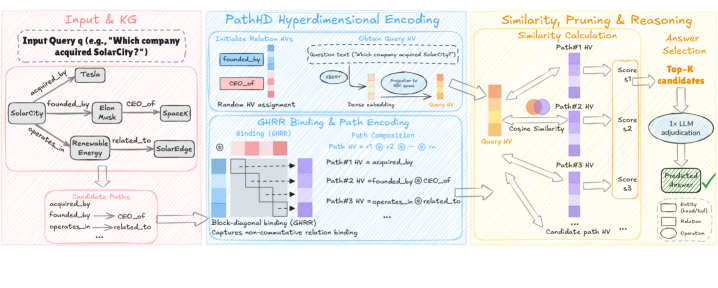

The image presents a diagram illustrating the PathHD Hyperdimensional Encoding approach for question answering. It outlines the process from inputting a query and knowledge graph (KG) to encoding paths, calculating similarity, pruning, reasoning, and ultimately selecting the answer. The diagram is divided into three main sections: Input & KG, PathHD Hyperdimensional Encoding, and Similarity, Pruning & Reasoning.

### Components/Axes

**1. Input & KG (Left Section - Pink Border)**

* **Input Query:** "Which company acquired SolarCity?"

* **Knowledge Graph (KG):** A network of entities and relations:

    * Entities: SolarCity, Tesla, Elon Musk, SpaceX, Renewable Energy, SolarEdge

    * Relations: `acquired_by`, `founded_by`, `CEO_of`, `operates_in`, `related_to`

    * Edges connect entities based on these relations (e.g., SolarCity `acquired_by` Tesla).

* **Candidate Paths:** A list of possible paths extracted from the KG:

    * `acquired_by`

    * `founded_by -> CEO_of`

    * `operates_in -> related_to`

    * ... (indicates that more paths exist)
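
The candidate-path step above can be sketched as a bounded walk over the KG. This is a minimal illustration, not the method shown in the figure: only the `SolarCity acquired_by Tesla` edge is stated in the diagram description, so the remaining triples, the `candidate_paths` helper, and the `max_hops` limit are assumptions chosen to reproduce the listed paths.

```python
from collections import defaultdict

# Toy KG as (head, relation, tail) triples. Only the first triple is stated
# in the figure description; the others are hypothetical wiring.
TRIPLES = [
    ("SolarCity", "acquired_by", "Tesla"),
    ("SolarCity", "founded_by", "Elon Musk"),           # assumed
    ("Elon Musk", "CEO_of", "Tesla"),                   # assumed
    ("SolarCity", "operates_in", "Renewable Energy"),   # assumed
    ("Renewable Energy", "related_to", "SolarEdge"),    # assumed
]


def candidate_paths(triples, start, max_hops=2):
    """Enumerate relation paths of up to max_hops edges leaving `start`."""
    out_edges = defaultdict(list)
    for head, rel, tail in triples:
        out_edges[head].append((rel, tail))

    paths = []
    # Each frontier entry: (current entity, relations so far, visited entities).
    frontier = [(start, (), (start,))]
    for _ in range(max_hops):
        next_frontier = []
        for entity, rels, visited in frontier:
            for rel, tail in out_edges[entity]:
                if tail in visited:
                    continue  # do not walk in cycles
                new_rels = rels + (rel,)
                paths.append((new_rels, tail))
                next_frontier.append((tail, new_rels, visited + (tail,)))
        frontier = next_frontier
    return paths


for rels, tail in candidate_paths(TRIPLES, "SolarCity"):
    print(" -> ".join(rels), "=>", tail)
```

With these assumed triples the walk also yields the one-hop prefixes `founded_by` and `operates_in`; whether such prefixes count as candidates is not specified in the diagram.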

**2. PathHD Hyperdimensional Encoding (Middle Section - Blue Border)**

* **Initialize Relation HVs:**

    * `founded_by`: A vertical bar that is blue at the top and fades to white at the bottom.

    * `CEO_of`: A vertical bar that is red at the top and fades to white at the bottom.

* **Random HV assignment**

* **Obtain Query HV:**

    * Question text: "Which company acquired SolarCity?"

    * Dense embedding: The question passes through "BERT" and then "Projection to HDC space" to produce the "Query HV".

* **GHRR Binding & Path Encoding:**

    * **Binding (GHRR):** A grid that visualizes the binding operation. The top row is red, the left column is blue, and the diagonal elements are marked with a "-" symbol.

    * **Path Composition:**

        * Path HV = r1 @ r2 @ ... @ rn, where each ri is the HV of the i-th relation on the path and @ is the binding operator

        * Path#1 HV = acquired_by

        * Path#2 HV = founded_by @ CEO_of

        * Path#3 HV = operates_in @ related_to

        * ... (indicates that more paths exist)

    * **Block-diagonal binding (GHRR):** Captures non-commutative relation binding, so the order of relations along a path affects the resulting HV.
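
The binding and path-composition steps above can be illustrated with a small pure-Python sketch. It assumes a GHRR-style representation in which a hypervector is a list of small unitary matrix blocks and binding is the block-wise matrix product. The block size `M = 2`, the count of 512 blocks, the `random_unitary_block` sampler, and the trace-based `similarity` are illustrative assumptions, not details taken from the figure.

```python
import random

M = 2  # assumed block size: each block is an M x M unitary matrix


def random_unitary_block(rng):
    """Sample a random 2x2 special-unitary matrix [[a, -conj(b)], [b, conj(a)]]."""
    x = [rng.gauss(0.0, 1.0) for _ in range(4)]
    norm = sum(v * v for v in x) ** 0.5
    a = complex(x[0], x[1]) / norm
    b = complex(x[2], x[3]) / norm
    return ((a, -b.conjugate()), (b, a.conjugate()))


def random_hv(rng, num_blocks):
    """A hypervector is a list of independently sampled unitary blocks."""
    return [random_unitary_block(rng) for _ in range(num_blocks)]


def matmul(p, q):
    """Plain M x M matrix product."""
    return tuple(
        tuple(sum(p[i][k] * q[k][j] for k in range(M)) for j in range(M))
        for i in range(M)
    )


def bind(h1, h2):
    """Block-wise matrix product; order matters because matrices do not commute."""
    return [matmul(p, q) for p, q in zip(h1, h2)]


def encode_path(relation_hvs, path):
    """Path HV = r1 @ r2 @ ... @ rn, bound left to right."""
    hv = relation_hvs[path[0]]
    for rel in path[1:]:
        hv = bind(hv, relation_hvs[rel])
    return hv


def similarity(h1, h2):
    """Mean over blocks of Re tr(P Q^dagger) / M; equals 1 when h1 == h2."""
    total = 0.0
    for p, q in zip(h1, h2):
        total += sum(
            (p[i][j] * q[i][j].conjugate()).real
            for i in range(M) for j in range(M)
        )
    return total / (M * len(h1))


rng = random.Random(0)
relations = ["acquired_by", "founded_by", "CEO_of", "operates_in", "related_to"]
relation_hvs = {r: random_hv(rng, num_blocks=512) for r in relations}

forward = encode_path(relation_hvs, ["founded_by", "CEO_of"])
reverse = encode_path(relation_hvs, ["CEO_of", "founded_by"])
print(similarity(forward, forward))  # 1.0 up to floating-point error
print(similarity(forward, reverse))  # clearly below 1: the two orders differ
```

A commutative binding such as element-wise multiplication would give `forward` and `reverse` the same HV, which is why the block structure matters for ordered paths.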

**3. Similarity, Pruning & Reasoning (Right Section - Yellow Border)**

* **Similarity Calculation:**

    * Cosine Similarity: The Query HV is compared to each Candidate Path HV using cosine similarity.

    * Path#1 HV, Path#2 HV, Path#3 HV, ... (Candidate path HVs)

* **Answer Selection:**

    * Top-K candidates: The paths are ranked based on their similarity scores (s1, s2, s3).

    * 1x LLM adjudication: The top-K candidates are processed by a Large Language Model (LLM) for final adjudication.

    * Predicted answer: The final predicted answer is selected.

* **Legend:**

    * Entity (head/tail): Rectangle

    * Relation: Oval

    * Operation: Circle
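
The similarity and top-K steps in this section can be sketched as follows. The example assumes the Query HV and Path HVs are available as flat real-valued vectors; the four-dimensional vectors, the `top_k_paths` helper, and `k = 2` are hypothetical stand-ins, since the diagram gives no dimensions or values.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length real vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def top_k_paths(query_hv, path_hvs, k):
    """Score every candidate path against the query and keep the k best."""
    scored = [(cosine(query_hv, hv), name) for name, hv in path_hvs.items()]
    scored.sort(reverse=True)  # highest similarity first
    return scored[:k]


# Hypothetical toy vectors; real HVs would have thousands of dimensions.
query_hv = [1.0, 0.0, 1.0, 0.0]
path_hvs = {
    "acquired_by": [1.0, 0.0, 1.0, 0.0],
    "founded_by -> CEO_of": [1.0, 1.0, 0.0, 0.0],
    "operates_in -> related_to": [0.0, 1.0, 0.0, -1.0],
}

for score, name in top_k_paths(query_hv, path_hvs, k=2):
    print(f"{score:.2f}  {name}")
```

Here the pruning is the truncation to `k` entries: lower-ranked paths are dropped before any LLM is involved.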

### Detailed Analysis

* **Input & KG:** The initial step involves defining the question and representing the relevant knowledge as a graph. The example question is "Which company acquired SolarCity?". The KG contains entities like "SolarCity" and "Tesla" and relations like "acquired_by".

* **PathHD Hyperdimensional Encoding:** This section focuses on encoding the query and the candidate paths into high-dimensional vectors (HVs). Relation HVs are initialized, and the query is converted into a Query HV using dense embeddings. The GHRR binding process is used to encode paths, capturing the order of relations.

* **Similarity, Pruning & Reasoning:** The similarity between the Query HV and each Candidate Path HV is calculated using cosine similarity. The paths are then ranked based on their similarity scores. The top-K candidates are passed to an LLM for final adjudication, and the predicted answer is selected.
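
The final adjudication step can be sketched as one prompt built from the pruned shortlist and one model call. Everything named here is hypothetical: the `adjudicate` helper, the prompt wording, the example scores, and `stub_llm`, which stands in for a real model so the example runs without any external service.

```python
def adjudicate(question, ranked_candidates, llm):
    """Build one prompt from the shortlist and make a single LLM call."""
    lines = [f"Question: {question}", "Candidate reasoning paths:"]
    for i, (score, path, answer) in enumerate(ranked_candidates, start=1):
        lines.append(f"{i}. {path} => {answer} (similarity {score:.2f})")
    lines.append("Reply with the single best answer entity.")
    return llm("\n".join(lines)).strip()


def stub_llm(prompt):
    """Stand-in for a real model: returns the entity of candidate 1."""
    first = next(line for line in prompt.splitlines() if line.startswith("1. "))
    return first.split("=> ")[1].split(" (")[0]


# Hypothetical shortlist: (similarity score, relation path, tail entity).
shortlist = [
    (0.93, "acquired_by", "Tesla"),
    (0.50, "founded_by -> CEO_of", "Tesla"),
]

print(adjudicate("Which company acquired SolarCity?", shortlist, stub_llm))
```

Passing the model in as a callable keeps the sketch independent of any particular LLM client; a real deployment would replace `stub_llm` with its own call.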

### Key Observations

* The diagram illustrates a pipeline for question answering using hyperdimensional computing.

* The GHRR binding is used to capture the order of relations in the paths.

* Cosine similarity is used to measure the similarity between the query and the candidate paths.

* An LLM is used for final adjudication of the top-K candidates.

### Interpretation

The diagram presents a question-answering method that uses hyperdimensional computing to encode knowledge graph paths and the query as high-dimensional vectors. GHRR binding is non-commutative, so a path such as `founded_by -> CEO_of` receives a different HV from the same relations in reverse order, which lets the encoding preserve the order of relations needed for accurate reasoning. Cosine similarity against the Query HV ranks the candidate paths and prunes them to a top-K shortlist, and a single LLM adjudication step then selects the predicted answer from that shortlist. By combining knowledge graphs, hyperdimensional computing, and large language models in this way, the approach aims to improve the accuracy and efficiency of question answering systems.