## Flowchart: Knowledge Graph-Based Question Answering System

### Overview

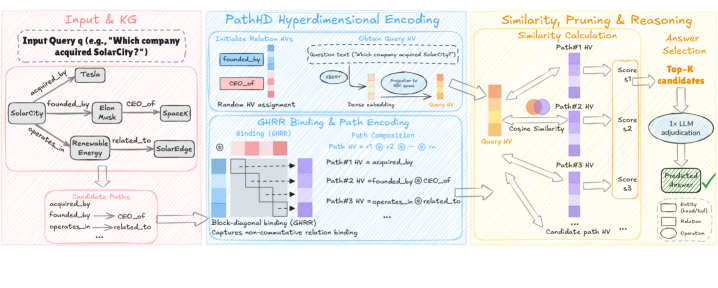

The image depicts a multi-stage pipeline for answering knowledge graph (KG) queries using hyperdimensional encoding, similarity calculations, and reasoning. The process begins with an input query (e.g., "Which company acquired SolarCity?") and progresses through KG traversal, path encoding, similarity scoring, and final answer selection via LLM adjudication.

### Components

1. **Input & KG Section (Left, Pink Background)**
   - **Input Query**: "Which company acquired SolarCity?"
   - **Knowledge Graph (KG) Entities**:
     - SolarCity
     - Tesla
     - Elon Musk
     - SpaceX
     - SolarEdge
     - Renewable Energy
   - **Relationships**:
     - `acquired_by`
     - `founded_by`
     - `CEO_of`
     - `operates_in`
     - `related_to`
   - **Candidate Paths**:
     - `acquired_by`
     - `founded_by → CEO_of`
     - `operates_in → related_to`

2. **PathHD Hyperdimensional Encoding (Center, Blue Background)**
   - **Relation Hypervectors (HVs)**:
     - Initialized for `founded_by` and `CEO_of`
     - Random HV assignment for query text
     - Dense embedding of query text
   - **GHRR Binding & Path Encoding**:
     - Block-diagonal binding (GHRR) for non-commutative relations
     - Path composition examples:
       - Path #1 HV: `acquired_by`
       - Path #2 HV: `founded_by → CEO_of`
       - Path #3 HV: `operates_in → related_to`

3. **Similarity, Pruning & Reasoning (Right, Orange Background)**
   - **Similarity Calculation**:
     - Cosine similarity between the query HV and each candidate path HV
     - Scores (s₁, s₂, s₃) for the top candidate paths
   - **Answer Selection**:
     - Top-K candidates (e.g., top 3 paths)
     - One LLM adjudication call (1×) for final answer validation
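
The candidate paths listed in the first panel can be produced by a bounded traversal from the query entity. The following is a minimal sketch of that step, not the system's actual implementation. The edge endpoints in `TRIPLES` are assumptions made for illustration (the figure description names entities and relations but does not spell out every edge), and `build_adjacency`, `enumerate_paths`, and `max_hops` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical triples: entity and relation names come from the figure,
# but the exact edge endpoints are assumptions for this sketch.
TRIPLES = [
    ("SolarCity", "acquired_by", "Tesla"),
    ("SolarCity", "founded_by", "Elon Musk"),
    ("Elon Musk", "CEO_of", "Tesla"),
    ("SolarCity", "operates_in", "Renewable Energy"),
    ("Renewable Energy", "related_to", "SolarEdge"),
]


def build_adjacency(triples):
    """Map each head entity to its outgoing (relation, tail) edges."""
    adj = defaultdict(list)
    for head, rel, tail in triples:
        adj[head].append((rel, tail))
    return adj


def enumerate_paths(adj, start, max_hops=2):
    """Return (relation_path, end_entity) pairs of length 1..max_hops."""
    results = []
    frontier = [((), start, {start})]
    for _ in range(max_hops):
        next_frontier = []
        for rels, node, seen in frontier:
            for rel, tail in adj.get(node, []):
                if tail in seen:  # skip cycles within a single path
                    continue
                path = rels + (rel,)
                results.append((path, tail))
                next_frontier.append((path, tail, seen | {tail}))
        frontier = next_frontier
    return results


candidate_paths = enumerate_paths(build_adjacency(TRIPLES), "SolarCity")
for path, end in candidate_paths:
    print(" → ".join(path), "ends at", end)
```

This sketch also returns the one-hop prefixes `founded_by` and `operates_in`, whereas the figure lists only three candidate paths, so a real system presumably applies further filtering before encoding.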

### Detailed Analysis

- **Input & KG Section**:
  - The KG structure is a directed graph with entities and relationships. For example, SolarCity is linked to Tesla via `acquired_by`, and Elon Musk is connected to SolarCity via `founded_by` and `CEO_of`.
  - Candidate paths are ordered sequences of relations followed hop by hop from the query entity (e.g., `founded_by → CEO_of` follows `founded_by` first and `CEO_of` second).

- **PathHD Hyperdimensional Encoding**:
  - Hypervectors (HVs) encode relationships and paths in a high-dimensional space. Because block-diagonal binding (GHRR) is non-commutative, relation order is preserved: `founded_by → CEO_of` and `CEO_of → founded_by` map to different HVs.
  - Path composition binds the HVs of the individual relations in path order (e.g., Path #2 HV = `founded_by` ⊗ `CEO_of`).

- **Similarity & Reasoning**:
  - Cosine similarity scores (s₁, s₂, s₃) rank candidate paths. Higher scores indicate stronger alignment with the query.
  - Only the Top-K candidates are passed to an LLM, which adjudicates among them to validate the final answer (e.g., confirming that Tesla acquired SolarCity).
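
The order sensitivity of path encoding can be illustrated with a small numerical sketch. It assumes each hypervector is a stack of small unitary matrices and that binding multiplies them slot by slot, which is one way to realise a block-diagonal, non-commutative binding. The figure does not give these details, so `random_hv`, `bind`, `encode_path`, `similarity`, and the sizes `dim` and `block` are all hypothetical.

```python
import numpy as np


def random_hv(rng, dim=256, block=4):
    """Sample `dim` independent block x block unitary matrices (one per slot)."""
    shape = (dim, block, block)
    z = rng.normal(size=shape) + 1j * rng.normal(size=shape)
    # The Q factor of a QR decomposition of a square matrix is unitary.
    return np.stack([np.linalg.qr(m)[0] for m in z])


def bind(a, b):
    """Slot-wise matrix product, i.e. a product of block-diagonal matrices."""
    return a @ b


def encode_path(relations, codebook):
    """Bind relation hypervectors left to right, in path order."""
    hv = codebook[relations[0]]
    for rel in relations[1:]:
        hv = bind(hv, codebook[rel])
    return hv


def similarity(a, b):
    """Real part of the summed trace of A_j B_j^H, normalised so sim(a, a) = 1."""
    dim, block, _ = a.shape
    return float(np.real(np.sum(a * np.conj(b))) / (dim * block))


rng = np.random.default_rng(0)
codebook = {rel: random_hv(rng) for rel in ("acquired_by", "founded_by", "CEO_of")}

p_fc = encode_path(("founded_by", "CEO_of"), codebook)
p_cf = encode_path(("CEO_of", "founded_by"), codebook)

print(similarity(p_fc, p_fc))  # 1.0 up to floating-point rounding
print(similarity(p_fc, p_cf))  # expected to be small for random codebooks
```

A commutative binding such as element-wise multiplication of plain vectors would give both orderings the same hypervector, which is why a matrix-valued binding is used in this sketch.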

### Key Observations

1. **Non-Commutative Relation Handling**: Block-diagonal binding in GHRR makes path order matter, so `founded_by → CEO_of` and `CEO_of → founded_by` receive distinct hypervectors.

2. **LLM Adjudication**: Final answer selection relies on LLM reasoning to resolve ambiguities (e.g., distinguishing between "founded" and "acquired").

3. **Path Composition**: Hypervector composition allows efficient encoding of multi-step relational paths.

### Interpretation

This system demonstrates a hybrid approach to KG-based QA:

- **KG Traversal**: Extracts candidate paths from structured relationships.

- **Hyperdimensional Encoding**: Captures semantic relationships in a continuous space, enabling efficient similarity calculations.

- **LLM Integration**: Adds a layer of contextual reasoning to handle edge cases (e.g., confirming Tesla’s acquisition of SolarCity even though related but irrelevant entities such as SpaceX appear in the graph).
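
The last stage (score, prune to Top-K, then one adjudication call) can be sketched as follows. This is an illustration under stated assumptions rather than the system's implementation. Hypervectors are simplified to real bipolar vectors, the query HV is a toy noisy copy of the `acquired_by` path HV so that the demo has a clear winner, and `placeholder_adjudicator` is a hypothetical stand-in for the LLM call that simply returns the top-ranked path.

```python
import numpy as np


def cosine(u, v):
    """Cosine similarity between two real vectors."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def top_k_paths(query_hv, path_hvs, k=3):
    """Score every candidate path and keep the k highest-scoring ones."""
    scored = [(name, cosine(query_hv, hv)) for name, hv in path_hvs.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


def answer(query, query_hv, path_hvs, adjudicate, k=3):
    """Prune to the Top-K paths, then make exactly one adjudication call."""
    shortlist = top_k_paths(query_hv, path_hvs, k)
    return adjudicate(query, shortlist)


def placeholder_adjudicator(query, shortlist):
    # Hypothetical stand-in for the LLM: returns the best-scoring path name.
    return shortlist[0][0]


rng = np.random.default_rng(0)
dim = 2048
path_names = ["acquired_by", "founded_by → CEO_of", "operates_in → related_to"]
# Assumption: random bipolar vectors stand in for the encoded path HVs.
path_hvs = {name: rng.choice([-1.0, 1.0], size=dim) for name in path_names}
# Assumption: a toy query HV built as a noisy copy of the `acquired_by` path HV.
query_hv = path_hvs["acquired_by"] + 0.5 * rng.normal(size=dim)

query = "Which company acquired SolarCity?"
shortlist = top_k_paths(query_hv, path_hvs, k=3)
result = answer(query, query_hv, path_hvs, placeholder_adjudicator)
print(shortlist)
print(result)
```

Passing the adjudicator as a callable keeps the expensive step to a single call per query, matching the "1×" adjudication shown in the figure, and lets a real LLM client replace the placeholder without changing the scoring code.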

The flowchart emphasizes the importance of encoding relational paths and leveraging similarity metrics to prioritize candidate answers, with LLM adjudication as a final safeguard. The absence of numerical data suggests the focus is on architectural design rather than empirical results.