TECHNICAL ASSET FINGERPRINT

09fa27bcde1b20925bdf5dc2



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


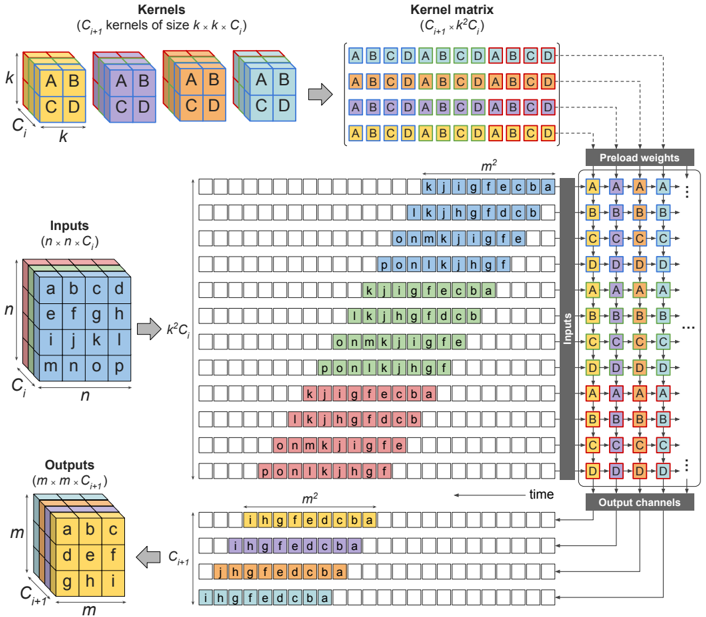

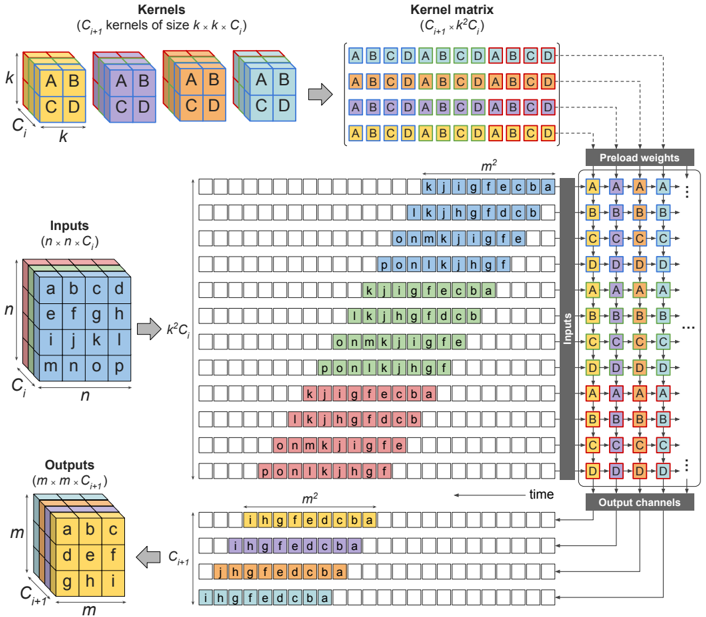

## Convolutional Neural Network Layer Diagram

### Overview

The image is a diagram illustrating the process of convolution in a convolutional neural network (CNN) layer. It shows how input data, kernels, and weights interact to produce output channels. The diagram visualizes the transformation of input features through convolution operations, highlighting the spatial relationships and data flow within the layer.

### Components/Axes

* **Kernels (C<sub>i+1</sub> kernels of size k x k x C<sub>i</sub>):** Four 3D cubes, each representing a kernel. Each cube is divided into 8 smaller cubes, with labels "A", "B", "C", and "D" on the faces. The dimensions are labeled as 'k', 'k', and 'C<sub>i</sub>'.

* **Kernel matrix (C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>):** A matrix in which each kernel is flattened into one row. Each row shows the sequence "ABCD" repeated.

* **Inputs (n x n x C<sub>i</sub>):** A 3D cube representing the input data. The dimensions are labeled as 'n', 'n', and 'C<sub>i</sub>'. The front face is a 4 x 4 grid labeled with letters 'a' through 'p'.

* **Outputs (m x m x C<sub>i+1</sub>):** A 3D cube representing the output data. The dimensions are labeled as 'm', 'm', and 'C<sub>i+1</sub>'. The front face is a 3 x 3 grid labeled with letters 'a' through 'i'.

* **Preload weights:** A column of blocks labeled "Inputs", each containing four sub-blocks labeled "A", "B", "C", and "D". These blocks are connected to the "Kernel matrix" via dashed lines.

* **Output channels:** A column of blocks representing the output channels, each containing four sub-blocks.

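* **m<sup>2</sup>:** Indicates the number of output positions (9 for the 3 x 3 output), which sets the length of the letter sequences in the intermediate matrices.

* **k<sup>2</sup>C<sub>i</sub>:** Indicates the number of input values in each unrolled receptive field, that is, how many values the input cube contributes to each output position. It matches the width of the kernel matrix.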

* **Time:** An arrow indicating the direction of processing.
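The flattening behind the kernel matrix can be sketched in a few lines of Python. This sketch assumes a channel-major ordering (all k x k positions of one input channel, then the next channel), which is one ordering consistent with the repeating "ABCD" pattern. The sizes C<sub>i</sub> = 4 and C<sub>i+1</sub> = 4, the helper names, and the symbolic labels such as `A1.2` (position, kernel index, input channel) are hypothetical.

```python
K = 2          # kernel side length k (2 x 2 faces labeled A B / C D)
C_IN = 4       # input channels C_i (assumed for illustration)
C_OUT = 4      # output channels C_{i+1}, one per kernel

POSITIONS = ["A", "B", "C", "D"]  # row-major positions inside one k x k slice

def make_kernel(out_ch):
    """Hypothetical symbolic kernel indexed as kernel[channel][row][col]."""
    return [[[f"{POSITIONS[r * K + c]}{out_ch}.{ch}" for c in range(K)]
             for r in range(K)] for ch in range(C_IN)]

def kernel_matrix(kernels):
    """Flatten each k x k x C_i kernel into one row of length k*k*C_i.

    Channel-major order: all k*k positions of channel 0, then channel 1, ...
    so the A B C D pattern repeats once per input channel.
    """
    return [[kern[ch][r][c] for ch in range(C_IN) for r in range(K) for c in range(K)]
            for kern in kernels]

kernels = [make_kernel(o) for o in range(C_OUT)]
W = kernel_matrix(kernels)
print(len(W), len(W[0]))             # 4 16
print("".join(x[0] for x in W[0]))   # ABCDABCDABCDABCD
```

Under this ordering, repeated letters in a row denote the same spatial position in different input channels, not copies of one value.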

### Detailed Analysis

1. **Kernels:**

* Four kernel cubes are shown, each with dimensions k x k x C<sub>i</sub>.

* Each kernel cube has faces labeled with "A", "B", "C", and "D".

* The colors of the cubes are blue, purple, orange, and green.

2. **Kernel Matrix:**

* The kernel matrix is formed by arranging the elements of the kernels.

* Each row of the matrix contains a repeating sequence of "ABCD".

* The matrix has dimensions C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>.

3. **Inputs:**

* The input data is represented as a 3D cube with dimensions n x n x C<sub>i</sub>.

* The front face of the cube is a 4 x 4 grid labeled with letters 'a' through 'p'.

4. **Outputs:**

* The output data is represented as a 3D cube with dimensions m x m x C<sub>i+1</sub>.

* The front face of the cube is a 3 x 3 grid labeled with letters 'a' through 'i'.

5. **Intermediate Matrices:**

* Several matrices are shown between the "Inputs" and "Outputs".

* Each row of these matrices contains a sequence of letters: "kjigfecba", "lkjhgfdcb", "onmkjigfe", and "ponlkjhgf".

* The label m<sup>2</sup> marks the number of output positions, which matches the nine letters in each sequence.

6. **Preload Weights:**

* The "Preload weights" column shows blocks labeled "A", "B", "C", and "D".

* These blocks are connected to the "Kernel matrix" via dashed lines, indicating that the kernel-matrix values are loaded as the weights.

7. **Output Channels:**

* The "Output channels" column shows blocks representing the final output.

* Each block contains four sub-blocks, similar to the "Preload weights".
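The letter sequences in the intermediate matrices can be reproduced with a short Python sketch. It assumes the standard im2col reading of the figure: a 4 x 4 input (`a` to `p`, row-major), a 2 x 2 kernel whose elements A, B, C, D sit top-left, top-right, bottom-left, bottom-right, stride 1, and each sequence written with the value for the first output position rightmost. The names `grid` and `stream_for` are hypothetical. Under these assumptions the streams for A and D come out as "kjigfecba" and "ponlkjhgf", and every stream has m<sup>2</sup> = 9 letters.

```python
import string

N, K = 4, 2        # 4 x 4 input (a..p) and 2 x 2 kernel (A..D), as in the figure
M = N - K + 1      # 3 x 3 output for a valid, stride-1 convolution

# Input front face in row-major order: a b c d / e f g h / i j k l / m n o p
grid = [list(string.ascii_lowercase[r * N:(r + 1) * N]) for r in range(N)]

def stream_for(kr, kc):
    """Input values that kernel element (kr, kc) multiplies, one per output
    position in row-major order, reversed so the value for the first output
    position ends up rightmost (assumed reading order of the figure)."""
    seq = [grid[i + kr][j + kc] for i in range(M) for j in range(M)]
    return "".join(reversed(seq))

for name, (kr, kc) in zip("ABCD", [(0, 0), (0, 1), (1, 0), (1, 1)]):
    print(name, stream_for(kr, kc))
# A kjigfecba
# B lkjhgfdcb
# C onmkjigfe
# D ponlkjhgf
```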

### Key Observations

* The diagram illustrates the flow of data from the input cube through the convolution operation to the output cube.

* The kernel matrix represents the weights applied during the convolution.

* The intermediate matrices show the input values rearranged so that each kernel element is paired with the input value it multiplies at every output position.

* The "Preload weights" and "Output channels" columns highlight the role of weights in the convolution process.

### Interpretation

The diagram provides a visual representation of the convolution operation in a CNN layer. It shows how kernels are applied to the input data to produce output features, and how the input data, kernels, and outputs relate spatially. The intermediate matrices make explicit how the input data is rearranged before it is combined with the weights. The diagram suggests that the convolution slides the kernels across the input data, multiplies each input region by the kernel weights, and sums the products to produce the output features. It also shows how multiple kernels produce multiple output channels, one channel per kernel.
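The two views of convolution in this interpretation, sliding the kernels across the input versus multiplying by a kernel matrix, can be checked against each other with a small Python sketch. `direct_conv` slides each kernel over the input (written as cross-correlation, without flipping the kernel, as most deep learning libraries do); `im2col_conv` builds the kernel matrix and a patch matrix and multiplies them. Both function names, the nested-list layout `[channel][row][column]`, and the random test sizes are assumptions for illustration.

```python
import random

def direct_conv(x, w):
    """Valid, stride-1 convolution by sliding each kernel over the input.
    x: [C_in][n][n], w: [C_out][C_in][k][k] -> y: [C_out][m][m]."""
    c_in, n, k = len(x), len(x[0]), len(w[0][0])
    m = n - k + 1
    return [[[sum(w[o][c][r][s] * x[c][i + r][j + s]
                  for c in range(c_in) for r in range(k) for s in range(k))
              for j in range(m)] for i in range(m)] for o in range(len(w))]

def im2col_conv(x, w):
    """Same result as one matrix product: kernel matrix (C_out x k^2*C_in)
    times patch matrix (k^2*C_in x m^2)."""
    c_in, n, k = len(x), len(x[0]), len(w[0][0])
    m = n - k + 1
    # Kernel matrix: one flattened kernel per row (channel-major order).
    W = [[kern[c][r][s] for c in range(c_in) for r in range(k) for s in range(k)]
         for kern in w]
    # Patch matrix: column p = i*m + j holds the receptive field of output (i, j).
    P = [[x[c][i + r][j + s] for i in range(m) for j in range(m)]
         for c in range(c_in) for r in range(k) for s in range(k)]
    Y = [[sum(W[o][t] * P[t][p] for t in range(len(P))) for p in range(m * m)]
         for o in range(len(W))]
    # Reshape each row of m^2 values back into an m x m output map.
    return [[row[i * m:(i + 1) * m] for i in range(m)] for row in Y]

random.seed(0)
C_IN, C_OUT, N, K = 2, 4, 4, 2   # illustrative sizes (assumed)
x = [[[random.randint(-3, 3) for _ in range(N)] for _ in range(N)] for _ in range(C_IN)]
w = [[[[random.randint(-2, 2) for _ in range(K)] for _ in range(K)]
      for _ in range(C_IN)] for _ in range(C_OUT)]
print(direct_conv(x, w) == im2col_conv(x, w))   # True
```

Both functions sum exactly the same products, so they agree for any integer inputs; the im2col form trades extra memory (each input value is copied into up to k<sup>2</sup> patch columns per channel) for a single dense matrix product.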


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Convolutional Neural Network Kernel Operation

### Overview

This diagram illustrates the operation of convolutional kernels on an input matrix, leading to an output matrix. It depicts the process of applying multiple kernels to an input, the formation of a kernel matrix, and the resulting output channels. The diagram also shows a "preload weights" section, likely representing the kernel weights being loaded before the input data is processed.

### Components/Axes

The diagram is segmented into several key areas:

* **Kernels:** A set of 3D cubes representing the convolutional kernels. Dimensions are labeled as (C<sub>i+1</sub> kernels of size k x k x C<sub>i</sub>).

* **Kernel Matrix:** A 2D matrix formed by arranging the kernels. Dimensions are labeled as (C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>).

* **Inputs:** A 3D tensor representing the input data. Dimensions are labeled as (n x n x C<sub>i</sub>).

* **Outputs:** A 3D tensor representing the output data. Dimensions are labeled as (m x m x C<sub>i+1</sub>).

* **Preload Weights:** A 4x4 matrix representing the kernel weights loaded before processing begins.

* **Time:** An axis indicating the progression of the convolution operation over time.

The input and output matrices are labeled with letters (a, b, c, d, etc.) to illustrate the data flow.

### Detailed Analysis or Content Details

**Kernels:**

* The diagram shows four kernels, each a cube with dimensions k x k x C<sub>i</sub>.

* Each kernel is labeled with letters A, B, C, and D on its faces.

**Kernel Matrix:**

* The kernel matrix is formed by flattening each kernel into one row.

* The matrix has dimensions C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>.

* The letters A, B, C, and D are repeated within the matrix, indicating the arrangement of kernel elements.

**Inputs:**

* The input matrix has dimensions n x n x C<sub>i</sub>.

* The input matrix is populated with letters a through p, arranged in a grid.

* The diagram shows how the input matrix is rearranged so that the kernel matrix can be applied to it. The letters k, j, i, g, f, e, c, b, a appear as one of the sequences derived from the input.

**Outputs:**

* The output matrix has dimensions m x m x C<sub>i+1</sub>.

* The output matrix is populated with letters i through a, arranged in a grid.

**Preload Weights:**

* The preload weights matrix is a 4x4 matrix.

* The matrix is populated with letters A, B, C, and D, repeated in a pattern.

* The matrix is labeled "Inputs" on the left and "Output channels" on the top.

**Data Flow:**

* The diagram shows the flow of data from the input matrix, through the kernel matrix, to the output matrix.

* The time axis indicates that the convolution operation is performed sequentially.

### Key Observations

* The diagram illustrates the core operation of a convolutional neural network layer.

* The kernel matrix is formed by arranging the kernels in a specific order.

* The convolution operation involves sliding each kernel over the input and performing element-wise multiplication and summation; the kernel matrix expresses this as a matrix multiplication.

* The preload weights matrix represents the kernel weights (learned during training) being loaded into place before the input data is processed.

* The letters used in the matrices are likely placeholders for numerical values.

### Interpretation

This diagram demonstrates the fundamental process of convolution in a neural network. The kernels, represented as 3D cubes, are the learnable parameters that extract features from the input data. The kernel matrix organizes these kernels, one per row, so that they can be applied to the input as a single matrix multiplication. The convolution itself slides each kernel across the input, multiplies element-wise, and sums the results to produce an output feature map. The "preload weights" section suggests that the kernel weights are loaded into the computing hardware before the input data streams through. The diagram highlights the spatial relationships between the input, kernels, and output, as well as the order in which the computation proceeds over time. The use of letters instead of numbers marks this as a conceptual illustration rather than a specific numerical example. The arrangement of the letters within the matrices reflects a sliding-window approach, in which the kernel is applied to different parts of the input to generate the output. Although simplified, the diagram conveys the key mechanics of a convolutional layer.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Technical Diagram: Convolutional Kernel Operations and Data Flow

### Overview

This image is a technical diagram illustrating the process of applying multiple convolutional kernels to an input tensor to produce an output tensor, likely within the context of a Convolutional Neural Network (CNN). It breaks down the operation into its constituent parts: the kernels, the input data, the resulting kernel matrix, and the sequential processing flow over time that leads to the final output. The diagram uses a color-coded letter system (A, B, C, D for kernels/weights; a, b, c, d... for input/output data) to trace data through the transformation.

### Components/Axes

The diagram is segmented into five primary regions:

1. **Top-Left: Kernels**

* **Label:** `Kernels`

* **Sub-label:** `(C_{i+1} kernels of size k × k × C_i)`

* **Visual:** Four 3D cubes, each representing a kernel. Each cube has spatial dimensions `k` by `k` and depth `C_i`. The front face of each cube is a 2x2 grid containing the letters A, B, C, D. The cubes are colored (from left to right): yellow, purple, orange, light blue.

2. **Top-Right: Kernel Matrix**

* **Label:** `Kernel matrix`

* **Sub-label:** `(C_{i+1} × k²C_i)`

* **Visual:** A 2D grid with 4 rows and 16 columns. Each row corresponds to one of the four kernels (matching their colors: blue, orange, green, red). The cells contain repeating sequences of the letters A, B, C, D. The grid is labeled with dimension `m²` at the bottom.

3. **Middle-Left: Inputs**

* **Label:** `Inputs`

* **Sub-label:** `(n × n × C_i)`

* **Visual:** A 3D cube representing the input feature map. Its spatial dimensions are `n` by `n` and depth `C_i`. The front face is a 4x4 grid containing the letters a through p in row-major order.

4. **Bottom-Left: Outputs**

* **Label:** `Outputs`

* **Sub-label:** `(m × m × C_{i+1})`

* **Visual:** A 3D cube representing the output feature map. Its spatial dimensions are `m` by `m` and depth `C_{i+1}`. The front face is a 3x3 grid containing the letters a through i in row-major order.

5. **Center-Right: Processing Flow (Time Axis)**

* **Components:**

* **Preload weights:** A vertical column on the right, containing repeating blocks of the letters A, B, C, D in their respective colors (yellow, purple, orange, light blue). This represents the weights from the four kernels being loaded.

* **Inputs (to processing):** A vertical column to the left of the weights, showing sequences of letters (e.g., `k j i g f e c b a`) derived from the input data.

* **Output channels:** A vertical column at the bottom right, showing the resulting sequences (e.g., `i h g f e d c b a`) for each output channel, color-coded to match the kernels.

* **Axis:** A horizontal arrow at the bottom labeled `time`, pointing from right to left, indicating the sequential nature of the operation.

* **Grid:** A large central grid with 16 rows and multiple columns. Each row shows a sequence of letters (e.g., `k j i g f e c b a`) being processed. The rows are grouped into four color-coded blocks (blue, orange, green, red), each block containing four rows. The grid is labeled with dimension `m²` at the top and bottom.
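The "Preload weights", "Inputs", "Output channels", and `time` labels suggest a weight-stationary systolic array. The following is a minimal cycle-level sketch of that dataflow in Python. The following are assumptions, not read from the figure: processing element (r, c) holds kernel-matrix entry `W[c][r]`; input stream r enters with a skew of r cycles and moves one column right per cycle; partial sums move one row down per cycle and leave the bottom of column c as output channel c. The function name `systolic_matmul` and the test sizes are hypothetical.

```python
import random

def systolic_matmul(W, P):
    """Weight-stationary systolic array computing W @ P.

    W: kernel matrix, C rows (output channels) x R columns (k^2 * C_i).
    P: patch matrix, R rows x T columns (T = m^2 output positions).
    PE (r, c) holds W[c][r]; input stream r enters column 0 skewed by r cycles
    and moves right one PE per cycle; partial sums move down one PE per cycle.
    Returns out[c][p], the finished sums leaving the bottom of column c.
    """
    R, C, T = len(P), len(W), len(P[0])
    a = [[None] * C for _ in range(R)]   # input value latched in each PE
    s = [[None] * C for _ in range(R)]   # partial sum latched in each PE
    out = [[None] * T for _ in range(C)]
    for t in range(T + R + C):           # enough cycles to fill and drain the array
        new_a = [[None] * C for _ in range(R)]
        new_s = [[None] * C for _ in range(R)]
        for r in range(R):
            for c in range(C):
                if c == 0:               # skewed injection: P[r][p] enters at cycle p + r
                    p = t - r
                    x = P[r][p] if 0 <= p < T else None
                else:                    # value latched by the left neighbour last cycle
                    x = a[r][c - 1]
                if x is None:
                    continue             # PE idle this cycle
                above = 0 if r == 0 else s[r - 1][c]
                new_a[r][c] = x
                new_s[r][c] = above + W[c][r] * x   # multiply-accumulate
                if r == R - 1:           # bottom row: sum for position t - r - c is done
                    out[c][t - r - c] = new_s[r][c]
        a, s = new_a, new_s
    return out

random.seed(1)
R, C, T = 8, 4, 9   # k^2 * C_i = 8 (k = 2, C_i = 2 assumed), C_{i+1} = 4, m^2 = 9
W = [[random.randint(-2, 2) for _ in range(R)] for _ in range(C)]
P = [[random.randint(-3, 3) for _ in range(T)] for _ in range(R)]
ref = [[sum(W[c][r] * P[r][p] for r in range(R)) for p in range(T)] for c in range(C)]
print(systolic_matmul(W, P) == ref)   # True
```

The skew lines up each input value with the partial sum for the same output position as both travel through the array; the loop runs for m² + R + C cycles, enough for the last result to drain out of the bottom-right element.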

### Detailed Analysis

* **Kernel to Matrix Transformation:** The four 3D kernels (each `k x k x C_i`) are flattened and arranged into the "Kernel matrix." Each kernel becomes one row in this matrix. The repeating `A B C D` pattern in each row reflects the flattening order: the letters mark positions within a `k x k` slice, and the pattern repeats once for each of the `C_i` input channels, so repeated letters denote the same spatial position in different channels rather than replicated values.

* **Input Data Structure:** The input is a single `n x n x C_i` tensor. The letters `a` through `p` on its front face represent spatial data at a specific channel depth.

* **Processing Flow:** The central grid illustrates a convolution operation unfolding over time. Each sequence of input data (like `k j i g f e c b a`) holds one value for each of the `m²` output positions and is multiplied by the preloaded kernel weights (A, B, C, D). The accumulated results form a new sequence (like `i h g f e d c b a`), one value per output position, for each of the `C_{i+1}` output channels.

* **Color-Coded Data Path:**

* **Blue Path (Kernel 1):** The blue row in the Kernel matrix connects to the blue "Preload weights" (A, B, C, D in yellow boxes). This processes the top four rows of the central grid (blue background) to produce the top blue "Output channels" sequence (`i h g f e d c b a`).

* **Orange Path (Kernel 2):** The orange matrix row connects to orange "Preload weights." It processes the next four rows (orange background) to produce the orange output sequence.

* **Green Path (Kernel 3):** The green matrix row connects to green "Preload weights." It processes the next four rows (green background) to produce the green output sequence.

* **Red Path (Kernel 4):** The red matrix row connects to red "Preload weights." It processes the bottom four rows (red background) to produce the red output sequence.

* **Dimensional Relationships:** The diagram implies the relationship: `m = n - k + 1` (for valid convolution), which is standard for calculating output spatial size. The output depth `C_{i+1}` equals the number of kernels (4 in this example).
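The size relationship can be checked with a small Python helper. With the figure's 4 x 4 input and 3 x 3 output, `m = n - k + 1` gives k = 2. The `stride` and `padding` parameters go beyond the diagram and use the standard formula for a convolution with symmetric zero padding; the function name is hypothetical.

```python
def conv_output_size(n, k, stride=1, padding=0):
    """Output side length of a square convolution.

    With stride=1 and padding=0 (the case the diagram implies) this is
    m = n - k + 1; in general it is floor((n + 2*padding - k) / stride) + 1.
    """
    if k > n + 2 * padding:
        raise ValueError("kernel is larger than the padded input")
    return (n + 2 * padding - k) // stride + 1

print(conv_output_size(4, 2))                        # 3
print(conv_output_size(5, 3, stride=2, padding=1))   # 3
```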

### Key Observations

1. **Visual Metaphor for Flattening:** The diagram uses the 2D "Kernel matrix" and the letter sequences to visually represent the flattening of 3D kernel volumes into 1D vectors for computation.

2. **Explicit Time Dimension:** Unlike static diagrams, this one includes a `time` axis, emphasizing that the convolution is a sequential process of sliding the kernel and performing dot products across the input.

3. **Channel-wise Parallelism:** The four color-coded paths demonstrate that the operations for different output channels (different kernels) are independent and can be performed in parallel.

4. **Data Reuse:** The same input data sequences (e.g., `k j i g f e c b a`) appear in multiple rows within a color block, illustrating how input values are reused for different output positions.
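The extent of this reuse can be counted for one input channel of the figure's example. The Python sketch below assumes stride 1 and no padding; the variable names are illustrative.

```python
import string
from collections import Counter

N, K = 4, 2                          # 4 x 4 input (a..p), 2 x 2 kernel, as in the figure
M = N - K + 1                        # 3 x 3 output
letters = string.ascii_lowercase[:N * N]

# Each (output position, kernel element) pair reads one input value.
reads = Counter(
    letters[(i + r) * N + (j + s)]
    for i in range(M) for j in range(M)   # output position (i, j)
    for r in range(K) for s in range(K)   # kernel element A..D
)

for row in range(N):
    print(" ".join(f"{ch}:{reads[ch]}" for ch in letters[row * N:(row + 1) * N]))
print(sum(reads.values()), "reads of", len(reads), "distinct values")
# a:1 b:2 c:2 d:1
# e:2 f:4 g:4 h:2
# i:2 j:4 k:4 l:2
# m:1 n:2 o:2 p:1
# 36 reads of 16 distinct values
```

Interior values such as `f` are read four times, edge values twice, and corners once, so the 9 x 4 = 36 entries in the streams come from only 16 distinct inputs.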

### Interpretation

This diagram is a pedagogical tool designed to demystify the mechanics of a convolutional layer. It moves beyond the abstract mathematical notation (`Y = W * X`) to show the concrete data movement and transformation.

* **What it demonstrates:** It explicitly shows how a set of 3D kernels is transformed into a 2D weight matrix, how input data is accessed in sequences corresponding to the kernel's receptive field, and how these are combined via multiplication and accumulation (implied) to produce distinct output channels. The color-coding is critical for tracing the contribution of each kernel to the final output.

* **Relationships:** The core relationship shown is between the kernel parameters (stored in the matrix and preloaded weights) and the input data. The output is a direct function of their interaction, spatially organized (`m x m`) and depth-wise organized (`C_{i+1}`).

* **Notable Anomalies/Clarifications:** The use of letters (A-D, a-p) instead of numbers is a deliberate abstraction to focus on the *structure* of the operation rather than specific numerical values. The diagram simplifies by showing only the front face of the 3D tensors, implying the depth dimension (`C_i`) is handled by the tiling in the kernel matrix and the sequences in the processing flow. The "time" axis is a conceptual aid; in actual hardware, many of these operations would be parallelized.

**In essence, this image provides an "under the hood" look at a convolution, translating the high-level operation into a sequence of data-routing and matrix-manipulation steps, which makes it useful for understanding implementation details in deep learning hardware or software.**


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Kernel Processing Architecture

### Overview

This diagram illustrates a computational architecture for processing inputs through a series of kernels, kernel matrices, and preloaded weights. It depicts the flow of data from raw inputs to transformed outputs, emphasizing the role of kernel combinations and weight matrices in the transformation process.

### Components/Axes

1. **Kernels Section**:

- **Labels**:

- "Kernels (C_{i+1} kernels of size k x k x C_i)"

- Grid of colored blocks labeled with combinations like `AB`, `CD`, `AB`, `CD`, etc.

- **Structure**:

- A 2D grid of `k x k` kernels, each represented by a unique color-coded block (e.g., purple for `AB`, orange for `CD`).

- Each kernel has spatial size `k x k` and depth `C_i`; there are `C_{i+1}` kernels.

2. **Kernel Matrix Section**:

- **Labels**:

- "Kernel matrix (C_{i+1} x k²C_i)"

- **Structure**:

- A grid of letters (A–D) representing combinations of kernels.

- Each cell in the matrix corresponds to a specific kernel combination (e.g., `ABCD`, `ABCD`, etc.).

3. **Inputs Section**:

- **Labels**:

- "Inputs (n x n x C_i)"

- **Structure**:

- A 3D grid of letters (a–p) arranged in `n x n` spatial dimensions and `C_i` channels.

- Example: `a b c d` (row 1), `e f g h` (row 2), etc.

4. **Outputs Section**:

- **Labels**:

- "Outputs (m x m x C_{i+1})"

- **Structure**:

- A 3D grid of letters (a–i) with transformed values (e.g., `i h g f`, `d c b a`).

5. **Preload Weights Section**:

- **Labels**:

- "Preload weights"

- **Structure**:

- A grid of letters (A–D) and numbers (1–4), likely representing weight matrices or transformation rules.

- Example: `A1 B2 C3 D4` in rows.

### Detailed Analysis

- **Kernels to Kernel Matrix**:

- Kernels are flattened into a `C_{i+1} x k²C_i` matrix, one kernel per row, with the letter pattern `ABCD` repeating along each row.

- The matrix is color-coded to match the original kernels (e.g., purple for `AB`, orange for `CD`).

- **Inputs to Outputs**:

- Inputs (`n x n x C_i`) are processed through the kernel matrix and preload weights to produce outputs (`m x m x C_{i+1}`).

- The transformation produces output sequences (e.g., `i h g f`, `d c b a`) from the input data.

- **Preload Weights**:

- The weights grid (`A1 B2 C3 D4`) suggests a structured transformation applied to the kernel matrix, possibly for optimization or efficiency.

### Key Observations

1. **Color-Coded Kernels**:

- Kernels are distinctly color-coded (e.g., purple for `AB`, orange for `CD`), ensuring clear differentiation in the kernel matrix.

2. **Spatial Dimensions**:

- Inputs and outputs are 3D grids, with spatial dimensions (`n x n` and `m x m`) and channel dimensions (`C_i` and `C_{i+1}`).

3. **Kernel Combinations**:

- The kernel matrix explicitly shows how individual kernels are combined (e.g., `ABCD`, `ABCD`), indicating a hierarchical or composite processing approach.

4. **Preload Weights**:

- The weights grid (`A1 B2 C3 D4`) implies a systematic application of weights to the kernel matrix, possibly for bias or scaling.

### Interpretation

This diagram represents a **convolutional or matrix-based processing pipeline**, likely for tasks like image recognition or signal processing. The architecture emphasizes:

- **Kernel Reuse**: Kernels (`AB`, `CD`) are reused and combined into a matrix for efficient computation.

- **Weight Optimization**: Preload weights (`A1 B2 C3 D4`) suggest precomputed transformations to reduce computational overhead.

- **Dimensionality Reduction**: Inputs (`n x n x C_i`) are transformed into outputs (`m x m x C_{i+1}`). With `m = n - k + 1`, the spatial size shrinks whenever `k > 1`, while the channel count changes from `C_i` to `C_{i+1}`.

The use of color-coding and structured grids highlights the importance of **kernel organization** and **weight management** in the processing pipeline. This architecture likely aims to balance computational efficiency with the ability to capture complex patterns in the input data.
