## Diagram: Convolutional Neural Network Kernel Operation

### Overview

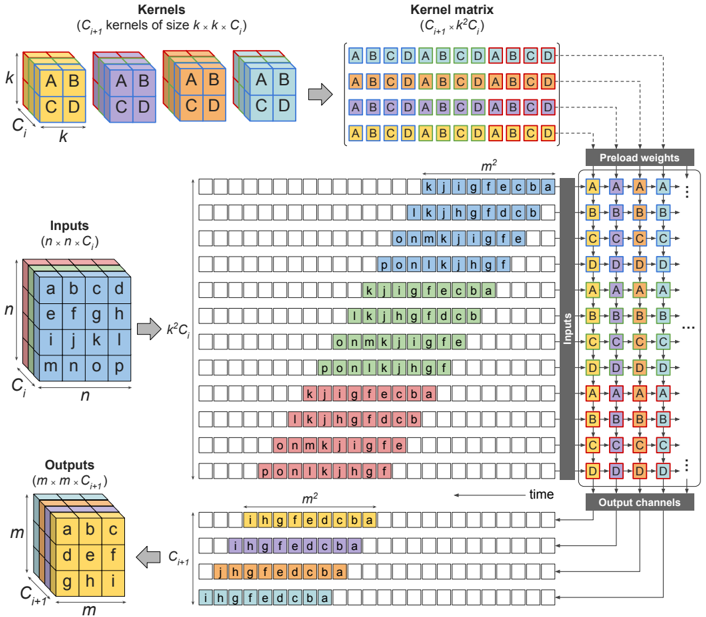

This diagram illustrates how convolutional kernels are applied to an input tensor to produce an output tensor. It shows multiple kernels, their arrangement into a kernel matrix, and the resulting output channels. A "preload weights" section is also shown; together with the time axis, it likely represents kernel weights that are loaded before the input data is fed through.

### Components/Axes

The diagram is segmented into several key areas:

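* **Kernels:** A set of 3D cubes representing the convolutional kernels. Dimensions are labeled as (C<sub>i+1</sub> kernels of size k x k x C<sub>i</sub>), that is, one kernel per output channel, consistent with the C<sub>i+1</sub> rows of the kernel matrix.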

* **Kernel Matrix:** A 2D matrix formed by arranging the kernels. Dimensions are labeled as (C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>).

* **Inputs:** A 3D tensor representing the input data, drawn as a grid. Dimensions are labeled as (n x n x C<sub>i</sub>).

* **Outputs:** A 3D tensor representing the output data, drawn as a grid. Dimensions are labeled as (m x m x C<sub>i+1</sub>).

* **Preload Weights:** A 4x4 matrix of kernel weights, likely loaded ahead of the computation so that inputs can then be fed through it.

* **Time:** An axis indicating the progression of the convolution operation over time.

The input and output matrices are labeled with letters (a, b, c, d, etc.) to illustrate the data flow.
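The relation between the kernel cubes and the kernel matrix dimensions listed above can be sketched in NumPy. This is a minimal illustration, not the diagram's own procedure: the function name `build_kernel_matrix`, the axis order `(C_out, k, k, C_in)`, and the concrete sizes are all assumptions, with `C_in` and `C_out` standing for C<sub>i</sub> and C<sub>i+1</sub>.

```python
import numpy as np

def build_kernel_matrix(kernels: np.ndarray) -> np.ndarray:
    """Flatten kernels of shape (C_out, k, k, C_in) into (C_out, k*k*C_in).

    Each kernel becomes one row of the resulting matrix.
    """
    c_out = kernels.shape[0]
    return kernels.reshape(c_out, -1)

# Hypothetical sizes, chosen only for illustration.
c_in, c_out, k = 3, 4, 2
kernels = np.arange(c_out * k * k * c_in, dtype=np.float32).reshape(c_out, k, k, c_in)

kernel_matrix = build_kernel_matrix(kernels)
print(kernel_matrix.shape)  # (4, 12), i.e. C_out x k^2 * C_in
```

Any fixed flattening order works, as long as the input windows are flattened the same way.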

### Detailed Analysis

**Kernels:**

* The diagram shows three kernels, each a cube with dimensions k x k x C<sub>i</sub>.

* Each kernel is labeled with letters A, B, C, and D on its faces.

**Kernel Matrix:**

* The kernel matrix is formed by flattening the kernels; given its labeled dimensions, each kernel presumably becomes one row of length k<sup>2</sup>C<sub>i</sub>.

* The matrix has dimensions C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>.

* The letters A, B, C, and D are repeated within the matrix, indicating the arrangement of kernel elements.

**Inputs:**

* The input matrix has dimensions n x n x C<sub>i</sub>.

* The input matrix is populated with letters a through p, arranged in a grid.

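* The diagram shows the application of the kernel matrix to the input matrix. The letters k, j, i, g, f, e, c, b, a appear along this path. If a through p fill a 4 x 4 grid row by row, these nine letters are the top-left corners of the nine 2 x 2 windows, so they most likely mark input windows entering the computation, not convolution results.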
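One way to see where the nine letters a, b, c, e, f, g, i, j, k come from is to enumerate the sliding windows of a small input. The sketch below assumes that the letters a through p fill a 4 x 4 grid in row-major order, that the kernel is 2 x 2 (inferred from the four labels A to D), and that the stride is 1 with no padding. None of these values is stated explicitly in the diagram.

```python
import numpy as np

# Assumptions: 4 x 4 input filled row by row with a..p, 2 x 2 kernel,
# stride 1, no padding.
n, k = 4, 2
letters = np.array(list("abcdefghijklmnop")).reshape(n, n)

m = n - k + 1  # output side length under these assumptions

# One flattened k x k window per output position, in row-major order.
windows = [
    letters[r:r + k, c:c + k].ravel()
    for r in range(m)
    for c in range(m)
]

top_left = "".join(w[0] for w in windows)
print(m)                    # 3
print(top_left)             # abcefgijk
print("".join(windows[0]))  # abef
```

Under these assumptions the top-left corners of the nine windows are exactly the letters shown in the diagram, which appear there in reverse order.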

**Outputs:**

* The output matrix has dimensions m x m x C<sub>i+1</sub>.

* The output matrix is populated with letters i through a, arranged in a grid.

**Preload Weights:**

* The preload weights matrix is a 4x4 matrix.

* The matrix is populated with letters A, B, C, and D, repeated in a pattern.

* The matrix is labeled "Inputs" on the left and "Output channels" on the top.

**Data Flow:**

* The diagram shows the flow of data from the input matrix, through the kernel matrix, to the output matrix.

* The time axis indicates that input values enter the computation over successive time steps, not all at once.
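The combination of a time axis and preloaded weights can be read as a weight-stationary schedule: the kernel matrix is loaded once, then one flattened input window is processed per time step. The following is a simplified sketch of that reading, not a model of any particular hardware. The function name `stream_convolution` and all sizes are hypothetical.

```python
import numpy as np

def stream_convolution(kernel_matrix: np.ndarray, patches: np.ndarray) -> np.ndarray:
    """Apply a fixed, preloaded kernel matrix to one patch per time step.

    kernel_matrix: (C_out, k*k*C_in); patches: (T, k*k*C_in).
    Returns an array of shape (T, C_out).
    """
    weights = kernel_matrix.copy()       # "preload": weights stay fixed afterwards
    outputs = []
    for patch in patches:                # one input window enters per time step
        outputs.append(weights @ patch)  # one value per output channel
    return np.stack(outputs)

rng = np.random.default_rng(0)
kernel_matrix = rng.standard_normal((4, 8))  # hypothetical: C_out = 4, k*k*C_in = 8
patches = rng.standard_normal((9, 8))        # hypothetical: 9 windows over time

out = stream_convolution(kernel_matrix, patches)
print(out.shape)  # (9, 4)
```

The loop is equivalent to the single product `patches @ kernel_matrix.T`; it is written step by step only to mirror the time axis.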

### Key Observations

* The diagram illustrates the core operation of a convolutional neural network layer.

* The kernel matrix (C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub>) holds one flattened kernel per output channel, so all kernels can be applied to an input window at once.

* The convolution operation involves sliding each kernel over the input and, at every position, multiplying element-wise and summing. With the kernel matrix, this becomes a product of the kernel matrix with each flattened input window.

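* The preload weights matrix holds kernel weights, which are learned during training. "Preload" most plausibly means the weights are loaded before input data is fed through, not that they are pre-training initial values.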

* The letters used in the matrices are likely placeholders for numerical values.
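The sliding-window view and the kernel-matrix view noted above produce the same output. The sketch below checks this numerically under stated assumptions: stride 1, no padding, tensors laid out as `(n, n, C_in)` and `(C_out, k, k, C_in)`, and "convolution" in the CNN sense (no kernel flip). The function names are illustrative only.

```python
import numpy as np

def conv_direct(x: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """Sliding-window convolution: x (n, n, C_in), kernels (C_out, k, k, C_in)."""
    n, k, c_out = x.shape[0], kernels.shape[1], kernels.shape[0]
    m = n - k + 1
    out = np.zeros((m, m, c_out))
    for r in range(m):
        for c in range(m):
            window = x[r:r + k, c:c + k, :]
            for o in range(c_out):
                # Element-wise multiply, then sum over the whole window.
                out[r, c, o] = np.sum(window * kernels[o])
    return out

def conv_as_matmul(x: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """Same result via a kernel matrix times a matrix of flattened windows."""
    n, k, c_out = x.shape[0], kernels.shape[1], kernels.shape[0]
    m = n - k + 1
    kernel_matrix = kernels.reshape(c_out, -1)             # (C_out, k*k*C_in)
    patches = np.stack(
        [x[r:r + k, c:c + k, :].ravel() for r in range(m) for c in range(m)],
        axis=1,
    )                                                      # (k*k*C_in, m*m)
    return (kernel_matrix @ patches).T.reshape(m, m, c_out)

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 4, 3))           # hypothetical: n = 4, C_in = 3
kernels = rng.standard_normal((4, 2, 2, 3))  # hypothetical: C_out = 4, k = 2

direct = conv_direct(x, kernels)
via_matmul = conv_as_matmul(x, kernels)
print(direct.shape)                     # (3, 3, 4)
print(np.allclose(direct, via_matmul))  # True
```

The matrix form trades extra memory (overlapping windows are copied) for a single dense product, which is why it suits hardware built around matrix multiplication.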

### Interpretation

This diagram shows how a convolutional layer can be computed as a matrix operation. The kernels, drawn as 3D cubes, are the learnable parameters that extract features from the input. Arranging them into a kernel matrix of size C<sub>i+1</sub> x k<sup>2</sup>C<sub>i</sub> lets every kernel be applied to an input window with a single matrix-vector product: each k x k x C<sub>i</sub> window is multiplied element-wise with each kernel and summed, giving one value per output channel. Repeating this for every window position (the sliding-window pattern suggested by the letter arrangement) fills the m x m x C<sub>i+1</sub> output.

The "preload weights" section, together with the time axis, suggests that the kernel weights are loaded once and the input windows are then fed through over successive time steps. This reading is an inference from the labels, not something the diagram states explicitly. The letters stand in for numerical values, so the figure is a conceptual illustration, not a worked numerical example.