## Diagram: Taxonomy of Explainable AI (XAI) Techniques

### Overview

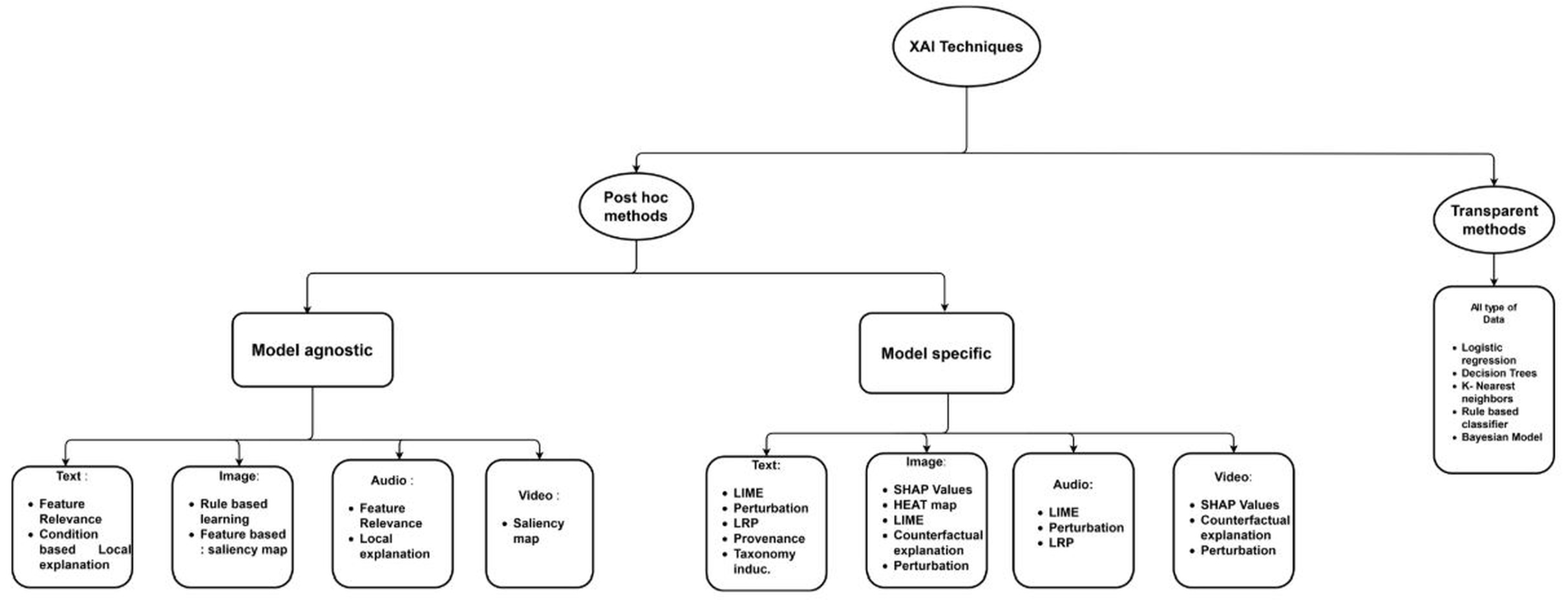

The image is a hierarchical taxonomy diagram that classifies Explainable AI (XAI) techniques. It first splits methods by their fundamental approach (Post hoc vs. Transparent). It then divides the post hoc methods by their dependence on the model (agnostic vs. specific) and by data modality (Text, Image, Audio, Video). The diagram is drawn as a top-down tree, with connecting lines showing parent-child relationships.

### Components

The diagram is structured as a tree with the following hierarchical levels and components:

1. **Root Node (Top Center):** An oval labeled **"XAI Techniques"**.

2. **First-Level Branches:** Two main categories branch from the root:
   * **Left Branch:** An oval labeled **"Post hoc methods"**.
   * **Right Branch:** An oval labeled **"Transparent methods"**.

3. **Second-Level Branches (under "Post hoc methods"):** Two subcategories:
   * **Left Sub-branch:** A rectangle labeled **"Model agnostic"**.
   * **Right Sub-branch:** A rectangle labeled **"Model specific"**.

4. **Leaf Nodes (Data Modality Categories):** Under both "Model agnostic" and "Model specific", four rounded rectangles represent data types: **Text**, **Image**, **Audio**, and **Video**.

5. **Leaf Node (under "Transparent methods"):** A single rounded rectangle labeled **"All type of Data"**.

### Detailed Analysis

The specific techniques and models listed under each category are as follows:

**A. Under "Post hoc methods" -> "Model agnostic":**

* **Text:**
  * Feature Relevance
  * Condition based Local explanation
* **Image:**
  * Rule based learning
  * Feature based: saliency map
* **Audio:**
  * Feature Relevance
  * Local explanation
* **Video:**
  * Saliency map

**B. Under "Post hoc methods" -> "Model specific":**

* **Text:**
  * LIME
  * Perturbation
  * LRP
  * Provenance
  * Taxonomy induc.
* **Image:**
  * SHAP Values
  * HEAT map
  * LIME
  * Counterfactual explanation
  * Perturbation
* **Audio:**
  * LIME
  * Perturbation
  * LRP
* **Video:**
  * SHAP Values
  * Counterfactual explanation
  * Perturbation

**C. Under "Transparent methods" -> "All type of Data":**

* Logistic regression

* Decision Trees

* K-Nearest neighbors

* Rule based classifier

* Bayesian Model
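To make the listing above easier to query, the taxonomy can be encoded as a nested dictionary. This is a minimal sketch: the dictionary layout and the helper `modalities_by_technique` are hypothetical, while the labels are transcribed from the diagram. The helper inverts one post hoc branch so you can see which modalities list a given technique.

```python
from collections import defaultdict

# Hypothetical encoding of the diagram; labels transcribed from the figure.
TAXONOMY = {
    "Post hoc methods": {
        "Model agnostic": {
            "Text": ["Feature Relevance", "Condition based Local explanation"],
            "Image": ["Rule based learning", "Feature based: saliency map"],
            "Audio": ["Feature Relevance", "Local explanation"],
            "Video": ["Saliency map"],
        },
        "Model specific": {
            "Text": ["LIME", "Perturbation", "LRP", "Provenance", "Taxonomy induc."],
            "Image": ["SHAP Values", "HEAT map", "LIME",
                      "Counterfactual explanation", "Perturbation"],
            "Audio": ["LIME", "Perturbation", "LRP"],
            "Video": ["SHAP Values", "Counterfactual explanation", "Perturbation"],
        },
    },
    "Transparent methods": {
        "All type of Data": [
            "Logistic regression", "Decision Trees", "K-Nearest neighbors",
            "Rule based classifier", "Bayesian Model",
        ],
    },
}


def modalities_by_technique(branch: str) -> dict[str, list[str]]:
    """Map each technique in a post hoc branch to the modalities that list it."""
    index: dict[str, list[str]] = defaultdict(list)
    for modality, techniques in TAXONOMY["Post hoc methods"][branch].items():
        for technique in techniques:
            index[technique].append(modality)
    return dict(index)


if __name__ == "__main__":
    specific = modalities_by_technique("Model specific")
    # Print only techniques that recur across more than one modality.
    for technique, modalities in sorted(specific.items()):
        if len(modalities) > 1:
            print(f"{technique}: {', '.join(modalities)}")
```

Run on the "Model specific" branch, this prints `Counterfactual explanation: Image, Video`, `LIME: Text, Image, Audio`, `LRP: Text, Audio`, `Perturbation: Text, Image, Audio, Video`, and `SHAP Values: Image, Video`.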

### Key Observations

1. **Structural Dichotomy:** The primary split is between methods applied after model training ("Post hoc") and inherently interpretable models ("Transparent").

2. **Post Hoc Subdivision:** Post hoc methods are further divided based on whether they require knowledge of the model's internals ("Model specific") or treat it as a black box ("Model agnostic").

3. **Data Modality Focus:** Both "Model agnostic" and "Model specific" branches are organized by the type of data they are applied to (Text, Image, Audio, Video), implying that the diagram treats data modality as a key factor in choosing an XAI technique.

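4. **Technique Overlap:** Several techniques recur across data modalities. Within "Model specific", Perturbation appears under all four modalities, LIME under Text, Image, and Audio, LRP under Text and Audio, and SHAP Values and Counterfactual explanation under Image and Video; within "Model agnostic", Feature Relevance appears under both Text and Audio. This suggests these methods are general enough to be adapted to several data types. Note that placing LIME and SHAP under "Model specific" departs from common usage: LIME (Local Interpretable Model-agnostic Explanations) is model-agnostic by design, and SHAP has model-agnostic variants such as Kernel SHAP.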

5. **Transparent Methods List:** The "Transparent methods" branch lists classic, inherently interpretable machine learning models rather than post-hoc explanation techniques.

### Interpretation

This diagram presents a structured taxonomy for navigating the field of Explainable AI. It suggests that selecting an appropriate explanation method involves a sequential decision process:

1. **First, determine the fundamental approach:** Is the goal to explain an existing complex model (requiring a *Post hoc* method), or to use an inherently understandable model from the start (a *Transparent* method)?

2. **For Post hoc methods, determine model accessibility:** If using a post hoc method, can you access the model's internal structure (*Model specific*), or must you treat it as a complete black box (*Model agnostic*)?

3. **Finally, consider the data domain:** The specific technique is then chosen based on the modality of the data being explained (Text, Image, Audio, Video).
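The three-step decision process above can be sketched as a small lookup function that walks the tree top-down. This is a hypothetical illustration: `suggest_techniques` and its parameters are not from the source, and only the leaf labels are transcribed from the diagram.

```python
from typing import Optional

# Leaf contents transcribed from the diagram.
POST_HOC: dict[str, dict[str, list[str]]] = {
    "Model agnostic": {
        "Text": ["Feature Relevance", "Condition based Local explanation"],
        "Image": ["Rule based learning", "Feature based: saliency map"],
        "Audio": ["Feature Relevance", "Local explanation"],
        "Video": ["Saliency map"],
    },
    "Model specific": {
        "Text": ["LIME", "Perturbation", "LRP", "Provenance", "Taxonomy induc."],
        "Image": ["SHAP Values", "HEAT map", "LIME",
                  "Counterfactual explanation", "Perturbation"],
        "Audio": ["LIME", "Perturbation", "LRP"],
        "Video": ["SHAP Values", "Counterfactual explanation", "Perturbation"],
    },
}
TRANSPARENT: list[str] = [
    "Logistic regression", "Decision Trees", "K-Nearest neighbors",
    "Rule based classifier", "Bayesian Model",
]


def suggest_techniques(
    explain_existing_model: bool,
    has_internal_access: bool = False,
    modality: Optional[str] = None,
) -> list[str]:
    """Return the diagram's candidate techniques for the given constraints."""
    # Step 1: fundamental approach. Building an interpretable model from the
    # start leads to the transparent branch, which covers all data types.
    if not explain_existing_model:
        return list(TRANSPARENT)
    # Step 2: model accessibility selects the post hoc sub-branch.
    branch = "Model specific" if has_internal_access else "Model agnostic"
    # Step 3: the data modality selects the leaf.
    leaves = POST_HOC[branch]
    if modality not in leaves:
        raise ValueError(f"modality must be one of {sorted(leaves)}, got {modality!r}")
    return list(leaves[modality])


if __name__ == "__main__":
    print(suggest_techniques(True, has_internal_access=False, modality="Video"))
```

This prints `['Saliency map']`. The sketch follows the diagram's binary split at step 2; in practice, a model whose internals are accessible can also be explained with model-agnostic methods, so a fuller tool might return both sub-branches in that case.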

The taxonomy highlights that the XAI landscape is not monolithic. The "Model specific" branch lists five techniques each for Text and Image data but only three each for Audio and Video, which may reflect the relative maturity of research in those areas. The inclusion of classic models such as Decision Trees under "Transparent methods" reinforces the idea that explainability is a property not only of dedicated explanation techniques but also of model choice. The diagram thus serves as a conceptual map that helps practitioners identify suitable explanation strategies given their constraints: model access, data type, and interpretability requirements.
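Logistic regression, one of the transparent methods listed in the diagram, shows concretely how model choice can supply the explanation. Each coefficient times its feature value is that feature's additive contribution to the log-odds, so the explanation is read directly off the model rather than produced by a separate post hoc method. This is a minimal sketch with hypothetical, hand-set coefficients and feature names that are not fitted to any data.

```python
import math

# Hypothetical, hand-set coefficients for illustration; not fitted to any data.
WEIGHTS: dict[str, float] = {"hours_studied": 0.5, "absences": -0.25, "prior_grade": 1.5}
BIAS = -1.0


def logit(x: dict[str, float]) -> float:
    """Log-odds: the bias plus a weighted sum of the features."""
    return BIAS + sum(w * x[name] for name, w in WEIGHTS.items())


def predict_proba(x: dict[str, float]) -> float:
    """Probability of the positive class via the logistic (sigmoid) function."""
    return 1.0 / (1.0 + math.exp(-logit(x)))


def explain(x: dict[str, float]) -> dict[str, float]:
    """Each feature's additive contribution to the log-odds, read off the model."""
    return {name: w * x[name] for name, w in WEIGHTS.items()}


if __name__ == "__main__":
    sample = {"hours_studied": 2.0, "absences": 4.0, "prior_grade": 1.0}
    print(explain(sample))
    print(round(predict_proba(sample), 3))
```

For this sample the contributions are 1.0, -1.0, and 1.5; together with the bias of -1.0 they sum exactly to the log-odds of 0.5, giving a predicted probability of about 0.622. No approximation is involved, which is what distinguishes this transparent explanation from a post hoc one.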