
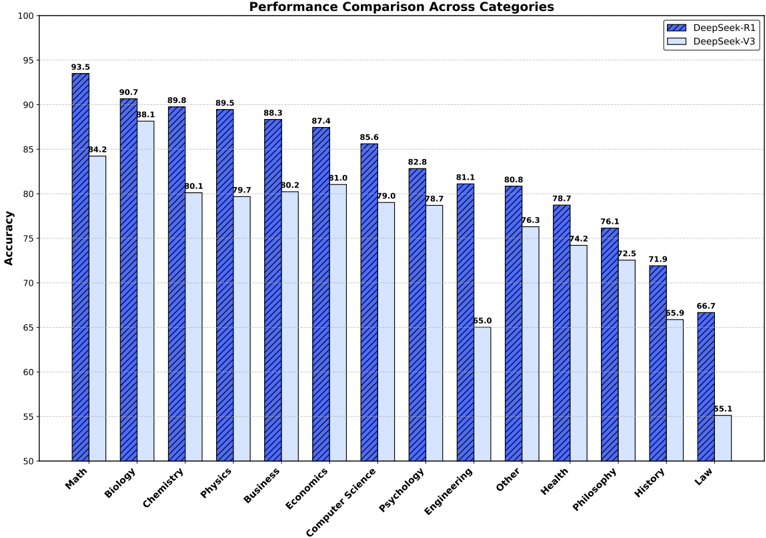

## Grouped Bar Chart: Performance Comparison Across Categories

### Overview

This image is a grouped bar chart, titled "Performance Comparison Across Categories," that compares the accuracy of two AI models, "DeepSeek-R1" and "DeepSeek-V3," across 14 subject categories. Accuracy is plotted on the y-axis as a percentage.

### Components/Axes

* **Chart Title:** "Performance Comparison Across Categories" (centered at the top).

* **Y-Axis:**

    * **Label:** "Accuracy" (rotated vertically on the left side).

    * **Scale:** Linear scale ranging from 50 to 100, with major tick marks every 5 units (50, 55, 60, ..., 100).

* **X-Axis:**

    * **Categories (from left to right):** Math, Biology, Chemistry, Physics, Business, Economics, Computer Science, Psychology, Engineering, Other, Health, Philosophy, History, Law.

* **Legend:** Located in the top-right corner of the chart area.

    * **DeepSeek-R1:** Represented by dark blue bars with a diagonal stripe pattern.

    * **DeepSeek-V3:** Represented by solid, light blue bars.

### Detailed Analysis

The chart displays paired bars for each category. The left bar (dark blue, striped) is DeepSeek-R1, and the right bar (light blue, solid) is DeepSeek-V3. The exact accuracy values are annotated above each bar.

| Category | DeepSeek-R1 Accuracy (%) | DeepSeek-V3 Accuracy (%) | Performance Gap (R1 - V3, points) |
| :--- | :--- | :--- | :--- |
| Math | 93.5 | 84.2 | +9.3 |
| Biology | 90.7 | 88.1 | +2.6 |
| Chemistry | 89.8 | 80.1 | +9.7 |
| Physics | 89.5 | 79.7 | +9.8 |
| Business | 88.3 | 80.2 | +8.1 |
| Economics | 87.4 | 81.0 | +6.4 |
| Computer Science | 85.6 | 79.0 | +6.6 |
| Psychology | 82.8 | 78.7 | +4.1 |
| Engineering | 81.1 | 65.0 | +16.1 |
| Other | 80.8 | 76.3 | +4.5 |
| Health | 78.7 | 74.2 | +4.5 |
| Philosophy | 76.1 | 72.5 | +3.6 |
| History | 71.9 | 65.9 | +6.0 |
| Law | 66.7 | 55.1 | +11.6 |
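
The gap column can be recomputed from the two accuracy columns. The following is a minimal sketch, assuming the values are transcribed correctly from the bar annotations; the names `SCORES` and `performance_gaps` are illustrative and not part of the chart.

```python
# Accuracy values (percent) transcribed from the table: (DeepSeek-R1, DeepSeek-V3).
SCORES = {
    "Math": (93.5, 84.2),
    "Biology": (90.7, 88.1),
    "Chemistry": (89.8, 80.1),
    "Physics": (89.5, 79.7),
    "Business": (88.3, 80.2),
    "Economics": (87.4, 81.0),
    "Computer Science": (85.6, 79.0),
    "Psychology": (82.8, 78.7),
    "Engineering": (81.1, 65.0),
    "Other": (80.8, 76.3),
    "Health": (78.7, 74.2),
    "Philosophy": (76.1, 72.5),
    "History": (71.9, 65.9),
    "Law": (66.7, 55.1),
}


def performance_gaps(scores):
    """Return {category: R1 - V3} in percentage points, rounded to one decimal."""
    return {cat: round(r1 - v3, 1) for cat, (r1, v3) in scores.items()}


gaps = performance_gaps(SCORES)
for cat, gap in gaps.items():
    # Left-align the category name and always show the sign of the gap.
    print(f"{cat:<17}{gap:+.1f}")
```

Rounding to one decimal place avoids floating-point noise such as `16.099999999999994` for Engineering.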

**Trend Verification:**

* **DeepSeek-R1 (Dark Blue, Striped):** The series decreases strictly from left to right, with no exceptions. The highest value is in Math (93.5) and the lowest is in Law (66.7).

* **DeepSeek-V3 (Light Blue, Solid):** This series also trends downward from left to right, always below R1, but it is not monotonic: it rises from Math (84.2) to Biology (88.1), from Physics (79.7) through Business (80.2) to Economics (81.0), and from Engineering (65.0) to Other (76.3). Its highest point is in Biology (88.1) and its lowest is in Law (55.1).
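
One way to check the two trend statements is to list every place where a series increases between neighboring categories. This is a small sketch using the table values; `rises` is a hypothetical helper name introduced here for illustration.

```python
# Categories in left-to-right axis order, with the annotated accuracies.
CATEGORIES = [
    "Math", "Biology", "Chemistry", "Physics", "Business", "Economics",
    "Computer Science", "Psychology", "Engineering", "Other", "Health",
    "Philosophy", "History", "Law",
]
R1 = [93.5, 90.7, 89.8, 89.5, 88.3, 87.4, 85.6, 82.8, 81.1, 80.8, 78.7, 76.1, 71.9, 66.7]
V3 = [84.2, 88.1, 80.1, 79.7, 80.2, 81.0, 79.0, 78.7, 65.0, 76.3, 74.2, 72.5, 65.9, 55.1]


def rises(values, labels):
    """Return (from, to) label pairs where the value increases left to right."""
    return [
        (labels[i], labels[i + 1])
        for i in range(len(values) - 1)
        if values[i + 1] > values[i]
    ]


r1_rises = rises(R1, CATEGORIES)
v3_rises = rises(V3, CATEGORIES)
print("R1 increases:", r1_rises)
print("V3 increases:", v3_rises)
```

An empty list means the series never increases from one category to the next; any pairs returned mark the local exceptions to the downward trend.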

### Key Observations

1. **Consistent Superiority:** DeepSeek-R1 outperforms DeepSeek-V3 in every single category presented.

2. **Largest Performance Gaps:** The most significant differences in accuracy are observed in **Engineering** (+16.1 points) and **Law** (+11.6 points). The smallest gap is in **Biology** (+2.6 points).

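3. **Overall Performance Hierarchy:** Both models score highest in Math and Biology and lowest in Law. DeepSeek-R1's top four categories are all natural sciences and mathematics (Math, Biology, Chemistry, Physics), and its bottom two are History and Law. DeepSeek-V3 deviates from this pattern: Economics (81.0) and Business (80.2) edge out Chemistry (80.1) and Physics (79.7), and Engineering (65.0) ranks below History (65.9).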

4. **Position of "Other":** The "Other" category (80.8 for R1, 76.3 for V3) sits in the lower-middle range of the chart, ranking 10th of 14 for R1 and 9th of 14 for V3. Because it is a catch-all category, it likely mixes subjects of varying difficulty, so its score is hard to interpret on its own.

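5. **Steep Drop-off for V3 in Engineering:** DeepSeek-R1 declines only slightly in Engineering (82.8 in Psychology, 81.1 in Engineering, 80.8 in Other), in line with its overall trend. DeepSeek-V3, by contrast, falls 13.7 points from Psychology (78.7) to Engineering (65.0) and then rebounds to 76.3 in Other, making Engineering a clear dip for V3 only.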
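
The rankings and extremes behind these observations can be recomputed directly from the table. The sketch below assumes the transcribed values are accurate; `SCORES` and `rank_of` are illustrative names, not anything defined by the chart.

```python
# (DeepSeek-R1, DeepSeek-V3) accuracy in percent, transcribed from the table.
SCORES = {
    "Math": (93.5, 84.2), "Biology": (90.7, 88.1), "Chemistry": (89.8, 80.1),
    "Physics": (89.5, 79.7), "Business": (88.3, 80.2), "Economics": (87.4, 81.0),
    "Computer Science": (85.6, 79.0), "Psychology": (82.8, 78.7),
    "Engineering": (81.1, 65.0), "Other": (80.8, 76.3), "Health": (78.7, 74.2),
    "Philosophy": (76.1, 72.5), "History": (71.9, 65.9), "Law": (66.7, 55.1),
}


def rank_of(category, model_index):
    """1-based rank of a category for one model (0 = R1, 1 = V3), best first."""
    ordered = sorted(SCORES, key=lambda c: SCORES[c][model_index], reverse=True)
    return ordered.index(category) + 1


# Gap per category in percentage points (R1 minus V3).
gaps = {c: round(r1 - v3, 1) for c, (r1, v3) in SCORES.items()}
largest_gap = max(gaps, key=gaps.get)
smallest_gap = min(gaps, key=gaps.get)

# The three weakest categories for V3, lowest first.
v3_bottom3 = sorted(SCORES, key=lambda c: SCORES[c][1])[:3]

# Size of V3's drop from Psychology to Engineering.
v3_drop = round(SCORES["Psychology"][1] - SCORES["Engineering"][1], 1)

print("R1 wins everywhere:", all(r1 > v3 for r1, v3 in SCORES.values()))
print("Largest gap:", largest_gap, "| smallest gap:", smallest_gap)
print("Rank of 'Other':", rank_of("Other", 0), "(R1),", rank_of("Other", 1), "(V3)")
print("V3 bottom three:", v3_bottom3, "| V3 Psychology-to-Engineering drop:", v3_drop)
```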

### Interpretation

This chart benchmarks two AI models' knowledge and reasoning across a broad range of academic subjects. The data shows that **DeepSeek-R1 is more accurate than DeepSeek-V3 in all 14 tested categories**, by margins ranging from 2.6 to 16.1 points.

The strictly decreasing DeepSeek-R1 series indicates that the categories are ordered by decreasing R1 accuracy, not alphabetically; DeepSeek-V3 follows the same general decline with several local exceptions. This ordering highlights a key pattern: both models tend to perform better in formal, rule-based, or quantitative disciplines (Math, Physics, Computer Science) than in disciplines that rely heavily on interpretation, precedent, and nuanced human context (Law, History, Philosophy). Engineering is the main exception for DeepSeek-V3, which scores lower there than in History.

The particularly large gaps in **Engineering** and **Law** are noteworthy. This could imply that DeepSeek-R1 has significantly better training data, architecture, or fine-tuning for applied technical problem-solving and complex regulatory/textual analysis, respectively. The relatively small gap in **Biology** might suggest that both models have been trained on similar, high-quality biological datasets, or that the nature of the tasks in this category is such that the performance ceiling is closer for both models.

In essence, the chart does not just show that one model is better; it maps *where* and by *how much* it is better, which is useful for selecting a model for a specific task and for identifying areas for future improvement. On accuracy alone, the data favors DeepSeek-R1 over DeepSeek-V3 in every one of these subject areas.