## Bar Chart: Performance Comparison Across Categories

### Overview

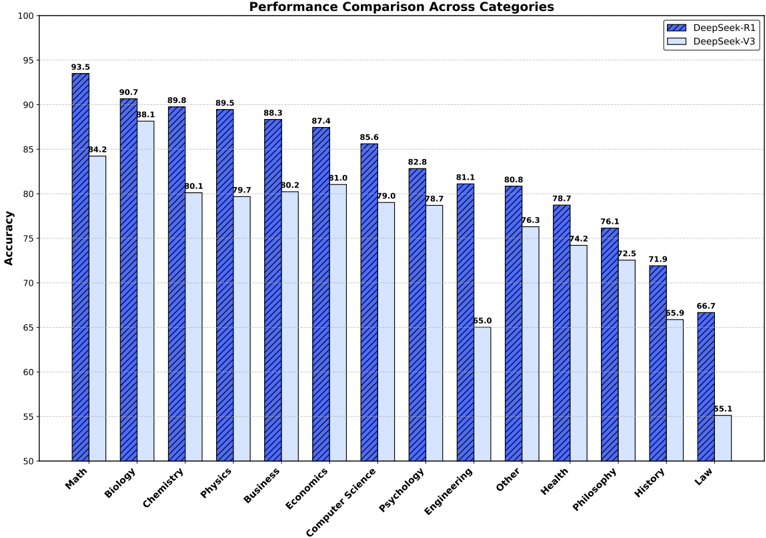

This image presents a bar chart comparing the performance (Accuracy) of two models, DeepSeek-R1 and DeepSeek-V3, across fourteen categories. The chart uses vertical bars to represent the accuracy score for each category, with two bars per category, one for each model.

### Components/Axes

* **Title:** "Performance Comparison Across Categories" (centered at the top)

* **X-axis:** Categories - Math, Biology, Chemistry, Physics, Business, Economics, Computer Science, Psychology, Engineering, Other, Health, Philosophy, History, Law. Labels are positioned along the bottom of the chart, angled for readability.

* **Y-axis:** Accuracy, ranging from 50 to 100, with increments of 5. Labels are positioned along the left side of the chart.

* **Legend:** Located in the top-right corner.

  * DeepSeek-R1: Represented by a dark blue color.

  * DeepSeek-V3: Represented by a light blue color.

### Detailed Analysis

The chart displays accuracy scores for each model in each category. The values for DeepSeek-R1 and DeepSeek-V3 are listed below in the chart's left-to-right order.

* **Math:** DeepSeek-R1: 93.5, DeepSeek-V3: 84.2

* **Biology:** DeepSeek-R1: 90.7, DeepSeek-V3: 88.1

* **Chemistry:** DeepSeek-R1: 89.8, DeepSeek-V3: 80.1

* **Physics:** DeepSeek-R1: 89.5, DeepSeek-V3: 79.7

* **Business:** DeepSeek-R1: 88.3, DeepSeek-V3: 87.4

* **Economics:** DeepSeek-R1: 87.4, DeepSeek-V3: 81.0

* **Computer Science:** DeepSeek-R1: 85.6, DeepSeek-V3: 79.0

* **Psychology:** DeepSeek-R1: 82.8, DeepSeek-V3: 78.7

* **Engineering:** DeepSeek-R1: 81.1, DeepSeek-V3: 65.0

* **Other:** DeepSeek-R1: 80.8, DeepSeek-V3: 76.3

* **Health:** DeepSeek-R1: 78.7, DeepSeek-V3: 74.2

* **Philosophy:** DeepSeek-R1: 76.1, DeepSeek-V3: 72.5

* **History:** DeepSeek-R1: 71.9, DeepSeek-V3: 66.9

* **Law:** DeepSeek-R1: 65.1, DeepSeek-V3: 55.1

**Trends:**

* DeepSeek-R1 outperforms DeepSeek-V3 in all fourteen categories.

* The difference in performance is largest in Engineering (16.1 points), Law (10.0 points), and Physics (9.8 points). History, at 5.0 points, falls in the middle of the range.

* The difference in performance is smallest in Business (0.9 points) and Biology (2.6 points).
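The gaps behind these trends can be recomputed from the values listed above. The following is a minimal sketch, assuming the numbers read from the chart are accurate; the `scores` dictionary and the `accuracy_gaps` helper are illustrative names, not part of the original figure.

```python
# Accuracy values as read from the chart: (DeepSeek-R1, DeepSeek-V3).
# These are transcribed from the list above and assumed to be correct.
scores = {
    "Math": (93.5, 84.2),
    "Biology": (90.7, 88.1),
    "Chemistry": (89.8, 80.1),
    "Physics": (89.5, 79.7),
    "Business": (88.3, 87.4),
    "Economics": (87.4, 81.0),
    "Computer Science": (85.6, 79.0),
    "Psychology": (82.8, 78.7),
    "Engineering": (81.1, 65.0),
    "Other": (80.8, 76.3),
    "Health": (78.7, 74.2),
    "Philosophy": (76.1, 72.5),
    "History": (71.9, 66.9),
    "Law": (65.1, 55.1),
}


def accuracy_gaps(scores):
    """Return {category: R1 minus V3}, rounded to one decimal place."""
    return {cat: round(r1 - v3, 1) for cat, (r1, v3) in scores.items()}


gaps = accuracy_gaps(scores)

# Rank categories from the largest gap to the smallest.
ranked = sorted(gaps.items(), key=lambda item: item[1], reverse=True)
largest = ranked[0]
smallest = ranked[-1]

print(largest)   # ('Engineering', 16.1)
print(smallest)  # ('Business', 0.9)
```

Rounding to one decimal place matches the precision of the chart labels and avoids floating-point noise in the differences.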

### Key Observations

* DeepSeek-R1 achieves the highest accuracy in Math (93.5).

* DeepSeek-V3 achieves the lowest accuracy in Law (55.1).

* Engineering shows the largest performance gap between the two models (81.1 vs. 65.0, a difference of 16.1 points).

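* The accuracy scores generally decrease from left to right across the categories. DeepSeek-R1's scores fall at every step, which is consistent with the categories being sorted by its accuracy, while DeepSeek-V3's scores follow the same overall trend with a few local increases. The ordering may also reflect a general difficulty trend.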
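The left-to-right trend can be checked directly against the listed values. This is a small sketch under the same assumption that the transcribed numbers are accurate; `is_strictly_decreasing` and `rises` are hypothetical names introduced for illustration.

```python
# Categories in the chart's left-to-right order, with values as listed above.
categories = [
    "Math", "Biology", "Chemistry", "Physics", "Business", "Economics",
    "Computer Science", "Psychology", "Engineering", "Other", "Health",
    "Philosophy", "History", "Law",
]
r1 = [93.5, 90.7, 89.8, 89.5, 88.3, 87.4, 85.6,
      82.8, 81.1, 80.8, 78.7, 76.1, 71.9, 65.1]
v3 = [84.2, 88.1, 80.1, 79.7, 87.4, 81.0, 79.0,
      78.7, 65.0, 76.3, 74.2, 72.5, 66.9, 55.1]


def is_strictly_decreasing(values):
    """True if every value is lower than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))


# Adjacent category pairs where DeepSeek-V3's score goes up instead of down.
rises = [
    (categories[i], categories[i + 1])
    for i in range(len(v3) - 1)
    if v3[i + 1] > v3[i]
]

print(is_strictly_decreasing(r1))  # True
print(is_strictly_decreasing(v3))  # False
print(rises)
```

If the transcribed values are right, only DeepSeek-R1's series decreases at every step, which is what one would expect if the x-axis is sorted by that model's accuracy.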

### Interpretation

The data suggests that DeepSeek-R1 is more accurate than DeepSeek-V3 across a diverse range of academic and professional categories. The largest differences, in Engineering (16.1 points), Law (10.0 points), and Physics (9.8 points), indicate that DeepSeek-R1 may be better equipped to handle the complexities of these subjects. The decrease in accuracy from left to right is consistent with the categories being ordered by DeepSeek-R1's accuracy, and it may also reflect the inherent difficulty of the categories.

The consistent outperformance of DeepSeek-R1 is compatible with a stronger underlying architecture or training methodology, although the chart alone cannot establish the cause. Further investigation into the specific challenges DeepSeek-V3 faces in its lower-performing categories, such as Law (55.1) and Engineering (65.0), could reveal areas for improvement. The chart provides a clear, quantitative comparison of the two models that can inform which model to use for a given task.