## Flowchart: Continual Learning Methods

### Overview

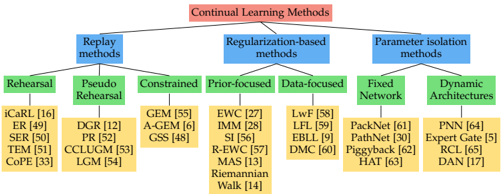

The diagram presents a hierarchical taxonomy of continual learning methods, organized into three primary categories: **Replay methods**, **Regularization-based methods**, and **Parameter isolation methods**. Each category branches into subcategories, and each subcategory lists cited example methods.

### Components

- **Main Title**: "Continual Learning Methods" (red box at the top).

- **Primary Categories** (blue boxes):
  1. **Replay methods**
  2. **Regularization-based methods**
  3. **Parameter isolation methods**

- **Subcategories** (green boxes):
  - **Replay methods**: Rehearsal, Pseudo Rehearsal, Constrained
  - **Regularization-based methods**: Prior-focused, Data-focused
  - **Parameter isolation methods**: Fixed Network, Dynamic Architectures

- **Examples** (yellow boxes with references): each subcategory lists specific algorithms or models (e.g., iCaRL, EWC, PackNet).

### Detailed Analysis

#### Replay Methods

- **Rehearsal**: iCaRL [16], ER [49], SER [50], TEM [51], CoPE [33]

- **Pseudo Rehearsal**: DGR [12], PR [52], CCLUGM [53], LGM [54]

- **Constrained**: GEM [55], A-GEM [6], GSS [48]

#### Regularization-based Methods

- **Prior-focused**: EWC [27], IMM [28], SI [56], R-EWC [57], MAS [13], Riemannian Walk [14]

- **Data-focused**: LwF [58], LFL [59], EBLL [9], DMC [60]

#### Parameter Isolation Methods

- **Fixed Network**: PackNet [61], PathNet [30], Piggyback [62], HAT [63]

- **Dynamic Architectures**: PNN [64], Expert Gate [5], RCL [65], DAN [17]
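For readers who want to query the taxonomy programmatically, the three levels listed above can be transcribed into a nested mapping. This is only a sketch: the names `TAXONOMY`, `locate`, and `count_methods` are hypothetical helpers introduced here, not part of the source figure, and citation numbers are omitted for brevity.

```python
# Taxonomy transcribed from the figure: category -> subcategory -> methods.
# "EBLL" is spelled here as the usual abbreviation of
# Encoder Based Lifelong Learning (reference [9] in the figure).
TAXONOMY = {
    "Replay methods": {
        "Rehearsal": ["iCaRL", "ER", "SER", "TEM", "CoPE"],
        "Pseudo Rehearsal": ["DGR", "PR", "CCLUGM", "LGM"],
        "Constrained": ["GEM", "A-GEM", "GSS"],
    },
    "Regularization-based methods": {
        "Prior-focused": ["EWC", "IMM", "SI", "R-EWC", "MAS", "Riemannian Walk"],
        "Data-focused": ["LwF", "LFL", "EBLL", "DMC"],
    },
    "Parameter isolation methods": {
        "Fixed Network": ["PackNet", "PathNet", "Piggyback", "HAT"],
        "Dynamic Architectures": ["PNN", "Expert Gate", "RCL", "DAN"],
    },
}


def locate(method):
    """Return (category, subcategory) for a method name, or None if absent."""
    for category, subcategories in TAXONOMY.items():
        for subcategory, methods in subcategories.items():
            if method in methods:
                return category, subcategory
    return None


def count_methods():
    """Return the number of listed methods per primary category."""
    return {
        category: sum(len(methods) for methods in subcategories.values())
        for category, subcategories in TAXONOMY.items()
    }
```

For example, `locate("GEM")` returns `("Replay methods", "Constrained")`, and `count_methods()` reports 12, 10, and 8 methods for the three categories respectively.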

### Key Observations

1. **Hierarchical Structure**: The diagram has three levels below the title: three primary categories, seven subcategories, and 30 cited methods, each grouped by its core strategy (replay, regularization, parameter isolation).

2. **Subcategory Diversity**: Replay methods split into three subcategories, while regularization-based and parameter isolation methods split into two each. Prior-focused regularization lists the most examples (six) and constrained replay the fewest (three).

3. **Example References**: Every example carries a numeric citation (e.g., [16], [27]), which points to the reference list of the source document.

### Interpretation

The diagram provides a structured overview of continual learning methodologies, highlighting how approaches are classified based on their mechanisms to address challenges like catastrophic forgetting. The taxonomy suggests:

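- **Replay methods** revisit earlier data while learning new tasks. Rehearsal stores raw samples, pseudo rehearsal regenerates them with a generative model, and constrained methods use stored samples to restrict the update on the new task rather than training on them directly.

- **Regularization-based methods** add a penalty to the loss instead of storing data. Prior-focused methods penalize changes to parameters estimated to be important for earlier tasks, while data-focused methods distill knowledge from the previous model into the updated one.

- **Parameter isolation methods** dedicate different parameters to different tasks. Fixed-network methods mask or freeze parts of a network of constant size, while dynamic architectures add capacity as new tasks arrive.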
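To make the three strategies in the list above more concrete, here is a minimal pure-Python sketch of one simplified mechanism per category. It is illustrative only and does not reproduce any cited method: `ReservoirBuffer`, `quadratic_penalty`, and `masked_sgd_step` are hypothetical names, reservoir sampling is just one possible memory policy for rehearsal, and the penalty uses a generic importance-weighted quadratic form.

```python
import random


class ReservoirBuffer:
    """Rehearsal sketch: a bounded memory of past examples.

    Assumption: reservoir sampling is used so that every example seen so far
    has the same probability of being in memory.
    """

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Replace a stored item with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k):
        """Draw up to k stored examples to mix into the current batch."""
        return self.rng.sample(self.items, min(k, len(self.items)))


def quadratic_penalty(params, old_params, importance, strength=1.0):
    """Prior-focused regularization sketch.

    Penalizes moving each parameter away from its value after the previous
    task, weighted by a per-parameter importance estimate.
    """
    return 0.5 * strength * sum(
        w * (p - q) ** 2 for p, q, w in zip(params, old_params, importance)
    )


def masked_sgd_step(params, grads, frozen, lr=0.1):
    """Parameter isolation sketch.

    Parameters marked as frozen (claimed by an earlier task) are left
    untouched; the rest take a plain gradient step.
    """
    return [p if f else p - lr * g for p, g, f in zip(params, grads, frozen)]
```

In a training loop, the buffer's samples would be added to each new-task batch, the penalty would be added to the new-task loss, and the mask would be applied at each update. How importance weights and masks are computed is exactly where the cited methods differ, so those inputs are left to the caller here.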

This categorization helps researchers position a new method relative to existing ones and spot gaps or overlaps within the field. For instance, the split of parameter isolation into "Fixed Network" and "Dynamic Architectures" separates methods that partition a network of constant size (e.g., PackNet) from methods that add capacity per task (e.g., PNN). The diagram places each method in exactly one subcategory and does not rank or compare the methods.