
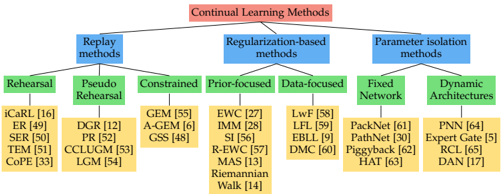

## Diagram: Continual Learning Methods

### Overview

The image is a hierarchical tree diagram that categorizes continual learning methods. A root node branches into three main categories, each of which is subdivided into more specific groups and then into individual methods. Each level of the hierarchy has its own box color.

### Components

The diagram consists of the following components:

* **Root Node:** "Continual Learning Methods" (in a red rectangular box)

* **Level 1 Branches:** Three main categories, each in a blue rectangular box:
    * "Replay methods"
    * "Regularization-based methods"
    * "Parameter isolation methods"

* **Level 2 Branches (under Replay methods):** Each in a green rectangular box:
    * "Rehearsal"
    * "Pseudo Rehearsal"
    * "Constrained"

* **Level 2 Branches (under Regularization-based methods):** Each in a green rectangular box:
    * "Prior-focused"
    * "Data-focused"

* **Level 2 Branches (under Parameter isolation methods):** Each in a green rectangular box:
    * "Fixed Network"
    * "Dynamic Architectures"

* **Level 3 Branches (Specific Methods):** Listed under each Level 2 branch, in yellow rectangular boxes, with associated numerical identifiers (e.g., "[16]", "[49]").
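The first three levels of the hierarchy can be captured in a small nested structure. The following is a minimal sketch, assuming a plain Python dictionary is an acceptable representation; the names `TAXONOMY`, `render`, and `parent_of` are hypothetical helpers introduced here for illustration and do not come from the diagram.

```python
# Hypothetical representation of the diagram's root, Level 1, and Level 2 nodes.
# Level 3 (individual methods) is intentionally left out of this sketch.
TAXONOMY = {
    "Continual Learning Methods": {
        "Replay methods": ["Rehearsal", "Pseudo Rehearsal", "Constrained"],
        "Regularization-based methods": ["Prior-focused", "Data-focused"],
        "Parameter isolation methods": ["Fixed Network", "Dynamic Architectures"],
    }
}


def render(tree, depth=0):
    """Return the tree as a list of indented lines, one node per line."""
    lines = []
    if isinstance(tree, dict):
        for name, children in tree.items():
            lines.append("  " * depth + name)
            lines.extend(render(children, depth + 1))
    else:  # a list of leaf names
        for name in tree:
            lines.append("  " * depth + name)
    return lines


def parent_of(subcategory, tree=TAXONOMY):
    """Return the Level 1 category that contains a Level 2 subcategory, or None."""
    for categories in tree.values():
        for category, subcategories in categories.items():
            if subcategory in subcategories:
                return category
    return None


if __name__ == "__main__":
    print("\n".join(render(TAXONOMY)))
```

Calling `parent_of("Constrained")` returns `"Replay methods"`, matching the placement of the "Constrained" box in the diagram.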

### Content Details

The specific methods listed under each main category are:

**Replay methods:**

* iCaRL [16]

* ER [49]

* SER [50]

* TEM [51]

* CoPE [33]

* DGR [12]

* PR [52]

* CCLUGM [53]

* LGM [54]

* GEM [55]

* A-GEM [6]

* GSS [48]

**Regularization-based methods:**

* EWC [27]

* IMM [28]

* SI [56]

* R-EWC [57]

* MAS [13]

* Riemannian Walk [14]

* LwF [58]

* LFL [59]

* EBLL [9]

* DMC [60]

**Parameter isolation methods:**

* PackNet [61]

* PathNet [30]

* Piggyback [62]

* HAT [63]

* PNN [64]

* Expert Gate [5]

* RCL [65]

* DAN [17]

### Key Observations

The diagram gives a structured overview of continual learning methods, grouped into replay, regularization-based, and parameter isolation families. The bracketed numbers next to each method (e.g., "[16]") appear to be citation indices into the reference list of the source document.

### Interpretation

The diagram illustrates the diverse range of techniques developed to tackle the problem of continual learning, where a model learns new tasks without forgetting previously learned ones. The three main categories represent fundamentally different strategies:

* **Replay methods** attempt to mitigate forgetting by replaying examples from previous tasks.

* **Regularization-based methods** aim to constrain the model's updates to preserve knowledge from previous tasks.

* **Parameter isolation methods** seek to allocate specific parameters or modules to different tasks, preventing interference.
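The three strategies above can be illustrated with toy code. This is a minimal sketch under stated assumptions, not an implementation of any specific method in the diagram: the names `ReplayBuffer`, `quadratic_penalty`, and `masked_update` are hypothetical, parameters are plain lists of floats, and the per-parameter `importance` weights and per-task `mask` are assumed to be supplied by the caller.

```python
import random


class ReplayBuffer:
    """Replay: keep a bounded, uniformly sampled memory of past examples.

    Uses reservoir sampling so every example seen so far has the same
    chance of being in the buffer (an illustrative choice, not the
    storage policy of any particular method in the diagram).
    """

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Replace a stored item with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k):
        """Draw up to k stored examples to mix into the current batch."""
        return self.rng.sample(self.items, min(k, len(self.items)))


def quadratic_penalty(params, old_params, importance, strength=1.0):
    """Regularization: penalize drift from parameters learned on earlier tasks.

    `importance` holds one non-negative weight per parameter (assumed given).
    """
    return 0.5 * strength * sum(
        w * (p - q) ** 2 for p, q, w in zip(params, old_params, importance)
    )


def masked_update(params, grads, mask, lr=0.1):
    """Parameter isolation: update only parameters assigned to the current task."""
    return [p - lr * g if m else p for p, g, m in zip(params, grads, mask)]
```

For example, `quadratic_penalty([1.0, 2.0], [0.0, 2.0], [2.0, 5.0])` evaluates to `1.0`, because only the first parameter has moved, and `masked_update([1.0, 1.0], [1.0, 1.0], [True, False], lr=0.5)` returns `[0.5, 1.0]`, leaving the parameter outside the mask untouched.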

The branching structure places more specific techniques under broader categories, and the numerical identifiers tie each method to associated research literature. The diagram is purely a categorization scheme: it provides no quantitative data or performance comparisons.