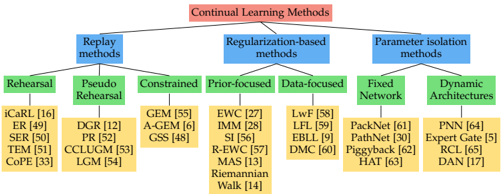

## Taxonomy Diagram: Continual Learning Methods

### Overview

This image is a hierarchical taxonomy diagram (tree structure) that classifies various "Continual Learning Methods." It organizes the field into three primary methodological branches, which are further subdivided into specific approaches and individual algorithms or techniques, each accompanied by a citation number in brackets.

### Components/Axes

The diagram is structured as a top-down tree with color-coded boxes indicating different levels of the hierarchy.

* **Root Node (Top Level):**
  * **Label:** "Continual Learning Methods"
  * **Color:** Red box with black text.
  * **Position:** Top-center of the diagram.
* **First-Level Branches (Primary Categories):**
  * Three blue boxes branch directly from the root node.
  * **Left Branch:** "Replay"
  * **Center Branch:** "Regularization-based methods"
  * **Right Branch:** "Parameter isolation methods"
* **Second-Level Branches (Sub-Categories):**
  * Green boxes branch from the primary categories.
  * From **"Replay"**:
    * "Rehearsal"
    * "Pseudo Rehearsal"
  * From **"Regularization-based methods"**:
    * "Constrained"
    * "Prior-focused"
    * "Data-focused"
  * From **"Parameter isolation methods"**:
    * "Fixed Network"
    * "Dynamic Architectures"
* **Leaf Nodes (Specific Methods):**
  * Yellow boxes contain the names of specific algorithms or techniques, each followed by a citation number in square brackets (e.g., `[16]`). These are the terminal nodes of the tree.

### Detailed Analysis

The diagram lists specific methods under each sub-category. Below is a complete transcription of the hierarchy and all of its leaf nodes.

**1. Replay**

* **1.1 Rehearsal**
  * iCaRL [16]
  * ER [41]
  * SER [50]
  * TEM [51]
  * CoPE [33]
* **1.2 Pseudo Rehearsal**
  * DGR [12]
  * PR [22]
  * CCLUGM [53]
  * LGM [54]

**2. Regularization-based methods**

* **2.1 Constrained**
  * GEM [55]
  * A-GEM [6]
  * GSS [48]
* **2.2 Prior-focused**
  * EWC [27]
  * IMM [28]
  * SI [56]
  * R-EWC [57]
  * MAS [53]
  * Riemannian Walk [14]
* **2.3 Data-focused**
  * LwF [58]
  * LFL [59]
  * EBL [9]
  * DMC [60]

**3. Parameter isolation methods**

* **3.1 Fixed Network**
  * PackNet [31]
  * PathNet [30]
  * Piggyback [62]
  * HAT [63]
* **3.2 Dynamic Architectures**
  * PNN [64]
  * Expert Gate [5]
  * RCL [65]
  * DAN [17]

### Key Observations

* **Structural Clarity:** Below the root, the taxonomy uses a clear three-tier hierarchy (Method Type → Approach → Specific Algorithm) to organize a complex research field.

* **Citation Density:** Every specific method is accompanied by a citation number, indicating this diagram is likely from a survey paper and serves as a visual index to the literature.

* **Asymmetry in Branching:** "Regularization-based methods" is the largest branch, with three sub-approaches and 13 listed methods. "Replay" and "Parameter isolation methods" each have two sub-approaches, with 9 and 8 methods respectively.

* **Visual Grouping:** The consistent color scheme (Red → Blue → Green → Yellow) guides the eye through the levels of categorization.
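The branch counts stated under Key Observations can be checked by encoding the transcribed hierarchy as a nested dictionary and counting its leaves. This is a small illustrative sketch: the names `TAXONOMY` and `branch_summary` are hypothetical, and the citation numbers are left out because only the tree shape matters here.

```python
# Transcribed taxonomy: primary category -> sub-category -> leaf methods.
TAXONOMY = {
    "Replay": {
        "Rehearsal": ["iCaRL", "ER", "SER", "TEM", "CoPE"],
        "Pseudo Rehearsal": ["DGR", "PR", "CCLUGM", "LGM"],
    },
    "Regularization-based methods": {
        "Constrained": ["GEM", "A-GEM", "GSS"],
        "Prior-focused": ["EWC", "IMM", "SI", "R-EWC", "MAS", "Riemannian Walk"],
        "Data-focused": ["LwF", "LFL", "EBL", "DMC"],
    },
    "Parameter isolation methods": {
        "Fixed Network": ["PackNet", "PathNet", "Piggyback", "HAT"],
        "Dynamic Architectures": ["PNN", "Expert Gate", "RCL", "DAN"],
    },
}


def branch_summary(taxonomy):
    """Return {category: (number of sub-categories, number of leaf methods)}."""
    return {
        category: (len(subs), sum(len(methods) for methods in subs.values()))
        for category, subs in taxonomy.items()
    }


if __name__ == "__main__":
    summary = branch_summary(TAXONOMY)
    for category, (n_subs, n_methods) in summary.items():
        print(f"{category}: {n_subs} sub-approaches, {n_methods} methods")
    print("Total leaf methods:", sum(n for _, n in summary.values()))
```

Running it reports 2/9, 3/13 and 2/8 for the three branches, 30 leaf methods in total.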

### Interpretation

This diagram provides a structured conceptual map of the continual learning landscape as of its publication. It suggests that the field has evolved along three core philosophical lines:

1. **Replay:** Methods that mitigate catastrophic forgetting by revisiting examples from earlier tasks, either stored raw samples (Rehearsal) or samples synthesized by a generative model (Pseudo Rehearsal).

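2. **Regularization:** Methods that add a penalty or constraint to the learning objective so that training on a new task does not overwrite knowledge of previous tasks. This is the most diverse branch, split into approaches that impose explicit constraints on parameter updates (Constrained), penalize changes to parameters estimated to be important for earlier tasks (Prior-focused), or keep the new model's outputs close to those of the previous model, for example via knowledge distillation as in LwF (Data-focused).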

3. **Parameter Isolation:** Methods that allocate separate network resources (either in a fixed architecture or a dynamically growing one) to different tasks.
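The replay strategy in item 1 can be sketched as a fixed-size memory of past examples that is mixed into each new training batch. This is a minimal sketch under stated assumptions: `ReservoirBuffer` and `rehearsal_batch` are hypothetical names, and reservoir sampling is one common buffer-update rule, not necessarily the one used by any method listed in the diagram. Pseudo rehearsal would instead draw the replayed examples from a generative model, which is not shown here.

```python
import random


class ReservoirBuffer:
    """Hypothetical fixed-capacity memory of past examples, filled by reservoir sampling.

    Every example seen so far has the same chance of being stored,
    without knowing the length of the data stream in advance.
    """

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.memory = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        self.seen += 1
        if len(self.memory) < self.capacity:
            self.memory.append(example)
        else:
            # Overwrite a stored slot with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.memory[j] = example

    def sample(self, k):
        """Draw up to k stored examples, without replacement, to replay."""
        return self.rng.sample(self.memory, min(k, len(self.memory)))


def rehearsal_batch(new_batch, buffer, replay_size):
    """Mix replayed old examples into the current batch, then store the new ones."""
    mixed = list(new_batch) + buffer.sample(replay_size)
    for example in new_batch:
        buffer.add(example)
    return mixed


if __name__ == "__main__":
    buffer = ReservoirBuffer(capacity=100)
    task_a = [(i, "A") for i in range(500)]
    for start in range(0, len(task_a), 10):
        rehearsal_batch(task_a[start:start + 10], buffer, replay_size=10)
    # A task-B batch now carries 10 replayed task-A examples alongside it.
    batch = rehearsal_batch([(i, "B") for i in range(10)], buffer, replay_size=10)
    print(sum(1 for _, task in batch if task == "A"), "replayed examples")
```

Sampling from the buffer before adding the new batch keeps replayed items strictly from the past, which is the point of rehearsal.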
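For the prior-focused sub-branch of item 2, protecting important parameters can be illustrated with an EWC-style quadratic penalty, `(lam / 2) * sum_i F_i * (theta_i - theta_star_i)^2`, where `theta_star` holds the weights saved after the previous task and `F_i` is a per-parameter importance (in EWC, a diagonal Fisher information estimate). This sketch uses plain Python lists; the function names and the strength `lam` are illustrative assumptions, and the importance values are taken as given rather than computed from data.

```python
def ewc_penalty(params, old_params, fisher, lam):
    """EWC-style penalty: (lam / 2) * sum_i F_i * (theta_i - theta_star_i)^2.

    params:     current parameter values theta
    old_params: values theta_star saved after training on the previous task
    fisher:     per-parameter importance F_i (assumed precomputed)
    lam:        regularization strength (assumed hyperparameter)
    """
    if not (len(params) == len(old_params) == len(fisher)):
        raise ValueError("params, old_params and fisher must have equal length")
    return 0.5 * lam * sum(
        f * (p - p_old) ** 2 for p, p_old, f in zip(params, old_params, fisher)
    )


def ewc_penalty_grad(params, old_params, fisher, lam):
    """Gradient of the penalty: lam * F_i * (theta_i - theta_star_i) per parameter.

    It pulls important weights (large F_i) back toward their old values and
    leaves unimportant weights (F_i = 0) free to change.
    """
    return [lam * f * (p - p_old) for p, p_old, f in zip(params, old_params, fisher)]


def regularized_loss(new_task_loss, params, old_params, fisher, lam):
    """Loss on the new task plus the penalty that anchors important weights."""
    return new_task_loss + ewc_penalty(params, old_params, fisher, lam)


if __name__ == "__main__":
    # The first weight is important (F = 1), the second is not (F = 0).
    theta, theta_star, fisher = [1.0, 2.0], [0.0, 0.0], [1.0, 0.0]
    print(ewc_penalty(theta, theta_star, fisher, lam=2.0))
    print(ewc_penalty_grad(theta, theta_star, fisher, lam=2.0))
```

Only the drift of the important weight is penalized; moving the second weight costs nothing, which is what leaves room for plasticity on the new task.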
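Parameter isolation in a fixed network (item 3) can be sketched as bookkeeping over which task owns each weight. A task trains only the weights assigned to it, and at test time it uses its own weights plus the frozen weights of earlier tasks. This is a hypothetical simplification in the spirit of mask-based fixed-network methods such as PackNet: the class and function names are illustrative, task ids are assumed to be integers in training order, and the pruning step that PackNet uses to free weights after each task is omitted. A dynamic architecture would instead grow the parameter vector as new tasks arrive.

```python
class FixedNetworkAllocator:
    """Hypothetical allocator of disjoint weight subsets in a fixed-size network.

    owner[i] is the task id that trained weight i, or None if it is still free.
    """

    def __init__(self, num_params):
        self.owner = [None] * num_params

    def allocate(self, task_id, fraction):
        """Give task_id the given fraction of the currently free weights."""
        free = [i for i, o in enumerate(self.owner) if o is None]
        chosen = free[: int(len(free) * fraction)]
        for i in chosen:
            self.owner[i] = task_id
        return chosen

    def trainable_mask(self, task_id):
        """Only the task's own weights receive gradient updates."""
        return [o == task_id for o in self.owner]

    def inference_mask(self, task_id):
        """At test time, task t uses its own weights and those of earlier tasks."""
        return [o is not None and o <= task_id for o in self.owner]


def masked_update(weights, grads, mask, lr):
    """SGD step that leaves weights outside the mask (other tasks') untouched."""
    return [w - lr * g if m else w for w, g, m in zip(weights, grads, mask)]


if __name__ == "__main__":
    alloc = FixedNetworkAllocator(num_params=8)
    alloc.allocate(task_id=0, fraction=0.5)  # task 0 takes weights 0-3
    alloc.allocate(task_id=1, fraction=0.5)  # task 1 takes weights 4-5
    weights = masked_update([1.0] * 8, [1.0] * 8, alloc.trainable_mask(1), lr=0.5)
    print(weights)                  # task 0's weights are unchanged
    print(alloc.inference_mask(1))  # weights 0-5 are active for task 1
```

Because earlier tasks' weights are never updated, forgetting is avoided by construction; the cost is that free capacity shrinks with every task, which is the scaling concern raised below.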

The taxonomy implies that choosing a continual learning strategy involves a fundamental trade-off between these paradigms: replaying data (which can incur memory and privacy costs), regularizing changes (which can limit plasticity on new tasks), or dedicating capacity (which may not scale efficiently as the number of tasks grows). The long list of methods under each branch reflects active research and a variety of technical solutions within each strategy. The diagram serves as a useful map for locating specific works within the field's broader framework.