EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

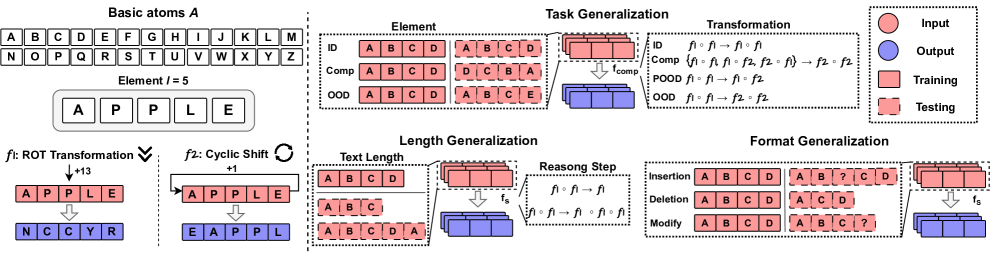

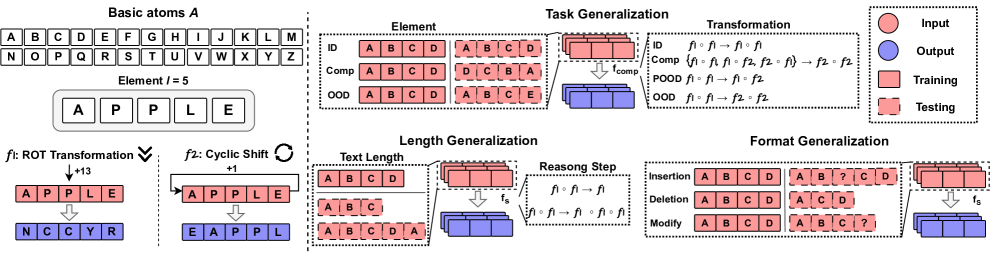

## Diagram: Text Transformation Task Generalization Framework

### Overview

This image is a technical diagram illustrating a framework for evaluating generalization in text transformation tasks. It defines basic atomic elements, specific transformation functions, and then categorizes different types of generalization challenges (Task, Length, Format) that a model might face. The diagram uses a consistent color-coding scheme (defined in a legend) to distinguish between input, output, training, and testing data across various scenarios.

### Components/Axes

The diagram is segmented into several distinct regions:

1. **Top-Left: Basic Definitions**

* **Basic atoms A**: A grid containing the 26 uppercase English letters (A-Z).

* **Element l = 5**: An example element (word) "APPLE" decomposed into its constituent letters: A, P, P, L, E.

* **Transformation Definitions**:

* **f1: ROT Transformation**: Illustrated with a downward arrow and the label "+13". It shows the input "APPLE" transforming to the output "N C C Y R". This represents a ROT13 cipher (each letter shifted 13 places in the alphabet).

* **f2: Cyclic Shift**: Illustrated with a circular arrow and the label "+1". It shows the input "APPLE" transforming to the output "E A P P L". This represents a cyclic shift where the last letter moves to the front.

2. **Top-Right: Legend**

* A box containing four colored markers (two circles and two squares) with labels:

* **Red Circle**: Input

* **Blue Circle**: Output

* **Light Red Square**: Training

* **Light Blue Square**: Testing

3. **Main Right Section: Task Generalization**

This section is divided into four panels, each exploring a different dimension of generalization.

* **Element (Top-Left of this section)**: A table with three rows (ID, Comp, OOD) and two columns (Training, Testing).

| Category | Training | Testing |
|----------|----------|---------|
| ID (In-Distribution) | A B C D -> A B C D | A B C D -> A B C D |
| Comp (Compositional) | A B C D -> A B C D | D C B A -> A B C D |
| OOD (Out-Of-Distribution) | A B C D -> A B C D | A B C E -> A B C E |

* **Transformation (Top-Right of this section)**: A table with four rows (ID, Comp, POOD, OOD) and columns describing function composition.

| Category | Training | Testing |
|----------|----------|---------|
| ID | f1 | f1 |
| Comp | {f1, f1 o f1, f2, f2 o f1} | f2 o f2 |
| POOD (Probabilistic OOD) | f1, f2 | f1, f2 |
| OOD | f1, f2 | f2, f2 |

A diagram to the right shows a **Training** block (light red) feeding into a function `f_comp`, which produces a **Testing** block (light blue).

* **Length Generalization (Bottom-Left of this section)**:

* A **Text Length** table showing training on length 4 ("A B C D") and testing on lengths 3 ("A B C") and 5 ("A B C D A").

| Training Length | Testing Lengths |
|-----------------|-----------------|
| 4 (A B C D) | 3 (A B C), 5 (A B C D A) |

* A diagram showing a **Training** block (light red) feeding into a function `f_s`, which produces a **Testing** block (light blue).

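* A **Reasoning Step** box showing the three-step composition `f1 o f1 o f1` next to the two-step composition `f1 o f1`, i.e. a change in the number of reasoning steps. Note that for ROT13, `f1 o f1` is the identity, so `f1 o f1 o f1` is equivalent to a single `f1`.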

* **Format Generalization (Bottom-Right of this section)**:

* Three rows illustrating different format operations:

| Operation | Training | Testing |
|-----------|----------|---------|
| Insertion | A B C D -> A B C D | A B C D -> A B γ C D |
| Deletion | A B C D -> A B C D | A B C D -> A C D |
| Modify | A B C D -> A B C D | A B C D -> A B C ? |

* A diagram showing a **Training** block (light red) feeding into a function `f_s`, which produces a **Testing** block (light blue).
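The two transformations defined in the Basic Definitions panel can be sketched in a few lines of Python. This is a minimal illustration, not code from the source: the function names `rot` and `cyclic_shift` and the parameters `n` and `k` are assumptions, and only the "+13" and "+1" cases are actually shown in the diagram.

```python
import string

ALPHABET = string.ascii_uppercase  # the 26 basic atoms A-Z


def rot(element: str, n: int = 13) -> str:
    """f1 (assumed name): shift every letter n places forward, wrapping past Z."""
    return "".join(ALPHABET[(ALPHABET.index(ch) + n) % 26] for ch in element)


def cyclic_shift(element: str, k: int = 1) -> str:
    """f2 (assumed name): rotate the sequence right by k, so trailing letters move to the front."""
    if not element:
        return element
    k %= len(element)
    return element[-k:] + element[:-k] if k else element


print(rot("APPLE"))           # NCCYR
print(cyclic_shift("APPLE"))  # EAPPL
```

Both outputs match the examples in the diagram ("N C C Y R" and "E A P P L").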

### Detailed Analysis

* **Spatial Grounding**: The legend is positioned in the top-right corner of the entire image. The "Task Generalization" title is centered above its subsections. Within "Task Generalization," the "Element" and "Transformation" tables are side-by-side at the top, while "Length Generalization" and "Format Generalization" are side-by-side at the bottom.

* **Color-Coding Consistency**: The red/blue color scheme from the legend is applied consistently. For example, in the "Element" table, the input sequences "A B C D" are in red boxes, and the output sequences are in blue boxes. The training data blocks are light red, and testing data blocks are light blue in the flow diagrams.

* **Transformation Examples**: The f1 (ROT13) transformation is shown to map A->N, P->C, L->Y, E->R. The f2 (Cyclic Shift +1) transformation maps the sequence [A,P,P,L,E] to [E,A,P,P,L].

* **Generalization Taxonomy**: The diagram explicitly categorizes generalization challenges into:

1. **Element Generalization**: Testing on sequences seen in training (ID), new arrangements of seen symbols (Comp), and sequences containing symbols not seen in training (OOD).

2. **Transformation Generalization**: Testing on seen vs. unseen functions or compositions of functions.

3. **Length Generalization**: Testing on sequences of lengths not seen during training.

4. **Format Generalization**: Testing on inputs/outputs with structural modifications (insertion, deletion, substitution) not present in training.
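As a rough sketch of how the element and format axes could be operationalized, the snippet below labels a test element relative to a training set and applies the three format operations. The labeling rule is one plausible reading of the ID/Comp/OOD rows, not a definition taken from the source, and all function names are hypothetical.

```python
from __future__ import annotations


def classify_element(test: str, training: set[str]) -> str:
    """Label a test element as ID, Comp, or OOD (assumed interpretation)."""
    seen_atoms = set("".join(training))
    if test in training:
        return "ID"    # exact sequence was seen in training
    if set(test) <= seen_atoms:
        return "Comp"  # only seen atoms, in a new arrangement
    return "OOD"       # at least one atom never seen in training


def insert_token(seq: list[str], pos: int, token: str) -> list[str]:
    """Insertion: add a token at position pos."""
    return seq[:pos] + [token] + seq[pos:]


def delete_token(seq: list[str], pos: int) -> list[str]:
    """Deletion: drop the token at position pos."""
    return seq[:pos] + seq[pos + 1:]


def modify_token(seq: list[str], pos: int, token: str) -> list[str]:
    """Modify: replace the token at position pos."""
    return seq[:pos] + [token] + seq[pos + 1:]


training = {"ABCD"}
print([classify_element(t, training) for t in ("ABCD", "DCBA", "ABCE")])
# ['ID', 'Comp', 'OOD']

base = list("ABCD")
print(" ".join(insert_token(base, 2, "γ")))  # A B γ C D
print(" ".join(delete_token(base, 1)))       # A C D
print(" ".join(modify_token(base, 3, "?")))  # A B C ?
```

The positions and tokens are chosen to reproduce the Testing column of the format table; the diagram itself does not specify how they are selected.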

### Key Observations

* The framework is designed to systematically probe a model's ability to generalize beyond its training distribution across multiple axes (elements, functions, length, format).

* The "Comp" (Compositional) and "POOD" categories suggest a focus on testing systematic understanding, not just memorization. For instance, "Comp" in Transformation tests if the model can apply a novel composition of known functions (`f2 o f2`).

* The "Reasoning Step" box highlights that for certain transformations (like ROT13), understanding the algebraic property (`f1 o f1 = identity`) is key to generalization.

* The "Format Generalization" section tests robustness to noise or variations in the input/output structure, which is a common real-world challenge.
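The compositional case and the ROT13 identity property can be checked directly. The sketch below assumes a hypothetical `compose` helper in which functions apply right to left, so `compose(f, g)(x)` equals `f(g(x))`; `f1` and `f2` are compact re-implementations of the diagram's two transformations for uppercase input.

```python
from functools import reduce
from typing import Callable

Transform = Callable[[str], str]


def f1(s: str) -> str:
    """ROT13 on uppercase letters."""
    return "".join(chr((ord(c) - 65 + 13) % 26 + 65) for c in s)


def f2(s: str) -> str:
    """Cyclic shift by one: the last letter moves to the front."""
    return s[-1:] + s[:-1]


def compose(*fs: Transform) -> Transform:
    """Right-to-left composition: compose(f, g)(x) == f(g(x))."""
    return lambda x: reduce(lambda acc, f: f(acc), reversed(fs), x)


print(compose(f2, f2)("APPLE"))      # LEAPP  (the held-out composition f2 o f2)
print(compose(f1, f1)("APPLE"))      # APPLE  (f1 o f1 is the identity)
print(compose(f1, f1, f1)("APPLE"))  # NCCYR  (same as a single f1)
```

Because ROT13 acts on letter values and the cyclic shift acts on positions, the two commute: `compose(f2, f1)` and `compose(f1, f2)` give the same result on any uppercase string.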

### Interpretation

This diagram outlines a rigorous evaluation protocol for artificial intelligence systems, particularly those designed for symbolic reasoning or algorithmic tasks involving text. It moves beyond simple accuracy metrics on held-out data to assess **systematic generalization**: the ability to apply learned rules to novel situations in a compositional and predictable way.

The framework suggests that true understanding of a task like text transformation requires more than memorizing input-output pairs. A robust system should:

1. Recognize that the underlying rules (like ROT13) apply uniformly to all elements, including unseen ones (OOD Element).

2. Understand functions as composable objects, enabling it to execute or predict the outcome of novel function chains (Comp/POOD Transformation).

3. Apply rules independently of sequence length (Length Generalization).

4. Maintain functional integrity despite superficial format changes (Format Generalization).

The inclusion of "Reasoning Step" implies that the ideal system would not just perform the transformation but also internalize the underlying logic (e.g., the self-inverse property of ROT13). This diagram is likely from a research paper or technical report focused on measuring and improving the **systematicity**, **compositionality**, and **robustness** of neural networks or other AI models on algorithmic tasks.
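To make the contrast between memorization and rule-following concrete, here is a toy evaluation harness. Everything in it is an illustrative assumption: the splits loosely follow the diagram's examples, the "memorizer" is a lookup table standing in for a model that only stores training pairs, and the scores describe these toy stand-ins, not any real system.

```python
from typing import Callable, Dict, List


def f1(s: str) -> str:
    """ROT13 on uppercase letters (the target transformation)."""
    return "".join(chr((ord(c) - 65 + 13) % 26 + 65) for c in s)


# Hypothetical training inputs and test splits.
TRAIN: List[str] = ["ABCD"]
SPLITS: Dict[str, List[str]] = {
    "ID": ["ABCD"],
    "Comp": ["DCBA"],
    "OOD": ["ABCE"],
    "Length": ["ABC", "ABCDA"],
}


def make_memorizer(train_inputs: List[str]) -> Callable[[str], str]:
    """Toy model that only recalls outputs for inputs it has seen."""
    table = {x: f1(x) for x in train_inputs}
    return lambda x: table.get(x, "")


def exact_match(model: Callable[[str], str], inputs: List[str]) -> float:
    """Fraction of inputs whose prediction equals the true f1 output."""
    return sum(model(x) == f1(x) for x in inputs) / len(inputs)


def evaluate(model: Callable[[str], str]) -> Dict[str, float]:
    """Exact-match accuracy on every split."""
    return {name: exact_match(model, xs) for name, xs in SPLITS.items()}


memorizer_scores = evaluate(make_memorizer(TRAIN))
rule_scores = evaluate(f1)
print(memorizer_scores)  # {'ID': 1.0, 'Comp': 0.0, 'OOD': 0.0, 'Length': 0.0}
print(rule_scores)       # {'ID': 1.0, 'Comp': 1.0, 'OOD': 1.0, 'Length': 1.0}
```

Reporting accuracy per split, not as a single aggregate, is what exposes the gap: the lookup table is perfect in-distribution and fails everywhere else.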
