## Line Graph: Probability Trends vs. Number of Heads Disabled

### Overview

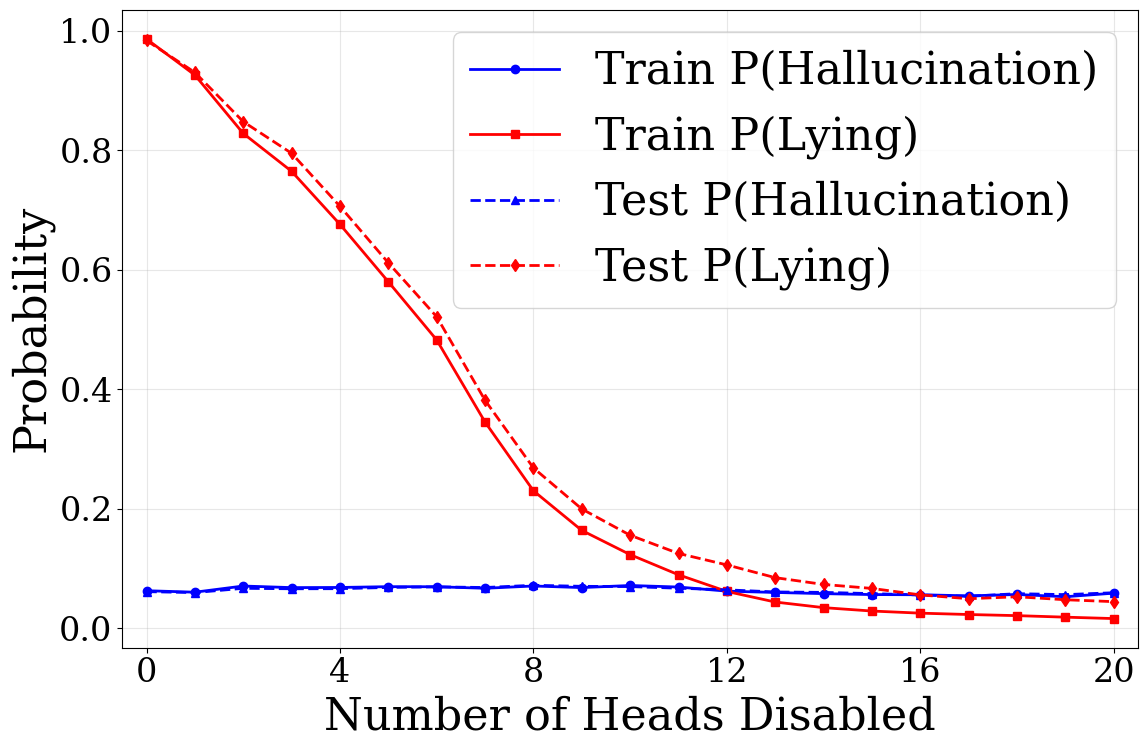

The graph illustrates how the probability of two behaviors ("Hallucination" and "Lying") changes in training and testing datasets as the number of "heads disabled" increases from 0 to 20. Four lines represent combinations of training/testing data and behavior types, with distinct trends observed for each.

### Components/Axes

- **X-axis**: "Number of Heads Disabled" (0 to 20, integer increments).

- **Y-axis**: "Probability" (0.0 to 1.0, linear scale).

- **Legend**: Located in the top-right corner, with four entries:

  - **Solid Blue**: Train P(Hallucination)

  - **Solid Red**: Train P(Lying)

  - **Dashed Blue**: Test P(Hallucination)

  - **Dashed Red**: Test P(Lying)

### Detailed Analysis

1. **Train P(Lying) (Solid Red)**:

   - Starts at **~1.0** when 0 heads are disabled.

   - Declines sharply to **~0.02** by 20 heads disabled.

   - Steepest drop occurs between 0–8 heads disabled.

2. **Test P(Lying) (Dashed Red)**:

   - Begins at **~0.95** (0 heads) and decreases gradually.

   - Reaches **~0.03** by 20 heads disabled.

   - Less steep decline than training data.

3. **Train P(Hallucination) (Solid Blue)**:

   - Remains relatively flat at **~0.07–0.08** across all heads disabled.

   - Minor fluctuations but no significant trend.

4. **Test P(Hallucination) (Dashed Blue)**:

   - Starts at **~0.05** (0 heads) and stays nearly constant.

   - Slight dip to **~0.04** by 20 heads disabled.

### Key Observations

- **Lying Probability Decline**: In both training and test data, lying probability falls as more heads are disabled, from ~1.0 to ~0.02 (train) and from ~0.95 to ~0.03 (test). The training curve drops more steeply.

- **Hallucination Stability**: Hallucination probabilities stay within roughly 0.04–0.08 regardless of how many heads are disabled, suggesting that the disabled heads have little effect on this behavior.

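- **Test vs. Train Divergence**: Lying probability declines more slowly on test data than on training data. This may indicate that the effect of disabling these heads is partly fitted to the training data, although both curves end at nearly the same level (~0.02 vs. ~0.03).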
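The train/test comparison above can be checked with quick arithmetic. The Python sketch below uses only the approximate endpoint values read off the graph, so its inputs are estimates rather than the underlying data. The names `ENDPOINTS` and `summarize_drop` are illustrative and do not come from the original analysis.

```python
# Approximate P(Lying) at 0 and 20 heads disabled, as read off the graph.
# These are visual estimates, not the underlying measurements.
ENDPOINTS = {
    "Train P(Lying)": (1.00, 0.02),
    "Test P(Lying)": (0.95, 0.03),
}


def summarize_drop(start: float, end: float) -> dict:
    """Return the absolute and relative decrease between two probabilities."""
    absolute = start - end
    relative = absolute / start  # fraction of the starting probability removed
    return {"absolute": absolute, "relative": relative}


summary = {name: summarize_drop(*pair) for name, pair in ENDPOINTS.items()}

for name, stats in summary.items():
    print(f"{name}: absolute drop {stats['absolute']:.2f}, "
          f"relative drop {stats['relative']:.1%}")
```

With these estimates, the absolute drop is about 0.98 on training data and about 0.92 on test data, so the two curves differ mainly in how quickly they fall rather than in where they end.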

### Interpretation

The data suggest that disabling heads substantially reduces the model's tendency to lie, with the strongest effect on training data. The stability of the hallucination probabilities implies that this behavior is much less sensitive to head removal. The slower decline in lying probability on test data points to possible overfitting: the effect of head removal appears stronger on the training data than on the test data. These results could inform strategies for model interpretability or robustness optimization.