## Technical Diagram: Multi-Head Attention Layer Analysis

### Overview

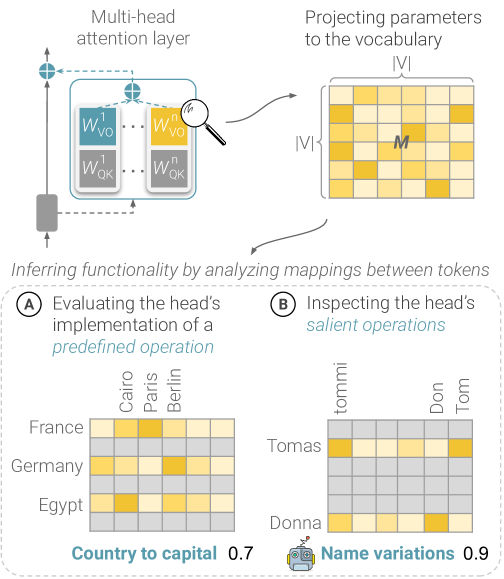

This image is a technical diagram illustrating a method for analyzing the functionality of attention heads within a transformer's multi-head attention layer. It demonstrates how the learned parameters of an attention head can be projected to the vocabulary space to create a mapping matrix (M), which is then analyzed to infer the head's specific function (e.g., "Country to capital" or "Name variations").

### Components/Axes

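The diagram has a top section and a bottom section; the bottom section contains two sub-diagrams, **A** and **B**. The four numbered parts below cover each in turn: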

**1. Header (Top Section):**

* **Left Component:** Labeled "Multi-head attention layer". It depicts a standard multi-head attention mechanism with:

    * Input and output arrows.

    * A box containing weight matrices: `W_QK^1` through `W_QK^n` (Query-Key weights for n heads) and `W_VO^1` through `W_VO^n` (Value-Output weights for n heads).

    * A magnifying glass icon focused on one head, indicating analysis.

* **Right Component:** Labeled "Projecting parameters to the vocabulary". It shows:

    * A matrix labeled `M`.

    * Dimension labels of `|V|` (vocabulary size) on both the vertical and horizontal axes, so `M` is a `|V| × |V|` matrix.

    * Cells colored in shades of yellow and gray, which appear to encode the strength of each token-to-token mapping.

    * An arrow pointing from the magnified head in the left component to this matrix.
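The projection step can be sketched in a few lines. The diagram does not give a formula for `M`, so the construction below is an assumption: it takes `M = E · W_VO · U`, where `E` (token embeddings, `|V| × d`) and `U` (unembedding, `d × |V|`) are hypothetical names, `W_VO` is treated as a `d × d` matrix, and all three are random stand-ins for real model weights.

```python
import numpy as np

def project_head_to_vocab(E, W_VO, U):
    """Return a |V| x |V| matrix; row i scores how strongly
    source token i maps to each target token (assumed construction)."""
    return E @ W_VO @ U

# Random stand-ins for real weights (hypothetical sizes).
rng = np.random.default_rng(0)
vocab_size, d_model = 6, 4
E = rng.normal(size=(vocab_size, d_model))    # assumed embedding matrix
W_VO = rng.normal(size=(d_model, d_model))    # one head's value-output weights
U = rng.normal(size=(d_model, vocab_size))    # assumed unembedding matrix

M = project_head_to_vocab(E, W_VO, U)
print(M.shape)
```

With a real model, `vocab_size` is typically in the tens of thousands, so `M` is usually inspected through selected rows and columns rather than materialized and viewed whole.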

**2. Main Chart (Bottom Section):**

* **Title:** "Inferring functionality by analyzing mappings between tokens"

* This section is divided into two side-by-side sub-diagrams, labeled **A** and **B**.

**3. Sub-diagram A (Bottom Left):**

* **Title:** "A) Evaluating the head's implementation of a *predefined operation*"

* **Chart Type:** Heatmap.

* **Y-axis (Rows):** Country names: "France", "Germany", "Egypt".

* **X-axis (Columns):** Capital city names: "Cairo", "Paris", "Berlin".

* **Legend:** Located at the bottom. Label: "Country to capital". Associated numerical value: `0.7`. A small icon of a building (likely representing a capital) is present.

* **Data Points (Heatmap Cells):** The grid shows varying intensity of yellow fill. The strongest (brightest yellow) mappings appear to be:

    * France → Paris

    * Germany → Berlin

    * Egypt → Cairo
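One way a score like the `0.7` in panel A could be computed is sketched below. This is an assumption, not the figure's stated metric: the hypothetical `relation_score` returns the fraction of source tokens whose expected target is among the top-`k` entries of their row in `M`. The matrix values are invented for illustration.

```python
def relation_score(M, rows, cols, pairs, k=1):
    """Fraction of (source, target) pairs whose target is among
    the k largest entries in the source's row of M."""
    hits = 0
    for source, target in pairs:
        row = M[rows.index(source)]
        # Column tokens sorted by descending mapping strength for this source.
        ranked = sorted(cols, key=lambda c: row[cols.index(c)], reverse=True)
        hits += target in ranked[:k]
    return hits / len(pairs)

rows = ["France", "Germany", "Egypt"]
cols = ["Cairo", "Paris", "Berlin"]
M = [
    [0.1, 0.9, 0.3],  # France
    [0.2, 0.4, 0.8],  # Germany
    [0.7, 0.1, 0.2],  # Egypt
]  # toy values, not read from the figure
pairs = [("France", "Paris"), ("Germany", "Berlin"), ("Egypt", "Cairo")]

print(relation_score(M, rows, cols, pairs))  # 1.0 for these toy values
```

A score below 1.0, such as the figure's `0.7`, would under this assumed metric mean that some expected targets fall outside the top `k` for their source token.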

**4. Sub-diagram B (Bottom Right):**

* **Title:** "B) Inspecting the head's *salient operations*"

* **Chart Type:** Heatmap.

* **Y-axis (Rows):** Name tokens: "Tomas", "Donna".

* **X-axis (Columns):** Name variation tokens: "tommi", "Don", "Tom".

* **Legend:** Located at the bottom. Label: "Name variations". Associated numerical value: `0.9`. A small icon of two people is present.

* **Data Points (Heatmap Cells):** The grid shows varying intensity of yellow fill. The strongest mappings appear to be:

    * Tomas → tommi

    * Tomas → Tom

    * Donna → Don
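The exploratory inspection in panel B amounts to reading off the strongest entries of `M` without fixing a relation in advance. A minimal sketch, assuming `M` is available as a small nested list with labeled rows and columns; `top_mappings` is a hypothetical helper and the values are invented for illustration.

```python
def top_mappings(M, rows, cols, k=3):
    """Return the k strongest (source, target, strength) entries of M,
    strongest first."""
    entries = [
        (rows[i], cols[j], M[i][j])
        for i in range(len(rows))
        for j in range(len(cols))
    ]
    return sorted(entries, key=lambda e: e[2], reverse=True)[:k]

rows = ["Tomas", "Donna"]
cols = ["tommi", "Don", "Tom"]
M = [
    [0.8, 0.1, 0.9],  # Tomas
    [0.1, 0.7, 0.2],  # Donna
]  # toy values, not read from the figure

for source, target, strength in top_mappings(M, rows, cols):
    print(f"{source} -> {target}: {strength}")
```

A person (or another model) would then look at the returned pairs and propose a label such as "Name variations" for the pattern they share.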

### Detailed Analysis

* **Flow of Information:** The diagram establishes a clear analytical pipeline: 1) Isolate an attention head, 2) Project its weight matrices to create a vocabulary-space mapping matrix `M`, 3) Analyze `M` to discover the head's function.

* **Matrix M:** This is the core analytical artifact. Its `|V| × |V|` structure suggests that each entry scores how strongly one vocabulary token (row) maps to another (column). The colored cells indicate the strength of the learned association.

* **Heatmap A (Predefined Operation):** This demonstrates a *supervised* or *hypothesis-driven* analysis. The researcher tests whether the head implements a known, interpretable function ("Country to capital"). The heatmap shows strong, correct mappings for the three given country-capital pairs. The score `0.7` likely quantifies how well the head implements this predefined mapping, although the metric itself is not described here.

* **Heatmap B (Salient Operations):** This demonstrates an *unsupervised* or *exploratory* analysis. The researcher looks for strong, salient patterns in the mapping matrix without a predefined hypothesis. The pattern reveals that the head maps names to their variant forms (e.g., "Tomas" to "tommi" and "Tom"; "Donna" to "Don"). The higher score `0.9` suggests that this is a very strong, clear pattern in the head's parameters.

### Key Observations

1. **Dual Analysis Paradigm:** The diagram explicitly contrasts two methodological approaches: testing for a known function (A) versus discovering an unknown function (B).

2. **High Specificity:** The mappings shown are narrow and interpretable, which suggests that attention heads can be highly specialized. One example encodes a geographic fact (country to capital), while the other relates a name to its variants.

3. **Quantitative Scoring:** Both analyses yield a numerical score (0.7 and 0.9), providing a metric for the strength or purity of the discovered functionality.

4. **Visual Encoding:** The use of a consistent yellow-scale heatmap across both sub-diagrams allows for direct visual comparison of mapping strength and sparsity.

### Interpretation

This diagram is a pedagogical or methodological illustration from the field of **AI Interpretability**, specifically for transformer models. It argues that the internal mechanisms of complex neural networks, like attention heads, are not inscrutable "black boxes." Instead, their learned functions can be reverse-engineered.

The core insight is that by projecting an attention head's weights into the interpretable space of the vocabulary, we can create a "function map" (`M`). Analyzing this map reveals the head's job. The two examples show that heads can learn both **factual knowledge** (like a lookup table for capitals) and **linguistic rules** (like handling name morphology).

The implication is significant: if we can systematically catalog the functions of thousands of attention heads across a model, we can build a "circuit diagram" of how the model processes information. This is crucial for debugging model behavior, ensuring fairness, and building trust in AI systems. The diagram presents projecting head parameters to the vocabulary as one concrete route to that understanding.