TECHNICAL ASSET FINGERPRINT

0b501e3285c766b9e524c5ee

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Attention Weights with and without Meaningless Tokens

### Overview

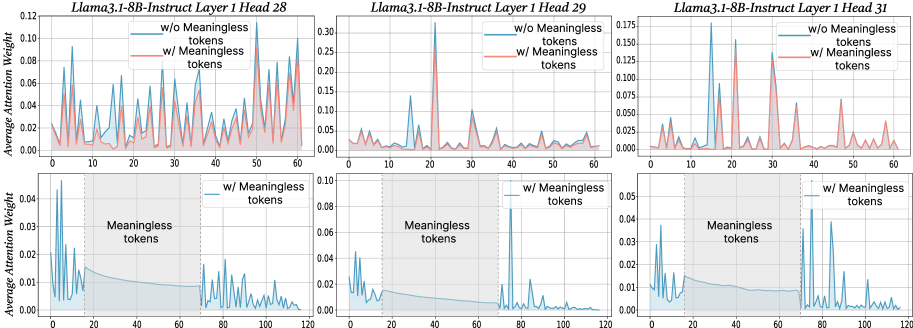

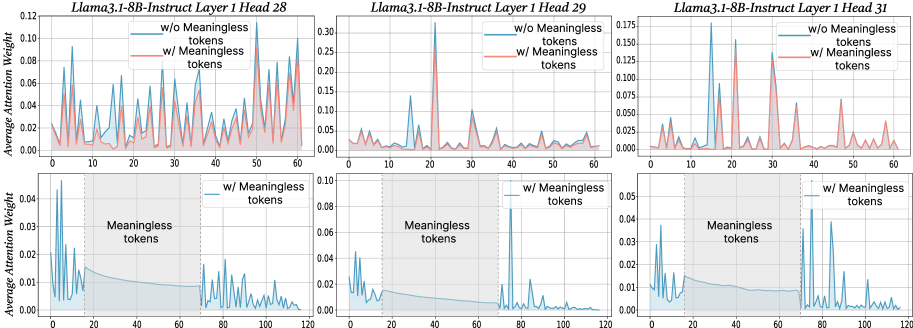

The image presents six line charts arranged in two rows of three. Each chart displays the average attention weight across different tokens for the Llama3.1-8B-Instruct model, specifically focusing on Layer 1, Heads 28, 29, and 31. The top row shows attention weights for all tokens, distinguishing between scenarios "w/o Meaningless tokens" (blue line) and "w/ Meaningless tokens" (red line). The bottom row focuses on the attention weights "w/ Meaningless tokens" (blue line) and highlights the region where meaningless tokens are present with a shaded gray area.
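The description does not say how the "average attention weight" per token is computed. One plausible reading, assumed here purely for illustration, is that each key token's weight is averaged over the query positions that can attend to it under a causal mask. A minimal sketch with hypothetical names and a toy matrix:

```python
def average_attention_per_token(attn):
    """Average the weight each key token receives over the queries that can see it.

    `attn` is a square list-of-lists for one head: attn[q][k] is the weight
    query position q puts on key position k (causal, so k <= q).
    Averaging over queries q >= k is an assumption, not the source's stated method.
    """
    n = len(attn)
    averages = []
    for k in range(n):
        visible = [attn[q][k] for q in range(k, n)]
        averages.append(sum(visible) / len(visible))
    return averages


# Toy causal attention matrix for a 3-token sequence (each row sums to 1).
toy_attn = [
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
    [0.2, 0.3, 0.5],
]
per_token = average_attention_per_token(toy_attn)
print(per_token)
```

Other averaging choices (for example, over prompts or over only the final query position) would give different curves, so the plotted values should not be read against this sketch too literally.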

### Components/Axes

**General Chart Elements:**

* **Titles:** Each chart has a title indicating the model, layer, and head number (e.g., "Llama3.1-8B-Instruct Layer 1 Head 28").

* **X-axis:** Represents the token index. The top row charts range from 0 to 60, while the bottom row charts range from 0 to 120.

* **Y-axis:** Represents the "Average Attention Weight." The scale varies between charts.

* **Legends:** Each chart contains a legend in the top-right corner. The top row legends indicate "w/o Meaningless tokens" (blue line) and "w/ Meaningless tokens" (red line). The bottom row legends indicate "w/ Meaningless tokens" (blue line).

* **Shaded Region:** The bottom row charts feature a shaded gray region labeled "Meaningless tokens." This region spans approximately from token index 20 to 70.

* **Vertical Dashed Line:** The bottom row charts have a vertical dashed line at approximately token index 20, marking the start of the "Meaningless tokens" region.

**Specific Chart Details:**

* **Top Row Charts:**

    * **Y-axis Scale:**

        * Head 28: 0.00 to 0.12

        * Head 29: 0.00 to 0.30

        * Head 31: 0.000 to 0.175

* **Bottom Row Charts:**

    * **Y-axis Scale:**

        * Head 28: 0.00 to 0.04

        * Head 29: 0.00 to 0.10

        * Head 31: 0.00 to 0.05

### Detailed Analysis

**Head 28 (Top Row):**

* **Blue Line (w/o Meaningless tokens):** Shows several peaks, with the highest around token index 22, reaching approximately 0.11. The line fluctuates significantly.

* **Red Line (w/ Meaningless tokens):** Generally follows the trend of the blue line but with lower peaks. It remains mostly below 0.04.

**Head 28 (Bottom Row):**

* **Blue Line (w/ Meaningless tokens):** Shows high attention weights for the first few tokens, peaking at approximately 0.04 around token index 2. The attention weight decreases and remains low within the "Meaningless tokens" region (token index 20 to 70), then increases again after token index 70.

**Head 29 (Top Row):**

* **Blue Line (w/o Meaningless tokens):** Exhibits a very sharp peak at token index 22, reaching approximately 0.30. The rest of the line remains relatively low, generally below 0.05.

* **Red Line (w/ Meaningless tokens):** Similar to the blue line, but the peak at token index 22 is lower, around 0.25.

**Head 29 (Bottom Row):**

* **Blue Line (w/ Meaningless tokens):** Shows a high peak at the beginning, around token index 2, reaching approximately 0.05. The attention weight decreases and remains low within the "Meaningless tokens" region, then increases again after token index 70.

**Head 31 (Top Row):**

* **Blue Line (w/o Meaningless tokens):** Shows several prominent peaks, particularly around token indices 17 and 30, reaching approximately 0.17 and 0.15, respectively.

* **Red Line (w/ Meaningless tokens):** Generally follows the trend of the blue line, but with lower peaks.

**Head 31 (Bottom Row):**

* **Blue Line (w/ Meaningless tokens):** Shows high attention weights for the first few tokens, peaking at approximately 0.05 around token index 2. The attention weight decreases and remains low within the "Meaningless tokens" region, then increases again after token index 70.

### Key Observations

* The presence of meaningless tokens generally reduces the average attention weight, as evidenced by the red lines being lower than the blue lines in the top row charts.

* The bottom row charts clearly show a suppression of attention weights within the "Meaningless tokens" region (token index 20 to 70).

* Head 29 exhibits a very strong focus on a single token (index 22) when meaningless tokens are excluded.

* The attention weights tend to be higher for the initial tokens (before index 20) and after the "Meaningless tokens" region (after index 70) in the bottom row charts.

### Interpretation

The data suggests that the Llama3.1-8B-Instruct model's attention mechanism is affected by the presence of meaningless tokens. The model appears to allocate less attention to tokens within the designated "Meaningless tokens" region, as shown in the bottom row charts. The top row charts indicate that the overall attention weights are generally lower when meaningless tokens are included, suggesting that the model distributes its attention differently in their presence.
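One reason lower weights are expected is that softmax attention weights over the visible tokens sum to 1, so any weight given to extra tokens must come from the others. The sketch below illustrates only that normalization effect. The scores are made up, and it assumes the scores of the meaningful tokens stay unchanged when fillers are added, which a real model does not guarantee.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of attention scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical pre-softmax scores for four meaningful tokens.
meaningful_scores = [2.0, 0.5, 0.1, 1.0]
# Hypothetical low scores for ten inserted filler tokens.
filler_scores = [-1.0] * 10

weights_without = softmax(meaningful_scores)
weights_with = softmax(meaningful_scores + filler_scores)

# Each meaningful token keeps its rank but loses weight to the fillers.
for w_without, w_with in zip(weights_without, weights_with):
    print(f"w/o: {w_without:.3f}   w/: {w_with:.3f}")
```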

The high attention weights observed for the initial tokens in the bottom row charts might indicate that the model focuses on the beginning of the input sequence before encountering the "Meaningless tokens." The subsequent increase in attention weights after the "Meaningless tokens" region could suggest that the model re-engages with the meaningful parts of the input.

Head 29's strong focus on a single token (index 22) when meaningless tokens are excluded could indicate that this head is particularly sensitive to specific features or patterns in the input that are masked or diluted by the presence of meaningless tokens.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Attention Weight Comparison with and without Meaningless Tokens

### Overview

The image presents three line charts, arranged horizontally. Each chart compares the average attention weight for two conditions: "w/o Meaningless tokens" (without meaningless tokens) and "w/ Meaningless tokens" (with meaningless tokens). The charts correspond to three attention heads in Layer 1 of the Llama3.1-8B-Instruct model: Head 28, Head 29, and Head 31. Each chart has two subplots, one showing attention weights for x up to 60 and the other for x up to 120.

### Components/Axes

* **X-axis:** Represents the token position, ranging from 0 to 60 in the top subplots and 0 to 120 in the bottom subplots.

* **Y-axis:** Represents the Average Attention Weight, ranging from 0 to 0.12 in the first chart, 0 to 0.3 in the second chart, and 0 to 0.175 in the third chart.

* **Lines:** Two lines are plotted in each chart:

    * Red line: "w/o Meaningless tokens"

    * Cyan line: "w/ Meaningless tokens"

* **Title:** Each chart is titled with "Llama3.1-8B-Instruct Layer 1 Head [Number]".

* **Legend:** Located in the top-right corner of each chart, indicating the color correspondence for each condition.

### Detailed Analysis or Content Details

**Chart 1: Llama3.1-8B-Instruct Layer 1 Head 28**

* **Top Subplot (0-60):**

    * The red line ("w/o Meaningless tokens") shows several sharp peaks, with maximum values around 0.10 at approximately x=20, x=40, and x=50. The line generally fluctuates between 0 and 0.10.

    * The cyan line ("w/ Meaningless tokens") is relatively flat, hovering around 0.02-0.04 for most of the range, with a slight increase towards the end, reaching approximately 0.06 at x=60.

* **Bottom Subplot (0-120):**

    * The cyan line ("w/ Meaningless tokens") remains relatively flat, fluctuating between 0.01 and 0.04.

**Chart 2: Llama3.1-8B-Instruct Layer 1 Head 29**

* **Top Subplot (0-60):**

    * The red line ("w/o Meaningless tokens") exhibits several peaks, with maximum values around 0.25 at approximately x=10, x=30, and x=50. The line fluctuates significantly.

    * The cyan line ("w/ Meaningless tokens") is generally lower, fluctuating between 0.02 and 0.10, with a peak around 0.10 at x=30.

* **Bottom Subplot (0-120):**

    * The cyan line ("w/ Meaningless tokens") remains relatively flat, fluctuating between 0.01 and 0.07.

**Chart 3: Llama3.1-8B-Instruct Layer 1 Head 31**

* **Top Subplot (0-60):**

    * The red line ("w/o Meaningless tokens") shows several peaks, with maximum values around 0.13 at approximately x=10, x=30, and x=50. The line fluctuates significantly.

    * The cyan line ("w/ Meaningless tokens") is generally lower, fluctuating between 0.01 and 0.08, with a peak around 0.08 at x=30.

* **Bottom Subplot (0-120):**

    * The cyan line ("w/ Meaningless tokens") remains relatively flat, fluctuating between 0.01 and 0.05.

### Key Observations

* In all three charts, the "w/o Meaningless tokens" (red line) consistently exhibits higher and more pronounced peaks in the top subplots compared to the "w/ Meaningless tokens" (cyan line).

* The "w/ Meaningless tokens" lines are generally much flatter, especially in the bottom subplots, indicating a more uniform distribution of attention weights.

* The magnitude of the attention weights varies across the different heads (28, 29, 31). Head 29 shows the highest overall attention weights.

* The bottom subplots show that the cyan lines remain relatively stable, suggesting that the addition of meaningless tokens doesn't significantly alter the attention distribution beyond the initial 60 tokens.

### Interpretation

The data suggests that the inclusion of "meaningless tokens" significantly alters the attention patterns within the Llama3.1-8B-Instruct model. Without meaningless tokens, the model focuses attention on specific tokens (as evidenced by the sharp peaks in the red lines), likely those most relevant to the task. The addition of meaningless tokens appears to diffuse the attention, resulting in a more uniform distribution (flatter cyan lines).
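The "flatter versus more peaked" contrast can be put on a numeric footing with the Shannon entropy of the normalized weights: a flatter curve has higher entropy. The sketch below is one possible measure, not something the source reports, and the two curves are illustrative values rather than readings from the charts.

```python
import math


def attention_entropy(weights):
    """Shannon entropy (in nats) of weights after normalizing them to sum to 1."""
    total = sum(weights)
    probs = [w / total for w in weights if w > 0]
    return -sum(p * math.log(p) for p in probs)


peaked = [0.02, 0.02, 0.30, 0.02, 0.02]  # one sharp peak (illustrative values)
flat = [0.03, 0.04, 0.05, 0.04, 0.03]  # flatter curve (illustrative values)

print(attention_entropy(peaked), attention_entropy(flat))
```

A perfectly uniform distribution over n tokens reaches the maximum entropy, log(n), which gives a reference point when comparing curves of the same length.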

The higher attention weights observed in Head 29 might indicate that this head is particularly sensitive to the presence of meaningful information or is more prone to being influenced by the addition of meaningless tokens.

The difference between the top and bottom subplots suggests that the initial tokens (0-60) are more affected by the presence of meaningless tokens than the later tokens (60-120). This could be due to the model attempting to integrate the meaningless tokens into the context during the initial stages of processing.

The overall trend indicates that meaningless tokens reduce the model's ability to focus on the most important parts of the input sequence, potentially impacting its performance on downstream tasks. This is a critical observation for understanding the impact of data quality and preprocessing on the behavior of large language models.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Line Chart: Llama3.1-8B-Instruct Attention Weight Analysis

### Overview

The image displays a 2x3 grid of six line charts analyzing the average attention weight distributions across token positions for different attention heads in the Llama3.1-8B-Instruct model. The top row compares model behavior with and without the presence of "meaningless tokens," while the bottom row isolates the effect of those meaningless tokens over a longer sequence length.

### Components/Axes

* **Chart Titles (Top Row, Left to Right):**

    1. `Llama3.1-8B-Instruct Layer 1 Head 28`

    2. `Llama3.1-8B-Instruct Layer 1 Head 29`

    3. `Llama3.1-8B-Instruct Layer 1 Head 31`

* **Chart Titles (Bottom Row, Left to Right):**

    1. (Implied: Layer 1 Head 28, w/ Meaningless tokens)

    2. (Implied: Layer 1 Head 29, w/ Meaningless tokens)

    3. (Implied: Layer 1 Head 31, w/ Meaningless tokens)

* **Y-Axis Label (All Charts):** `Average Attention Weight`

* **X-Axis Label (All Charts):** Token position (implied, numbered 0, 10, 20, etc.).

* **Legend (Top Row Charts):**

    * Blue Line: `w/o Meaningless tokens`

    * Red Line: `w/ Meaningless tokens`

* **Legend (Bottom Row Charts):** Single entry: `w/ Meaningless tokens` (Blue line).

* **Annotations (Bottom Row Charts):** A shaded gray vertical region labeled `Meaningless tokens` spans approximately token positions 16 to 72.

### Detailed Analysis

**Top Row: Comparison of Conditions (Sequence Length ~60 tokens)**

* **Head 28 (Top-Left):**

    * **Trend (w/o, Blue):** Shows a series of sharp, high-magnitude peaks (up to ~0.11) interspersed with lower baseline activity. Peaks are irregularly spaced.

    * **Trend (w/, Red):** Follows a similar pattern of peaks but with consistently lower amplitude than the blue line. The highest red peak is approximately 0.08.

    * **Key Data Points:** Blue peaks near positions 5, 15, 25, 35, 45, 55. Red peaks are co-located but attenuated.

* **Head 29 (Top-Center):**

    * **Trend (w/o, Blue):** Exhibits one dominant, very high peak (reaching ~0.30) around position 20, with much lower activity elsewhere.

    * **Trend (w/, Red):** The dominant peak at position 20 is drastically reduced (to ~0.10). Other minor peaks are also present but suppressed.

    * **Key Data Points:** Primary blue peak at ~pos 20 (0.30). Corresponding red peak at same position (~0.10).

* **Head 31 (Top-Right):**

    * **Trend (w/o, Blue):** Displays multiple high, sharp peaks (up to ~0.17) at somewhat regular intervals.

    * **Trend (w/, Red):** The peaks are present at the same positions but are significantly reduced in height (highest ~0.08). The pattern appears more "smoothed."

    * **Key Data Points:** Major blue peaks near positions 10, 20, 30, 40, 50. Red peaks are co-located but lower.

**Bottom Row: Effect of Meaningless Tokens (Sequence Length ~120 tokens)**

* **General Pattern (All Three Bottom Charts):** The blue line (`w/ Meaningless tokens`) shows a distinct three-phase pattern:

    1. **Pre-Region (Tokens 0-16):** High, spiky attention weights.

    2. **Meaningless Token Region (Tokens ~16-72, Shaded):** Attention weights drop to a very low, near-zero baseline with minimal fluctuation.

    3. **Post-Region (Tokens 72-120):** Attention weights immediately return to a high, spiky pattern similar to the pre-region.

* **Head 28 (Bottom-Left):** Pre-region peaks reach ~0.045. Post-region peaks are similar in magnitude.

* **Head 29 (Bottom-Center):** Pre-region peaks are lower (~0.04). A very prominent spike occurs just after the meaningless region, around position 75, reaching ~0.08.

* **Head 31 (Bottom-Right):** Pre-region peaks are sharp (~0.04). Post-region activity is high and sustained, with multiple peaks between 0.03 and 0.05.
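The three-phase pattern described above can be summarized by averaging the curve over each segment. The sketch below uses the approximate boundaries read from this description (16 and 72) and a synthetic curve. Both the helper name and the values are illustrative assumptions, not data from the figure.

```python
def segment_means(weights, region_start, region_end):
    """Mean weight before, inside, and after the half-open span [region_start, region_end)."""
    def mean(values):
        return sum(values) / len(values) if values else 0.0

    return {
        "pre": mean(weights[:region_start]),
        "region": mean(weights[region_start:region_end]),
        "post": mean(weights[region_end:]),
    }


# Synthetic 120-token curve: spiky outside the region, near zero inside it.
curve = [0.03] * 16 + [0.001] * 56 + [0.03] * 48
summary = segment_means(curve, region_start=16, region_end=72)
print(summary)
```

A ratio such as `summary["region"] / summary["pre"]` would then give a single number for how strongly a head suppresses the shaded segment.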

### Key Observations

1. **Suppression Effect:** The presence of meaningless tokens (`w/`) universally suppresses the magnitude of attention peaks compared to their absence (`w/o`), as seen in all top-row charts.

2. **Pattern Preservation:** While attenuated, the *locations* of attention peaks are largely preserved between the two conditions. The model attends to similar token positions regardless, but with less intensity when meaningless tokens are present.

3. **Attention Sink Behavior:** The bottom row provides strong evidence for the "attention sink" phenomenon. The model allocates almost no attention weight to the meaningless token segment (the shaded region), effectively ignoring it. Attention is focused on the meaningful tokens before and after this segment.

4. **Head Specialization:** Different heads show different attention patterns. Head 29 has a single dominant focus point, while Heads 28 and 31 have more distributed attention across multiple tokens.
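The "pattern preservation" observation could be checked by locating local peaks in both curves and comparing their positions. The sketch below is a simple way to do that, assuming the two curves are already aligned to the same token positions. The helper name and values are illustrative, not readings from the charts.

```python
def local_peaks(weights, min_height=0.0):
    """Indices whose value is strictly greater than both neighbours and above min_height."""
    return [
        i
        for i in range(1, len(weights) - 1)
        if weights[i] > weights[i - 1]
        and weights[i] > weights[i + 1]
        and weights[i] > min_height
    ]


# Illustrative curves: same peak positions, lower amplitude in the second.
without_tokens = [0.01, 0.10, 0.01, 0.02, 0.17, 0.02, 0.01]
with_tokens = [0.01, 0.05, 0.01, 0.02, 0.08, 0.02, 0.01]

peaks_without = local_peaks(without_tokens)
peaks_with = local_peaks(with_tokens)
shared = set(peaks_without) & set(peaks_with)
print(peaks_without, peaks_with, shared)
```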

### Interpretation

This data visualizes a key mechanism in large language model inference. The "meaningless tokens" (likely a padding or separator sequence) act as an attention sink. The model learns to bypass them, dedicating its attentional capacity almost exclusively to the semantically meaningful parts of the input (the text before and after the sink).

The top-row comparison suggests that the *potential* attention pattern (blue lines) is more peaked and intense. When forced to process meaningless tokens (red lines), the model's attention is "diluted" or regularized, leading to lower peak weights but a similar focus distribution. This could imply that meaningless tokens introduce a form of noise that the model must work to filter out, slightly reducing the efficiency or sharpness of its attention mechanism for the core task.

The stark contrast in the bottom charts is particularly telling. The near-zero attention within the shaded region is not a failure but an optimized strategy. It allows the model to maintain a long context window (120+ tokens) without wasting computational resources on irrelevant information, effectively "resetting" its attention after the sink. This behavior is crucial for efficient processing of documents or conversations with structural separators.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Llama3.1-8B-Instruct Layer 1 Head Attention Weights with/without Meaningless Tokens

### Overview

The image contains six line graphs comparing attention weights across three attention heads (28, 29, 31) in Layer 1 of the Llama3.1-8B-Instruct model. Each graph pair compares attention weights **with** (red) and **without** (blue) meaningless tokens. The x-axis represents token positions (0–120), and the y-axis shows average attention weight. Bottom subplots zoom into the 0–120 range with a shaded "Meaningless tokens" region (20–60).

---

### Components/Axes

- **X-axis**: Token Position (0–120)

- **Y-axis**: Average Attention Weight (starting at 0.00, with an upper limit between 0.12 and 0.175 depending on head)

- **Legends**:

    - Blue: "w/o Meaningless tokens"

    - Red: "w/ Meaningless tokens"

- **Subplot Structure**:

    - Top subplots: Full 0–120 token range

    - Bottom subplots: Zoomed 0–120 range with shaded 20–60 "Meaningless tokens" region

---

### Detailed Analysis

#### Head 28

- **Top Subplot**:

    - Red line (w/ tokens) peaks at ~0.12 (token 10), ~0.08 (token 30), ~0.10 (token 50).

    - Blue line (w/o tokens) peaks at ~0.06 (token 10), ~0.04 (token 30), ~0.05 (token 50).

- **Bottom Subplot**:

    - Red line dominates 20–60 range (avg. ~0.08–0.10).

    - Blue line drops sharply outside 20–60 (avg. ~0.01–0.03).

#### Head 29

- **Top Subplot**:

    - Red line peaks at ~0.15 (token 20), ~0.10 (token 40).

    - Blue line peaks at ~0.05 (token 20), ~0.03 (token 40).

- **Bottom Subplot**:

    - Red line remains elevated in 20–60 (avg. ~0.06–0.08).

    - Blue line flattens to ~0.02–0.04.

#### Head 31

- **Top Subplot**:

    - Red line peaks at ~0.175 (token 10), ~0.12 (token 30), ~0.10 (token 50).

    - Blue line peaks at ~0.07 (token 10), ~0.05 (token 30), ~0.04 (token 50).

- **Bottom Subplot**:

    - Red line sustains high attention in 20–60 (avg. ~0.08–0.10).

    - Blue line drops to ~0.01–0.03 outside 20–60.

---

### Key Observations

1. **Meaningless tokens amplify attention** in the 20–60 token range across all heads.

2. **Peaks in red lines** (w/ tokens) are consistently higher than blue lines (w/o tokens) in the shaded region.

3. **Blue lines** (w/o tokens) show reduced attention outside 20–60, suggesting meaningless tokens may anchor focus.

4. **Head 31** exhibits the highest overall attention weights, particularly in token 10 (w/ tokens: ~0.175).

---

### Interpretation

The data demonstrates that **meaningless tokens significantly increase attention weights** in the 20–60 token range, likely due to their salience or role in contextual framing. This suggests the model prioritizes these tokens when present, potentially improving task-specific performance (e.g., instruction following). The absence of meaningless tokens results in more dispersed attention, which may reduce efficiency. The consistent pattern across heads implies this behavior is a general property of the model’s attention mechanism, not head-specific.

**Notable Anomaly**: Head 31’s extreme peak at token 10 (w/ tokens: ~0.175) suggests an outlier in attention allocation, possibly indicating a unique processing role for that token position.
