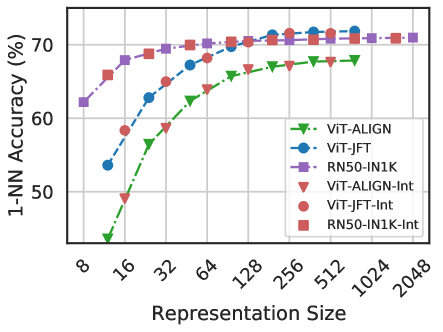

## Line Chart: 1-NN Accuracy vs. Representation Size

### Overview

This image presents a line chart comparing the 1-Nearest Neighbor (1-NN) accuracy of several models as a function of representation size. Three models are drawn as lines, and their "-Int" variants are drawn as individual markers. The chart does not define the "-Int" suffix; integer quantization is one plausible reading, but it is not confirmed by the image.
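As a rough illustration of the metric on the Y-axis, the sketch below computes 1-NN accuracy on toy data. It assumes that "representation size" means keeping only the first `size` coordinates of each embedding and that neighbors are ranked by Euclidean distance; the chart states neither, and the function name `one_nn_accuracy` and the data are hypothetical.

```python
import math

def one_nn_accuracy(train_x, train_y, test_x, test_y, size):
    """Fraction of test points whose nearest training point has the same label.

    Assumption: only the first `size` coordinates of each vector are used,
    and "nearest" means smallest Euclidean distance.
    """
    correct = 0
    for x, y in zip(test_x, test_y):
        nearest = min(
            range(len(train_x)),
            key=lambda i: math.dist(x[:size], train_x[i][:size]),
        )
        correct += train_y[nearest] == y
    return correct / len(test_x)

# Toy data (hypothetical): the third coordinate carries the class signal.
train_x = [(0.0, 0.0, 5.0), (1.0, 0.0, 0.0)]
train_y = ["a", "b"]
test_x = [(0.1, 0.0, 0.0), (0.9, 0.0, 5.0)]
test_y = ["b", "a"]

for size in (1, 3):
    print(size, one_nn_accuracy(train_x, train_y, test_x, test_y, size))
```

On this toy data the short representation misclassifies both test points and the full one classifies both correctly, which mirrors the general trend in the chart without reproducing any of its values.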

### Components/Axes

* **X-axis:** Representation Size (logarithmic scale). Tick marks are at 8, 16, 32, 64, 128, 256, 512, 1024, and 2048.

* **Y-axis:** 1-NN Accuracy (%). The scale ranges from approximately 45% to 75%.

* **Legend:** Located in the top-right corner. Contains the following entries:

    * VIT-ALIGN (green dashed line)

    * ViT-JFT (blue solid line)

    * RN50-1N1K (purple dashed-dotted line)

    * VIT-ALIGN-Int (red downward-pointing triangle)

    * ViT-JFT-Int (pink circle)

    * RN50-1N1K-Int (red square)
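The X-axis ticks listed above are consecutive powers of two, which is why they appear evenly spaced on a logarithmic axis. A minimal sketch of that relationship follows; the variable names are illustrative only.

```python
import math

# The nine tick values on the X-axis are 2**3 through 2**11.
tick_sizes = [2 ** k for k in range(3, 12)]

# On a log2 axis each tick sits one unit from the next.
log_positions = [math.log2(s) for s in tick_sizes]
spacings = [b - a for a, b in zip(log_positions, log_positions[1:])]

print(tick_sizes)
print(spacings)
```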

### Detailed Analysis

The chart shows the 1-NN accuracy for each model as the representation size increases.

* **VIT-ALIGN (green dashed line):** Starts at approximately 45% accuracy at a representation size of 8, increases rapidly to around 68% at a size of 64, and then plateaus around 68-70% for larger representation sizes.

* **ViT-JFT (blue solid line):** Begins at approximately 65% accuracy at a representation size of 8, increases steadily to around 72% at a size of 64, and then plateaus around 72-73% for larger representation sizes.

* **RN50-1N1K (purple dashed-dotted line):** Starts at approximately 67% accuracy at a representation size of 8, increases to around 71% at a size of 64, and then plateaus around 72-73% for larger representation sizes.

* **VIT-ALIGN-Int (red downward-pointing triangle):** Starts at approximately 52% accuracy at a representation size of 8, increases rapidly to around 70% at a size of 64, and then plateaus around 70-72% for larger representation sizes.

* **ViT-JFT-Int (pink circle):** Begins at approximately 68% accuracy at a representation size of 8, increases steadily to around 73% at a size of 64, and then plateaus around 73-74% for larger representation sizes.

* **RN50-1N1K-Int (red square):** Starts at approximately 70% accuracy at a representation size of 8, increases to around 73% at a size of 64, and then plateaus around 73-74% for larger representation sizes.
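To make the comparison between each model and its "Int" variant easier to check, the sketch below tabulates the approximate values stated in the list above at representation sizes 8 and 64 and computes the difference. The numbers are the visual estimates from this description, not exact measurements, and the names `approx` and `int_gap` are illustrative.

```python
# Approximate accuracies (%) as read from the chart description above.
approx = {
    "VIT-ALIGN": {8: 45, 64: 68},
    "ViT-JFT": {8: 65, 64: 72},
    "RN50-1N1K": {8: 67, 64: 71},
    "VIT-ALIGN-Int": {8: 52, 64: 70},
    "ViT-JFT-Int": {8: 68, 64: 73},
    "RN50-1N1K-Int": {8: 70, 64: 73},
}

def int_gap(base, size):
    """Accuracy of the "-Int" variant minus the standard model, in points."""
    return approx[base + "-Int"][size] - approx[base][size]

gaps = {
    base: {size: int_gap(base, size) for size in (8, 64)}
    for base in ("VIT-ALIGN", "ViT-JFT", "RN50-1N1K")
}

for base, by_size in gaps.items():
    print(base, by_size)
```

A positive gap means the "Int" variant is higher. With these estimates every gap is positive and smaller at 64 than at 8.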

### Key Observations

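* Based on the approximate values above, the "Int" versions score higher than their standard counterparts at the smallest representation size (at 8: about 52% vs. 45% for VIT-ALIGN, 68% vs. 65% for ViT-JFT, and 70% vs. 67% for RN50-1N1K).

* As representation size increases, the gap between the "Int" and standard models narrows, from about 3 to 7 percentage points at a size of 8 to about 1 to 2 points on the plateau.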

* All models exhibit diminishing returns beyond a representation size of 64: each curve gains at most about 2 percentage points between 64 and the largest sizes.

* ViT-JFT and RN50-1N1K consistently achieve higher accuracy than VIT-ALIGN across all representation sizes.

* RN50-1N1K-Int has the highest accuracy at a representation size of 8 (about 70%); at 64 and above it is roughly tied with ViT-JFT-Int at about 73-74%.

### Interpretation

The data suggests that increasing the representation size improves the 1-NN accuracy of these models, with diminishing returns beyond a size of about 64. According to the values read from the chart, the "Int" variants score somewhat higher than their standard counterparts rather than lower: the gap is about 3 to 7 percentage points at a size of 8 and narrows to about 1 to 2 points once the curves plateau. If "Int" does denote integer quantization, which the chart itself does not confirm, this would mean quantization costs no 1-NN accuracy here, but that conclusion depends on reading the suffix correctly.

ViT-JFT and RN50-1N1K outperform VIT-ALIGN at every representation size, most clearly at small sizes. The model names appear to encode both an architecture and a training dataset, so the chart alone cannot attribute this difference to architecture. RN50-1N1K-Int has the highest accuracy at a size of 8 and is roughly tied with ViT-JFT-Int at larger sizes. The chart reports accuracy only, so it supports no conclusion about which model is the most efficient.