## Heatmap: Neural Network Layer-wise Component Values

### Overview

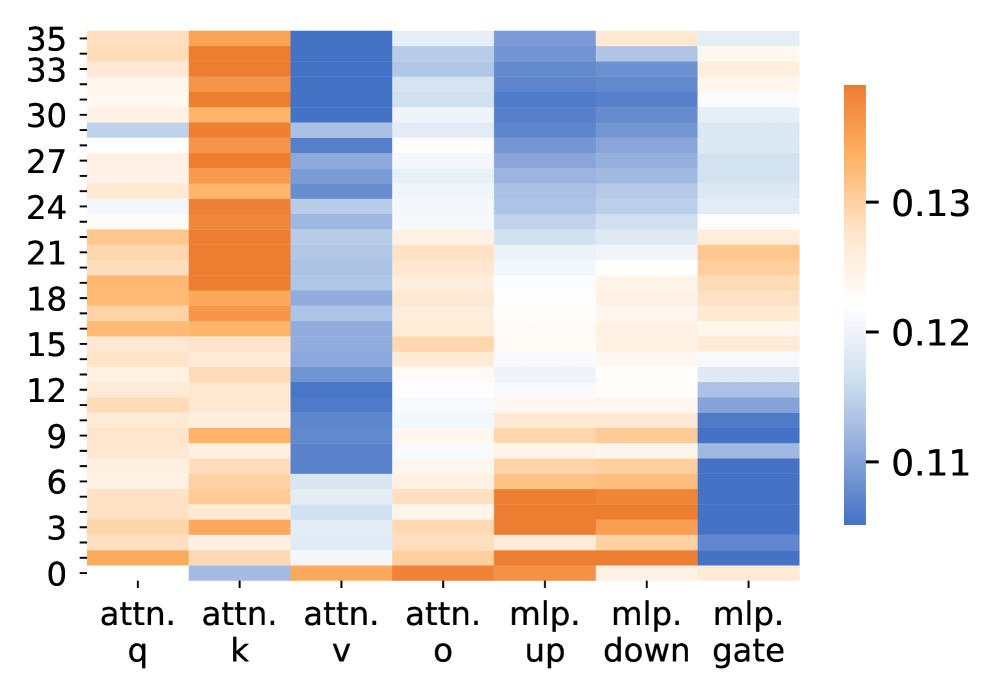

The image displays a heatmap visualizing numerical values across different components of a neural network (likely a transformer model) and across its layers. The heatmap uses a diverging color scale from blue (low values) to orange (high values) to represent the magnitude of a specific metric (e.g., activation, gradient, or parameter statistic) for each component at each layer.

### Components/Axes

* **Y-Axis (Vertical):** Represents the layer index of the neural network. The axis runs from **0** at the bottom to **35** at the top, with tick labels every 3 layers (0, 3, 6, 9, 12, 15, 18, 21, 24, 27, 30, 33) and a final label at 35. The scale is linear.

* **X-Axis (Horizontal):** Represents distinct components within each layer. The labels, from left to right, are:

    1. `attn. q` (Attention Query)

    2. `attn. k` (Attention Key)

    3. `attn. v` (Attention Value)

    4. `attn. o` (Attention Output)

    5. `mlp. up` (MLP Up-projection)

    6. `mlp. down` (MLP Down-projection)

    7. `mlp. gate` (MLP Gate)

* **Color Scale/Legend:** Positioned on the right side of the chart. It is a vertical color bar showing the mapping from color to numerical value.

    * **Blue** represents lower values, with the bottom of the scale marked at approximately **0.11**.

    * **White/Light Gray** represents mid-range values, with the middle of the scale marked at approximately **0.12**.

    * **Orange** represents higher values, with the top of the scale marked at approximately **0.13**.

    * The scale appears continuous between these points.
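The color mapping described above can be sketched in a few lines of Python. This is a minimal illustration, not the code that produced the figure: the anchor values 0.11, 0.12, and 0.13 come from the color bar as read above, while the RGB triples and the function names `lerp` and `diverging_color` are assumptions chosen for the example.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def diverging_color(value, vmin=0.11, vcenter=0.12, vmax=0.13,
                    low=(31, 119, 180),     # assumed blue
                    mid=(255, 255, 255),    # assumed white midpoint
                    high=(255, 127, 14)):   # assumed orange
    """Map a value to an (R, G, B) tuple on a blue-white-orange scale."""
    # Clamp so that out-of-range values saturate at the end colors.
    v = min(max(value, vmin), vmax)
    if v <= vcenter:
        t = (v - vmin) / (vcenter - vmin)
        start, end = low, mid
    else:
        t = (v - vcenter) / (vmax - vcenter)
        start, end = mid, high
    return tuple(round(lerp(s, e, t)) for s, e in zip(start, end))
```

Interpolating each half separately keeps the midpoint color pinned to 0.12 even if the two ends of the scale were not equally far from it.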

### Detailed Analysis

The heatmap is a grid where each cell's color corresponds to a value for a specific component at a specific layer. The following describes the dominant color trends for each column (component):

1. **`attn. q` (Column 1):** Predominantly light orange to orange across most layers, indicating values consistently above the midpoint (~0.12). The intensity is relatively uniform, with slightly stronger orange (higher values) in the middle layers (approx. layers 12-24).

2. **`attn. k` (Column 2):** Shows the most intense and consistent orange coloration of all columns, especially from layer 3 upwards. This indicates this component has the highest values (closest to or exceeding 0.13) across nearly the entire network depth. The bottom-most layers (0-2) are a lighter orange.

3. **`attn. v` (Column 3):** Dominated by blue shades, indicating values consistently below the midpoint (~0.12). The blue is darkest (lowest values, ~0.11) in the upper half of the network (approx. layers 18-35). The lower layers show lighter blue.

4. **`attn. o` (Column 4):** Displays a mixed pattern. The lower half (layers 0-15) is mostly light orange (values >0.12). The upper half transitions to lighter colors and then to light blue (values <0.12) in the top layers (approx. 27-35).

5. **`mlp. up` (Column 5):** Shows a clear vertical gradient. The bottom layers (0-9) are orange (high values). The middle layers (10-21) are white/light (mid values ~0.12). The top layers (22-35) are blue (low values). This indicates a strong trend of decreasing values with increasing layer depth.

6. **`mlp. down` (Column 6):** Similar to `mlp. up` but less pronounced. The bottom layers are orange, transitioning through white in the middle, to light blue at the top. The overall values are slightly higher (less blue) than `mlp. up` in the upper layers.

7. **`mlp. gate` (Column 7):** Exhibits a pattern inverse to the other MLP components. The bottom layers (0-9) are blue (low values). The middle layers transition to white. The top layers (approx. 18-35) are light orange (high values). This indicates values generally increase with layer depth.
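The per-column trends above are read off the colors by eye. If the underlying numbers were available, the direction of each trend could be checked with a least-squares slope against layer index. The sketch below assumes each column is a plain list of per-layer values ordered from layer 0 upward; the function names and the tolerance `tol` are hypothetical choices, not part of the source.

```python
def depth_slope(values):
    """Least-squares slope of values against layer index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two layers")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    var = sum((i - mean_x) ** 2 for i in range(n))
    return cov / var


def classify_trend(values, tol=1e-4):
    """Label a column as increasing, decreasing, or flat with depth.

    tol is an arbitrary per-layer slope threshold picked for this example.
    """
    slope = depth_slope(values)
    if slope > tol:
        return "increasing"
    if slope < -tol:
        return "decreasing"
    return "flat"
```

Under this check, a column like `mlp. up` would be expected to come out as "decreasing" and `mlp. gate` as "increasing", but that can only be confirmed with the actual values behind the figure.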

### Key Observations

* **Component Dichotomy:** There is a stark contrast between the Attention Key (`attn. k`) and Attention Value (`attn. v`) components. `attn. k` is high-value (orange) at nearly every layer, while `attn. v` is low-value (blue) throughout and darkest in the upper half of the network.

* **MLP Component Trends:** The three MLP components (`up`, `down`, `gate`) show distinct and opposing trends with respect to layer depth. `mlp. up` and `mlp. down` decrease in value with depth, while `mlp. gate` increases.

* **Layer-wise Grouping:** The heatmap suggests a rough grouping by depth. Layers 0-12 show more orange across several components. Layers 13-24 are more mixed. The top layers (25-35) show blue in most columns, the exceptions being `attn. q`, `attn. k`, and `mlp. gate`, which remain orange.

* **Outlier:** The `attn. k` column stands out for its consistent, high-intensity orange coloration from layer 3 upwards; only the bottom-most layers (0-2) are a lighter orange.

### Interpretation

This heatmap likely visualizes a statistic like the **mean activation value**, **gradient norm**, or **parameter magnitude** for different sub-layers within a 36-layer transformer model. The data suggests several underlying principles of the model's function:

1. **Specialization of Attention Components:** The consistently high values for `attn. k` and low values for `attn. v` may reflect their distinct roles. Keys might require larger magnitudes to produce well-separated attention scores across a wide range of inputs, while values, which are only combined through an attention-weighted sum rather than compared by dot product, might not need the same scale.

2. **Depth-dependent Processing in MLPs:** The opposing trends in the MLP components are particularly informative. The decreasing values in `mlp. up` and `mlp. down` could indicate that feature transformation and projection become more refined or sparse in deeper layers. Conversely, the increasing values in `mlp. gate` might suggest that the gating mechanism (which controls information flow) becomes more active or decisive in later processing stages.

3. **Layer-wise Functional Evolution:** The shift from more "active" (orange) lower layers to more "suppressed" (blue) upper layers in components like `attn. o` and `mlp. up` aligns with theories that early layers process broad, general features while later layers process more specific, abstract representations. The high-value `mlp. gate` in deep layers could be crucial for final output modulation.

4. **Architectural Insight:** The clear, structured patterns imply the model has learned stable, layer-specific roles for its components. The heatmap serves as a diagnostic tool, revealing how signal magnitudes propagate and transform through the network's depth, which is critical for understanding model stability, training dynamics, and potential points of failure or inefficiency.
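A layers-by-components grid like the one in the figure could be assembled along the following lines. This is a sketch under stated assumptions: the figure does not say which statistic it shows, so mean absolute value is used purely as a placeholder, and the input format (a dict keyed by `(layer, component)` holding flat lists of floats) and the names `COMPONENTS`, `mean_abs`, and `build_grid` are hypothetical.

```python
# Column order taken from the x-axis labels of the heatmap.
COMPONENTS = ["attn. q", "attn. k", "attn. v", "attn. o",
              "mlp. up", "mlp. down", "mlp. gate"]


def mean_abs(xs):
    """Placeholder statistic: mean absolute value of a flat list of floats."""
    return sum(abs(x) for x in xs) / len(xs)


def build_grid(tensors, num_layers, stat=mean_abs):
    """Build grid[layer][column] from {(layer, component): values}.

    Cells with no matching entry are left as None.
    """
    grid = [[None] * len(COMPONENTS) for _ in range(num_layers)]
    for (layer, component), values in tensors.items():
        col = COMPONENTS.index(component)
        grid[layer][col] = stat(values)
    return grid
```

Passing a different callable as `stat` (for example a norm or a variance) swaps the metric without changing the grid layout, which matters here because the plotted quantity is unknown.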