## Line Chart: Accuracy vs. Model Size (PRP-RM)

### Overview

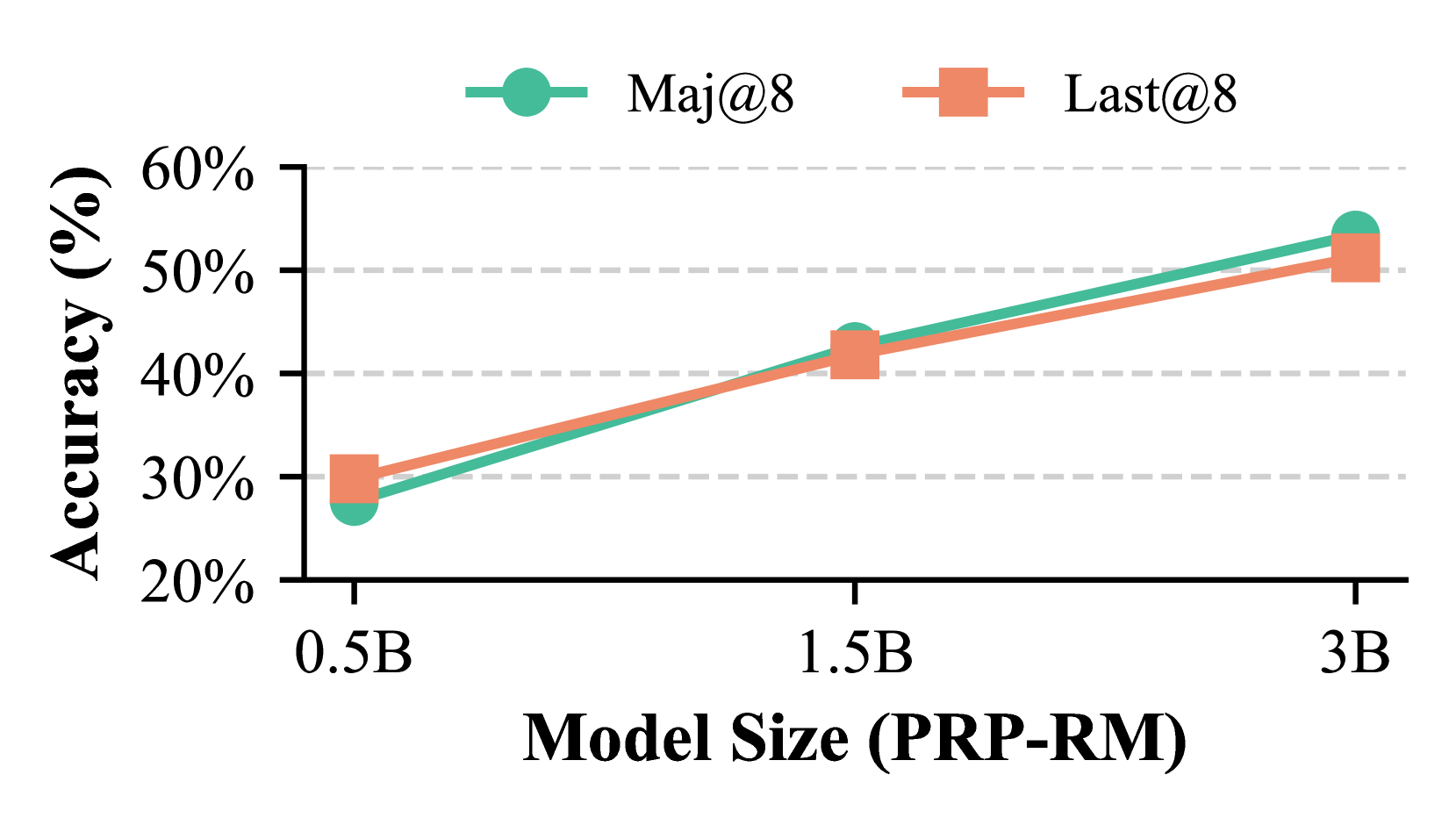

This line chart compares the accuracy of two methods, "Maj@8" and "Last@8", across three model sizes. Accuracy rises with model size for both methods. "Maj@8" improves more steeply, going from ~27% to ~54%, while "Last@8" goes from ~30% to ~51%.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis (Horizontal):**

  * **Label:** "Model Size (PRP-RM)"

  * **Categories/Markers:** Three discrete points: "0.5B", "1.5B", "3B". These likely represent model parameter counts (0.5 billion, 1.5 billion, 3 billion).

* **Y-Axis (Vertical):**

  * **Label:** "Accuracy (%)"

  * **Scale:** Linear, from 20% to 60%, with major gridlines at 10% intervals (20%, 30%, 40%, 50%, 60%).

* **Legend:**

  * **Position:** Top center of the chart area.

  * **Series 1:** "Maj@8", drawn as a teal/green line with solid circle markers.

  * **Series 2:** "Last@8", drawn as an orange/salmon line with solid square markers.

### Detailed Analysis

**Data Series & Trends:**

1. **Maj@8 (Teal line, circle markers):**

   * **Trend:** A strong, consistent upward slope from left to right. Accuracy rises by about 27 percentage points from 0.5B to 3B.

   * **Data Points (Approximate):**

     * At **0.5B**: ~27% accuracy.

     * At **1.5B**: ~43% accuracy.

     * At **3B**: ~54% accuracy.

   * **Observation:** This series starts below "Last@8" at 0.5B, edges past it by 1.5B, and leads by a wider margin at 3B.

2. **Last@8 (Orange line, square markers):**

   * **Trend:** Also a consistent upward slope, but less steep than "Maj@8" on both intervals. Accuracy rises by about 21 percentage points from 0.5B to 3B.

   * **Data Points (Approximate):**

     * At **0.5B**: ~30% accuracy.

     * At **1.5B**: ~42% accuracy.

     * At **3B**: ~51% accuracy.

   * **Observation:** This series starts with higher accuracy than "Maj@8" at the smallest model size (0.5B). It is overtaken by 1.5B and ends about 3 points lower at 3B.
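
The trend comparison can be checked with simple arithmetic on the approximate readings above. This Python sketch treats the eyeballed values as exact integers. That is an assumption: the underlying experimental numbers may differ by a point or two. The helper names `interval_gains` and `total_gain` are hypothetical.

```python
# Approximate accuracies (%) read off the chart. These are eyeballed values,
# not the underlying experimental numbers.
ACCURACY = {
    "Maj@8": [27, 43, 54],   # at 0.5B, 1.5B, 3B
    "Last@8": [30, 42, 51],  # at 0.5B, 1.5B, 3B
}


def interval_gains(values):
    """Return the accuracy gain (percentage points) between consecutive sizes."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


def total_gain(values):
    """Return the gain from the smallest to the largest model size."""
    return values[-1] - values[0]


for method, values in ACCURACY.items():
    print(method, interval_gains(values), total_gain(values))
```

With these readings, the loop prints `Maj@8 [16, 11] 27` and `Last@8 [12, 9] 21`. "Maj@8" therefore gains more on both intervals, which is what "steeper" means here.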

**Spatial Relationships:**

* The two lines intersect between the 0.5B and 1.5B model size points, with "Maj@8" crossing from below to above "Last@8".

* Among the three plotted sizes, the vertical gap between the lines is smallest at 1.5B (~1 point). It widens to ~3 points at 3B, with "Maj@8" on top.
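
These spatial relationships can be verified from the signed gap (Maj@8 minus Last@8) at each plotted size. The sketch below uses the approximate chart readings, which are assumptions rather than published figures. A sign change between two adjacent sizes indicates that the lines cross on that interval.

```python
SIZES = ["0.5B", "1.5B", "3B"]
MAJ_AT_8 = [27, 43, 54]   # approximate readings (%)
LAST_AT_8 = [30, 42, 51]  # approximate readings (%)

# Signed gap: positive when Maj@8 is ahead, negative when Last@8 is ahead.
gaps = [maj - last for maj, last in zip(MAJ_AT_8, LAST_AT_8)]

# Plotted size with the smallest absolute gap.
closest = min(zip(SIZES, gaps), key=lambda pair: abs(pair[1]))

# Adjacent size pairs where the gap changes sign, i.e. where the lines cross.
crossing_intervals = [
    (SIZES[i], SIZES[i + 1])
    for i in range(len(gaps) - 1)
    if gaps[i] * gaps[i + 1] < 0
]

print(dict(zip(SIZES, gaps)))  # {'0.5B': -3, '1.5B': 1, '3B': 3}
print(closest)                 # ('1.5B', 1)
print(crossing_intervals)      # [('0.5B', '1.5B')]
```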

### Key Observations

1. **Positive Scaling:** Both methods improve as model size (PRP-RM) increases across the range shown. Larger models yield higher accuracy.

2. **Performance Crossover:** The lines cross somewhere between 0.5B and 1.5B, after which "Maj@8" outperforms "Last@8". With only three data points, the exact crossover size cannot be read from the chart.

3. **Differential Scaling:** "Maj@8" appears to scale better with model size. Its line is steeper on both intervals, and its lead grows from ~1 point at 1.5B to ~3 points at 3B.

4. **Initial Advantage:** "Last@8" has a slight advantage (~3 points) at the smallest model size (0.5B).
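
A rough location for the crossover can be estimated by linear interpolation between the 0.5B and 1.5B readings. This sketch assumes accuracy varies linearly with parameter count between the two plotted sizes. The chart does not establish this, so with only three points the result is indicative at best. The function name `crossover_size` is hypothetical.

```python
def crossover_size(x0, x1, gap0, gap1):
    """Linearly interpolate where the signed gap between two series hits zero.

    Assumes both series are straight lines between x0 and x1 (in billions of
    parameters) and that the gap changes sign on the interval.
    """
    if gap0 * gap1 > 0:
        raise ValueError("gap does not change sign on this interval")
    if gap0 == gap1:
        raise ValueError("gap is constant; no unique crossing")
    t = gap0 / (gap0 - gap1)  # fraction of the way from x0 to x1
    return x0 + t * (x1 - x0)


# Signed gap (Maj@8 minus Last@8) at 0.5B and 1.5B, from the approximate readings.
estimate = crossover_size(0.5, 1.5, 27 - 30, 43 - 42)
print(f"{estimate:.2f}B")  # 1.25B
```

With gaps of -3 and +1 points, the estimate is 1.25B. Interpolating in log(parameter count) instead gives about 1.14B (0.5 × 3^0.75). The crossover is therefore best read as "a little above 1B", not as a precise threshold.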

### Interpretation

The chart suggests that the better choice between "Maj@8" and "Last@8" depends on model size. At the smallest size tested (0.5B), "Last@8" may be preferable, since it leads by about 3 points. At the larger sizes tested (1.5B and 3B), "Maj@8" performs better. Its lead is only about 1 point at 1.5B but grows to about 3 points at 3B.

This data likely comes from a machine learning research context that compares two answer-selection strategies evaluated by accuracy. "Maj@8" plausibly denotes majority voting over 8 sampled answers. "Last@8" may denote keeping the final answer out of 8 samples or rounds. The chart itself defines neither label. The "PRP-RM" in the x-axis label may refer to a specific model architecture or training paradigm. The key takeaway is that "Maj@8" benefits more from increased model size over the range shown (0.5B to 3B). Within that range, it is the more scalable of the two. Whether the trend continues beyond 3B cannot be determined from this chart.
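
The two selection rules can be contrasted under an assumed reading of the labels: "Maj@8" as majority voting over 8 sampled answers, and "Last@8" as keeping the final answer of the 8. Both interpretations are assumptions, and the chart does not define the methods. The function names `maj_at_k` and `last_at_k`, the sample answers, and the tie-breaking rule (earliest answer wins) are hypothetical choices made for this sketch.

```python
from collections import Counter


def maj_at_k(answers):
    """Majority vote: return the most frequent answer.

    Ties go to the answer that appears first, an arbitrary choice for this sketch.
    """
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers)
    best = max(counts.values())
    return next(a for a in answers if counts[a] == best)


def last_at_k(answers):
    """Return the final answer in the sequence (e.g. the last of k rounds)."""
    if not answers:
        raise ValueError("need at least one answer")
    return answers[-1]


# Eight hypothetical sampled answers to a single question.
samples = ["42", "41", "42", "42", "40", "42", "41", "41"]
print(maj_at_k(samples))   # 42
print(last_at_k(samples))  # 41
```

Under this reading, each rule yields one prediction per question. Accuracy would then be the fraction of predictions that match the reference answers.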