## Diagram: Long-Context AI Model Interaction

### Overview

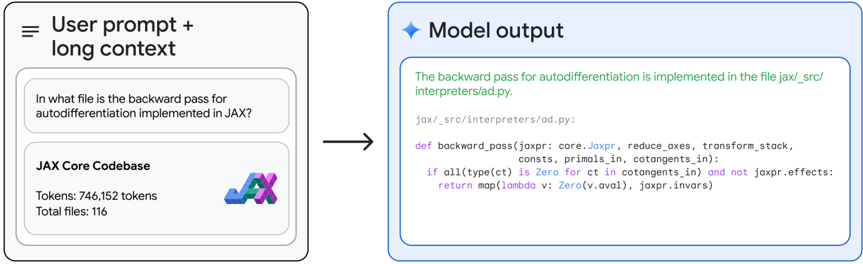

The image is a two-panel diagram illustrating a technical interaction between a user and an AI model. It demonstrates the model's ability to process a query within a very large context (a codebase) and generate a precise, code-based answer. The left panel represents the user's input, and the right panel represents the model's output.

### Components

The diagram is divided into two primary rectangular panels with rounded corners, connected by a right-pointing arrow.

**Left Panel: "User prompt + long context"**

* **Header:** Contains a hamburger menu icon (three horizontal lines) and the title "User prompt + long context".

* **Content:** Two nested boxes.

    1. **Top Box (User Query):** Contains the text: "In what file is the backward pass for autodifferentiation implemented in JAX?"

    2. **Bottom Box (Context):** Labeled "JAX Core Codebase". Contains two metadata lines and a logo:

        * "Tokens: 746,152 tokens"

        * "Total files: 116"

        * A stylized logo combining the letters "JAX" with a blue and purple gradient.

**Right Panel: "Model output"**

* **Header:** Contains a four-pointed star icon and the title "Model output".

* **Content:** A single large box containing the model's response.

    * **Introductory Text (Green):** "The backward pass for autodifferentiation is implemented in the file jax/_src/interpreters/ad.py."

    * **Code Block:** A formatted code snippet with syntax highlighting (colors for keywords, functions, etc.).

        * **File Path Header:** `jax/_src/interpreters/ad.py:`

        * **Python Code:**

```python
def backward_pass(jaxpr: core.Jaxpr, reduce_axes, transform_stack,
                  consts, primals_in, cotangents_in):
  if all(type(ct) is Zero for ct in cotangents_in) and not jaxpr.effects:
    return map(lambda v: Zero(v.aval), jaxpr.invars)
```

**Connecting Element:**

* A solid black arrow points from the right edge of the left panel to the left edge of the right panel, indicating the flow from input to output.

### Detailed Analysis

The diagram explicitly shows the input-output relationship for a long-context query.

1. **Input Specification:** The user asks a specific technical question about the JAX library's codebase. The diagram indicates that the model is given the entire "JAX Core Codebase" as context, quantified as 746,152 tokens across 116 files.

2. **Output Generation:** The model provides a direct answer, naming the exact file path (`jax/_src/interpreters/ad.py`). It then supplies a relevant code snippet from that file, showing the `backward_pass` function definition and its initial conditional logic. The code is presented with syntax highlighting, suggesting it is rendered in a code-friendly environment.
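The conditional at the top of the excerpt is a short-circuit: when every incoming cotangent is a symbolic zero and the jaxpr has no effects, a zero is returned for each input without walking the equations. The following is a toy sketch of that pattern in plain Python, not JAX's implementation. The `Zero`, `ToyProgram`, and `toy_backward_pass` names are hypothetical stand-ins, and the non-trivial branch is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Zero:
    """Hypothetical stand-in for a symbolic zero cotangent."""
    aval: str  # abstract description of the value, e.g. "f32[3]"


@dataclass
class ToyProgram:
    """Hypothetical stand-in for a jaxpr: input descriptions plus side effects."""
    invars: list
    effects: set = field(default_factory=set)


def toy_backward_pass(program, cotangents_in):
    # Short-circuit: all-zero cotangents and no effects means every
    # input gradient is zero, so no equations need to be visited.
    if all(type(ct) is Zero for ct in cotangents_in) and not program.effects:
        return [Zero(aval) for aval in program.invars]
    raise NotImplementedError("the full reverse traversal is omitted in this sketch")


program = ToyProgram(invars=["f32[3]", "f32[]"])
grads = toy_backward_pass(program, [Zero("f32[3]")])
print(grads)
```

The sketch returns one `Zero` per input, mirroring the shape of the `map(...)` call in the excerpt; anything beyond that early return is outside what the diagram shows.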

### Key Observations

* **Precision:** The model's output is highly specific, providing an exact file path and a code excerpt presented as coming from that file, not a general description.

* **Context Scale:** The diagram emphasizes the model's capacity to handle a very large context window (746K tokens), which is necessary to process an entire codebase.

* **Visual Coding:** The use of color in the model output (green for the answer text, multi-color for code syntax) helps distinguish between explanatory text and executable code.

* **Layout:** The clean, side-by-side layout with a directional arrow makes the flow from input to output immediately understandable.
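The diagram does not show how the codebase was turned into context. As a rough sketch of one plausible approach, the snippet below concatenates files under path headers and estimates the token count with a four-characters-per-token heuristic. Both the `pack_files` helper and the heuristic are assumptions for illustration; a real count depends on the model's tokenizer.

```python
def pack_files(files):
    """Join {path: source} into one prompt string, each file under a path header."""
    parts = [f"# File: {path}\n{source}" for path, source in sorted(files.items())]
    return "\n\n".join(parts)


def estimate_tokens(text, chars_per_token=4):
    """Crude size estimate; NOT a real tokenizer."""
    return len(text) // chars_per_token


# Tiny in-memory stand-in for a codebase (hypothetical contents).
files = {
    "pkg/b.py": "def g():\n    return 2\n",
    "pkg/a.py": "def f():\n    return 1\n",
}
context = pack_files(files)
question = "In what file is f defined?"
prompt = f"{context}\n\nQuestion: {question}"

print(estimate_tokens(prompt), "tokens (estimated)")

# Figures quoted in the diagram: average tokens per file.
print(round(746_152 / 116))  # 6432
```

At the scale quoted in the diagram, the average works out to roughly 6,400 tokens per file, which gives a sense of how much source text sits behind the single question.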

### Interpretation

This diagram serves as a technical demonstration of a large language model's (LLM's) **retrieval and reasoning capability within a massive, structured context**. The implied claim is that the model is not merely searching for keywords: it interprets a conceptual question ("backward pass for autodifferentiation") and maps it to a specific location and implementation within a complex software project.

The token and file counts carry the argument. They suggest that the model's accuracy comes from reading the entire codebase within a single context window, rather than from pre-trained, generalized knowledge about JAX, which might be outdated or incomplete. The code snippet in the output serves as supporting evidence: a developer can check it against the actual file to confirm the answer.

The underlying message is about **trust and verification**. The model doesn't just give an answer; it provides the evidence (the code) that allows a developer to immediately confirm the finding. This showcases a shift from AI as a black-box answer engine to AI as a transparent research assistant that surfaces primary sources. The diagram is likely used in technical documentation or marketing to highlight advanced long-context processing features.
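That confirmation step can be partly automated. A minimal sketch, assuming the developer has a local copy of the cited file: compare the quoted snippet against the file after collapsing whitespace, so that differences in indentation or line wrapping do not cause false mismatches. The `snippet_in_file` helper is hypothetical, and the demo writes a small temporary file rather than reading a real JAX checkout.

```python
import tempfile
from pathlib import Path


def normalize(text):
    """Collapse all whitespace runs so indentation and line wraps are ignored."""
    return " ".join(text.split())


def snippet_in_file(snippet, path):
    """Return True if the snippet occurs in the file, ignoring whitespace layout."""
    source = Path(path).read_text(encoding="utf-8")
    return normalize(snippet) in normalize(source)


with tempfile.TemporaryDirectory() as tmp:
    cited = Path(tmp) / "ad.py"  # stand-in for the file the model cited
    cited.write_text(
        "def backward_pass(jaxpr, cotangents_in):\n"
        "    if not cotangents_in:\n"
        "        return []\n",
        encoding="utf-8",
    )
    quoted = "if not cotangents_in:\n  return []"
    found = snippet_in_file(quoted, cited)
    missing = snippet_in_file("def forward_pass(", cited)

print(found, missing)  # True False
```

A whitespace-insensitive substring check is deliberately simple; it confirms that the quoted text exists in the file but says nothing about whether the file is the right answer to the question.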