
## Diagram: JAX Autodifferentiation Implementation

### Overview

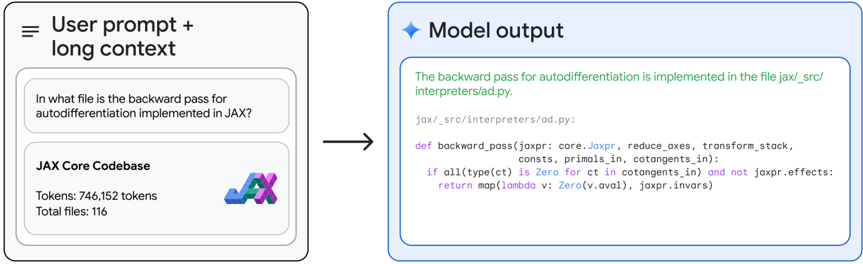

The image is a diagram of a model's response to a user prompt asking where the backward pass for automatic differentiation is implemented in JAX. The left side shows the user prompt and a description of the JAX Core Codebase supplied as context. The right side shows the model's output, a code snippet. An arrow connects the two sides to indicate the flow of information.

### Components/Axes

The diagram is divided into three main sections:

1. **User Prompt + Long Context (Left):** Contains the user's question and information about the JAX Core Codebase.

2. **Arrow (Center):** Represents the processing flow from the prompt to the output.

3. **Model Output (Right):** Displays the code snippet answering the user's question.

The JAX Core Codebase section includes:

* **Label:** "JAX Core Codebase"

* **Tokens:** 746,152 tokens

* **Variables:** 116

### Detailed Analysis or Content Details

The user prompt is: "In what file is the backward pass for autodifferentiation implemented in JAX?"

The model's output names the file `jax/_src/interpreters/ad.py` and quotes the opening lines of its `backward_pass` function:

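```python
def backward_pass(jaxpr: core.Jaxpr, reduce_axes, transform_stack,
                  consts, primals_in, cotangents_in):
  # Shortcut: if every incoming cotangent is a symbolic Zero and the jaxpr has
  # no effects, return a symbolic Zero (with the matching abstract value) for
  # each input variable instead of running the full backward pass.
  if all(type(ct) is Zero for ct in cotangents_in) and not jaxpr.effects:
    return map(lambda v: Zero(v.aval), jaxpr.invars)
  # ... (the rest of the function body is not shown in the figure)
```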
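To make the early-exit check in the snippet concrete, here is a minimal, self-contained sketch of a reverse-mode backward pass in plain Python. It is a hypothetical toy, not JAX's implementation: `Zero`, `Eqn`, `backward_pass_toy`, `EXAMPLE_EQNS`, and the three primitive rules are illustrative names. It also uses a different technique from JAX. It records primal values on a tape and applies per-primitive VJP rules, whereas JAX transposes a linear jaxpr. It mirrors two ideas from the snippet: a symbolic `Zero` cotangent, and returning zeros for every input when all incoming cotangents are zero. The toy has no effects, so the `jaxpr.effects` check is omitted.

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Union


@dataclass(frozen=True)
class Zero:
    """Hypothetical symbolic zero cotangent (a stand-in for JAX's Zero)."""


@dataclass(frozen=True)
class Eqn:
    """Hypothetical toy IR equation: outvar = prim(*invars)."""
    prim: str
    invars: Sequence[str]
    outvar: str


Cotangent = Union[float, Zero]

# Forward evaluation rule for each toy primitive.
FORWARD: Dict[str, Callable[..., float]] = {
    "sin": math.sin,
    "mul": lambda x, y: x * y,
    "add": lambda x, y: x + y,
}

# VJP rules: map (output cotangent, primal inputs) to one cotangent per input.
VJP: Dict[str, Callable[..., List[float]]] = {
    "sin": lambda ct, x: [ct * math.cos(x)],
    "mul": lambda ct, x, y: [ct * y, ct * x],
    "add": lambda ct, x, y: [ct, ct],
}


def backward_pass_toy(invars: Sequence[str], eqns: Sequence[Eqn],
                      outvars: Sequence[str], primals: Sequence[float],
                      cotangents_out: Sequence[Cotangent]) -> List[Cotangent]:
    # Early exit, mirroring the snippet: if every incoming cotangent is a
    # symbolic zero, every input cotangent is a symbolic zero as well.
    if all(type(ct) is Zero for ct in cotangents_out):
        return [Zero() for _ in invars]

    # Forward sweep: record the primal value of every variable.
    env: Dict[str, float] = dict(zip(invars, primals))
    for eqn in eqns:
        env[eqn.outvar] = FORWARD[eqn.prim](*(env[v] for v in eqn.invars))

    # Cotangent accumulator; symbolic zeros are simply not stored.
    ct_env: Dict[str, float] = {}

    def add_ct(var: str, ct: Cotangent) -> None:
        if type(ct) is not Zero:
            ct_env[var] = ct_env.get(var, 0.0) + ct

    for var, ct in zip(outvars, cotangents_out):
        add_ct(var, ct)

    # Backward sweep: visit equations in reverse order and push each output
    # cotangent through the primitive's VJP rule to its inputs.
    for eqn in reversed(eqns):
        if eqn.outvar not in ct_env:
            continue  # output cotangent is a symbolic zero; nothing to push
        ct = ct_env.pop(eqn.outvar)
        args = [env[v] for v in eqn.invars]
        for var, ct_in in zip(eqn.invars, VJP[eqn.prim](ct, *args)):
            add_ct(var, ct_in)

    # Inputs that never received a cotangent get a symbolic zero.
    return [ct_env.get(v, Zero()) for v in invars]


# f(x, y) = sin(x) * y + x, written as a list of toy equations.
EXAMPLE_EQNS = [
    Eqn("sin", ("x",), "a"),
    Eqn("mul", ("a", "y"), "b"),
    Eqn("add", ("b", "x"), "c"),
]

print(backward_pass_toy(["x", "y"], EXAMPLE_EQNS, ["c"], [0.0, 2.0], [1.0]))
# [3.0, 0.0]
```

Processing equations in reverse and accumulating cotangents per variable lets a variable used twice (here `x`) collect contributions from both uses. In this example that is 1.0 from the `add` and 2.0 from the `sin` path, so df/dx = cos(0) * 2 + 1 = 3.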

### Key Observations

The diagram shows the model identifying the relevant file (`jax/_src/interpreters/ad.py`) and quoting the code in that file that implements the backward pass for automatic differentiation. The JAX Core Codebase statistics (token and variable counts) indicate the size of the context the model was given.

### Interpretation

This diagram illustrates long-context retrieval over source code. The model reads the user's question, locates the relevant function within a large codebase supplied in context (746,152 tokens), and returns the file path together with the matching code. The arrow marks the mapping from prompt to answer; it does not depict the model's internal reasoning. The codebase statistics suggest that the model can process a large amount of code in a single context. The example demonstrates code understanding and retrieval: the output quotes existing code from the codebase rather than generating new code.