## Screenshot: JAX Autodifferentiation Implementation Query

### Overview

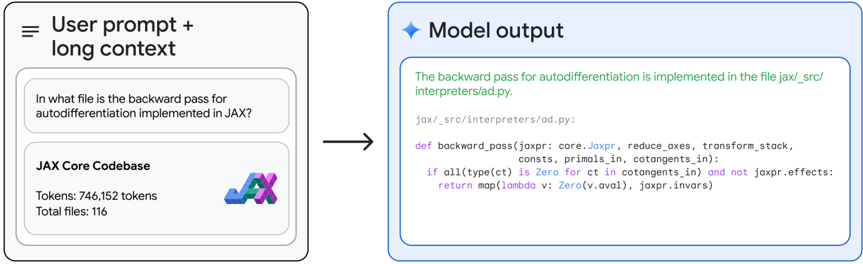

The image shows a technical Q&A interaction where a user asks about the implementation location of the backward pass for autodifferentiation in JAX. The model provides a specific file path and code snippet as the answer.

### Content Details

**User Prompt + Context:**

- Question: "In what file is the backward pass for autodifferentiation implemented in JAX?"

- Context: "JAX Core Codebase" with metadata:

  - Tokens: 746,152

  - Total files: 116

  - JAX logo (stylized "JAX" in blue, purple, and green)

**Model Output:**

- File path: `jax/_src/interpreters/ad.py`

- Code snippet:

```python
def backward_pass(jaxpr: core.Jaxpr, reduce_axes, transform_stack, consts, primals_in, cotangents_in):
  if all(type(ct) is Zero for ct in cotangents_in) and not jaxpr.effects:
    return map(lambda v: Zero(v.aval), jaxpr.invars)
```

- Syntax highlighting: Purple (keywords), Green (strings), Blue (types)

### Key Observations

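1. The model correctly identifies the implementation file, giving its full path within the repository

2. The code snippet shows the start of a function definition: an early-return check that yields a `Zero` cotangent for every input variable when all incoming cotangents are `Zero` and the jaxpr has no effects

3. The color-coded syntax highlighting follows a common Python editor scheme

4. The token count (746,152 across 116 files) indicates that the model was given a large codebase as context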
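The conditional in the snippet can be illustrated with a small, self-contained sketch. It is not JAX's implementation: `Zero`, `Var`, `ToyJaxpr`, and `backward_pass_sketch` are hypothetical stand-ins invented here, and only the early-return check visible in the screenshot is reproduced. The rest of the backward pass is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Zero:
    """Hypothetical stand-in for a symbolic zero cotangent."""
    aval: str  # description of the abstract value (shape/dtype) it stands for


@dataclass
class Var:
    """Hypothetical stand-in for a jaxpr input variable."""
    aval: str


@dataclass
class ToyJaxpr:
    """Hypothetical stand-in for a jaxpr: input variables plus side effects."""
    invars: list
    effects: set = field(default_factory=set)


def backward_pass_sketch(jaxpr, cotangents_in):
    # Early return mirroring the screenshot: if every incoming cotangent is a
    # symbolic zero and the program has no effects, there is nothing to
    # propagate, so each input variable receives a symbolic zero.
    if all(type(ct) is Zero for ct in cotangents_in) and not jaxpr.effects:
        return [Zero(v.aval) for v in jaxpr.invars]
    # The general case is out of scope for this illustration.
    raise NotImplementedError("only the early-return path is sketched here")


toy = ToyJaxpr(invars=[Var("f32[3]"), Var("f32[]")])
result = backward_pass_sketch(toy, [Zero("f32[3]")])
```

Here `result` holds one `Zero` per input variable, each carrying that variable's `aval`. The sketch returns a list rather than the `map` object seen in the screenshot purely so the result is easy to inspect.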

### Interpretation

The interaction demonstrates:

1. **Technical Precision**: The model provides an exact file path rather than a general directory, indicating that it located the function within the supplied codebase

2. **Code Context Awareness**: The returned snippet includes the function signature, with its `core.Jaxpr` type annotation, and the opening early-return check, tying the answer to JAX's reverse-mode autodiff implementation

3. **Efficiency**: The answer directly addresses the query without unnecessary elaboration

4. **Syntax Accuracy**: The snippet is consistent with JAX's coding style, using a `Jaxpr` argument and `type(ct) is Zero` checks on the cotangents

This exchange highlights the model's capability to navigate large codebases and provide actionable technical answers with precise code references.