# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Document Header & Metadata

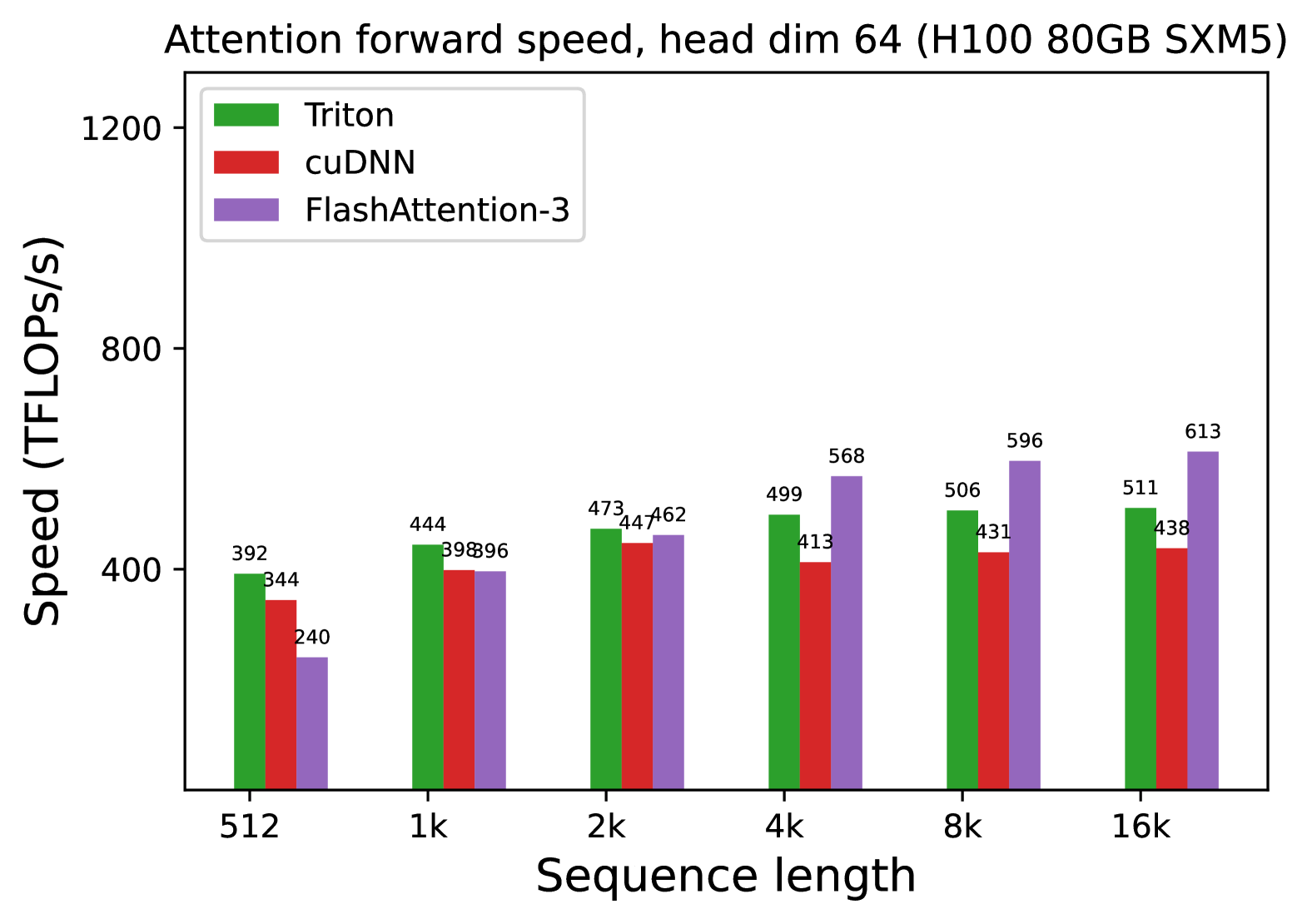

* **Title:** Attention forward speed, head dim 64 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Parameter Specification:** Head dimension is fixed at 64.

## 2. Chart Structure

* **Type:** Grouped Bar Chart.

* **X-Axis (Independent Variable):** Sequence length.

  * **Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Y-Axis (Dependent Variable):** Speed (TFLOPs/s).

  * **Scale:** Linear, ranging from 0 to 1200, with major ticks at 400, 800, and 1200.

* **Legend:**

  * **Green Bar:** Triton

  * **Red Bar:** cuDNN

  * **Purple Bar:** FlashAttention-3

## 3. Data Extraction & Trend Analysis

### Trend Verification

* **Triton (Green):** Rises at every step, from 392 TFLOPs/s at 512 to 511 TFLOPs/s at 16k. Most of the gain occurs below 4k; from 4k onward it plateaus between roughly 500 and 511 TFLOPs/s.

* **cuDNN (Red):** Rises from 344 TFLOPs/s at 512 to a peak of 447 TFLOPs/s at 2k, drops to 413 TFLOPs/s at 4k, then partially recovers to 431 and 438 TFLOPs/s at 8k and 16k.

* **FlashAttention-3 (Purple):** Shows the strongest upward trend. It is the slowest implementation at 512 (240 TFLOPs/s) and at 1k, overtakes cuDNN at 2k, and is the fastest implementation from 4k onwards.
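
The trend statements above can be checked mechanically. The following is a minimal sketch, assuming the bar-label values are transcribed correctly from the chart; the names `SEQ_LENS`, `SPEEDS`, `is_monotonic_increasing`, and `peak_position` are illustrative and not part of any benchmark tooling.

```python
# Bar-label values (TFLOPs/s) transcribed from the chart, ordered by
# sequence length. All names here are illustrative assumptions.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Triton": [392, 444, 473, 499, 506, 511],
    "cuDNN": [344, 398, 447, 413, 431, 438],
    "FlashAttention-3": [240, 396, 462, 568, 596, 613],
}


def is_monotonic_increasing(values):
    """Return True if every value is strictly greater than the previous one."""
    return all(b > a for a, b in zip(values, values[1:]))


def peak_position(values):
    """Return the sequence-length label at which the series is highest."""
    return SEQ_LENS[values.index(max(values))]


for name, values in SPEEDS.items():
    print(f"{name}: monotonic={is_monotonic_increasing(values)}, "
          f"peak at {peak_position(values)}")
```

Under these values, Triton and FlashAttention-3 increase at every step and peak at 16k, while cuDNN is not monotonic and peaks at 2k.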

### Data Table (Reconstructed)

Values are in TFLOPs/s and are extracted from the labels positioned above each individual bar.

| Sequence Length | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :--- | :--- | :--- |
| **512** | 392 | 344 | 240 |
| **1k** | 444 | 398 | 396 |
| **2k** | 473 | 447 | 462 |
| **4k** | 499 | 413 | 568 |
| **8k** | 506 | 431 | 596 |
| **16k** | 511 | 438 | 613 |
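
As a rough illustration of how the table can be queried, the sketch below finds the fastest implementation at each sequence length and the first length at which a given implementation leads. The function names `leaders` and `first_lead` are hypothetical helpers introduced only for this example.

```python
# Table values in TFLOPs/s, ordered by sequence length.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Triton": [392, 444, 473, 499, 506, 511],
    "cuDNN": [344, 398, 447, 413, 431, 438],
    "FlashAttention-3": [240, 396, 462, 568, 596, 613],
}


def leaders(speeds, seq_lens):
    """Map each sequence-length label to the fastest implementation."""
    result = {}
    for i, label in enumerate(seq_lens):
        result[label] = max(speeds, key=lambda name: speeds[name][i])
    return result


def first_lead(speeds, seq_lens, name):
    """Return the first sequence length at which `name` is fastest, or None."""
    for label, leader in leaders(speeds, seq_lens).items():
        if leader == name:
            return label
    return None


for label, leader in leaders(SPEEDS, SEQ_LENS).items():
    print(f"{label}: {leader}")
print("FlashAttention-3 first leads at:",
      first_lead(SPEEDS, SEQ_LENS, "FlashAttention-3"))
```

With the values above, Triton leads at 512, 1k, and 2k, and FlashAttention-3 leads from 4k onward; cuDNN never leads.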

## 4. Component Analysis

* **Header Region:** Contains the descriptive title defining the operation (Attention forward), the specific architectural constraint (head dim 64), and the hardware used (H100).

* **Main Chart Region:** Contains the grouped bars. Note that at sequence length **1k**, Triton is the clear leader, while cuDNN and FlashAttention-3 are nearly identical (398 vs 396).

* **Performance Crossover:** The lead changes hands between 2k and 4k. At 2k, Triton is still the fastest (473, against 462 for FlashAttention-3), but at 4k FlashAttention-3 (568) is about 14% faster than Triton (499) and about 38% faster than cuDNN (413).

* **Scaling Efficiency:** FlashAttention-3 scales best with sequence length, reaching a peak of 613 TFLOPs/s at 16k, roughly 2.55x its speed at 512 (240 TFLOPs/s). Triton and cuDNN have much flatter curves, each gaining only about 1.3x over the same range.
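
The ratios quoted in this section follow directly from the bar labels. The sketch below reproduces the arithmetic under the assumption that those labels are transcribed correctly; `scaling_ratio` and `relative_lead` are illustrative helper names.

```python
# Bar-label values in TFLOPs/s at sequence lengths 512, 1k, 2k, 4k, 8k, 16k.
SPEEDS = {
    "Triton": [392, 444, 473, 499, 506, 511],
    "cuDNN": [344, 398, 447, 413, 431, 438],
    "FlashAttention-3": [240, 396, 462, 568, 596, 613],
}
IDX_4K = 3  # position of the 4k column in each list


def scaling_ratio(values):
    """Speed at the longest sequence divided by speed at the shortest."""
    return values[-1] / values[0]


def relative_lead(fast, slow):
    """Fractional speed advantage of `fast` over `slow` (0.10 means 10%)."""
    return fast / slow - 1.0


for name, values in SPEEDS.items():
    print(f"{name}: 16k / 512 = {scaling_ratio(values):.2f}x")

fa3_4k = SPEEDS["FlashAttention-3"][IDX_4K]
for name in ("Triton", "cuDNN"):
    lead = relative_lead(fa3_4k, SPEEDS[name][IDX_4K])
    print(f"FlashAttention-3 vs {name} at 4k: +{lead:.1%}")
```

Note that these ratios compare throughput in TFLOPs/s, not wall-clock time, so they describe how well each implementation utilizes the hardware as the sequence grows rather than how long a given call takes.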