TECHNICAL ASSET FINGERPRINT

0c857f9779c6cdf10af4bf25

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: LLM Memory Management

### Overview

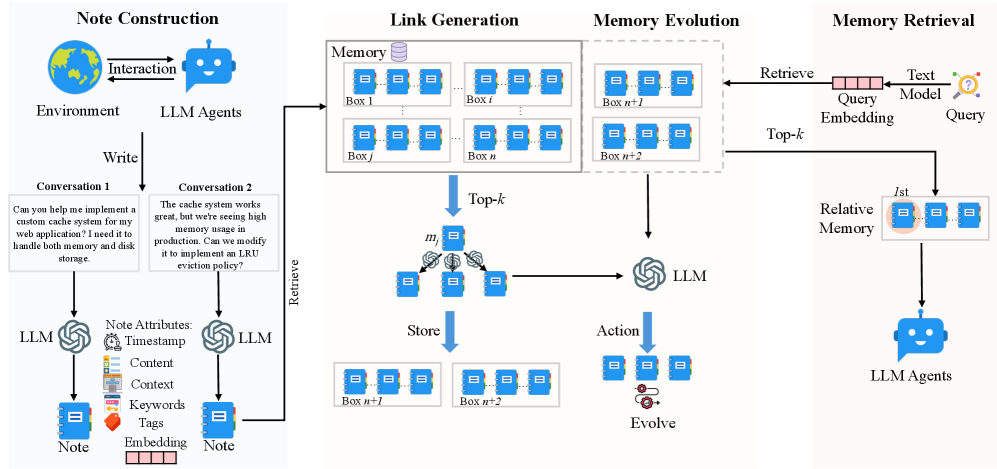

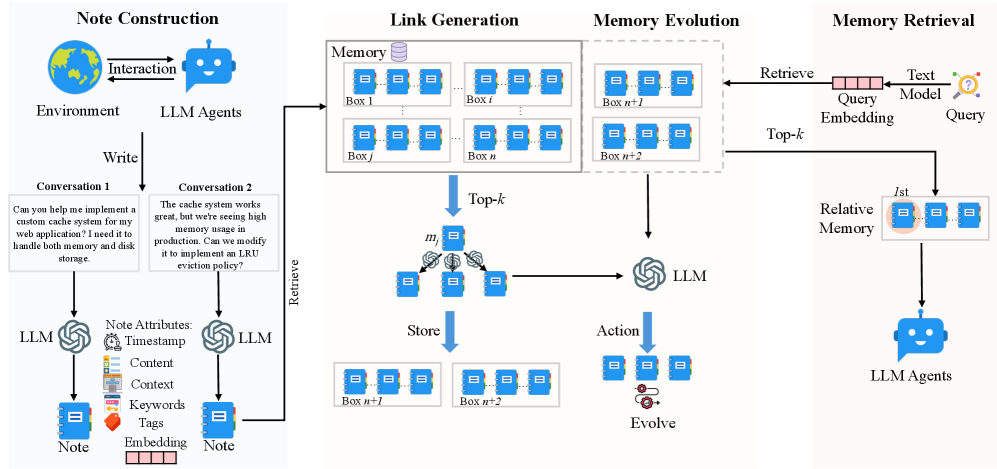

The image is a diagram illustrating the process of memory management using Large Language Models (LLMs). It is divided into four main sections: Note Construction, Link Generation, Memory Evolution, and Memory Retrieval. The diagram shows how user interactions are converted into notes, linked in memory, evolved over time, and retrieved when needed.

### Components/Axes

* **Titles:**

* Note Construction (Top-Left)

* Link Generation (Top-Center-Left)

* Memory Evolution (Top-Center-Right)

* Memory Retrieval (Top-Right)

* **Note Construction:**

* Environment: Depicted as a globe.

* LLM Agents: Depicted as a blue chatbot icon.

* Interaction: A bidirectional arrow between the Environment and LLM Agents.

* Write: A downward arrow indicating the writing process.

* Conversation 1: A text box containing the question, "Can you help me implement a custom cache system for my web application? I need it to handle both memory and disk storage."

* Conversation 2: A text box containing the statement, "The cache system works great, but we're seeing high memory usage in production. Can we modify it to implement an LRU eviction policy?"

* LLM: Represented by the LLM logo.

* Note Attributes:

* Timestamp: Clock icon.

* Content: List icon.

* Context: Document icon.

* Keywords: Tag icon.

* Tags: Orange tag icon.

* Embedding: A series of small rectangles.

* Note: Represented as a blue notebook icon.

* **Link Generation:**

* Memory: Represented as a database icon.

* Boxes: Labeled as Box 1, Box i, Box j, Box n. Each box contains three blue notebook icons.

* Top-k: A downward arrow indicating the top-k selection process.

* m: A node with three blue notebook icons, connected to other nodes via lines and small circular icons.

* Store: A downward arrow indicating the storing process.

* Boxes: Labeled as Box n+1, Box n+2. Each box contains three blue notebook icons.

* **Memory Evolution:**

* Boxes: Labeled as Box n+1, Box n+2. Each box contains three blue notebook icons.

* LLM: Represented by the LLM logo.

* Action: A downward arrow indicating the action process.

* Evolve: A node with three blue notebook icons and a gear icon.

* **Memory Retrieval:**

* Retrieve: An arrow pointing from the "Memory Evolution" section to a "Query Embedding" box.

* Query Embedding: A horizontal box labeled "Query Embedding" with "Text Model" above it.

* Query: A question mark icon.

* Top-k: An arrow pointing from the "Query Embedding" box to the "Relative Memory" box.

* Relative Memory: A box containing three blue notebook icons, with the leftmost icon highlighted with a pink circle and labeled "1st".

* LLM Agents: Depicted as a blue chatbot icon.

### Detailed Analysis or Content Details

* **Note Construction:** The process starts with an interaction between the environment and LLM agents. The agents write conversations, which are then processed by LLMs to create notes. These notes have attributes such as timestamp, content, context, keywords, tags, and embeddings.

* **Link Generation:** The notes are stored in memory boxes (Box 1 to Box n). A top-k selection process retrieves relevant notes, which are then stored in new memory boxes (Box n+1 and Box n+2).

* **Memory Evolution:** The notes in memory boxes (Box n+1 and Box n+2) are processed by an LLM, which takes action and evolves the notes.

* **Memory Retrieval:** A query is embedded using a text model. A top-k selection process retrieves relative memory, and the first (1st) item is highlighted. The retrieved information is then used by LLM agents.
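
The note attributes listed above can be pictured as a small record type. The following is a minimal sketch, not the diagram's actual implementation: `Note`, `build_note`, `toy_embedding`, and `EMBED_DIM` are hypothetical names, the hashed bag-of-words vector merely stands in for a real embedding model, and the context, keywords, and tags that the diagram attributes to an LLM are supplied by the caller so the example runs offline.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
import hashlib
import re

EMBED_DIM = 16  # hypothetical size, chosen only for this sketch


def toy_embedding(text: str, dim: int = EMBED_DIM) -> list[float]:
    """Stand-in for a real text-embedding model: hashed bag-of-words counts."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    return vec


@dataclass
class Note:
    """One memory note carrying the six attributes named in the diagram."""
    content: str
    context: str
    keywords: list[str]
    tags: list[str]
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    embedding: list[float] = field(default_factory=list)


def build_note(conversation: str, context: str,
               keywords: list[str], tags: list[str]) -> Note:
    # Assumption: the embedding covers all textual attributes, not just content.
    text = " ".join([conversation, context, *keywords, *tags])
    return Note(content=conversation, context=context, keywords=keywords,
                tags=tags, embedding=toy_embedding(text))


note = build_note(
    "Can you help me implement a custom cache system for my web application? "
    "I need it to handle both memory and disk storage.",
    context="User asks for a cache with memory and disk tiers",
    keywords=["cache", "memory", "disk"],
    tags=["caching", "web"],
)
```

In a real system the `context`, `keywords`, and `tags` arguments would come from an LLM call and `toy_embedding` would be replaced by an embedding model.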

### Key Observations

* The diagram illustrates a cyclical process of note creation, storage, evolution, and retrieval.

* LLMs play a central role in processing and evolving the notes.

* The top-k selection process is used in both link generation and memory retrieval.

* The diagram highlights the importance of note attributes in the memory management process.

### Interpretation

The diagram presents a high-level overview of how LLMs can be used for memory management. The process involves converting user interactions into structured notes, linking these notes in memory, evolving them over time, and retrieving them when needed. The use of top-k selection suggests a mechanism for prioritizing relevant information. The diagram emphasizes the importance of context and attributes in managing and retrieving information effectively. The cyclical nature of the process indicates a continuous learning and adaptation mechanism.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: LLM Memory System Architecture

### Overview

The image depicts a diagram illustrating the architecture of a Large Language Model (LLM) memory system. It outlines the process from note construction through memory evolution and retrieval, involving interactions between LLM agents, an environment, and a memory store organized into "boxes". The diagram highlights the flow of information and the key components involved in managing and utilizing memory within the LLM system.

### Components/Axes

The diagram is divided into four main sections: "Note Construction", "Link Generation", "Memory Evolution", and "Memory Retrieval". Key components include:

* **LLM Agents:** Represented by blue robot icons.

* **Environment:** Represented by a globe icon.

* **Memory:** A collection of "boxes" labeled Box 1, Box i, Box j, Box n, Box n+1, Box n+2. Each box contains multiple smaller rectangular elements representing individual notes.

* **Note Attributes:** Timestamp, Content, Context, Keywords, Tags, Embedding.

* **Top-k:** A selection mechanism for retrieving relevant memory.

* **Query Embedding:** The process of converting a query into a vector representation.

* **Text Model:** Used in the query process.

* **Relative Memory:** The final retrieved memory.

* **Arrows:** Indicate the flow of information and interactions between components.

* **Conversation 1 & 2:** Example text inputs.

### Detailed Analysis or Content Details

**Note Construction:**

* An "Interaction" between the "Environment" and "LLM Agents" initiates the process.

* A "Write" action from the LLM agent creates a "Note".

* The "Note" has attributes: Timestamp, Content, Context, Keywords, Tags, and Embedding.

* **Conversation 1:** "Can you help me implement a custom cache system for my web application? I need it to handle both memory and disk storage."

* **Conversation 2:** "The cache system works great, but we're seeing high memory usage in production. Can we modify it to implement an LRU eviction policy?"

**Link Generation:**

* Notes are stored in "Memory" boxes.

* Boxes are labeled sequentially: Box 1, Box i, Box j, Box n, Box n+1, Box n+2.

* The diagram shows a series of boxes, suggesting a dynamic memory structure.

**Memory Evolution:**

* A "Retrieve" action from an LLM agent pulls information from the "Memory" boxes.

* "Top-k" selection is applied to the retrieved information.

* The selected information is passed to an "LLM" for processing.

* An "Action" is taken, leading to an "Evolve" step.

* The evolved memory is then "Stored" back into boxes labeled Box n+1 and Box n+2.

**Memory Retrieval:**

* A "Query" is processed through a "Query Embedding" and a "Text Model".

* The resulting embedding is used to "Retrieve" information from the "Relative Memory".

* The retrieved information is then presented to "LLM Agents".
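
The retrieval steps above amount to ranking stored note embeddings against a query embedding and keeping the best k. The sketch below assumes cosine similarity as the ranking score, which the diagram does not specify; `cosine`, `retrieve_top_k`, the note ids, and the three-dimensional vectors are all made up for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_top_k(query_embedding, memory, k=2):
    """memory maps note id -> embedding. Returns [(note_id, score)], best first."""
    scored = [(note_id, cosine(query_embedding, emb))
              for note_id, emb in memory.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings; a real system would get these from a text model.
memory = {
    "cache-design": [1.0, 0.0, 0.2],
    "lru-eviction": [0.9, 0.1, 0.8],
    "login-bug": [0.0, 1.0, 0.0],
}
query_embedding = [0.8, 0.0, 0.9]

results = retrieve_top_k(query_embedding, memory, k=2)
for note_id, score in results:
    print(f"{note_id}: {score:.3f}")
```

With these toy vectors the note about LRU eviction ranks first and the unrelated login note is dropped, which mirrors the "1st" highlight on the retrieved memory.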

### Key Observations

* The diagram emphasizes a cyclical process of note creation, storage, retrieval, and evolution.

* The "Top-k" mechanism suggests a focus on retrieving the most relevant information from memory.

* The use of "boxes" to represent memory suggests a segmented or partitioned memory structure.

* The diagram highlights the role of LLM agents in both creating and utilizing memory.

* The two example conversations demonstrate a multi-turn interaction and the evolution of a request.

### Interpretation

The diagram illustrates a sophisticated memory management system for LLMs. It demonstrates how LLMs can not only store information (notes) but also actively retrieve, process, and evolve that information over time. The system appears designed to handle complex, multi-turn conversations and adapt to changing user needs. The "Top-k" mechanism and the "Evolve" step suggest a focus on efficiency and relevance. The segmented memory structure (boxes) could be a way to organize information based on topics, contexts, or other criteria. The overall architecture suggests a system capable of learning and improving its performance over time by refining its memory and retrieval strategies. The diagram is a conceptual representation of a system, and doesn't provide specific data or numerical values, but rather a high-level overview of the process. The use of LLM agents throughout the process indicates a distributed and intelligent approach to memory management.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: LLM-Based Memory System for Agents

### Overview

The image is a technical system architecture diagram illustrating a four-stage pipeline for an LLM (Large Language Model) agent memory system. The system processes interactions from an environment, constructs structured "Notes," organizes them into a memory store, evolves the memory over time, and retrieves relevant information to inform agent actions. The diagram flows from left to right, depicting a cyclical process of memory creation, storage, refinement, and usage.

### Components/Axes

The diagram is divided into four primary vertical sections, each with a header:

1. **Note Construction** (Leftmost section)

2. **Link Generation** (Center-left)

3. **Memory Evolution** (Center-right)

4. **Memory Retrieval** (Rightmost section)

**Key Visual Components & Labels:**

* **Icons:** Globe (Environment), Robot (LLM Agents), Database cylinder (Memory), Document/Note icons, LLM symbol (spiral/gear), Text Model icon, Query icon.

* **Textual Labels & Flow Arrows:**

* `Environment`, `LLM Agents`, `Interaction`, `Write`, `Conversation 1`, `Conversation 2`, `LLM`, `Note`, `Note Attributes:`, `Timestamp`, `Content`, `Context`, `Keywords`, `Tags`, `Embedding`.

* `Memory`, `Box 1`, `Box i`, `Box j`, `Box n`, `Top-k`, `Retrieve`, `Store`, `m_j`.

* `Box n+1`, `Box n+2`, `LLM`, `Action`, `Evolve`.

* `Retrieve`, `Query`, `Text Model`, `Query Embedding`, `Top-k`, `1st`, `Relative Memory`, `LLM Agents`.

### Detailed Analysis

**1. Note Construction (Left Section):**

* **Process:** An `Environment` (globe icon) and `LLM Agents` (robot icon) engage in `Interaction`. This interaction is used to `Write` conversations.

* **Example Conversations:** Two text boxes show example dialogues:

* `Conversation 1`: "Can you help me implement a custom cache system for my web application? I need it to handle both memory and disk storage."

* `Conversation 2`: "The cache system works great, but we're seeing high memory usage in production. Can we modify it to implement an LRU eviction policy?"

* **Note Creation:** Each conversation is processed by an `LLM` (spiral icon) to generate a structured `Note`.

* **Note Attributes:** A list specifies the components of a Note: `Timestamp`, `Content`, `Context`, `Keywords`, `Tags`, and an `Embedding` (represented by a pink bar graph).

**2. Link Generation (Center-Left Section):**

* **Memory Store:** A central `Memory` database contains multiple storage units labeled as `Box 1`, `Box i`, `Box j`, through `Box n`. Each Box contains multiple document icons.

* **Retrieval & Processing:** A `Retrieve` arrow points from the `Memory` to a selection process labeled `Top-k`. This selects a subset of notes (represented by a cluster of document icons labeled `m_j`).

* **LLM Processing & Storage:** The selected notes (`m_j`) are processed by an `LLM`. The output is then directed via a `Store` arrow into new memory boxes: `Box n+1` and `Box n+2`.
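
One way to read this stage is as nearest-neighbour linking: a new note is compared with stored notes, the top-k candidates are kept, and a decision step confirms which of them become links. The sketch below works under that reading and is not the diagram's confirmed algorithm. `generate_links`, the note ids, and the vectors are hypothetical, and the `should_link` threshold is a stand-in for the LLM decision shown in the figure.

```python
import math
from collections import defaultdict


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def generate_links(new_id, new_emb, embeddings, links, k=2,
                   should_link=lambda score: score >= 0.5):
    """Link a new note to its top-k most similar stored notes, then store it.

    should_link stands in for the LLM's judgement; here it is a plain threshold.
    Returns the ids of the top-k candidates that were considered.
    """
    candidates = sorted(
        ((cosine(new_emb, emb), note_id) for note_id, emb in embeddings.items()),
        reverse=True,
    )[:k]
    for score, note_id in candidates:
        if should_link(score):
            links[new_id].add(note_id)   # links are stored in both directions
            links[note_id].add(new_id)
    embeddings[new_id] = new_emb         # the "Store" step
    return [note_id for _, note_id in candidates]


embeddings = {
    "cache-design": [1.0, 0.0, 0.2],
    "login-bug": [0.0, 1.0, 0.0],
    "disk-io": [0.7, 0.1, 0.1],
}
links = defaultdict(set)

considered = generate_links("lru-eviction", [0.9, 0.1, 0.8], embeddings, links)
```

Here the follow-up note about LRU eviction is linked to the earlier cache-design and disk-io notes, while the unrelated login note falls outside the top-k.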

**3. Memory Evolution (Center-Right Section):**

* **Input:** Takes the newly created `Box n+1` and `Box n+2` from the Link Generation stage.

* **Process:** These boxes are fed into an `LLM`.

* **Output:** The LLM produces an `Action` (document icons) which leads to an `Evolve` step (represented by a circular arrow icon), suggesting an update or refinement process for the memory structure.
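
The Action and Evolve steps can be sketched as a loop in which a decision function inspects a new note alongside each related note and returns an update to apply. Everything below is an illustrative assumption: the diagram does not say which fields are rewritten, `decide_action` is a rule-based stand-in for the LLM, and the trimmed `Note` class keeps only the fields this example touches.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    content: str
    context: str
    tags: set[str] = field(default_factory=set)


def decide_action(new_note, neighbor):
    """Stand-in for the LLM: evolve a neighbor only if it shares a tag."""
    if not (new_note.tags & neighbor.tags):
        return {"evolve": False}
    return {
        "evolve": True,
        "add_tags": new_note.tags - neighbor.tags,
        "context": f"{neighbor.context}; follow-up: {new_note.context}",
    }


def evolve(new_note, neighbors, decide=decide_action):
    """Apply the decided action to each neighbor; return the notes that changed."""
    changed = []
    for neighbor in neighbors:
        action = decide(new_note, neighbor)
        if action["evolve"]:
            neighbor.tags |= action["add_tags"]
            neighbor.context = action["context"]
            changed.append(neighbor)
    return changed


old = Note("Can you help me implement a custom cache system?",
           "initial cache design", {"cache", "storage"})
other = Note("Login page returns an error", "auth bug", {"auth"})
new = Note("Can we modify it to implement an LRU eviction policy?",
           "high memory usage, LRU eviction", {"cache", "lru"})

changed = evolve(new, [old, other])
```

After the call, the earlier cache note carries the `lru` tag and an extended context, while the unrelated note is left untouched.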

**4. Memory Retrieval (Right Section):**

* **Query Input:** A `Query` (question mark icon) is processed by a `Text Model` to create a `Query Embedding` (pink bar graph).

* **Retrieval:** This embedding is used to `Retrieve` information from the main `Memory` store (arrow points left to the Link Generation section's Memory).

* **Ranking & Output:** The retrieval yields a `Top-k` result, with the `1st` ranked result highlighted as `Relative Memory` (a set of three document icons).

* **Action:** This `Relative Memory` is then passed to the `LLM Agents` (robot icon) to inform their next action.

### Key Observations

* **Cyclical Flow:** The diagram depicts a closed-loop system where agent interactions generate memory, which is stored, evolved, and then retrieved to guide future agent actions, creating a continuous learning cycle.

* **LLM as Core Processor:** The LLM symbol appears in three distinct stages (Note Construction, Link Generation/Memory Evolution, and implicitly in Retrieval via the Text Model), highlighting its central role in structuring, processing, and utilizing unstructured conversational data.

* **Hierarchical Memory:** Memory is not a flat list but is organized into `Boxes`, suggesting a structured or clustered organization. The `Top-k` mechanism is used both for selecting notes to process and for retrieving relevant memories.

* **From Unstructured to Structured:** The system's primary function is to transform raw, unstructured `Conversation` text into structured, attribute-rich `Notes` with embeddings, making them machine-searchable and actionable.

* **Evolution Mechanism:** The dedicated `Memory Evolution` stage implies the system doesn't just store data statically but has a mechanism to update, consolidate, or refine its memory structure over time based on new information.

### Interpretation

This diagram outlines a sophisticated cognitive architecture for AI agents, addressing the critical challenge of long-term memory and experience accumulation. The system's design suggests several key principles:

1. **Experience as Data:** Every agent interaction is treated as a valuable data point to be captured, not just a transient event. This enables learning from past successes and failures.

2. **Structured Abstraction:** The conversion of conversations into "Notes" with metadata (keywords, tags, context) and embeddings is a form of abstraction. It allows the system to move beyond keyword matching to semantic understanding and relationship mapping between different experiences.

3. **Dynamic Knowledge Base:** The `Link Generation` and `Memory Evolution` stages indicate this is not a static log. The system actively processes its memories to form new links (`m_j` processed into `Box n+1/n+2`) and evolve its understanding, mimicking how human memory consolidates and reorganizes information.

4. **Context-Aware Retrieval:** The retrieval process uses a query embedding to find the `Relative Memory`, implying semantic search. This ensures agents recall not just exact matches but contextually relevant past experiences, which is crucial for complex, ongoing tasks.

5. **Scalability & Efficiency:** The use of `Top-k` selection at multiple stages is a practical design choice for scalability, allowing the system to work with a large memory store by focusing processing and retrieval on the most relevant subsets.
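
The scalability point can be made concrete: selecting the k best candidates does not require sorting the whole memory. As a small sketch, Python's `heapq.nlargest` keeps only k items at a time, which costs roughly O(n log k) instead of O(n log n) for a full sort. The note ids and similarity scores below are invented for illustration.

```python
import heapq


def top_k(scores, k):
    """scores: iterable of (note_id, similarity). Returns the k best, best first."""
    return heapq.nlargest(k, scores, key=lambda pair: pair[1])


# Hypothetical similarity scores for notes spread across several boxes.
scores = [
    ("box1-note0", 0.12),
    ("box1-note1", 0.87),
    ("boxi-note0", 0.45),
    ("boxj-note2", 0.91),
    ("boxn-note1", 0.30),
]

best = top_k(scores, 2)
print(best)
```

Because `scores` can be any iterable, the same function works when similarities are produced lazily while scanning a large store.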

**Underlying Purpose:** The architecture aims to create agents that are not stateless but have a persistent, evolving "experience memory." This would allow for more coherent, personalized, and improved long-term performance in tasks like technical support (as hinted by the cache system example), interactive storytelling, or complex project management, where context from distant past interactions is vital. The explicit "Evolve" step is particularly notable, suggesting the system is designed to improve its own memory organization over time, a step towards more autonomous and adaptive AI.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: LLM Agent Memory System

## Diagram Overview

The image depicts a four-stage process for an LLM (Large Language Model) agent memory system. The flow involves **Note Construction**, **Link Generation**, **Memory Evolution**, and **Memory Retrieval**. Below is a detailed breakdown of components, labels, and workflow.

---

## 1. **Note Construction**

### Components:

- **Environment**: Interacts with LLM Agents (represented by a robot icon).

- **LLM Agents**: Generate responses based on environmental input.

- **Conversation 1**:

- *"Can you help me implement a custom cache system for my web application? I need it to handle both memory and disk storage."*

- **Conversation 2**:

- *"The cache system works great, but we’re seeing high memory usage in production. Can we modify it to implement an LRU eviction policy?"*

- **Note Attributes**:

- **Timestamp**

- **Content**

- **Context**

- **Keywords**

- **Tags**

- **Embedding**

### Workflow:

1. **Environment** → **LLM Agents** → **Write** (generate responses to conversations).

2. **LLM Agents** → **Note Construction** (extract attributes from conversations).

3. **Note** → **LLM** (embedding generation).

---

## 2. **Link Generation**

### Components:

- **Memory**: A database of memory boxes (e.g., `Box 1`, `Box i`, `Box j`, `Box n+1`, `Box n+2`).

- **LLM**: Processes memory retrieval and storage.

- **Top-k**: Selected memory boxes for further processing.

### Workflow:

1. **Retrieve** memory boxes (`Box 1`, `Box i`, `Box j`, etc.).

2. **LLM** → **Top-k** (select relevant memories).

3. **Store** evolved memory boxes (`Box n+1`, `Box n+2`).

---

## 3. **Memory Evolution**

### Components:

- **Top-k**: Selected memory boxes from Link Generation.

- **LLM**: Evolves memory boxes.

- **Action**: Represents the evolution process (e.g., modifying memory structure).

### Workflow:

1. **Top-k** → **LLM** → **Evolve** (modify memory boxes).

2. **Evolved Memory** → **Store** (update database with new versions).

---

## 4. **Memory Retrieval**

### Components:

- **Retrieve**: Query memory database.

- **Text Model**: Converts queries into embeddings.

- **Query**: User input for retrieval (e.g., *"How do I implement an LRU eviction policy?"*).

- **Query Embedding**: Vector representation of the query.

- **Relative Memory**: Memory boxes most relevant to the query.

### Workflow:

1. **Retrieve** → **Text Model** → **Query Embedding**.

2. **Query Embedding** → **Relative Memory** (match with stored memories).

3. **LLM Agents** → **Action** (generate response based on retrieved memory).

---

## Key Observations

- **Circular Flow**: Memory boxes are continuously retrieved, evolved, and stored, creating a feedback loop.

- **Embeddings**: Used in both Note Construction (for notes) and Memory Retrieval (for queries).

- **LLM Role**: Central to all stages, acting as a processor for conversations, memory evolution, and retrieval.

---

## Diagram Structure

- **Spatial Grounding**:

- **Note Construction**: Leftmost section.

- **Link Generation**: Middle-left.

- **Memory Evolution**: Middle-right.

- **Memory Retrieval**: Rightmost section.

- **Legend**: No explicit legend present; colors (blue boxes, gray arrows) are consistent across stages.

---

## Notes

- No numerical data or charts present; the diagram focuses on process flow and component interactions.

- All text is in English; no additional languages detected.
