
## Diagram: LLM Memory System Architecture

### Overview

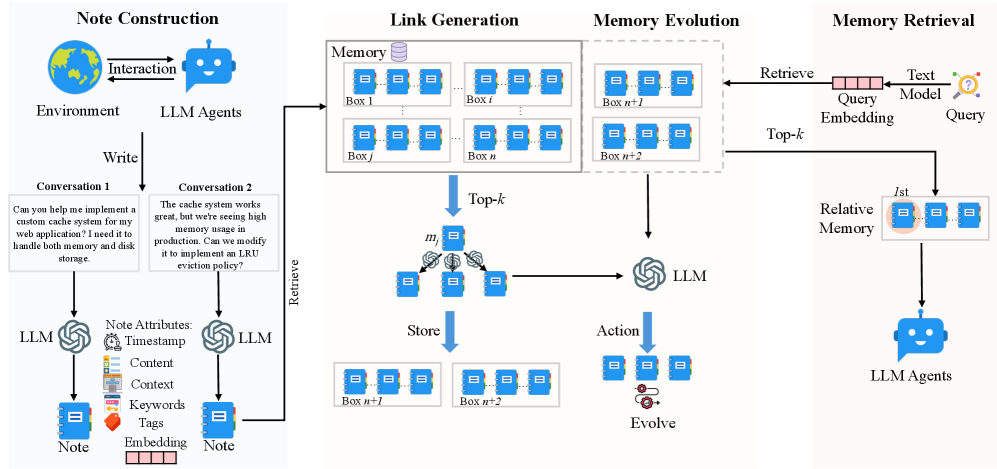

The image depicts a diagram illustrating the architecture of a Large Language Model (LLM) memory system. It traces the process from note construction through link generation, memory evolution, and retrieval, involving interactions between LLM agents, an environment, and a memory store organized into "boxes". The diagram highlights the flow of information and the key components involved in managing and using memory within the LLM system.

### Components

The diagram is divided into four main sections: "Note Construction", "Link Generation", "Memory Evolution", and "Memory Retrieval". Key components include:

* **LLM Agents:** Represented by blue robot icons.

* **Environment:** Represented by a globe icon.

* **Memory:** A collection of "boxes" labeled Box 1, Box i, Box j, Box n, Box n+1, and Box n+2. Each box contains multiple smaller rectangular elements representing individual notes.

* **Note Attributes:** Timestamp, Content, Context, Keywords, Tags, Embedding.

* **Top-k:** A selection mechanism for retrieving relevant memory.

* **Query Embedding:** The process of converting a query into a vector representation.

* **Text Model:** Used in the query process.

* **Relative Memory:** The final retrieved memory.

* **Arrows:** Indicate the flow of information and interactions between components.

* **Conversation 1 & 2:** Example text inputs.

### Detailed Analysis or Content Details

**Note Construction:**

* An "Interaction" between the "Environment" and "LLM Agents" initiates the process.

* A "Write" action from the LLM agent creates a "Note".

* The "Note" has attributes: Timestamp, Content, Context, Keywords, Tags, and Embedding.

* **Conversation 1:** "Can you help me implement a custom cache system for my web application? I need it to handle both memory and disk storage."

* **Conversation 2:** "The cache system works great, but we're seeing high memory usage in production. Can we modify it to implement an LRU eviction policy?"
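
The note attributes listed above can be sketched as a small data structure. This is a minimal sketch, not the system's actual implementation: the diagram only names the six attributes, so the field types, the `write_note` helper, and the example context, keywords, and tags are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Note:
    """A memory note carrying the six attributes named in the diagram.

    The field types are assumptions; the diagram only gives the names.
    """
    timestamp: datetime
    content: str
    context: str = ""
    keywords: list = field(default_factory=list)
    tags: list = field(default_factory=list)
    embedding: list = field(default_factory=list)


def write_note(content, context="", keywords=None, tags=None, embedding=None):
    """Hypothetical "Write" step: timestamp the content and wrap it in a Note."""
    return Note(
        timestamp=datetime.now(timezone.utc),
        content=content,
        context=context,
        keywords=list(keywords or []),
        tags=list(tags or []),
        embedding=list(embedding or []),
    )


# Content is taken from Conversation 1; the other values are illustrative.
note = write_note(
    "Can you help me implement a custom cache system for my web application?",
    context="Web application caching",
    keywords=["cache", "memory", "disk"],
    tags=["caching"],
)
```

In a real system the context, keywords, tags, and embedding would presumably be produced by the LLM agent and a text model rather than passed in by hand.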

**Link Generation:**

* Notes are stored in "Memory" boxes.

* Boxes are labeled sequentially: Box 1, Box i, Box j, Box n, Box n+1, Box n+2.

* The diagram shows a series of boxes, suggesting a dynamic memory structure.

**Memory Evolution:**

* A "Retrieve" action from an LLM agent pulls information from the "Memory" boxes.

* "Top-k" selection is applied to the retrieved information.

* The selected information is passed to an "LLM" for processing.

* An "Action" is taken, leading to an "Evolve" step.

* The evolved memory is then "Stored" back into boxes labeled Box n+1 and Box n+2.
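
The evolution steps above can be sketched as a loop over the retrieved neighbors. The diagram does not say how the LLM decides what to change, so the `share_tags_if_related` rule below is a hypothetical stand-in for that decision, and the dictionary layout of a note is likewise an assumption.

```python
def share_tags_if_related(new_note, neighbor):
    """Stand-in for the LLM decision step (an assumption, not the diagram's logic).

    Returns updated fields for the neighbor when the two notes share a
    keyword, otherwise None.
    """
    if set(new_note["keywords"]) & set(neighbor["keywords"]):
        merged = sorted(set(neighbor["tags"]) | set(new_note["tags"]))
        return {"tags": merged}
    return None


def evolve(new_note, neighbors, decide=share_tags_if_related):
    """Return evolved copies of the top-k neighbors; inputs are not mutated."""
    evolved = []
    for neighbor in neighbors:
        update = decide(new_note, neighbor)
        evolved.append({**neighbor, **update} if update else dict(neighbor))
    return evolved


# A new note about LRU eviction and two previously retrieved neighbors.
new_note = {"keywords": ["cache", "lru"], "tags": ["performance"]}
neighbors = [
    {"keywords": ["cache"], "tags": ["web"]},
    {"keywords": ["weather"], "tags": ["misc"]},
]
evolved = evolve(new_note, neighbors)
```

Passing `decide` as a parameter keeps the sketch independent of any particular model: a real system could swap in a function that prompts an LLM and parses its answer.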

**Memory Retrieval:**

* A "Query" is processed through a "Query Embedding" and a "Text Model".

* The resulting embedding is used to "Retrieve" information from the "Relative Memory".

* The retrieved information is then presented to "LLM Agents".
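
The retrieval path can be illustrated with a small top-k search over stored embeddings. This is a sketch under stated assumptions: the diagram does not name a similarity measure, so cosine similarity is assumed here, and the two-dimensional vectors and note identifiers are made up for the example rather than produced by a real text model.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_top_k(query_embedding, memory, k=2):
    """Return the ids of the k notes whose embeddings best match the query."""
    ranked = sorted(
        memory.items(),
        key=lambda item: cosine_similarity(query_embedding, item[1]),
        reverse=True,
    )
    return [note_id for note_id, _ in ranked[:k]]


# Hypothetical note ids mapped to toy embeddings.
memory = {
    "cache-design": [1.0, 0.0],
    "lru-eviction": [0.9, 0.1],
    "weather-chat": [0.0, 1.0],
}
top = retrieve_top_k([1.0, 0.0], memory, k=2)
```

With these toy vectors, the two cache-related notes rank above the unrelated one, which is the behavior the "Top-k" step is meant to provide.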

### Key Observations

* The diagram emphasizes a cyclical process of note creation, storage, retrieval, and evolution.

* The "Top-k" mechanism suggests a focus on retrieving the most relevant information from memory.

* The use of "boxes" to represent memory suggests a segmented or partitioned memory structure.

* The diagram highlights the role of LLM agents in both creating and utilizing memory.

* The two example conversations demonstrate a multi-turn interaction and the evolution of a request.

### Interpretation

The diagram illustrates a memory management system for LLM agents. It shows how agents not only store information as notes but also retrieve, process, and evolve that information over time. The system appears designed to handle multi-turn conversations and adapt to changing user needs, as the two example conversations suggest. The "Top-k" mechanism and the "Evolve" step suggest a focus on efficiency and relevance. The segmented memory structure (boxes) could be a way to organize information by topic, context, or other criteria. The overall architecture suggests a system that can improve over time by refining its memory and retrieval strategies.

The diagram is a conceptual, high-level overview of the process and does not provide specific data or numerical values. The use of LLM agents throughout the process suggests a distributed and intelligent approach to memory management.