## Diagram: Natural Debate Training Loop

### Overview

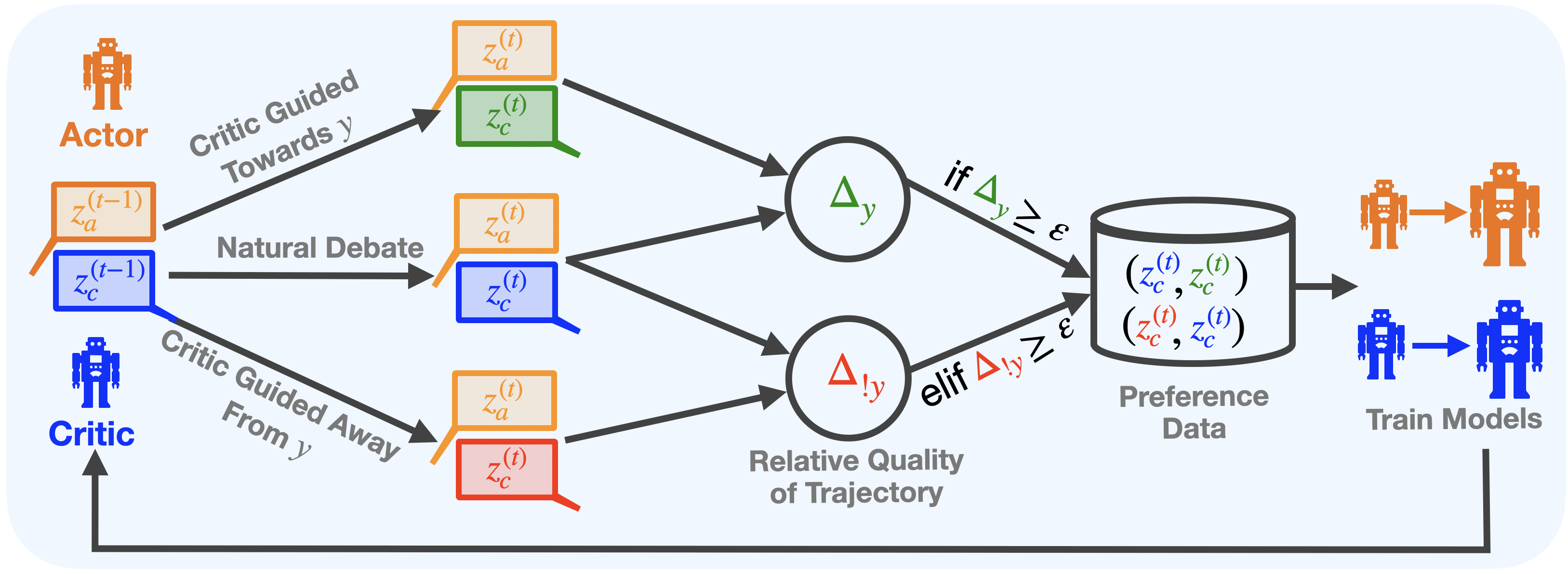

This diagram illustrates a reinforcement learning training loop built around a "Natural Debate" between an Actor model and a Critic model. In each round the two models produce new states, the resulting trajectories are compared, and the comparison is turned into preference data that is used to train both the Actor and the Critic.

### Components

The diagram consists of the following key components:

* **Actor (Orange):** Represented by a robot icon, labeled "Actor".

* **Critic (Blue):** Represented by a robot icon, labeled "Critic".

* **States:** Represented by circles labeled `z_a^(t)` (Actor) and `z_c^(t)` (Critic), where the superscript (t) indicates the time step. The states from the previous time step are labeled `z_a^(t-1)` and `z_c^(t-1)`.

* **Critic Guidance:** Two directional arrows labeled "Critic Guided Towards y" and "Critic Guided Away From y" indicate the direction of the Critic's influence on the Actor.

* **Natural Debate:** A central area labeled "Natural Debate" connects the Actor and Critic.

* **Relative Quality of Trajectory:** A diamond-shaped node labeled "Relative Quality of Trajectory".

* **Decision Node:** A diamond-shaped node containing two conditions, "if Δy ≥ ε" and "elif Δy ≥ ε". Here Δy is presumably the relative quality of the trajectory (a change in some value y), and ε is a threshold.

* **Preference Data:** A rectangular node labeled "Preference Data" containing the tuple `(z_c^(t), z_a^(t), z_c^(t), z_c^(t))`.

* **Train Models:** A cylinder labeled "Train Models" with two robot icons, one orange (Actor) and one blue (Critic), indicating the models being trained.

* **Arrows:** Curved arrows indicate the flow of information and data.

### Detailed Analysis

The diagram shows the following flow:

1. **Actor and Critic States:** The Actor and Critic each start from a state at time `t-1` (`z_a^(t-1)` and `z_c^(t-1)`, respectively).

2. **Critic Guidance:** The Critic guides the Actor either towards or away from a value `y`. This guidance shapes the Actor's state at time `t` (`z_a^(t)`), while the Critic's own state advances to `z_c^(t)`.

3. **Natural Debate:** The states of the Actor and Critic at time `t` (`z_a^(t)`, `z_c^(t)`) are fed into the "Natural Debate" area.

4. **Relative Quality of Trajectory:** The "Natural Debate" process outputs a measure of the "Relative Quality of Trajectory".

5. **Decision Node:** The "Relative Quality of Trajectory" (Δy) is evaluated against a threshold ε.

   * If Δy ≥ ε, the data flows to the "Preference Data" node.

   * Else if Δy ≥ ε, the data flows to the "Preference Data" node. (Note: this "elif" condition is identical to the "if" condition, so the branch can never be reached; this is most likely an error in the diagram.)

6. **Preference Data:** The "Preference Data" node contains the tuple `(z_c^(t), z_a^(t), z_c^(t), z_c^(t))`.

7. **Train Models:** The "Preference Data" is used to train both the Actor and Critic models.
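The decision step in the flow above can be sketched in Python. This is a minimal sketch under stated assumptions, not the method behind the diagram: it assumes Δy is the quality of a Critic-guided trajectory minus the quality of the natural-debate trajectory, reads the diagram's repeated "elif Δy ≥ ε" as "elif Δy ≤ -ε", and stores preference data as hypothetical (chosen, rejected) pairs rather than the four-element tuple shown. The names `preference_pair` and `collect`, the state labels, and the quality scores are all illustrative.

```python
from typing import List, Optional, Tuple

# Hypothetical representation: a preference pair is (chosen, rejected).
Pair = Tuple[str, str]


def preference_pair(natural: str, guided: str,
                    q_natural: float, q_guided: float,
                    eps: float) -> Optional[Pair]:
    """Return a (chosen, rejected) pair, or None if the gap is below eps.

    ASSUMPTIONS (not stated in the diagram):
      * delta_y = quality of the guided trajectory - quality of the natural one;
      * the second branch is read as 'elif delta_y <= -eps'.
    """
    delta_y = q_guided - q_natural
    if delta_y >= eps:        # guidance clearly improved the trajectory
        return (guided, natural)
    elif delta_y <= -eps:     # guidance clearly made it worse
        return (natural, guided)
    return None               # |delta_y| < eps: too close to call, discard


def collect(rounds: List[Tuple[str, str, float, float]], eps: float) -> List[Pair]:
    """Gather preference pairs over debate rounds, skipping ambiguous ones."""
    data: List[Pair] = []
    for natural, guided, q_nat, q_guided in rounds:
        pair = preference_pair(natural, guided, q_nat, q_guided, eps)
        if pair is not None:
            data.append(pair)
    return data


# Illustrative rounds: (natural state, guided state, q_natural, q_guided).
rounds = [
    ("natural@1", "guided@1", 0.40, 0.70),  # delta_y ~ +0.30
    ("natural@2", "guided@2", 0.55, 0.58),  # delta_y ~ +0.03
    ("natural@3", "guided@3", 0.60, 0.20),  # delta_y ~ -0.40
]
print(collect(rounds, eps=0.1))
# [('guided@1', 'natural@1'), ('natural@3', 'guided@3')]
```

With ε = 0.1, the first and third rounds produce pairs and the second (Δy ≈ 0.03) is discarded. Under this reading, the threshold's job is to keep only clear-cut differences as training data.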

### Key Observations

* The diagram depicts a closed loop: the Critic guides the Actor, the resulting trajectories are compared, and the comparison is fed back to train both models.

* The conditional statement at the decision node appears redundant, as both conditions are identical.

* The tuple in the "Preference Data" node contains repeated elements (`z_c^(t)` appears three times), which may reflect a specific data layout or an error in transcription.

* The diagram does not define `y` or state exactly how Δy is computed from the relative quality of the trajectories.
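A literal transcription of the decision node makes the redundancy concrete. This is an illustrative Python sketch; the function name `route` and the sample values are hypothetical. Because an `elif` is evaluated only when the preceding `if` was false, repeating the same test makes its branch dead code, and any Δy below ε passes through with no branch taken.

```python
def route(delta_y: float, eps: float) -> str:
    """Decision node transcribed literally from the diagram."""
    if delta_y >= eps:
        return "if-branch"
    elif delta_y >= eps:      # identical test: always False once the 'if' failed
        return "elif-branch"
    return "no-branch"


# Sweep delta_y across both sides of the threshold.
samples = [-1.0, -0.1, 0.0, 0.05, 0.1, 0.5, 2.0]
reached = sorted({route(d, eps=0.1) for d in samples})
print(reached)  # ['if-branch', 'no-branch']; 'elif-branch' never appears
```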

### Interpretation

This diagram represents a reinforcement learning framework in which an Actor and a Critic engage in a "Natural Debate" to improve their performance. The Critic gives feedback to the Actor, the resulting trajectories are evaluated, and the evaluation is converted into preference data that trains both models, closing the loop. The "Natural Debate" framing suggests that the Critic's feedback is a more nuanced evaluation of the Actor's actions than a simple reward signal.

Two details remain ambiguous. The repeated `z_c^(t)` in the preference tuple might mean the Critic's state is reused during training (for example, for regularization or to emphasize the Critic's perspective), but it may equally be a transcription error. The identical "if" and "elif" conditions are most likely a mistake in the diagram, since as drawn the second branch can never be taken. More broadly, the diagram is a high-level overview and gives no details about the training algorithm or its parameters, such as the value of ε.