## Chart Type: Line Chart - Validation Reward vs. Training Steps

### Overview

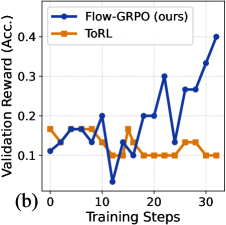

This image displays a 2D line chart comparing the "Validation Reward (Acc.)" of two different methods, "Flow-GRPO (ours)" and "ToRL", over "Training Steps". The chart shows how the validation reward evolves as training progresses for both methods, highlighting their performance trends and relative effectiveness. The chart is labeled as "(b)" in the bottom-left corner, suggesting it might be part of a larger figure.

### Components/Axes

* **Chart Title**: No explicit title is provided, but the axes and legend indicate the content.

* **X-axis**:

    * **Label**: "Training Steps"

    * **Range**: Approximately 0 to 32 steps.

    * **Major Ticks**: Labeled at 0, 10, 20, 30.

    * **Minor Ticks**: Present at intervals of 5 steps (e.g., 5, 15, 25).

* **Y-axis**:

    * **Label**: "Validation Reward (Acc.)"

    * **Range**: Approximately 0.0 to 0.4.

    * **Major Ticks**: Labeled at 0.0, 0.1, 0.2, 0.3, 0.4.

    * **Minor Ticks**: Present at intervals of 0.05 (e.g., 0.05, 0.15, 0.25, 0.35).

* **Grid Lines**: Light gray dashed grid lines are present for both major X and Y axis ticks, aiding in data point estimation.

* **Legend**: Located in the top-right corner of the plot area.

    * **Entry 1**: A blue line with solid circular markers (●) represents "Flow-GRPO (ours)".

    * **Entry 2**: An orange line with solid square markers (■) represents "ToRL".

* **Figure Label**: The character "(b)" is present in the bottom-left corner of the chart area.

### Detailed Analysis

The chart presents two data series, each representing the validation reward over training steps:

1. **Flow-GRPO (ours)** (Blue line with circular markers):

    * **Trend**: This line generally shows an increasing trend in validation reward, especially in the latter half of the training steps, but with significant fluctuations. It starts relatively low, experiences an initial increase, a sharp dip, and then a strong recovery and ascent.

    * **Data Points (approximate)**:

        * At Training Step 0: Validation Reward is approximately 0.11.

        * At Training Step 2: Validation Reward is approximately 0.14.

        * At Training Step 4: Validation Reward is approximately 0.16.

        * At Training Step 6: Validation Reward is approximately 0.16.

        * At Training Step 8: Validation Reward is approximately 0.13.

        * At Training Step 10: Validation Reward is approximately 0.20 (a peak).

        * At Training Step 12: Validation Reward drops sharply to approximately 0.04 (a trough).

        * At Training Step 14: Validation Reward recovers to approximately 0.13.

        * At Training Step 16: Validation Reward is approximately 0.10.

        * At Training Step 18: Validation Reward rises to approximately 0.20.

        * At Training Step 20: Validation Reward remains around 0.20.

        * At Training Step 22: Validation Reward peaks at approximately 0.30.

        * At Training Step 24: Validation Reward drops to approximately 0.13.

        * At Training Step 26: Validation Reward rises to approximately 0.27.

        * At Training Step 28: Validation Reward remains around 0.27.

        * At Training Step 30: Validation Reward increases to approximately 0.33.

        * At Training Step 32: Validation Reward reaches its highest point at approximately 0.40.

2. **ToRL** (Orange line with square markers):

    * **Trend**: This line stays within a narrow band (about 0.10 to 0.17) and drifts slightly downward, from about 0.17 at step 0 to about 0.10 at step 32. It sits above Flow-GRPO at several early steps but does not exhibit Flow-GRPO's strong upward trend.

    * **Data Points (approximate)**:

        * At Training Step 0: Validation Reward is approximately 0.17.

        * At Training Step 2: Validation Reward is approximately 0.15.

        * At Training Step 4: Validation Reward is approximately 0.16.

        * At Training Step 6: Validation Reward is approximately 0.17.

        * At Training Step 8: Validation Reward is approximately 0.16.

        * At Training Step 10: Validation Reward is approximately 0.13.

        * At Training Step 12: Validation Reward is approximately 0.10.

        * At Training Step 14: Validation Reward rises to approximately 0.17.

        * At Training Step 16: Validation Reward drops to approximately 0.10.

        * From Training Step 18 to 24: Validation Reward remains consistently around 0.10.

        * At Training Step 26: Validation Reward rises to approximately 0.13.

        * At Training Step 28: Validation Reward remains around 0.13.

        * At Training Step 30: Validation Reward drops to approximately 0.10.

        * At Training Step 32: Validation Reward remains around 0.10.
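The approximate readings listed above can be transcribed and re-plotted. The following is a minimal sketch, not the original plotting code: the values are visual estimates from the chart, and the names `steps`, `flow_grpo`, `torl`, and `plot_curves`, the colors, the figure size, and the output file name are illustrative assumptions. Calling `plot_curves` assumes matplotlib is installed.

```python
# Approximate readings transcribed from the lists above (visual estimates,
# not exact experimental values). The lists give one reading every 2 steps.
steps = list(range(0, 33, 2))

flow_grpo = [0.11, 0.14, 0.16, 0.16, 0.13, 0.20, 0.04, 0.13, 0.10,
             0.20, 0.20, 0.30, 0.13, 0.27, 0.27, 0.33, 0.40]

torl = [0.17, 0.15, 0.16, 0.17, 0.16, 0.13, 0.10, 0.17, 0.10,
        0.10, 0.10, 0.10, 0.10, 0.13, 0.13, 0.10, 0.10]


def plot_curves(path="validation_reward.png"):
    """Redraw both curves in the style described above and save them to `path`."""
    # Imported lazily so the data can be used without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # render off-screen; no display required
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(5, 4))
    ax.plot(steps, flow_grpo, color="tab:blue", marker="o",
            label="Flow-GRPO (ours)")
    ax.plot(steps, torl, color="tab:orange", marker="s", label="ToRL")
    ax.set_xlabel("Training Steps")
    ax.set_ylabel("Validation Reward (Acc.)")
    ax.grid(True, linestyle="--", color="lightgray")
    ax.legend()
    fig.savefig(path, dpi=150, bbox_inches="tight")
    plt.close(fig)
    return path
```

Keeping the readings in plain lists makes it easy to reuse them for simple checks without re-reading values off the image.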

### Key Observations

* **Initial Performance**: ToRL starts with a slightly higher validation reward (approx. 0.17) than Flow-GRPO (approx. 0.11) at Training Step 0.

* **Early Fluctuations**: Both methods show fluctuations in the early training steps (0-10). Flow-GRPO experiences an early peak around step 10 (0.20) before a significant drop.

* **Flow-GRPO's Dip**: A notable sharp decrease in Flow-GRPO's performance occurs around Training Step 12, where its reward drops to its lowest point (approx. 0.04), falling significantly below ToRL's performance at that point (approx. 0.10).

* **Recovery and Outperformance**: After the dip, Flow-GRPO demonstrates a strong recovery and a clear upward trend, consistently outperforming ToRL from approximately Training Step 18 onwards.

* **ToRL's Stability**: ToRL's performance stays between approximately 0.10 and 0.17 throughout training. From Training Step 16 onwards it sits at about 0.10, apart from a small rise to about 0.13 at steps 26 and 28, and it never exceeds its starting value.

* **Final Performance**: At Training Step 32, Flow-GRPO achieves a validation reward of approximately 0.40, roughly four times ToRL's reward of approximately 0.10 at the same step.
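The observations above can be checked against the approximate readings with a few lines of arithmetic. This is a sketch under the assumption that the estimated values are accurate enough for such comparisons; the variable names and the helper `first_step_staying_ahead` are hypothetical and introduced only for illustration.

```python
# Approximate readings from the chart (visual estimates), one per 2 steps.
steps = list(range(0, 33, 2))
flow_grpo = [0.11, 0.14, 0.16, 0.16, 0.13, 0.20, 0.04, 0.13, 0.10,
             0.20, 0.20, 0.30, 0.13, 0.27, 0.27, 0.33, 0.40]
torl = [0.17, 0.15, 0.16, 0.17, 0.16, 0.13, 0.10, 0.17, 0.10,
        0.10, 0.10, 0.10, 0.10, 0.13, 0.13, 0.10, 0.10]


def first_step_staying_ahead(xs, a, b):
    """Return the earliest x from which a > b holds at every later point.

    Walks backwards from the last reading and stops at the first point
    where `a` is not strictly above `b`. Returns None if `a` is not
    ahead at the final point.
    """
    result = None
    for x, ya, yb in zip(reversed(xs), reversed(a), reversed(b)):
        if ya > yb:
            result = x
        else:
            break
    return result


# Lowest Flow-GRPO reading and the step at which it occurs.
trough_value = min(flow_grpo)
trough_step = steps[flow_grpo.index(trough_value)]

# Ratio of the two methods at the last reading.
final_ratio = flow_grpo[-1] / torl[-1]

# Step from which Flow-GRPO remains strictly above ToRL.
lead_from = first_step_staying_ahead(steps, flow_grpo, torl)

print(f"Flow-GRPO trough: {trough_value:.2f} at step {trough_step}")
print(f"Final ratio Flow-GRPO / ToRL: {final_ratio:.1f}x")
print(f"Flow-GRPO stays ahead from step {lead_from}")
```

With these readings the script reports a trough at step 12, a final ratio of about 4, and a sustained lead from step 18, consistent with the observations listed above.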

### Interpretation

The data suggests that "Flow-GRPO (ours)" reaches higher validation rewards than "ToRL" over the 32 training steps shown. Flow-GRPO is more volatile, with sharp drops at steps 12 and 24, but it recovers after each drop and ends with substantially better final performance. One possible reading is that Flow-GRPO explores the reward landscape more aggressively or effectively, at the cost of temporary setbacks.
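The contrast between volatility and overall gain can be made concrete with two simple statistics. The sketch below assumes the approximate readings from the chart and uses two hypothetical helpers, `mean_abs_change` and `net_change`; other volatility measures would serve equally well.

```python
# Approximate readings from the chart (visual estimates), one per 2 steps.
flow_grpo = [0.11, 0.14, 0.16, 0.16, 0.13, 0.20, 0.04, 0.13, 0.10,
             0.20, 0.20, 0.30, 0.13, 0.27, 0.27, 0.33, 0.40]
torl = [0.17, 0.15, 0.16, 0.17, 0.16, 0.13, 0.10, 0.17, 0.10,
        0.10, 0.10, 0.10, 0.10, 0.13, 0.13, 0.10, 0.10]


def mean_abs_change(values):
    """Average absolute difference between consecutive readings."""
    diffs = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(diffs) / len(diffs)


def net_change(values):
    """Difference between the last and the first reading."""
    return values[-1] - values[0]


for name, series in [("Flow-GRPO", flow_grpo), ("ToRL", torl)]:
    print(f"{name}: mean |change| = {mean_abs_change(series):.3f}, "
          f"net change = {net_change(series):+.2f}")
```

On these estimates, Flow-GRPO moves by about 0.067 per evaluation on average against about 0.019 for ToRL, while its net change is about +0.29 against about -0.07 for ToRL.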

Conversely, "ToRL" appears to be a more stable but less performant method. Its validation reward never exceeds its starting value of about 0.17 and settles near 0.10 from step 16 onwards, suggesting it might converge to a local optimum or have inherent limitations in achieving higher rewards within the given training steps. Flow-GRPO first overtakes ToRL at step 10 and stays ahead from step 18 onwards, while ToRL shows no sustained improvement in the later stages.

The sharp dip in Flow-GRPO's performance around step 12 could be an artifact of the training process (e.g., a learning rate schedule change, an exploration phase, or a particularly challenging batch of data). Its subsequent recovery and sustained growth point to potential for better long-term performance. The "ours" designation implies that Flow-GRPO is the novel method being presented, and the chart shows its advantage over the comparison method, ToRL, in both peak and final validation reward.