TECHNICAL ASSET FINGERPRINT

0da9627184b21bdf4aa15a35



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

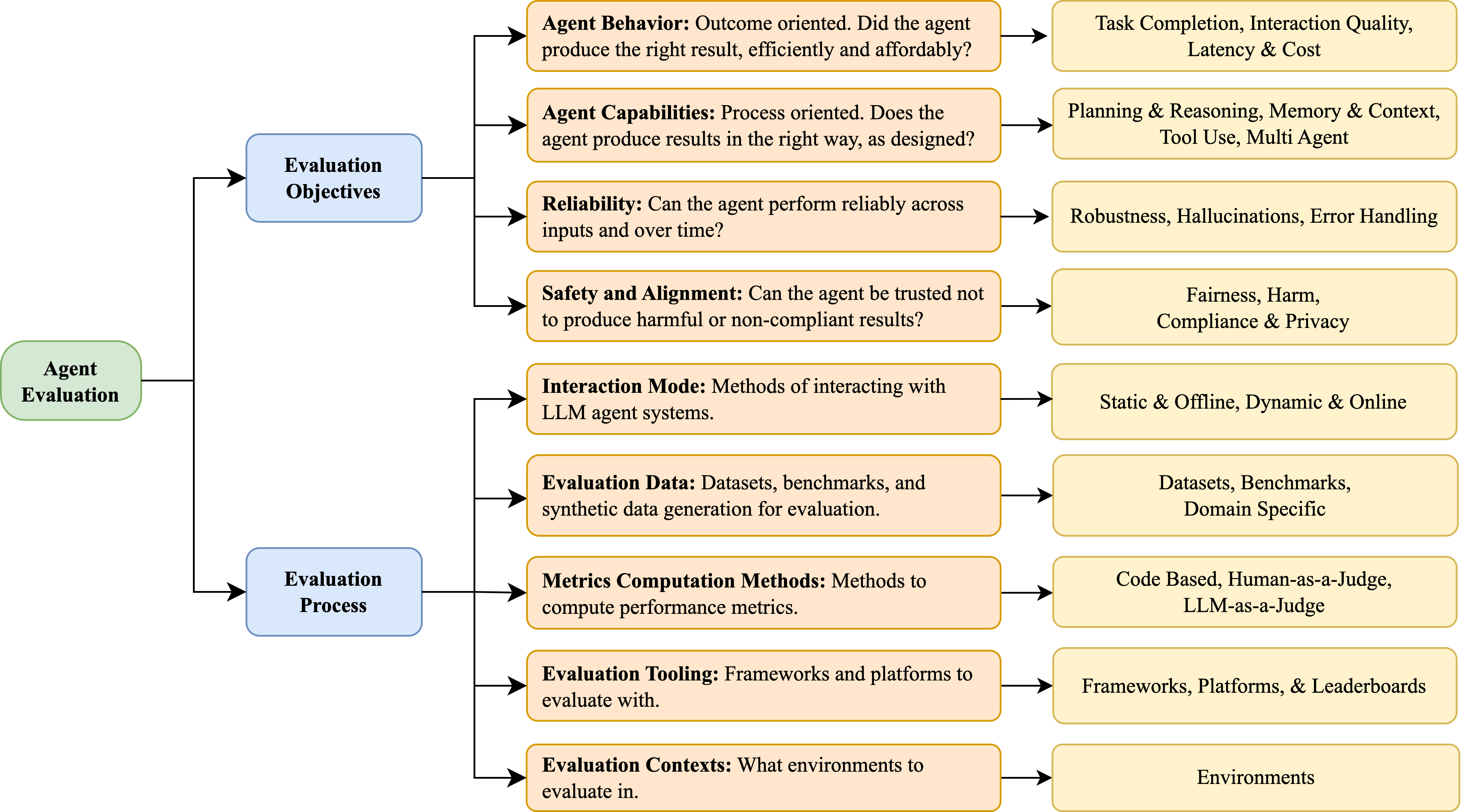

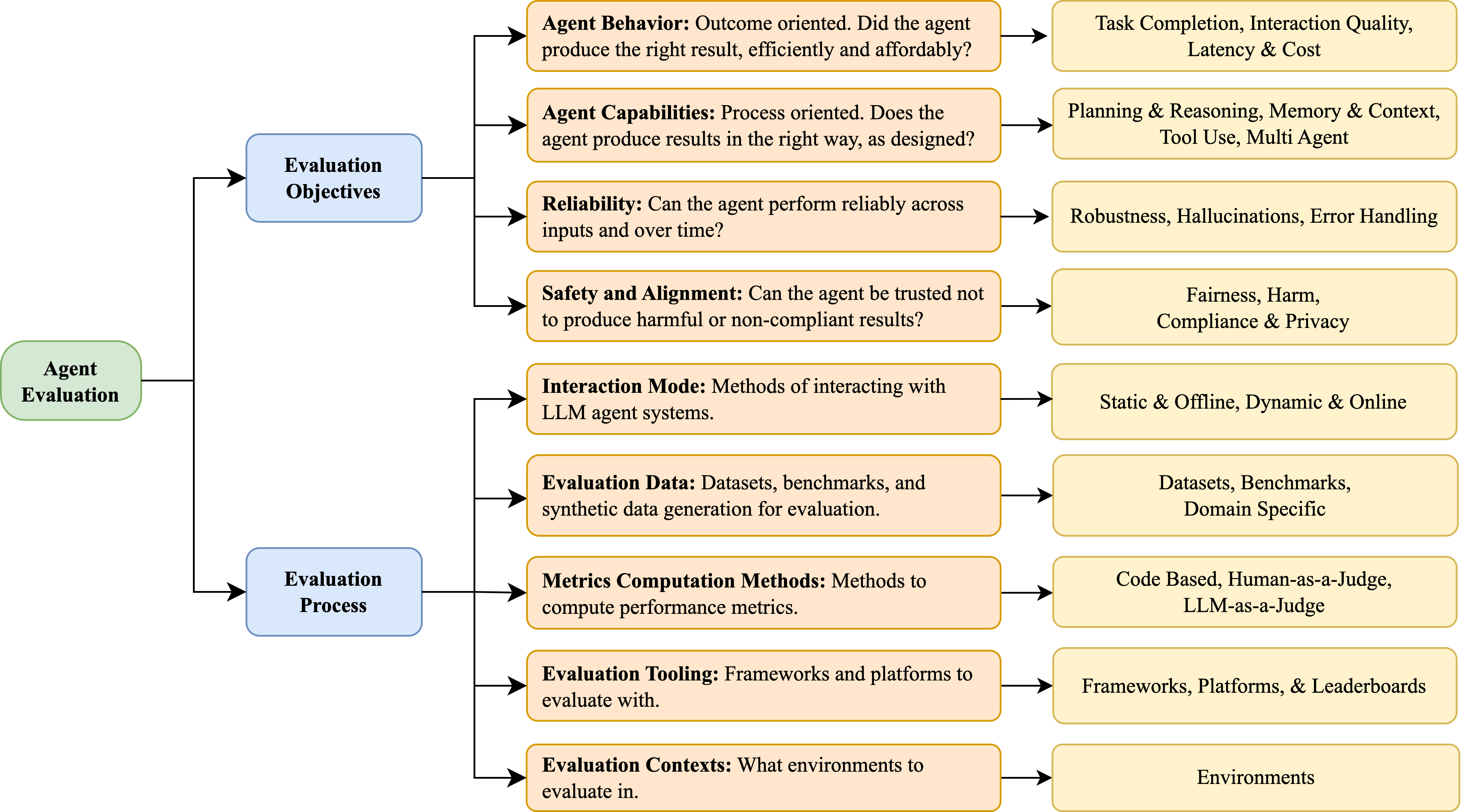

## Flow Diagram: Agent Evaluation Process

### Overview

The image is a flow diagram illustrating the agent evaluation process. It starts with "Agent Evaluation" and branches into "Evaluation Objectives" and "Evaluation Process." Each of these branches further expands into specific aspects and considerations for evaluating agents.

### Components/Axes

* **Root Node:** "Agent Evaluation" (green rounded rectangle, left side)
* **Primary Branches:**
    * "Evaluation Objectives" (blue rounded rectangle, top-center)
    * "Evaluation Process" (blue rounded rectangle, bottom-center)
* **Secondary Branches (from Evaluation Objectives):**
    * "Agent Behavior: Outcome oriented. Did the agent produce the right result, efficiently and affordably?" (tan rounded rectangle, top-right)
    * "Agent Capabilities: Process oriented. Does the agent produce results in the right way, as designed?" (tan rounded rectangle, mid-top-right)
    * "Reliability: Can the agent perform reliably across inputs and over time?" (tan rounded rectangle, mid-right)
    * "Safety and Alignment: Can the agent be trusted not to produce harmful or non-compliant results?" (tan rounded rectangle, mid-bottom-right)
    * "Interaction Mode: Methods of interacting with LLM agent systems." (tan rounded rectangle, bottom-right)
* **Secondary Branches (from Evaluation Process):**
    * "Evaluation Data: Datasets, benchmarks, and synthetic data generation for evaluation." (tan rounded rectangle, mid-top-left)
    * "Metrics Computation Methods: Methods to compute performance metrics." (tan rounded rectangle, mid-left)
    * "Evaluation Tooling: Frameworks and platforms to evaluate with." (tan rounded rectangle, mid-bottom-left)
    * "Evaluation Contexts: What environments to evaluate in." (tan rounded rectangle, bottom-left)
* **Tertiary Branches (from Agent Behavior):**
    * "Task Completion, Interaction Quality, Latency & Cost" (tan rounded rectangle, far-right, top)
* **Tertiary Branches (from Agent Capabilities):**
    * "Planning & Reasoning, Memory & Context, Tool Use, Multi Agent" (tan rounded rectangle, far-right, second from top)
* **Tertiary Branches (from Reliability):**
    * "Robustness, Hallucinations, Error Handling" (tan rounded rectangle, far-right, middle)
* **Tertiary Branches (from Safety and Alignment):**
    * "Fairness, Harm, Compliance & Privacy" (tan rounded rectangle, far-right, second from bottom)
* **Tertiary Branches (from Interaction Mode):**
    * "Static & Offline, Dynamic & Online" (tan rounded rectangle, far-right, bottom)
* **Tertiary Branches (from Evaluation Data):**
    * "Datasets, Benchmarks, Domain Specific" (tan rounded rectangle, far-right, second from top)
* **Tertiary Branches (from Metrics Computation Methods):**
    * "Code Based, Human-as-a-Judge, LLM-as-a-Judge" (tan rounded rectangle, far-right, middle)
* **Tertiary Branches (from Evaluation Tooling):**
    * "Frameworks, Platforms, & Leaderboards" (tan rounded rectangle, far-right, second from bottom)
* **Tertiary Branches (from Evaluation Contexts):**
    * "Environments" (tan rounded rectangle, far-right, bottom)

### Detailed Analysis

The diagram outlines a hierarchical structure for agent evaluation. The process begins with a general "Agent Evaluation" node, which then splits into two main categories: "Evaluation Objectives" and "Evaluation Process."

* **Evaluation Objectives:** This branch focuses on *what* aspects of the agent's performance are being evaluated. It includes:
    * **Agent Behavior:** Assessing the outcome of the agent's actions (e.g., task completion, efficiency, cost).
        * Metrics: Task Completion, Interaction Quality, Latency & Cost
    * **Agent Capabilities:** Evaluating the agent's process and design (e.g., planning, reasoning, tool use).
        * Metrics: Planning & Reasoning, Memory & Context, Tool Use, Multi Agent
    * **Reliability:** Determining the agent's consistency and robustness over time and across different inputs.
        * Metrics: Robustness, Hallucinations, Error Handling
    * **Safety and Alignment:** Ensuring the agent's actions are safe, ethical, and compliant.
        * Metrics: Fairness, Harm, Compliance & Privacy
    * **Interaction Mode:** Considering the methods of interaction with LLM agent systems.
        * Modes: Static & Offline, Dynamic & Online
* **Evaluation Process:** This branch focuses on *how* the agent is being evaluated. It includes:
    * **Evaluation Data:** Specifying the datasets, benchmarks, and synthetic data used for evaluation.
        * Data Types: Datasets, Benchmarks, Domain Specific
    * **Metrics Computation Methods:** Defining the methods used to compute performance metrics.
        * Methods: Code Based, Human-as-a-Judge, LLM-as-a-Judge
    * **Evaluation Tooling:** Identifying the frameworks and platforms used for evaluation.
        * Tools: Frameworks, Platforms, & Leaderboards
    * **Evaluation Contexts:** Defining the environments in which the agent is evaluated.
        * Contexts: Environments
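As a hedged illustration of the "Code Based" method applied to the Agent Behavior metrics listed above, the sketch below computes a task completion rate, mean latency, and mean cost from a list of run records. The `RunRecord` type, its fields, and the sample values are assumptions made for this example; the diagram does not prescribe any data format.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunRecord:
    """One agent run; all fields are hypothetical, not taken from the diagram."""
    task_id: str
    succeeded: bool    # did the agent produce the right result?
    latency_s: float   # wall-clock time for the run, in seconds
    cost_usd: float    # spend attributed to the run


def behavior_metrics(runs: list[RunRecord]) -> dict[str, float]:
    """Compute outcome-oriented metrics with plain code (no judge involved)."""
    if not runs:
        raise ValueError("need at least one run record")
    return {
        "task_completion_rate": sum(r.succeeded for r in runs) / len(runs),
        "mean_latency_s": mean(r.latency_s for r in runs),
        "mean_cost_usd": mean(r.cost_usd for r in runs),
    }


# Made-up sample data, only to show the call shape.
runs = [
    RunRecord("t1", True, 2.0, 0.01),
    RunRecord("t2", False, 4.0, 0.03),
    RunRecord("t3", True, 3.0, 0.02),
    RunRecord("t4", True, 3.0, 0.02),
]
print(behavior_metrics(runs))
```

Interaction Quality is left out on purpose: it usually needs a human or LLM judge rather than a direct computation.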

### Key Observations

* The diagram provides a structured overview of the key considerations in agent evaluation.

* It highlights the importance of both *what* is being evaluated (objectives) and *how* it is being evaluated (process).

* The diagram covers a wide range of evaluation aspects, including behavior, capabilities, reliability, safety, interaction mode, data, metrics, tooling, and contexts.

### Interpretation

The diagram serves as a useful framework for designing and conducting agent evaluations. It emphasizes the need to consider both the objectives of the evaluation and the process used to achieve those objectives. By systematically addressing each of the aspects outlined in the diagram, evaluators can gain a comprehensive understanding of an agent's performance and capabilities. The diagram also highlights the importance of considering ethical and safety concerns in agent evaluation, as well as the need to use appropriate data, metrics, and tools.
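One way to address each aspect systematically is to treat the nine dimensions as a coverage checklist. The sketch below is only an illustration: the dimension names are copied from the diagram, while `missing_dimensions` and the example suite are hypothetical.

```python
# The nine dimension names come from the diagram; everything else is assumed.
DIMENSIONS = (
    "Agent Behavior",
    "Agent Capabilities",
    "Reliability",
    "Safety and Alignment",
    "Interaction Mode",
    "Evaluation Data",
    "Metrics Computation Methods",
    "Evaluation Tooling",
    "Evaluation Contexts",
)


def missing_dimensions(covered: set[str]) -> list[str]:
    """Return the diagram dimensions an evaluation suite has not addressed."""
    unknown = covered - set(DIMENSIONS)
    if unknown:
        raise ValueError(f"not in the diagram: {sorted(unknown)}")
    # Preserve the diagram's order so the report reads top to bottom.
    return [d for d in DIMENSIONS if d not in covered]


# Hypothetical suite that so far only measures outcomes on a fixed dataset.
suite_covers = {"Agent Behavior", "Evaluation Data", "Metrics Computation Methods"}
for name in missing_dimensions(suite_covers):
    print("not yet addressed:", name)
```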


EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Agent Evaluation Framework

### Overview

This diagram illustrates a framework for evaluating Large Language Model (LLM) agents. It organizes evaluation aspects under three labeled blocks (Evaluation Objectives, Agent Evaluation, and Evaluation Process), each linked to its corresponding considerations. The diagram uses a flowchart style, with boxes representing categories and arrows indicating the relationships between them.

### Components/Axes

The diagram consists of three main rectangular blocks labeled:

1. **Evaluation Objectives** (Top-Left)

2. **Agent Evaluation** (Left-Center)

3. **Evaluation Process** (Bottom-Left)

Each of these blocks is connected via arrows to a corresponding block on the right side detailing specific considerations.

The right-side blocks are labeled with specific evaluation aspects:

* Task Completion, Interaction Quality, Latency & Cost

* Planning & Reasoning, Memory & Context, Tool Use, Multi Agent

* Robustness, Hallucinations, Error Handling

* Fairness, Harm, Compliance & Privacy

* Static & Offline, Dynamic & Online

* Datasets, Benchmarks, Domain Specific

* Code Based, Human-as-a-Judge, LLM-as-a-Judge

* Frameworks, Platforms, & Leaderboards

* Environments

### Detailed Analysis

The diagram details the following aspects of agent evaluation:

**Evaluation Objectives:**

* **Agent Behavior:** Outcome oriented. Did the agent produce the right result, efficiently and affordably? – Connected to: Task Completion, Interaction Quality, Latency & Cost.

* **Agent Capabilities:** Process oriented. Does the agent produce results in the right way, as designed? – Connected to: Planning & Reasoning, Memory & Context, Tool Use, Multi Agent.

* **Reliability:** Can the agent perform reliably across inputs and over time? – Connected to: Robustness, Hallucinations, Error Handling.

* **Safety and Alignment:** Can the agent be trusted not to produce harmful or non-compliant results? – Connected to: Fairness, Harm, Compliance & Privacy.

**Evaluation Process:**

* **Interaction Mode:** Methods of interacting with LLM agent systems. – Connected to: Static & Offline, Dynamic & Online.

* **Evaluation Data:** Datasets, benchmarks, and synthetic data generation for evaluation. – Connected to: Datasets, Benchmarks, Domain Specific.

* **Metrics Computation Methods:** Methods to compute performance metrics. – Connected to: Code Based, Human-as-a-Judge, LLM-as-a-Judge.

* **Evaluation Tooling:** Frameworks and platforms to evaluate with. – Connected to: Frameworks, Platforms, & Leaderboards.

* **Evaluation Contexts:** What environments to evaluate in. – Connected to: Environments.

The arrows indicate a directional relationship, suggesting that the objectives and evaluation aspects influence the evaluation process.

### Key Observations

The diagram presents a comprehensive framework, covering both the *what* (objectives) and the *how* (process) of agent evaluation. It highlights the multi-faceted nature of evaluation, encompassing behavior, capabilities, reliability, and safety. The inclusion of different interaction modes, data sources, metrics, tooling, and contexts emphasizes the need for a holistic approach.

### Interpretation

This diagram suggests a structured approach to evaluating LLM agents, moving beyond simple performance metrics to consider broader aspects like safety, alignment, and reliability. The framework emphasizes the importance of defining clear evaluation objectives and selecting appropriate methods and tools to assess agent performance across various contexts. The connections between the left and right sides of the diagram indicate that the evaluation process is informed by the objectives and considerations outlined. The diagram is a high-level overview and doesn't delve into specific metrics or methodologies, but it provides a valuable roadmap for developing a robust agent evaluation strategy. It is a conceptual model, not a data-driven chart. The diagram is intended to be a guide for thinking about the different aspects of agent evaluation, rather than a precise set of instructions.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Agent Evaluation Framework

### Overview

The image displays a hierarchical flowchart outlining a comprehensive framework for evaluating AI agents. The diagram is structured as a tree, flowing from left to right, starting with a root concept that branches into two primary categories, which further subdivide into specific evaluation dimensions and their corresponding components or metrics.

### Components/Axes

The diagram is organized into four distinct layers, differentiated by color and position:

1. **Root Node (Green, Far Left):** "Agent Evaluation"
2. **Primary Categories (Blue, Center-Left):** Two main branches:
    * "Evaluation Objectives"
    * "Evaluation Process"
3. **Evaluation Dimensions (Orange, Center):** Specific areas of focus under each primary category.
4. **Components/Metrics (Yellow, Far Right):** Concrete elements, methods, or metrics associated with each evaluation dimension.

Arrows connect each node to its children, indicating a hierarchical and logical relationship. The flow is strictly left-to-right.

### Detailed Analysis

**Branch 1: Evaluation Objectives**

This branch defines *what* aspects of an agent should be evaluated.

* **Dimension 1: Agent Behavior**
    * **Description:** "Outcome oriented. Did the agent produce the right result, efficiently and affordably?"
    * **Components:** "Task Completion, Interaction Quality, Latency & Cost"
* **Dimension 2: Agent Capabilities**
    * **Description:** "Process oriented. Does the agent produce results in the right way, as designed?"
    * **Components:** "Planning & Reasoning, Memory & Context, Tool Use, Multi Agent"
* **Dimension 3: Reliability**
    * **Description:** "Can the agent perform reliably across inputs and over time?"
    * **Components:** "Robustness, Hallucinations, Error Handling"
* **Dimension 4: Safety and Alignment**
    * **Description:** "Can the agent be trusted not to produce harmful or non-compliant results?"
    * **Components:** "Fairness, Harm, Compliance & Privacy"

**Branch 2: Evaluation Process**

This branch defines *how* an agent evaluation is conducted.

* **Dimension 1: Interaction Mode**
    * **Description:** "Methods of interacting with LLM agent systems."
    * **Components:** "Static & Offline, Dynamic & Online"
* **Dimension 2: Evaluation Data**
    * **Description:** "Datasets, benchmarks, and synthetic data generation for evaluation."
    * **Components:** "Datasets, Benchmarks, Domain Specific"
* **Dimension 3: Metrics Computation Methods**
    * **Description:** "Methods to compute performance metrics."
    * **Components:** "Code Based, Human-as-a-Judge, LLM-as-a-Judge"
* **Dimension 4: Evaluation Tooling**
    * **Description:** "Frameworks and platforms to evaluate with."
    * **Components:** "Frameworks, Platforms, & Leaderboards"
* **Dimension 5: Evaluation Contexts**
    * **Description:** "What environments to evaluate in."
    * **Components:** "Environments"
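The two branches and their dimensions can be written down as a nested mapping, which makes the four-layer hierarchy easy to traverse. This is a minimal sketch: the labels are copied from the diagram as described here, but the names `TAXONOMY` and `leaf_paths`, and the choice to split each component box on commas, are assumptions of this example.

```python
# Branch -> dimension -> components, following the left-to-right layout.
# Splitting each component box on commas is an interpretation, not something
# the diagram states explicitly.
TAXONOMY = {
    "Evaluation Objectives": {
        "Agent Behavior": ["Task Completion", "Interaction Quality", "Latency & Cost"],
        "Agent Capabilities": ["Planning & Reasoning", "Memory & Context", "Tool Use", "Multi Agent"],
        "Reliability": ["Robustness", "Hallucinations", "Error Handling"],
        "Safety and Alignment": ["Fairness", "Harm", "Compliance & Privacy"],
    },
    "Evaluation Process": {
        "Interaction Mode": ["Static & Offline", "Dynamic & Online"],
        "Evaluation Data": ["Datasets", "Benchmarks", "Domain Specific"],
        "Metrics Computation Methods": ["Code Based", "Human-as-a-Judge", "LLM-as-a-Judge"],
        "Evaluation Tooling": ["Frameworks", "Platforms", "Leaderboards"],
        "Evaluation Contexts": ["Environments"],
    },
}


def leaf_paths(tree, root="Agent Evaluation"):
    """Yield (root, branch, dimension, component) tuples in diagram order."""
    for branch, dimensions in tree.items():
        for dimension, components in dimensions.items():
            for component in components:
                yield (root, branch, dimension, component)


for path in leaf_paths(TAXONOMY):
    print(" -> ".join(path))
```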

### Key Observations

1. **Clear Dichotomy:** The framework cleanly separates the *goals* of evaluation (Objectives) from the *methodology* (Process).

2. **Comprehensive Scope:** The "Objectives" branch covers a holistic view of agent performance, from raw outcomes (Behavior) to internal processes (Capabilities), stability (Reliability), and ethical constraints (Safety).

3. **Process Granularity:** The "Process" branch breaks down the practical steps of evaluation into five distinct, actionable categories, moving from setup (Interaction Mode, Data) to execution (Metrics, Tooling) and environment (Contexts).

4. **Visual Hierarchy:** The color coding (Green -> Blue -> Orange -> Yellow) and spatial arrangement effectively communicate the increasing level of detail from left to right.

### Interpretation

This diagram presents a structured taxonomy for the emerging field of AI agent evaluation. It moves beyond simple accuracy metrics to advocate for a multi-faceted assessment.

* **Relationship Between Elements:** The "Evaluation Objectives" define the *requirements* for a good agent. The "Evaluation Process" provides the *operational blueprint* to measure those requirements. For example, to evaluate the "Reliability" objective, one would need to select an appropriate "Interaction Mode" (e.g., Dynamic & Online), use relevant "Evaluation Data" (e.g., Domain Specific benchmarks), and apply a "Metrics Computation Method" (e.g., Code Based for robustness checks).

* **Underlying Philosophy:** The framework implies that a trustworthy agent must be evaluated not just on *what* it does, but *how* it does it, *how consistently* it does it, and *within what ethical boundaries*. The inclusion of "LLM-as-a-Judge" and "Multi Agent" components acknowledges the specific challenges and paradigms of modern LLM-based systems.

* **Practical Utility:** This serves as a checklist or reference model for researchers and engineers designing evaluation suites. It ensures that critical dimensions like safety, tool use, and evaluation context are not overlooked in favor of simpler task-completion metrics. The separation of "Tooling" and "Contexts" highlights that the choice of platform and the environment (e.g., simulated vs. real-world) are first-class concerns in rigorous evaluation.
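The pairing of an objective with process choices, as in the Reliability example above, can be sketched as a small validated record. The `EvalPlan` class and its field names are hypothetical; only the allowed values are taken from the diagram's component boxes.

```python
from dataclasses import dataclass

# Allowed values copied from the diagram; the structure around them is assumed.
OBJECTIVES = {"Agent Behavior", "Agent Capabilities", "Reliability", "Safety and Alignment"}
PROCESS_OPTIONS = {
    "interaction_mode": {"Static & Offline", "Dynamic & Online"},
    "evaluation_data": {"Datasets", "Benchmarks", "Domain Specific"},
    "metrics_method": {"Code Based", "Human-as-a-Judge", "LLM-as-a-Judge"},
}


@dataclass(frozen=True)
class EvalPlan:
    """Hypothetical record pairing one objective with process choices."""
    objective: str
    interaction_mode: str
    evaluation_data: str
    metrics_method: str

    def __post_init__(self):
        # Reject anything that is not a label from the diagram.
        if self.objective not in OBJECTIVES:
            raise ValueError(f"unknown objective: {self.objective!r}")
        for field_name, allowed in PROCESS_OPTIONS.items():
            value = getattr(self, field_name)
            if value not in allowed:
                raise ValueError(f"{field_name}={value!r} is not one of {sorted(allowed)}")


# The combination mentioned in the Reliability example.
plan = EvalPlan("Reliability", "Dynamic & Online", "Domain Specific", "Code Based")
print(plan)
```

Tooling and contexts are omitted here because the diagram gives them no enumerable options beyond "Frameworks, Platforms, & Leaderboards" and "Environments".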


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Agent Evaluation Framework

### Overview

The image depicts a hierarchical flowchart outlining an **Agent Evaluation Framework** for Large Language Model (LLM) systems. It branches into two primary categories: **Evaluation Objectives** (left) and **Evaluation Process** (right), each subdivided into detailed criteria. Arrows indicate relationships between components, emphasizing a structured approach to assessing agent performance.

### Components/Axes

#### Evaluation Objectives (Left Branch)

1. **Agent Behavior**
    - Sub-components: Task Completion, Interaction Quality, Latency & Cost
    - Question: "Did the agent produce the right result, efficiently and affordably?"
2. **Agent Capabilities**
    - Sub-components: Planning & Reasoning, Memory & Context, Tool Use, Multi-Agent
    - Question: "Does the agent produce results in the right way, as designed?"
3. **Reliability**
    - Sub-components: Robustness, Hallucinations, Error Handling
    - Question: "Can the agent perform reliably across inputs and over time?"
4. **Safety and Alignment**
    - Sub-components: Fairness, Harm, Compliance & Privacy
    - Question: "Can the agent be trusted not to produce harmful or non-compliant results?"
5. **Interaction Mode**
    - Sub-components: Static & Offline, Dynamic & Online
    - Description: "Methods of interacting with LLM agent systems."
6. **Evaluation Data**
    - Sub-components: Datasets, Benchmarks, Domain-Specific
    - Description: "Datasets, benchmarks, and synthetic data generation for evaluation."

#### Evaluation Process (Right Branch)

1. **Metrics Computation Methods**
    - Sub-components: Code-Based, Human-as-a-Judge, LLM-as-a-Judge
    - Description: "Methods to compute performance metrics."
2. **Evaluation Tooling**
    - Sub-components: Frameworks, Platforms, & Leaderboards
    - Description: "Frameworks and platforms to evaluate with."
3. **Evaluation Contexts**
    - Sub-components: Environments
    - Description: "What environments to evaluate in."

### Detailed Analysis

- **Agent Behavior** focuses on outcome-oriented metrics (e.g., task completion efficiency).

- **Agent Capabilities** emphasizes process-oriented design adherence (e.g., reasoning, tool use).

- **Reliability** addresses consistency across inputs and time, including error handling.

- **Safety and Alignment** ensures ethical and compliant outputs (e.g., fairness, privacy).

- **Interaction Mode** distinguishes between static/offline and dynamic/online interactions.

- **Evaluation Data** highlights the importance of domain-specific benchmarks.

- **Metrics Computation Methods** span code-based checks as well as human and LLM judges.

- **Evaluation Tooling** and **Contexts** stress the need for scalable frameworks and realistic environments.
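To make the Reliability item above more concrete, the sketch below summarizes repeated runs of the same tasks in two ways: the share of individual runs that pass, and the share of tasks that pass on every run. Both metric names and the sample task ids are assumptions of this example; the flowchart does not define a specific consistency metric.

```python
def reliability_summary(outcomes: dict[str, list[bool]]) -> dict[str, float]:
    """Summarize repeated runs; `outcomes` maps a task id to pass/fail results."""
    if not outcomes or any(not runs for runs in outcomes.values()):
        raise ValueError("every task needs at least one run")
    total_runs = sum(len(runs) for runs in outcomes.values())
    passed_runs = sum(sum(runs) for runs in outcomes.values())
    # A task counts as consistently solved only if all of its runs passed.
    always_pass = sum(all(runs) for runs in outcomes.values())
    return {
        "per_run_success_rate": passed_runs / total_runs,
        "all_runs_success_rate": always_pass / len(outcomes),
    }


# Made-up results: one task fails once in four attempts.
outcomes = {
    "book-flight": [True, True, True, True],
    "refund-order": [True, False, True, True],
}
print(reliability_summary(outcomes))
```

In this sample the per-run rate is 0.875 while the all-runs rate is 0.5, which shows how an average can hide inconsistency on individual tasks.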

### Key Observations

- The framework balances **outcome** (e.g., task completion) and **process** (e.g., reasoning) evaluations.

- **Safety and Alignment** is a standalone pillar, reflecting its criticality in trustworthy AI.

- **Evaluation Data** and **Tooling** components suggest a focus on reproducibility and scalability.

- Arrows indicate a top-down flow: objectives → process → implementation.

### Interpretation

This framework provides a **comprehensive, multi-dimensional evaluation strategy** for LLM agents. By separating objectives (what to measure) from processes (how to measure), it ensures both **effectiveness** (did the agent succeed?) and **reliability** (can it be trusted?). The inclusion of **human/LLM judges** and **domain-specific data** acknowledges the complexity of real-world applications. The emphasis on **safety** and **interaction modes** aligns with ethical AI development trends. Overall, the flowchart advocates for a holistic approach to agent evaluation, critical for deploying robust and responsible LLM systems.
