## Diagram: Variational Autoencoder (VAE) Architecture with Deterministic Router Network

### Overview

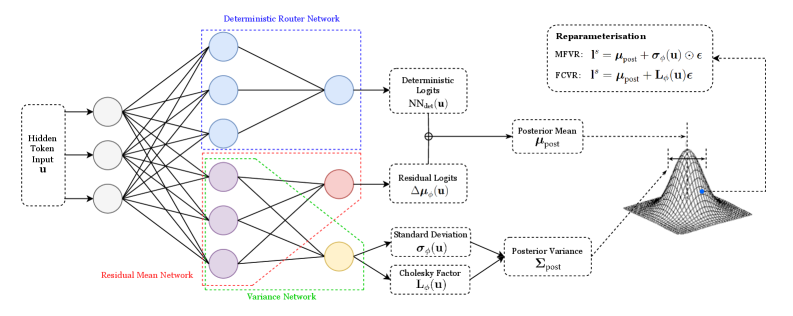

The diagram illustrates a Variational Autoencoder (VAE) architecture with a deterministic router network. A hidden token input **u** feeds three networks: a deterministic router network, a residual mean network, and a variance network. Their outputs define the posterior mean **μ_post** and posterior variance **Σ_post**, from which a sample is drawn through one of two reparameterization equations. A Gaussian curve visualizes the resulting distribution.

### Components/Axes

1. **Input Layer**:

- "Hidden Token Input **u**"

2. **Deterministic Router Network**:

- Blue-boxed section with 4 nodes

- Outputs to "Deterministic Logits **NN_det(u)**"

3. **Residual Mean Network**:

- Red-boxed section with 4 nodes

- Outputs to "Residual Logits **Δμ_φ(u)**"

4. **Variance Network**:

- Green-boxed section with 4 nodes

- Outputs to "Standard Deviation **σ_φ(u)**" and "Cholesky Factor **L_φ(u)**"

5. **Posterior Parameters**:

- "Posterior Mean **μ_post**"

- "Posterior Variance **Σ_post**"

6. **Reparameterization**:

- **MFVR**: **l**^s = **μ_post** + **σ_φ(u)** ⊙ **ε**

- **FCVR**: **l**^s = **μ_post** + **L_φ(u)** **ε**

7. **Gaussian Distribution**:

- Visualized with **μ_post** (mean) and **Σ_post** (variance)
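The two reparameterization equations in item 6 imply different Gaussian families for the sample **l**^s. The diagram does not state the distribution of **ε**; assuming it is standard normal (the usual convention for this kind of reparameterization), the implied distributions can be sketched as:

```latex
\begin{aligned}
\text{MFVR:}\quad
  \mathbf{l}^{s} &= \boldsymbol{\mu}_{\text{post}} + \boldsymbol{\sigma}_{\phi}(\mathbf{u}) \odot \boldsymbol{\epsilon}
  &&\Rightarrow\quad
  \mathbf{l}^{s} \sim \mathcal{N}\!\big(\boldsymbol{\mu}_{\text{post}},\ \operatorname{diag}\big(\boldsymbol{\sigma}_{\phi}(\mathbf{u})^{2}\big)\big) \\
\text{FCVR:}\quad
  \mathbf{l}^{s} &= \boldsymbol{\mu}_{\text{post}} + \mathbf{L}_{\phi}(\mathbf{u})\,\boldsymbol{\epsilon}
  &&\Rightarrow\quad
  \mathbf{l}^{s} \sim \mathcal{N}\!\big(\boldsymbol{\mu}_{\text{post}},\ \mathbf{L}_{\phi}(\mathbf{u})\,\mathbf{L}_{\phi}(\mathbf{u})^{\top}\big),
  \qquad \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I})
\end{aligned}
```

Under this reading, **Σ_post** is diagonal in the MFVR case and a full matrix in the FCVR case.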

### Spatial Grounding

- **Top-left**: Hidden Token Input **u** feeds into all networks

- **Center-left**: Deterministic Router Network (blue)

- **Center**: Residual Mean Network (red) and Variance Network (green)

- **Right**: Posterior parameters and reparameterization equations

- **Bottom-right**: Gaussian curve visualization

### Detailed Analysis

1. **Data Flow**:

- Input **u** → Deterministic Router Network → Deterministic Logits **NN_det(u)**

- Input **u** → Residual Mean Network → Residual Logits **Δμ_φ(u)**

- Input **u** → Variance Network → Standard Deviation **σ_φ(u)** and Cholesky Factor **L_φ(u)**

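- Residual Logits **Δμ_φ(u)** → Posterior Mean **μ_post** (the "residual" naming suggests it is added to the Deterministic Logits, i.e. **μ_post** = **NN_det(u)** + **Δμ_φ(u)**); Variance Network outputs → Posterior Variance **Σ_post**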

- Posterior parameters used in reparameterization equations

2. **Equations**:

- **MFVR** (Mean-Field Variational Reparameterization):

**l**^s = **μ_post** + **σ_φ(u)** ⊙ **ε**

- **FCVR** (Full-Covariance Variational Reparameterization, since the Cholesky factor **L_φ(u)** parameterizes a full covariance matrix):

**l**^s = **μ_post** + **L_φ(u)** **ε**

3. **Gaussian Visualization**:

- Blue dot represents a sampled point

- Curve shape determined by **μ_post** (center) and **Σ_post** (spread)
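The data flow and the two sampling equations above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the diagram's actual implementation: each network is replaced by a single hypothetical linear layer with made-up weights, **μ_post** is assumed to be the sum of the deterministic and residual logits, the standard deviation is made positive with a softplus (an assumption), and the Cholesky factor is a toy lower-triangular matrix rather than a network output.

```python
import math
import random


def linear(weights, bias, x):
    """Compute W x + b, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def softplus(z):
    """Smooth positive map, used here (as an assumption) to keep sigma > 0."""
    return math.log1p(math.exp(z))


def sample_mfvr(mu_post, sigma, eps):
    """MFVR: l^s = mu_post + sigma ⊙ eps (elementwise product)."""
    return [m + s * e for m, s, e in zip(mu_post, sigma, eps)]


def sample_fcvr(mu_post, chol, eps):
    """FCVR: l^s = mu_post + L eps (matrix-vector product)."""
    return [m + sum(l_ij * e for l_ij, e in zip(row, eps)) for m, row in zip(mu_post, chol)]


# Hypothetical toy input and weights: 2-dimensional u, 3 output logits.
u = [0.5, -1.0]
W_det, b_det = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], [0.0, 0.0, 0.0]
W_res, b_res = [[0.1, 0.0], [0.0, 0.1], [0.1, -0.1]], [0.0, 0.0, 0.0]
W_sig, b_sig = [[0.2, 0.0], [0.0, 0.2], [0.1, 0.1]], [0.0, 0.0, 0.0]

det_logits = linear(W_det, b_det, u)   # stands in for NN_det(u)
residual = linear(W_res, b_res, u)     # stands in for Δμ_φ(u)
sigma = [softplus(z) for z in linear(W_sig, b_sig, u)]  # stands in for σ_φ(u)

# Assumption: the posterior mean is deterministic logits plus the residual.
mu_post = [d + r for d, r in zip(det_logits, residual)]

# Toy Cholesky factor standing in for L_φ(u): lower triangular, positive diagonal.
chol = [
    [sigma[0], 0.0, 0.0],
    [0.1, sigma[1], 0.0],
    [-0.2, 0.05, sigma[2]],
]

rng = random.Random(0)
eps = [rng.gauss(0.0, 1.0) for _ in range(3)]  # ε assumed standard normal

l_mf = sample_mfvr(mu_post, sigma, eps)
l_fc = sample_fcvr(mu_post, chol, eps)
print("MFVR sample:", l_mf)
print("FCVR sample:", l_fc)
```

Setting `eps` to zeros returns `mu_post` from both functions, and a diagonal `chol` makes `sample_fcvr` reduce to `sample_mfvr`, which shows that the mean-field form is a special case of the Cholesky form.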

### Key Observations

1. The deterministic router network maps the hidden token input **u** to point-estimate logits **NN_det(u)**, with no sampling involved

2. Residual and variance networks enable separate modeling of mean and uncertainty

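3. Cholesky Factor **L_φ(u)** parameterizes a full covariance matrix as **L_φ(u)** **L_φ(u)**ᵀ, which is symmetric and positive semi-definite by construction

4. Reparameterization writes the sample **l**^s as a deterministic function of the posterior parameters and the noise **ε**, so gradients can pass through the sampling step

5. Gaussian curve depicts the distribution from which **l**^s is sampled, centered at **μ_post** with spread set by **Σ_post**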
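The role of the Cholesky factor in observation 3 can be checked numerically. The sketch below uses a made-up 2×2 lower-triangular matrix (not a value from the diagram) to build the covariance L Lᵀ and confirm that it is symmetric and that the quadratic form xᵀ Σ x equals the squared norm of Lᵀ x, which is why it can never be negative.

```python
def covariance_from_cholesky(chol):
    """Return Σ = L Lᵀ for a square matrix L given as a list of rows."""
    n = len(chol)
    return [[sum(chol[i][k] * chol[j][k] for k in range(n)) for j in range(n)]
            for i in range(n)]


def quadratic_form(cov, x):
    """Return xᵀ Σ x."""
    return sum(x[i] * cov[i][j] * x[j] for i in range(len(x)) for j in range(len(x)))


def squared_norm_lt_x(chol, x):
    """Return ||Lᵀ x||², which equals xᵀ (L Lᵀ) x."""
    n = len(chol)
    lt_x = [sum(chol[k][i] * x[k] for k in range(n)) for i in range(n)]
    return sum(v * v for v in lt_x)


# Hypothetical Cholesky factor: lower triangular with a positive diagonal.
chol = [[1.0, 0.0],
        [0.5, 2.0]]

cov = covariance_from_cholesky(chol)  # [[1.0, 0.5], [0.5, 4.25]]
x = [1.0, -1.0]

print("covariance:", cov)
print("x^T Σ x   :", quadratic_form(cov, x))
print("||L^T x||²:", squared_norm_lt_x(chol, x))
```

Because any real matrix L yields a valid covariance this way, a network can output the entries of L freely (typically with a positivity constraint on the diagonal) without a separate validity check on **Σ_post**.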

### Interpretation

This architecture demonstrates a hybrid approach combining deterministic routing with probabilistic modeling. The deterministic router network supplies point-estimate logits **NN_det(u)**, while the residual mean and variance networks model the uncertainty around them. The reparameterization equations show how a sample **l**^s combines the posterior parameters with injected noise **ε**, which keeps the sampling step differentiable with respect to those parameters. The Gaussian visualization emphasizes the VAE's core principle of learning data distributions rather than point estimates. The architecture suggests applications in tasks requiring both structured representation learning and uncertainty quantification, such as generative modeling of structured data with inherent variability.