
## Line Chart: Accuracy vs. Model Size

### Overview

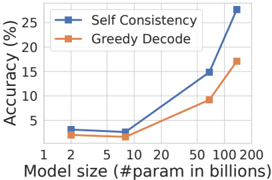

This line chart compares the accuracy of two decoding methods, "Self Consistency" and "Greedy Decode", across model sizes. Accuracy is reported in percent (%), and model size in billions of parameters. The chart shows how accuracy scales with model size under each method.

### Components/Axes

* **X-axis:** "Model size (#param in billions)". Tick labels appear at 1, 2, 5, 10, 20, 50, 100, and 200.

* **Y-axis:** "Accuracy (%)". Scale ranges from 0 to 25, with increments of 5.

* **Legend:** Located in the top-right corner.

    * "Self Consistency": represented by a blue line with triangle markers.

    * "Greedy Decode": represented by an orange line with square markers.

* **Gridlines:** Horizontal and vertical gridlines are present to aid in reading values.

### Detailed Analysis

**Self Consistency (Blue Line):**

The blue line representing "Self Consistency" shows a generally upward trend.

* At Model Size = 1 billion parameters, Accuracy ≈ 2%.

* At Model Size = 2 billion parameters, Accuracy ≈ 3%.

* At Model Size = 5 billion parameters, Accuracy ≈ 3%.

* At Model Size = 10 billion parameters, Accuracy ≈ 4%.

* At Model Size = 20 billion parameters, Accuracy ≈ 8%.

* At Model Size = 50 billion parameters, Accuracy ≈ 12%.

* At Model Size = 100 billion parameters, Accuracy ≈ 16%.

* At Model Size = 200 billion parameters, Accuracy ≈ 25%.

**Greedy Decode (Orange Line):**

The orange line representing "Greedy Decode" also shows an upward trend but lies at or below "Self Consistency" at every model size.

* At Model Size = 1 billion parameters, Accuracy ≈ 2%.

* At Model Size = 2 billion parameters, Accuracy ≈ 3%.

* At Model Size = 5 billion parameters, Accuracy ≈ 3%.

* At Model Size = 10 billion parameters, Accuracy ≈ 3%.

* At Model Size = 20 billion parameters, Accuracy ≈ 5%.

* At Model Size = 50 billion parameters, Accuracy ≈ 8%.

* At Model Size = 100 billion parameters, Accuracy ≈ 10%.

* At Model Size = 200 billion parameters, Accuracy ≈ 18%.

### Key Observations

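* "Self Consistency" never falls below "Greedy Decode": the two methods are roughly tied at 1, 2, and 5 billion parameters, and "Self Consistency" leads at every size from 10 billion onward.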

* The accuracy gap between the two methods widens as the model size increases.

* Both methods show relatively little improvement in accuracy between 1 and 10 billion parameters.

* The most significant gains in accuracy for both methods occur when the model size exceeds 20 billion parameters.

* The "Self Consistency" method shows a particularly steep increase in accuracy between 50 and 200 billion parameters.
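As a quick check on the observations about the gap, here is a minimal Python sketch that computes the per-size difference between the two methods. It assumes the approximate values read off the chart in the Detailed Analysis section; these are visual estimates, not the underlying published numbers, and the function names are illustrative.

```python
# Approximate accuracies (%) read off the chart. These are visual estimates,
# not the published data behind the figure.
MODEL_SIZES_B = [1, 2, 5, 10, 20, 50, 100, 200]
SELF_CONSISTENCY = [2, 3, 3, 4, 8, 12, 16, 25]
GREEDY_DECODE = [2, 3, 3, 3, 5, 8, 10, 18]


def accuracy_gaps(sc, greedy):
    """Return the per-size accuracy gap in points (self-consistency minus greedy)."""
    return [s - g for s, g in zip(sc, greedy)]


def is_non_decreasing(values):
    """Return True if each value is greater than or equal to the one before it."""
    return all(a <= b for a, b in zip(values, values[1:]))


gaps = accuracy_gaps(SELF_CONSISTENCY, GREEDY_DECODE)
for size, gap in zip(MODEL_SIZES_B, gaps):
    print(f"{size:>4}B params: gap = {gap} points")

print("self-consistency never behind:", all(g >= 0 for g in gaps))
print("gap never shrinks:", is_non_decreasing(gaps))
```

With these estimates the gap is 0 points through 5 billion parameters and grows to about 7 points at 200 billion. Because the inputs are read from the plot, small differences such as the 1-point gap at 10 billion are within reading error.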

### Interpretation

The data suggests that increasing model size generally improves accuracy under both decoding methods, and that "Self Consistency" is the more effective strategy, especially as model size grows. One plausible explanation is that "Self Consistency" samples multiple outputs and aggregates them, by majority vote over their final answers, into a more robust answer, which may pay off more with larger, more capable models.

The relatively flat curves for both methods at smaller sizes (1 to 10 billion parameters) indicate that the benefits of added capacity are limited until a certain scale is reached. The substantial gains at larger sizes (50 to 200 billion parameters) suggest that these models can capture more complex patterns and relationships. Because "Greedy Decode" also improves markedly in this range (from about 8% to 18%), "Self Consistency" appears to add to these gains rather than being a prerequisite for them.

The performance difference between the two methods likely stems from an inherent limitation of "Greedy Decode": it selects the most probable token at each step without considering alternatives. This can lead to suboptimal results, especially in complex tasks where several solution paths exist and the locally most likely step does not lead to the correct answer.
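To make the contrast between the two decoding strategies concrete, here is a minimal Python sketch on a hypothetical toy model. `TOY_MODEL` and every function name are illustrative assumptions, not taken from the chart's source, and this is a sketch of the general idea rather than the exact procedure behind the figure. Greedy decoding takes the most probable token at each step; self-consistency samples several complete outputs and keeps the most frequent final answer.

```python
import random
from collections import Counter

# Hypothetical toy "language model": maps a prefix (tuple of tokens) to a
# next-token distribution. Each complete sequence is one reasoning step
# followed by a final answer token. Purely illustrative; no real model here.
TOY_MODEL = {
    (): {"path_a": 0.40, "path_b": 0.35, "path_c": 0.25},
    ("path_a",): {"answer=7": 1.0},
    ("path_b",): {"answer=8": 1.0},
    ("path_c",): {"answer=8": 1.0},
}


def greedy_decode(model):
    """Append the single most probable token at each step until no continuation exists."""
    prefix = ()
    while prefix in model:
        dist = model[prefix]
        prefix += (max(dist, key=dist.get),)
    return prefix


def sample_decode(model, rng):
    """Sample one complete sequence, drawing each token from the model's distribution."""
    prefix = ()
    while prefix in model:
        dist = model[prefix]
        tokens = list(dist)
        weights = [dist[t] for t in tokens]
        prefix += (rng.choices(tokens, weights=weights, k=1)[0],)
    return prefix


def final_answer(sequence):
    """Treat the last token of a sequence as its final answer."""
    return sequence[-1]


def self_consistency(model, num_samples, seed=0):
    """Sample several sequences and return the most common final answer (majority vote)."""
    rng = random.Random(seed)
    answers = [final_answer(sample_decode(model, rng)) for _ in range(num_samples)]
    return Counter(answers).most_common(1)[0][0]


print("greedy:", final_answer(greedy_decode(TOY_MODEL)))
print("self-consistency:", self_consistency(TOY_MODEL, num_samples=40))
```

Greedy decoding commits to `path_a`, the single most likely first step, and returns `answer=7`, even though 60% of the probability mass in this toy model leads to `answer=8`. With enough samples, majority voting tends to recover `answer=8`, which mirrors the limitation of greedy decoding described above.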