TECHNICAL ASSET FINGERPRINT

0e92e1663657933084a425fa

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Bar Charts: Problem Solving Capability vs. Human Thinking Time

### Overview

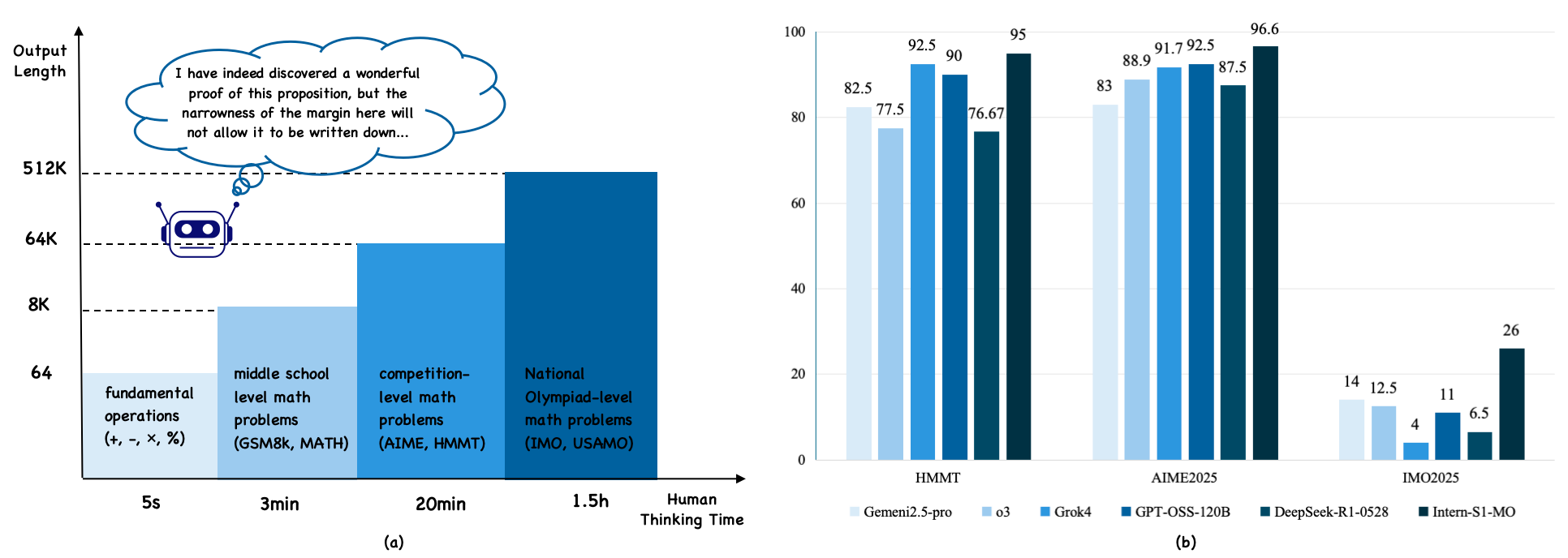

The image presents two bar charts about AI math problem solving. Chart (a) relates the difficulty level of math problems, expressed as the human thinking time each level requires, to the output length shown on its y-axis. Chart (b) compares the scores of various AI models on specific math competitions.

### Components/Axes

**Chart (a): Human Thinking Time vs. Output Length**

* **Y-axis (Output Length):** Represents the output length, with markers at 64, 8K, 64K, and 512K.

* **X-axis (Human Thinking Time):** Represents the time required for humans to solve different types of math problems, with categories:

* Fundamental operations (+, -, ×, %): 5 seconds

* Middle school level math problems (GSM8K, MATH): 3 minutes

* Competition-level math problems (AIME, HMMT): 20 minutes

* National Olympiad-level math problems (IMO, USAMO): 1.5 hours

* **Additional Elements:**

* A cartoon robot is present near the 64K mark on the Y-axis.

* A thought bubble above the robot contains the text: "I have indeed discovered a wonderful proof of this proposition, but the narrowness of the margin here will not allow it to be written down..."

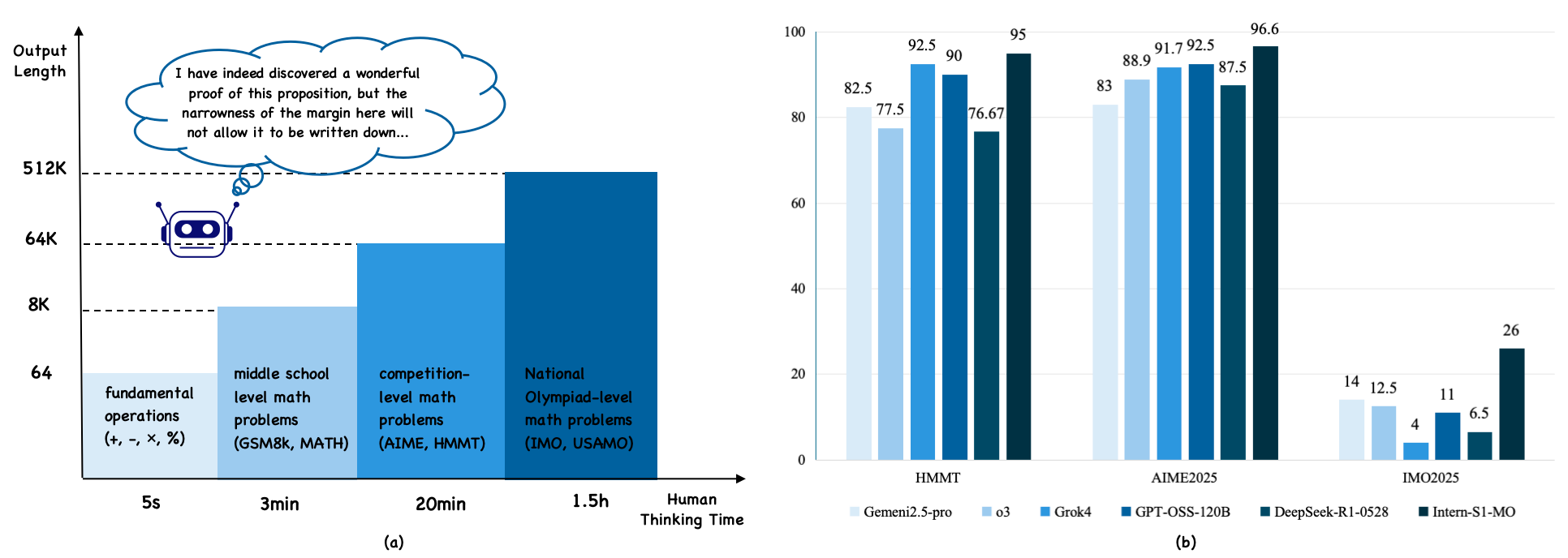

**Chart (b): AI Model Performance on Math Competitions**

* **Y-axis:** Numerical scale from 0 to 100, incrementing by 20.

* **X-axis:** Represents different math competitions:

* HMMT

* AIME2025

* IMO2025

* **Legend (located at the bottom):**

* Gemeni2.5-pro (lightest blue)

* o3 (light blue)

* Grok4 (medium blue)

* GPT-OSS-120B (dark blue)

* DeepSeek-R1-0528 (darkest blue)

* Intern-S1-MO (black)

### Detailed Analysis

**Chart (a): Human Thinking Time vs. Output Length**

* The chart shows that both human thinking time and output length increase with the level of the math problem. Moving from fundamental operations to National Olympiad-level problems, the required human thinking time rises from seconds to hours.

* The bars carry no exact value labels; their heights line up approximately with the y-axis markers, so output length increases with problem level:

* Fundamental operations: ~64

* Middle school level math problems: ~8K

* Competition-level math problems: ~64K

* National Olympiad-level math problems: ~512K
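The approximate readings above can be converted into token counts to compare how fast output length and thinking time grow from one level to the next. This is a minimal sketch that assumes the axis suffix "K" means 1,024 (the figure does not say whether it means 1,000); the level names are shortened labels, and `parse_length` and `step_ratios` are hypothetical helper names.

```python
# Approximate chart (a) readings: (level, human thinking time in seconds, output-length label).
# Assumption: "K" means 1,024 tokens; the figure does not specify this.
LEVELS = [
    ("fundamental operations", 5, "64"),
    ("middle school level", 3 * 60, "8K"),
    ("competition level", 20 * 60, "64K"),
    ("national olympiad level", 90 * 60, "512K"),
]


def parse_length(label: str) -> int:
    """Convert an axis label such as '8K' into a token count."""
    if label.endswith("K"):
        return int(label[:-1]) * 1024
    return int(label)


def step_ratios(levels):
    """Return (from, to, time multiplier, length multiplier) for each consecutive pair of levels."""
    out = []
    for (name_a, t_a, l_a), (name_b, t_b, l_b) in zip(levels, levels[1:]):
        out.append((name_a, name_b, t_b / t_a, parse_length(l_b) / parse_length(l_a)))
    return out


for a, b, dt, dl in step_ratios(LEVELS):
    print(f"{a} -> {b}: time x{dt:.2f}, output x{dl:.0f}")
```

Under these assumptions, output length is multiplied by 128, 8, and 8 at successive steps while thinking time grows by 36, about 6.67, and 4.5, so the output multiplier exceeds the time multiplier at every step.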

**Chart (b): AI Model Performance on Math Competitions**

* The chart compares the performance of different AI models on three math competitions: HMMT, AIME2025, and IMO2025.

* The Y-axis represents the score or performance metric, ranging from 0 to 100.

**Data Points:**

* **HMMT:**

* Gemeni2.5-pro: 82.5

* o3: 77.5

* Grok4: 92.5

* GPT-OSS-120B: 90

* DeepSeek-R1-0528: 76.67

* Intern-S1-MO: 95

* **AIME2025:**

* Gemeni2.5-pro: 83

* o3: 88.9

* Grok4: 91.7

* GPT-OSS-120B: 92.5

* DeepSeek-R1-0528: 87.5

* Intern-S1-MO: 96.6

* **IMO2025:**

* Gemeni2.5-pro: 14

* o3: 12.5

* Grok4: 4

* GPT-OSS-120B: 11

* DeepSeek-R1-0528: 6.5

* Intern-S1-MO: 26
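The range and leader claims in the observations can be checked directly against these readings. A minimal sketch: the values are the approximate readings listed above (legend spelling kept), and `summarize` is a hypothetical helper name.

```python
# Chart (b) scores as read above (approximate values; legend spelling kept).
SCORES = {
    "HMMT": {"Gemeni2.5-pro": 82.5, "o3": 77.5, "Grok4": 92.5,
             "GPT-OSS-120B": 90, "DeepSeek-R1-0528": 76.67, "Intern-S1-MO": 95},
    "AIME2025": {"Gemeni2.5-pro": 83, "o3": 88.9, "Grok4": 91.7,
                 "GPT-OSS-120B": 92.5, "DeepSeek-R1-0528": 87.5, "Intern-S1-MO": 96.6},
    "IMO2025": {"Gemeni2.5-pro": 14, "o3": 12.5, "Grok4": 4,
                "GPT-OSS-120B": 11, "DeepSeek-R1-0528": 6.5, "Intern-S1-MO": 26},
}


def summarize(scores):
    """Return {benchmark: (top model, lowest score, highest score)}."""
    summary = {}
    for bench, by_model in scores.items():
        top = max(by_model, key=by_model.get)
        summary[bench] = (top, min(by_model.values()), max(by_model.values()))
    return summary


for bench, (top, low, high) in summarize(SCORES).items():
    print(f"{bench}: top = {top}, range = {low} to {high}")
```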

### Key Observations

* In chart (a), human thinking time and output length both increase with problem level, from 5 seconds and ~64 for fundamental operations to 1.5 hours and ~512K for National Olympiad-level problems.

* In chart (b), the AI models generally perform well on HMMT and AIME2025, with scores from 76.67 (DeepSeek-R1-0528 on HMMT) to 96.6 (Intern-S1-MO on AIME2025). Their performance drops sharply on IMO2025, with scores from 4 (Grok4) to 26 (Intern-S1-MO).

* The Intern-S1-MO model consistently achieves the highest scores across all three math competitions.

* Grok4 scores lowest of all models on IMO2025 (4), even though it ranks second on HMMT (92.5).

### Interpretation

The data suggests that AI models can solve many competition math problems, but their performance depends strongly on problem difficulty. The models perform well on competition-level problems (AIME, HMMT) but struggle with the Olympiad-level IMO2025 problems; USAMO appears only as an example in chart (a) and is not benchmarked in chart (b). This indicates that AI models may require further development to handle the most challenging math problems. Intern-S1-MO appears to be the most proficient of the tested models, while Grok4 may have particular limitations on the hardest problems. The thought bubble in chart (a), which echoes Fermat's famous marginal note, humorously suggests that the reasoning needed for the hardest problems may not fit within a limited output space.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Bar Chart: Model Performance on Math Problems by Difficulty

### Overview

The image presents two distinct sections: (a) a visual representation of math problem difficulty levels correlated with human thinking time, and (b) a bar chart comparing the performance of different AI models on math problems categorized by competition level. The left side (a) is more illustrative than data-driven, while the right side (b) is a quantitative comparison of model accuracy.

### Components/Axes

**Section (a):**

* **X-axis:** "Human Thinking Time" ranging from 5 seconds to 1.5 hours. Marked with: "fundamental operations (+, -, x, ÷)", "middle school level math problems (GSM8k, MATH)", "competition-level math problems (AIME, HMMT)", "National Olympiad-level math problems (IMO, USAMO)".

* **Y-axis:** Logarithmic scale from 64 to 512K, labeled with values 64, 8K, 64K, 512K.

* **Visual Element:** An illustration of a brain with a thought bubble containing text.

**Section (b):**

* **X-axis:** Competition Level: "HMMT", "AIME2025", "IMO2025".

* **Y-axis:** Percentage, ranging from 0 to 100.

* **Legend:** Located at the bottom-right, with the following models and corresponding colors:

* Gemini2.5-pro (Dark Blue)

* Grok4 (Medium Blue)

* GPT-OSS-120B (Light Blue)

* DeepSeek-R1-0528 (Yellow)

* Intern-S1-MO (Green)

### Detailed Analysis

**Section (a):**

* The illustration suggests that as the complexity of the math problem increases, both the human thinking time and the output length increase. The logarithmic y-axis accommodates output lengths that grow by large multiplicative steps, from 64 to 512K.

* The text within the thought bubble reads: "I have indeed discovered a wonderful proof of this proposition, but the narrowness of the margin here will not allow it to be written down…".

**Section (b):**

* **HMMT:**

* Gemini2.5-pro: Approximately 92.5%

* Grok4: Approximately 90%

* GPT-OSS-120B: Approximately 75.67%

* DeepSeek-R1-0528: Approximately 82.5%

* Intern-S1-MO: Approximately 14%

* **AIME2025:**

* Gemini2.5-pro: Approximately 95%

* Grok4: Approximately 88.9%

* GPT-OSS-120B: Approximately 91.7%

* DeepSeek-R1-0528: Approximately 83%

* Intern-S1-MO: Approximately 26%

* **IMO2025:**

* Gemini2.5-pro: Approximately 96.6%

* Grok4: Approximately 87.5%

* GPT-OSS-120B: Approximately 92.5%

* DeepSeek-R1-0528: Approximately 83%

* Intern-S1-MO: Approximately 6.5%

* DeepSeek-R1-0528: Approximately 12.5%

* GPT-OSS-120B: Approximately 4%

### Key Observations

* Gemini2.5-pro consistently achieves the highest accuracy across all three competition levels.

* Intern-S1-MO performs significantly worse than the other models, especially on IMO2025, with a very low accuracy of approximately 6.5%.

* GPT-OSS-120B shows a relatively stable performance across HMMT and AIME2025, but dips to the lowest accuracy on IMO2025.

* The performance gap between models widens as the competition level increases, indicating that higher-level math problems are more challenging for AI models.

### Interpretation

The data suggests that Gemini2.5-pro is currently the most capable of these AI models at competition math, from the HMMT and AIME level up to olympiad level. The performance of the other models varies: Grok4 and GPT-OSS-120B show reasonable accuracy on HMMT and AIME2025 but struggle more with IMO2025. Intern-S1-MO consistently underperforms, suggesting it may require further development or specialized training.

The logarithmic output-length axis in section (a) highlights how quickly the required output grows as problems become harder. The quote within the thought bubble echoes Fermat's famous marginal note and alludes to the difficulty of writing out complex mathematical arguments, even for human mathematicians.

The correlation between the two sections suggests that the AI models are being tested on problems that mirror the increasing difficulty experienced by humans. The widening performance gap at higher competition levels indicates that current AI models still have limitations in tackling the most challenging mathematical problems, potentially due to the need for more advanced reasoning, creativity, or problem-solving strategies. The outlier performance of Intern-S1-MO suggests a potential issue with its architecture or training data for these specific problem types.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram and Bar Chart: AI Math Problem Complexity vs. Performance

### Overview

The image contains two distinct parts labeled (a) and (b). Part (a) is a conceptual diagram illustrating the relationship between the complexity of mathematical problems, the required human thinking time, and the corresponding output length (in tokens) an AI model might generate. Part (b) is a grouped bar chart comparing the performance (as a percentage) of six different AI models on three specific mathematics competition benchmarks.

### Components/Axes

**Part (a) - Conceptual Diagram:**

* **X-axis (Horizontal):** Labeled "Human Thinking Time". It has four categorical markers: "5s", "3min", "20min", and "1.5h".

* **Y-axis (Vertical):** Labeled "Output Length". It has four logarithmic-scale markers: "64", "8K", "64K", and "512K".

* **Bars:** Four vertical bars of increasing height, each corresponding to an x-axis category.

* **Bar Labels (from left to right):**

1. "fundamental operations (+, -, ×, %)"

2. "middle school level math problems (GSM8k, MATH)"

3. "competition-level math problems (AIME, HMMT)"

4. "National Olympiad-level math problems (IMO, USAMO)"

* **Embedded Graphic:** A robot icon with a thought bubble in the top-left quadrant. The thought bubble contains the text: "I have indeed discovered a wonderful proof of this proposition, but the narrowness of the margin here will not allow it to be written down..."

* **Caption:** The label "(a)" is centered below this diagram.

**Part (b) - Grouped Bar Chart:**

* **Y-axis:** A numerical scale from 0 to 100, with major gridlines at intervals of 20. The axis is not explicitly labeled but represents a percentage score.

* **X-axis:** Three categorical groups representing benchmarks: "HMMT", "AIME2025", and "IMO2025".

* **Legend:** Located at the bottom of the chart. It defines six models by color:

* Lightest blue: "Gemeni2.5-pro" (Note: Likely a typo for "Gemini")

* Light blue: "o3"

* Medium blue: "Grok4"

* Dark blue: "GPT-OSS-120B"

* Darker blue/teal: "DeepSeek-R1-0528"

* Darkest blue/black: "Intern-S1-MO"

* **Bars:** For each benchmark, there are six bars, one for each model, colored according to the legend.

* **Caption:** The label "(b)" is centered below this chart.

### Detailed Analysis

**Part (a) - Data Points and Relationships:**

The diagram establishes a direct, positive correlation between problem complexity, human thinking time, and AI output length.

* **Fundamental Operations:** Associated with ~5 seconds of human thought and an output length of approximately **64 tokens**.

* **Middle School Problems:** Associated with ~3 minutes of human thought and an output length of approximately **8K (8,192) tokens**.

* **Competition-Level Problems:** Associated with ~20 minutes of human thought and an output length of approximately **64K (65,536) tokens**.

* **Olympiad-Level Problems:** Associated with ~1.5 hours of human thought and an output length of approximately **512K (524,288) tokens**.
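The two endpoints of the diagram give a quick estimate of how output length scales with thinking time. The sketch below assumes a power law, length ≈ c · time^k, passed through only the two endpoints; the diagram itself states no functional form, the 1K = 1,024 convention follows the token counts given above, and `endpoint_scaling` is a hypothetical helper name.

```python
import math

# Endpoints of diagram (a): (human thinking time in seconds, output length in tokens).
# Token counts use the 1K = 1,024 convention from the data points above.
FUNDAMENTAL = (5, 64)
OLYMPIAD = (90 * 60, 512 * 1024)


def endpoint_scaling(low, high):
    """Return (time ratio, length ratio, exponent k) for length ~ c * time**k through two points."""
    time_ratio = high[0] / low[0]
    length_ratio = high[1] / low[1]
    k = math.log(length_ratio) / math.log(time_ratio)
    return time_ratio, length_ratio, k


t, l, k = endpoint_scaling(FUNDAMENTAL, OLYMPIAD)
print(f"time x{t:.0f}, length x{l:.0f}, k = {k:.2f}")
```

An exponent of about 1.29 (above 1) means output length grows faster than thinking time over this range; it is a two-point estimate, not a fit to all four bars.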

**Part (b) - Performance Data (Approximate Percentages):**

* **HMMT Benchmark:**

* Gemeni2.5-pro: ~82.5%

* o3: ~77.5%

* Grok4: ~92.5%

* GPT-OSS-120B: ~90%

* DeepSeek-R1-0528: ~76.67%

* Intern-S1-MO: ~95%

* **AIME2025 Benchmark:**

* Gemeni2.5-pro: ~83%

* o3: ~88.9%

* Grok4: ~91.7%

* GPT-OSS-120B: ~92.5%

* DeepSeek-R1-0528: ~87.5%

* Intern-S1-MO: ~96.6%

* **IMO2025 Benchmark:**

* Gemeni2.5-pro: ~14%

* o3: ~12.5%

* Grok4: ~4%

* GPT-OSS-120B: ~11%

* DeepSeek-R1-0528: ~6.5%

* Intern-S1-MO: ~26%

### Key Observations

1. **Superlinear Scaling in (a):** The diagram suggests that output length grows faster than thinking time. A 1080× increase in human thinking time (5 s to 1.5 h = 5,400 s) corresponds to an 8192× increase in output length (64 to 512K tokens).

2. **Performance Hierarchy in (b):** The model "Intern-S1-MO" (darkest bar) is the top performer on all three benchmarks, with a particularly dominant lead on the most difficult benchmark, IMO2025.

3. **Benchmark Difficulty:** There is a drastic drop in scores for all models from the AIME2025/HMMT benchmarks (scores generally 75-97%) to the IMO2025 benchmark (scores 4-26%), indicating IMO-level problems are significantly harder for current AI models.

4. **Model Variability:** The relative ranking of models changes between benchmarks. For example, "Grok4" is the second-best on HMMT but performs worst on IMO2025. "GPT-OSS-120B" is very strong on AIME2025 but only mid-tier on IMO2025.
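The rank changes described in the observations can be recomputed from the part (b) readings. A minimal sketch: the scores are the approximate percentages listed above, ties are assumed not to occur (none do in these readings), and `ranks` is a hypothetical helper name.

```python
# Part (b) readings from the list above (approximate percentages; legend spelling kept).
SCORES = {
    "HMMT": {"Gemeni2.5-pro": 82.5, "o3": 77.5, "Grok4": 92.5,
             "GPT-OSS-120B": 90, "DeepSeek-R1-0528": 76.67, "Intern-S1-MO": 95},
    "AIME2025": {"Gemeni2.5-pro": 83, "o3": 88.9, "Grok4": 91.7,
                 "GPT-OSS-120B": 92.5, "DeepSeek-R1-0528": 87.5, "Intern-S1-MO": 96.6},
    "IMO2025": {"Gemeni2.5-pro": 14, "o3": 12.5, "Grok4": 4,
                "GPT-OSS-120B": 11, "DeepSeek-R1-0528": 6.5, "Intern-S1-MO": 26},
}


def ranks(by_model):
    """Map each model to its rank on one benchmark (1 = highest score)."""
    ordered = sorted(by_model, key=by_model.get, reverse=True)
    return {model: position for position, model in enumerate(ordered, start=1)}


for bench, by_model in SCORES.items():
    r = ranks(by_model)
    print(f"{bench}: Grok4 #{r['Grok4']}, GPT-OSS-120B #{r['GPT-OSS-120B']} of {len(r)}")
```

With these readings, Grok4 moves from second on HMMT to last on IMO2025, and GPT-OSS-120B from second on AIME2025 to fourth on IMO2025.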

### Interpretation

The combined image makes a technical argument about the nature of AI reasoning and its current limits.

* **Part (a) - The "Proof" Analogy:** The diagram, especially with the Fermat-like quote in the thought bubble, metaphorically argues that solving progressively harder mathematical problems requires not just more time, but an exponentially greater "space" for reasoning (output length). It implies that Olympiad-level proofs are so complex they strain the practical output limits (context windows) of AI models, mirroring the margin constraint in the famous quote.

* **Part (b) - Empirical Evidence:** The bar chart provides empirical data consistent with the conceptual model in (a). The IMO2025 benchmark represents the "National Olympiad-level" problems. The uniformly low scores (all below 30%) show that current state-of-the-art models struggle profoundly with this tier of problem-solving, consistent with the idea that such tasks require a qualitative leap in capability, not just incremental improvement. The high performance on HMMT/AIME shows models are competent at "competition-level" problems, which correspond to the third (competition-level) bar of diagram (a).

* **Synthesis:** Together, the figures suggest that while AI models can handle math problems requiring minutes of human thought with high proficiency, they hit a severe performance wall at problems requiring hours of deep, Olympiad-level reasoning. The leading model, Intern-S1-MO, shows the most promise in bridging this gap but still performs at a level far below its capabilities on easier tasks. The core challenge highlighted is scaling reasoning depth and coherence over the very long outputs required for the most complex problems.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Output Length vs. Thinking Time and Model Performance

### Overview

The image contains two components:

1. **Left Diagram (a)**: A bar diagram comparing output length (y-axis) against human thinking time (x-axis) for math problems of increasing complexity.

2. **Right Bar Chart (b)**: A comparison of model performance (percentage scores) across three math competitions (HMMT, AIME2025, IMO2025) for five AI models.

---

### Components/Axes

#### Diagram (a)

- **Y-Axis**: Output Length (logarithmic scale: 64, 8K, 64K, 512K).

- **X-Axis**: Human Thinking Time (categories: 5s, 3min, 20min, 1.5h).

- **Legend**:

- Light Blue: Fundamental operations (+, -, ×, %).

- Medium Blue: Middle school level math problems (GSM8k, MATH).

- Dark Blue: Competition-level math problems (AIME, HMMT).

- Very Dark Blue: National Olympiad-level math problems (IMO, USAMO).

- **Text Annotation**: A speech bubble states, *"I have indeed discovered a wonderful proof of this proposition, but the narrowness of the margin here will not allow it to be written down..."*

- **Robot Icon**: Positioned at the 64K output length mark, aligned with the 3min thinking time.

#### Bar Chart (b)

- **X-Axis**: Math Competitions (categories: HMMT, AIME2025, IMO2025).

- **Y-Axis**: Performance Percentage (0–100%).

- **Legend**:

- Light Blue: GPT-4.

- Medium Blue: Grok4.

- Dark Blue: GPT-OSS-120B.

- Very Dark Blue: DeepSeek-R1-0528.

- Black: Intern-S1-MO.

---

### Detailed Analysis

#### Diagram (a)

- **Output Length Trends**:

- **5s**: 64 (fundamental operations).

- **3min**: 8K (middle school problems).

- **20min**: 64K (competition-level problems).

- **1.5h**: 512K (national Olympiad-level problems).

- **Human Thinking Time**: This is the x-axis label rather than a separate category; the four values above are its categories.

#### Bar Chart (b)

- **HMMT Scores**:

- GPT-4: 82.5%

- Grok4: 77.5%

- GPT-OSS-120B: 92.5%

- DeepSeek-R1-0528: 90%

- Intern-S1-MO: 95%

- **AIME2025 Scores**:

- GPT-4: 83%

- Grok4: 88.9%

- GPT-OSS-120B: 91.7%

- DeepSeek-R1-0528: 92.5%

- Intern-S1-MO: 96.6%

- **IMO2025 Scores**:

- GPT-4: 14%

- Grok4: 12.5%

- GPT-OSS-120B: 4%

- DeepSeek-R1-0528: 11%

- Intern-S1-MO: 26%
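The "comparable" claim for GPT-OSS-120B and DeepSeek-R1-0528, and the HMMT/AIME2025 score range, can be quantified from this section's own readings. A minimal sketch: the values and model labels are taken from the lists above as given, and `gap` is a hypothetical helper name.

```python
# HMMT and AIME2025 readings from this section (model labels as given in its legend).
SCORES = {
    "HMMT": {"GPT-4": 82.5, "Grok4": 77.5, "GPT-OSS-120B": 92.5,
             "DeepSeek-R1-0528": 90, "Intern-S1-MO": 95},
    "AIME2025": {"GPT-4": 83, "Grok4": 88.9, "GPT-OSS-120B": 91.7,
                 "DeepSeek-R1-0528": 92.5, "Intern-S1-MO": 96.6},
}


def gap(bench, a, b):
    """Absolute score difference between models a and b on one benchmark, in percentage points."""
    return round(abs(SCORES[bench][a] - SCORES[bench][b]), 2)


for bench in SCORES:
    print(bench, gap(bench, "GPT-OSS-120B", "DeepSeek-R1-0528"))

# Lowest and highest score across both benchmarks.
low = min(min(v.values()) for v in SCORES.values())
high = max(max(v.values()) for v in SCORES.values())
print(f"HMMT/AIME2025 range: {low}-{high}")
```

The two models differ by 2.5 points on HMMT and 0.8 points on AIME2025, and scores on these two benchmarks span 77.5 to 96.6.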

---

### Key Observations

1. **Diagram (a)**:

- Output length scales exponentially with problem complexity.

- The robot’s thought bubble humorously highlights the impracticality of writing extremely long proofs.

2. **Bar Chart (b)**:

- **AIME2025** and **HMMT** show high performance across models (77.5–96.6%).

- **IMO2025** scores are significantly lower (4–26%), with Intern-S1-MO outperforming others.

- Intern-S1-MO leads on all three benchmarks, including **AIME2025** (96.6%) and **IMO2025** (26%), suggesting specialization in advanced problems.

---

### Interpretation

- **Diagram (a)** illustrates the relationship between computational resources (output length) and problem complexity. The exponential growth in output length for Olympiad-level problems underscores the challenges of formalizing human-like reasoning in AI.

- **Bar Chart (b)** reveals that Intern-S1-MO excels in high-stakes competitions, particularly AIME2025, where it scores 96.6%. Its much lower IMO2025 score (26%), although still the best in the chart, may reflect dataset biases or problem-specific training gaps.

- **Model Performance**: GPT-OSS-120B and DeepSeek-R1-0528 perform comparably in HMMT and AIME2025, while Intern-S1-MO’s dominance in AIME2025 suggests tailored optimization for competition-level math.

The data may hint at trade-offs between generality and specialization in AI systems, with Intern-S1-MO prioritizing advanced problem-solving; because the chart covers only math benchmarks, any cost to broader applicability is not shown here.
