EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

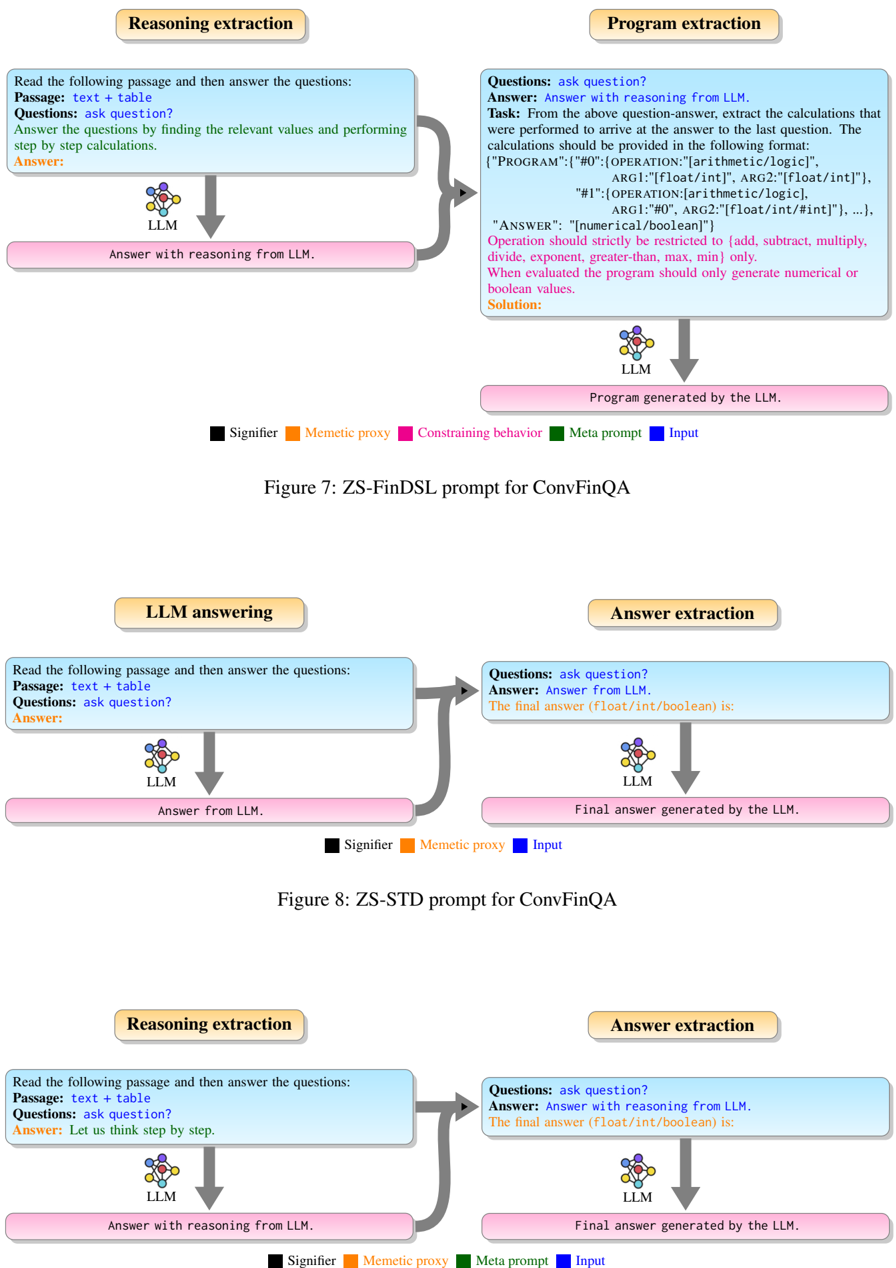

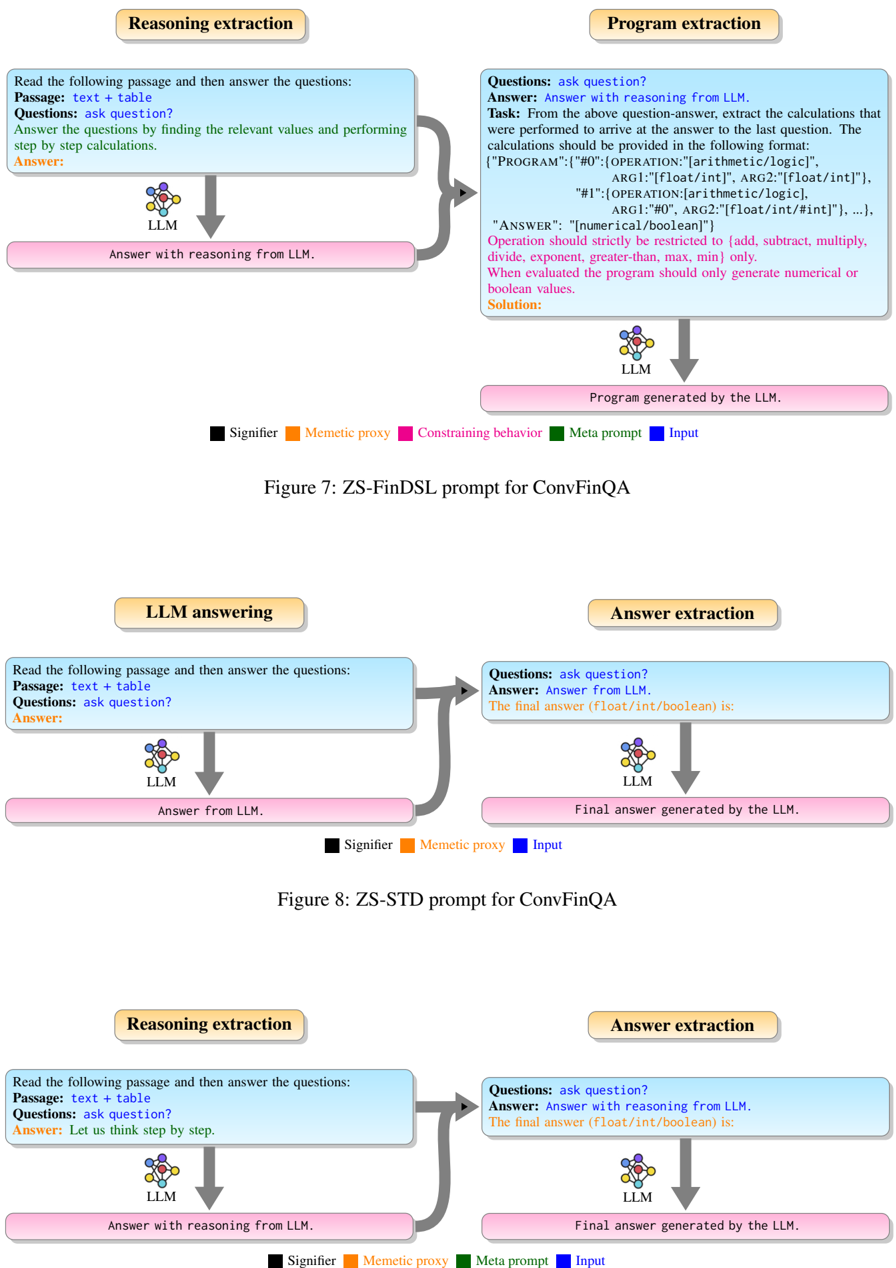

## Diagram: Prompt Engineering Strategies for ConvFinQA

### Overview

The image presents three diagrams, each illustrating a prompt engineering strategy used with a Large Language Model (LLM) for the ConvFinQA task: ZS-FinDSL (Figure 7), ZS-STD (Figure 8), and a third strategy whose caption is not visible. Each diagram shows a two-stage flow: a first prompt containing the passage (text and table) and the questions, followed by a second prompt that extracts either a program or a final answer from the LLM's first response.

### Components/Axes

**General Components (Present in all diagrams):**

* **Input Text Box (Light Blue):** Contains the initial prompt instructions and data.

* **LLM Icon:** Represents the Large Language Model processing the input.

* **Output Text Box (Light Pink):** Displays the LLM's response or generated output.

* **Arrows:** Indicate the flow of information between components.

* **Legend (Bottom):** Defines the color-coded elements:

    * Black: Signifier

    * Orange: Memetic proxy

    * Green: Constraining behavior (Only in Figure 7)

    * Pink: Meta prompt

    * Blue: Input

**Figure 7: ZS-FinDSL prompt for ConvFinQA**

* **Top-Left Box (Reasoning extraction):**

    * Title: Reasoning extraction

    * Text:

        * Read the following passage and then answer the questions:

        * Passage: text + table

        * Questions: ask question?

        * Answer the questions by finding the relevant values and performing step by step calculations.

        * Answer:

* **Top-Right Box (Program extraction):**

    * Title: Program extraction

    * Text:

        * Questions: ask question?

        * Answer: Answer with reasoning from LLM.

        * Task: From the above question-answer, extract the calculations that were performed to arrive at the answer to the last question. The calculations should be provided in the following format:

        * `{"PROGRAM": {"#0": {OPERATION:"[arithmetic/logic]", ARG1:"[float/int]", ARG2:"[float/int]"}, "#1":{OPERATION: [arithmetic/logic], ARG1:"#0", ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}`

        * Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only.

        * When evaluated the program should only generate numerical or boolean values.

        * Solution:

* **Bottom-Left Box:** Answer with reasoning from LLM.

* **Bottom-Right Box:** Program generated by the LLM.
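The two ZS-FinDSL prompts transcribed above can be assembled as plain string templates. The sketch below is one possible way to do that in Python; the template wording is copied from the figure description, while the names `REASONING_TEMPLATE`, `PROGRAM_TEMPLATE`, `FORMAT_SPEC`, `build_reasoning_prompt`, and `build_program_prompt` are hypothetical and not part of the source.

```python
# Prompt text is taken from the figure description; all identifiers are illustrative.
FORMAT_SPEC = (
    '{"PROGRAM": {"#0": {OPERATION:"[arithmetic/logic]", ARG1:"[float/int]", '
    'ARG2:"[float/int]"}, "#1":{OPERATION: [arithmetic/logic], ARG1:"#0", '
    'ARG2:"[float/int/#int]"}, ...}, "ANSWER": "[numerical/boolean]"}'
)

REASONING_TEMPLATE = (
    "Read the following passage and then answer the questions:\n"
    "Passage: {passage}\n"
    "Questions: {questions}\n"
    "Answer the questions by finding the relevant values and performing "
    "step by step calculations.\n"
    "Answer:"
)

# Literal braces in the operation list are doubled so str.format leaves them alone.
PROGRAM_TEMPLATE = (
    "Questions: {questions}\n"
    "Answer: {reasoning}\n"
    "Task: From the above question-answer, extract the calculations that were "
    "performed to arrive at the answer to the last question. The calculations "
    "should be provided in the following format:\n"
    "{format_spec}\n"
    "Operation should strictly be restricted to {{add, subtract, multiply, "
    "divide, exponent, greater-than, max, min}} only.\n"
    "When evaluated the program should only generate numerical or boolean values.\n"
    "Solution:"
)


def build_reasoning_prompt(passage: str, questions: str) -> str:
    """First-stage prompt: passage plus questions, asking for step-by-step calculations."""
    return REASONING_TEMPLATE.format(passage=passage, questions=questions)


def build_program_prompt(questions: str, reasoning: str) -> str:
    """Second-stage prompt: questions plus the first-stage answer, asking for a program."""
    return PROGRAM_TEMPLATE.format(
        questions=questions, reasoning=reasoning, format_spec=FORMAT_SPEC
    )
```

Passing the format specification in as an argument, rather than embedding it in the template, avoids having to escape every brace it contains.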

**Figure 8: ZS-STD prompt for ConvFinQA**

* **Top-Left Box (LLM answering):**

    * Title: LLM answering

    * Text:

        * Read the following passage and then answer the questions:

        * Passage: text + table

        * Questions: ask question?

        * Answer:

* **Top-Right Box (Answer extraction):**

    * Title: Answer extraction

    * Text:

        * Questions: ask question?

        * Answer: Answer from LLM.

        * The final answer (float/int/boolean) is:

* **Bottom-Left Box:** Answer from LLM.

* **Bottom-Right Box:** Final answer generated by the LLM.

**Third Diagram (Unnamed)**

* **Top-Left Box (Reasoning extraction):**

    * Title: Reasoning extraction

    * Text:

        * Read the following passage and then answer the questions:

        * Passage: text + table

        * Questions: ask question?

        * Answer: Let us think step by step.

* **Top-Right Box (Answer extraction):**

    * Title: Answer extraction

    * Text:

        * Questions: ask question?

        * Answer: Answer with reasoning from LLM.

        * The final answer (float/int/boolean) is:

* **Bottom-Left Box:** Answer with reasoning from LLM.

* **Bottom-Right Box:** Final answer generated by the LLM.

### Detailed Analysis

**Figure 7: ZS-FinDSL prompt for ConvFinQA**

1. **Reasoning extraction:** The LLM receives a passage and table, and is prompted to answer questions by finding relevant values and performing step-by-step calculations.

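2. **Program extraction:** The LLM receives the questions together with its own reasoning answer from step 1 and is asked to extract the calculations behind the answer to the last question. The calculations are formatted as a program of numbered steps (`#0`, `#1`, ...), each applying one operation from {add, subtract, multiply, divide, exponent, greater-than, max, min} to float/int arguments or to the result of an earlier step. The program must evaluate to a numerical or boolean value.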

3. The "Reasoning extraction" box connects to the "Program extraction" box with a gray arrow.

4. The LLM icon below the "Reasoning extraction" box connects to the "Answer with reasoning from LLM" box with a downward arrow.

5. The LLM icon below the "Program extraction" box connects to the "Program generated by the LLM" box with a downward arrow.
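A program in the format requested by the "Program extraction" prompt can be evaluated mechanically. The following is a minimal sketch of such an evaluator, assuming the LLM's output has already been normalized to valid JSON with quoted keys (the format string in the prompt leaves `OPERATION`, `ARG1`, and `ARG2` unquoted). The names `OPS`, `resolve`, and `evaluate_program`, the mapping from operation names to Python operators, and the sample numbers are illustrative assumptions, not taken from the figure.

```python
import json
import operator

# Operation names come from the prompt; the Python callables are our assumption.
OPS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "exponent": operator.pow,
    "greater-than": operator.gt,
    "max": max,
    "min": min,
}


def resolve(arg, results):
    """Turn an argument into a value: '#k' refers to the result of step k."""
    if isinstance(arg, str) and arg.startswith("#"):
        return results[arg]
    return float(arg)


def evaluate_program(program):
    """Evaluate steps '#0', '#1', ... in numeric order and return the last result."""
    results = {}
    last = None
    for key in sorted(program, key=lambda k: int(k.lstrip("#"))):
        step = program[key]
        fn = OPS[step["OPERATION"]]
        last = fn(resolve(step["ARG1"], results), resolve(step["ARG2"], results))
        results[key] = last
    return last


# Made-up example: (120 - 100) / 100, written as a two-step program.
raw = (
    '{"PROGRAM": {"#0": {"OPERATION": "subtract", "ARG1": "120", "ARG2": "100"}, '
    '"#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": "100"}}, "ANSWER": "0.2"}'
)
parsed = json.loads(raw)
result = evaluate_program(parsed["PROGRAM"])
print(result)
```

Comparing `result` with the `ANSWER` field gives a simple consistency check between the program and the answer the model reported.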

**Figure 8: ZS-STD prompt for ConvFinQA**

1. **LLM answering:** The LLM receives a passage and table, and is prompted to answer questions.

2. **Answer extraction:** The LLM receives the questions together with its own answer from step 1 and is prompted to state the final answer as a float, integer, or boolean value.

3. The "LLM answering" box connects to the "Answer extraction" box with a gray arrow.

4. The LLM icon below the "LLM answering" box connects to the "Answer from LLM" box with a downward arrow.

5. The LLM icon below the "Answer extraction" box connects to the "Final answer generated by the LLM" box with a downward arrow.
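The answer extraction stage ends with free text that is expected to hold a float, integer, or boolean. A downstream consumer would still need to turn that text into a typed value. The sketch below shows one hedged way to do so; the function name `parse_final_answer` and its handling of "yes"/"no", thousands separators, and surrounding words are assumptions for illustration and are not described in the diagram.

```python
import re


def parse_final_answer(text):
    """Best-effort conversion of the model's final-answer text to bool, int, or float.

    Returns None when no boolean word or number can be found.
    """
    cleaned = text.strip().lower().rstrip(".")
    if cleaned in {"yes", "true"}:
        return True
    if cleaned in {"no", "false"}:
        return False
    # First number in the text, allowing a sign, thousands separators, and decimals.
    match = re.search(r"-?\d[\d,]*(?:\.\d+)?", cleaned)
    if match is None:
        return None
    number = match.group(0).replace(",", "")
    return float(number) if "." in number else int(number)
```

Taking the first number in the text is a deliberate simplification; a stricter parser could reject outputs that contain more than one candidate value.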

**Third Diagram (Unnamed)**

1. **Reasoning extraction:** The LLM receives a passage and table, and is prompted to answer questions by thinking step by step.

2. **Answer extraction:** The LLM receives the questions together with its own reasoning answer from step 1 and is prompted to state the final answer as a float, integer, or boolean value.

3. The "Reasoning extraction" box connects to the "Answer extraction" box with a gray arrow.

4. The LLM icon below the "Reasoning extraction" box connects to the "Answer with reasoning from LLM" box with a downward arrow.

5. The LLM icon below the "Answer extraction" box connects to the "Final answer generated by the LLM" box with a downward arrow.
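The two-stage flow of the third diagram can be sketched as a single function that calls the model twice, feeding the first response into the second prompt. This is a minimal sketch under stated assumptions: `llm` is any callable mapping a prompt string to a response string, and `run_step_by_step` and `fake_llm` are hypothetical names. The stub stands in for a real model so the example runs offline, and its canned responses are made up.

```python
from typing import Callable


def run_step_by_step(llm: Callable[[str], str], passage: str, questions: str) -> str:
    """Stage 1 elicits reasoning; stage 2 asks for the final answer given that reasoning."""
    reasoning_prompt = (
        "Read the following passage and then answer the questions:\n"
        f"Passage: {passage}\n"
        f"Questions: {questions}\n"
        "Answer: Let us think step by step."
    )
    reasoning = llm(reasoning_prompt)

    extraction_prompt = (
        f"Questions: {questions}\n"
        f"Answer: {reasoning}\n"
        "The final answer (float/int/boolean) is:"
    )
    return llm(extraction_prompt)


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model: returns canned text depending on the stage."""
    if prompt.endswith("is:"):
        return "0.2"
    return "The value rose from 100 to 120, so the change is 20 / 100 = 0.2."


final = run_step_by_step(fake_llm, "text + table", "what was the growth rate?")
print(final)
```

Keeping the model behind a plain callable means the same function works with any client library, and the ZS-STD flow differs only in the last line of the first prompt.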

### Key Observations

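* All three diagrams use two LLM calls: the response to the first prompt is inserted into the second prompt.

* Figure 7 (ZS-FinDSL) performs reasoning extraction followed by program extraction, and is the only diagram whose final output is a structured program rather than a single value.

* Figure 8 (ZS-STD) is the simplest flow: the first prompt asks for an answer with no reasoning instruction, and the second prompt extracts the final answer.

* The third diagram shares its answer extraction stage with ZS-STD, but its first prompt ends with "Let us think step by step." and is titled "Reasoning extraction". This trigger phrase is characteristic of zero-shot chain-of-thought prompting.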

* The legend provides a color-coding scheme for different elements in the diagrams.

### Interpretation

The diagrams illustrate three two-stage prompting pipelines for answering questions over a passage and table. ZS-FinDSL asks the LLM to reason step by step and then converts that reasoning into a structured program whose steps can be inspected and evaluated, while ZS-STD asks for an answer directly and then extracts the final value. The third diagram sits between them: it elicits step-by-step reasoning, as ZS-FinDSL does, but extracts only a final answer, as ZS-STD does. The diagrams report no results, so the effect of these choices on accuracy cannot be read from them. What they do show is that the program output of ZS-FinDSL exposes the intermediate calculations, which the outputs of the other two strategies do not.
