
## Diagram: ZS-FinDSL and ZS-STD Prompts for ConvFinQA

### Overview

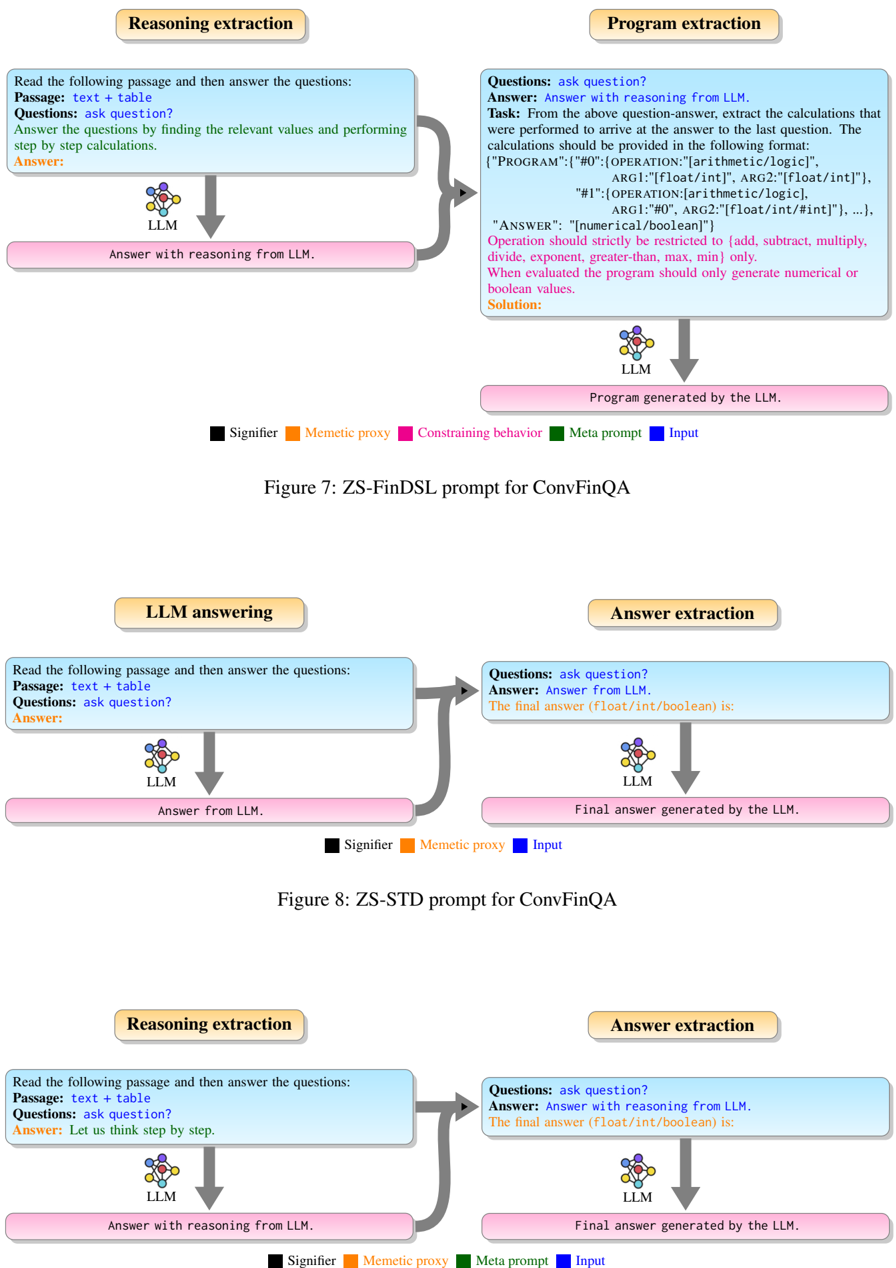

The image contains several diagrams, grouped under two figure captions (Figure 7 and Figure 8), that illustrate zero-shot question answering with Large Language Models (LLMs) on the ConvFinQA dataset. The stages shown are reasoning extraction, program extraction, LLM answering, and answer extraction. All diagrams share one visual structure: boxes represent the LLM, text blocks represent input and output, and colored lines represent prompt components or data flow.

### Components/Axes

Each diagram set contains the following components:

* **Input:** Text blocks labeled "Passage: text + table" and "Questions: ask question?" (some diagrams show only the question).

* **LLM:** A box labeled "LLM".

* **Output:** A text block such as "Answer:".

* **Prompt/Data Flow:** Colored lines connecting the input, LLM, and output. These lines are labeled with terms like "Signifier", "Memetic proxy", "Constraining behavior", "Meta prompt", and "Input".

* **Diagram Titles:** "Figure 7: ZS-FinDSL prompt for ConvFinQA", "Figure 8: ZS-STD prompt for ConvFinQA".

* **Text Blocks within LLM boxes:** These contain descriptions of the LLM's task.

### Detailed Analysis or Content Details

**Diagram 1: Reasoning and Extraction (Figure 7)**

* **Input:** "Passage: text + table", "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Answer:".

* **Prompt/Data Flow:**

    * A blue line labeled "Signifier" connects the input to the LLM.

    * A green line labeled "Memetic proxy" connects the input to the LLM.

    * A purple line labeled "Constraining behavior" connects the input to the LLM.

    * A yellow line labeled "Meta prompt" connects the input to the LLM.

**Diagram 2: Program Extraction (Figure 7)**

* **Input:** "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Program generated by the LLM."

* **Text within LLM box:**

    * "Task: From the above question-answer, extract the calculations that were performed to arrive at the answer to the last question. The calculations should be provided in the following format: '[PROGRAM: {"0": operation(arg1, arg2, logic)}, {"1": operation(arg1, arg2, logic)}]'".

    * "ARG1: [float/int/logic], ARG2: [float/int/logic]".

    * "OPERATION: [arithmetic/logic]".

    * "ARG1: “#”, ARG2: “#”. ARG2: [float/int/#int”], …".

    * "ANSWER: “numerical/boolean”".

    * "Operation should strictly be restricted to [add, subtract, multiply, divide, exponent, greater than, less than, min, max] only."

    * "When evaluated the program should only generate numerical or boolean values."

    * "Solution:"

* **Prompt/Data Flow:**

    * A blue line labeled "Signifier" connects the input to the LLM.

    * A green line labeled "Memetic proxy" connects the input to the LLM.
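The operation whitelist above implies a small program interpreter. The sketch below is a hypothetical evaluator, not the authors' implementation: it assumes a program has already been parsed into a list of `(operation, arg1, arg2)` steps, that an argument written as `"#k"` refers to the result of step k (the FinQA convention, which the `"#"` placeholders in the prompt appear to follow), and that each operation name maps to the Python operator shown. The names `OPERATIONS`, `resolve`, and `evaluate` are illustrative.

```python
import operator
from typing import Callable, Dict, List, Tuple, Union

Value = Union[int, float, bool]
Arg = Union[Value, str]

# Hypothetical mapping from the operation names listed in the prompt to
# Python callables; the exact spellings are assumptions.
OPERATIONS: Dict[str, Callable[[Value, Value], Value]] = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "exponent": operator.pow,
    "greater than": operator.gt,
    "less than": operator.lt,
    "min": min,
    "max": max,
}


def resolve(arg: Arg, results: List[Value]) -> Value:
    """Return a literal as-is, or look up a "#k" reference to step k's result."""
    if isinstance(arg, str):
        if arg.startswith("#"):
            return results[int(arg[1:])]
        raise ValueError(f"unsupported argument: {arg!r}")
    return arg


def evaluate(program: List[Tuple[str, Arg, Arg]]) -> Value:
    """Run the steps in order and return the last step's result as the answer."""
    results: List[Value] = []
    for op_name, arg1, arg2 in program:
        # Enforce the whitelist: any other operation name is rejected.
        if op_name not in OPERATIONS:
            raise ValueError(f"operation not allowed: {op_name!r}")
        value = OPERATIONS[op_name](resolve(arg1, results), resolve(arg2, results))
        results.append(value)
    if not results:
        raise ValueError("empty program")
    return results[-1]
```

For example, `evaluate([("subtract", 120.0, 100.0), ("divide", "#0", 100.0), ("greater than", "#1", 0.1)])` returns `True` (the numbers are made up for illustration). Rejecting unknown operation names up front is what enforces the prompt's "strictly restricted" rule, and the final value is always numerical or boolean.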

**Diagram 3: LLM Answering (Figure 8)**

* **Input:** "Passage: text + table", "Questions: ask question?".

* **LLM:** "Answer from LLM."

* **Output:** "Answer:".

* **Prompt/Data Flow:**

    * A blue line labeled "Signifier" connects the input to the LLM.

**Diagram 4: Answer Extraction (Figure 8)**

* **Input:** "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Final answer (float/int/boolean) is:".

* **Text within LLM box:** "Final answer (float/int/boolean) is:"

* **Prompt/Data Flow:**

    * A blue line labeled "Signifier" connects the input to the LLM.

    * A yellow line labeled "Input" connects the input to the LLM.
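The answer-extraction prompt ends at "Final answer (float/int/boolean) is:", so the LLM's completion still has to be turned into a typed value. Below is a minimal heuristic sketch, assuming booleans appear as yes/no/true/false and numbers may carry thousands separators or a currency sign; `parse_final_answer` is a hypothetical helper, and its rules are not taken from the paper.

```python
import re
from typing import Optional, Union

# Trigger text shown in the figure; the completion is assumed to follow it.
TRIGGER = "Final answer (float/int/boolean) is:"

_NUMBER = re.compile(r"-?\d+(?:\.\d+)?")


def parse_final_answer(completion: str) -> Optional[Union[bool, int, float]]:
    """Heuristically convert a final-answer completion into bool, int, or float.

    Returns None when neither a boolean word nor a number is found.
    """
    # Keep only the text after the trigger, if the trigger is present.
    text = completion.split(TRIGGER)[-1].strip().lower()
    # A leading yes/true/no/false word is treated as a boolean answer.
    first_word = re.match(r"[a-z]+", text)
    if first_word:
        word = first_word.group(0)
        if word in ("yes", "true"):
            return True
        if word in ("no", "false"):
            return False
    # Otherwise take the first number, ignoring thousands separators.
    match = _NUMBER.search(text.replace(",", ""))
    if match is None:
        return None
    token = match.group(0)
    return float(token) if "." in token else int(token)
```

A leading "no" is always read as `False`, so a completion such as "no change" would be misread; a stricter parser would be needed in practice.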

**Diagram 5: Reasoning Extraction (Figure 8)**

* **Input:** "Passage: text + table", "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Answer: Let us think step by step.".

* **Prompt/Data Flow:**

    * A blue line labeled "Signifier" connects the input to the LLM.

    * A green line labeled "Memetic proxy" connects the input to the LLM.

    * A yellow line labeled "Meta prompt" connects the input to the LLM.

**Diagram 6: Answer Extraction (Figure 8)**

* **Input:** "Questions: ask question?".

* **LLM:** "Answer with reasoning from LLM."

* **Output:** "Final answer (float/int/boolean) is:".

* **Prompt/Data Flow:**

    * A blue line labeled "Signifier" connects the input to the LLM.

    * A yellow line is labeled "Final answer generated by the LLM".
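Diagrams 3 to 6 share a two-stage pattern: the first call answers the question from the passage (optionally after the reasoning trigger "Let us think step by step."), and the second call extracts a final answer. The sketch below wires the stages together under stated assumptions: the `llm` callable is a hypothetical stand-in for any text-completion interface, the prompt layout is illustrative, and stage 2 receives only the question and the first-stage answer, mirroring the inputs drawn in the figure.

```python
from typing import Callable

# Hypothetical LLM interface: takes a prompt string, returns a completion.
LLM = Callable[[str], str]

EXTRACTION_TRIGGER = "Final answer (float/int/boolean) is:"


def build_stage1_prompt(passage: str, question: str, reasoning_trigger: str = "") -> str:
    """Stage 1: passage (text + table) and question, ending at "Answer:".

    Pass reasoning_trigger="Let us think step by step." for the reasoning
    variant; leave it empty for plain answering.
    """
    answer_line = f"Answer: {reasoning_trigger}".rstrip()
    return f"Passage: {passage}\nQuestions: {question}\n{answer_line}"


def build_stage2_prompt(question: str, stage1_answer: str) -> str:
    """Stage 2: question plus the stage-1 answer, ending at the extraction trigger."""
    return f"Questions: {question}\nAnswer: {stage1_answer}\n{EXTRACTION_TRIGGER}"


def two_stage_answer(llm: LLM, passage: str, question: str, reasoning_trigger: str = "") -> str:
    """Run both stages and return the raw final-answer completion, stripped."""
    stage1_answer = llm(build_stage1_prompt(passage, question, reasoning_trigger))
    return llm(build_stage2_prompt(question, stage1_answer)).strip()
```

Passing an empty `reasoning_trigger` gives the plain answering variant. The returned string is still the raw completion and needs type conversion before it can be scored.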

### Key Observations

* The diagrams consistently use the same visual structure, highlighting a standardized process.

* The "Program Extraction" diagram contains the most detailed textual information, outlining the specific format and constraints for the LLM's output.

* The prompts labeled "Signifier", "Memetic proxy", "Constraining behavior", and "Meta prompt" appear to guide the LLM's reasoning and output generation.

* The diagrams illustrate a multi-stage process, starting with input, progressing through LLM processing, and culminating in an answer or program output.

### Interpretation

These diagrams compare two prompting strategies for ConvFinQA: ZS-FinDSL (Figure 7) and ZS-STD (Figure 8). They show how the LLM reads a passage of text and tables, produces an answer (with reasoning where prompted), and, in ZS-FinDSL, converts that reasoning into a program in a restricted domain-specific language. The colored lines mark the prompt components used at each stage. The detailed instructions in the "Program Extraction" diagram emphasize structured output: a fixed program format, a whitelist of nine operations, and results limited to numerical or boolean values. Because every diagram uses the same LLM box and input/output blocks, the strategies can be compared stage by stage within one workflow, with the overall aim of improving LLM performance on complex financial question answering. The component labels ("Signifier", "Memetic proxy", "Constraining behavior", "Meta prompt") follow the prompt-programming terminology of Reynolds and McDonell (2021), which names the roles that parts of a prompt play, such as specifying the task, evoking a behavior by proxy, constraining the output, and steering the model's own reasoning.