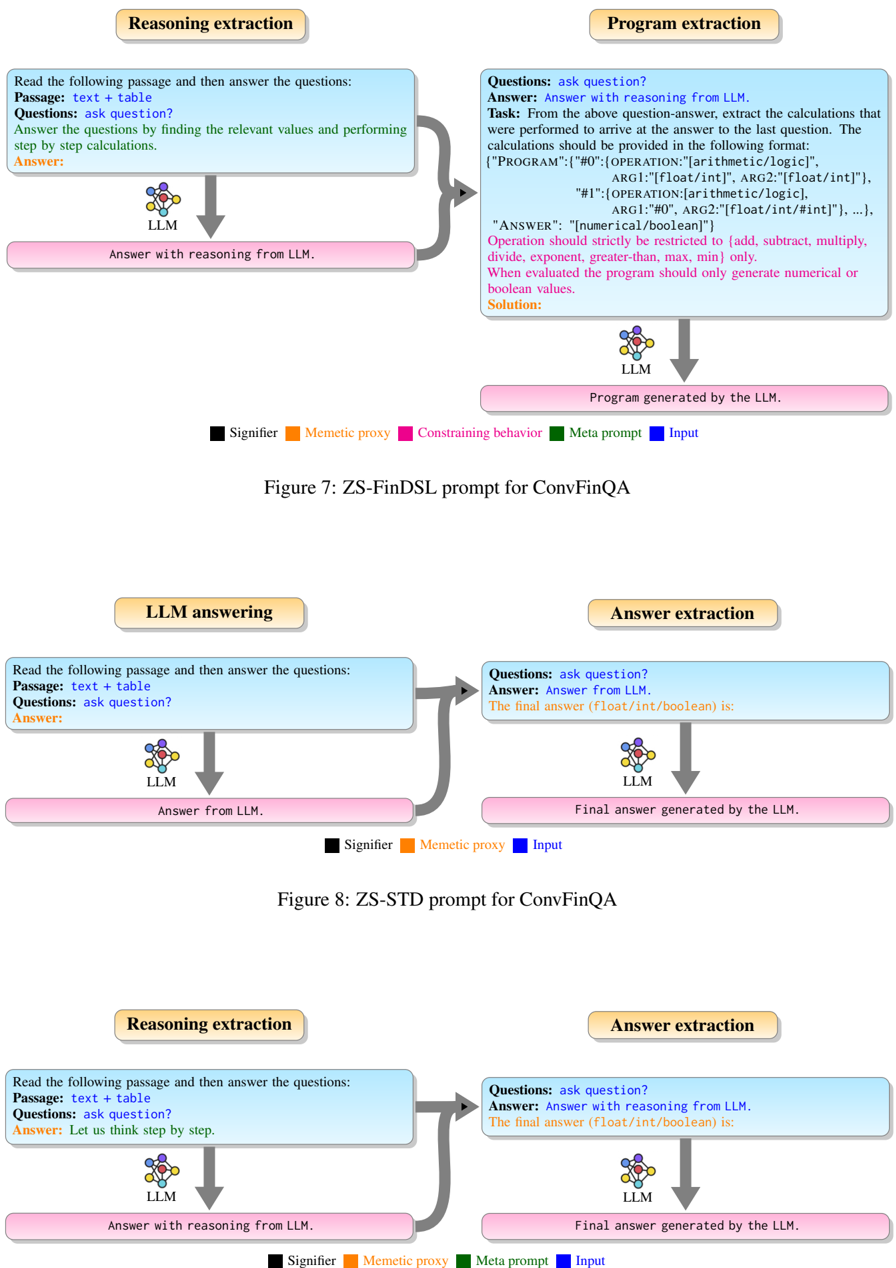

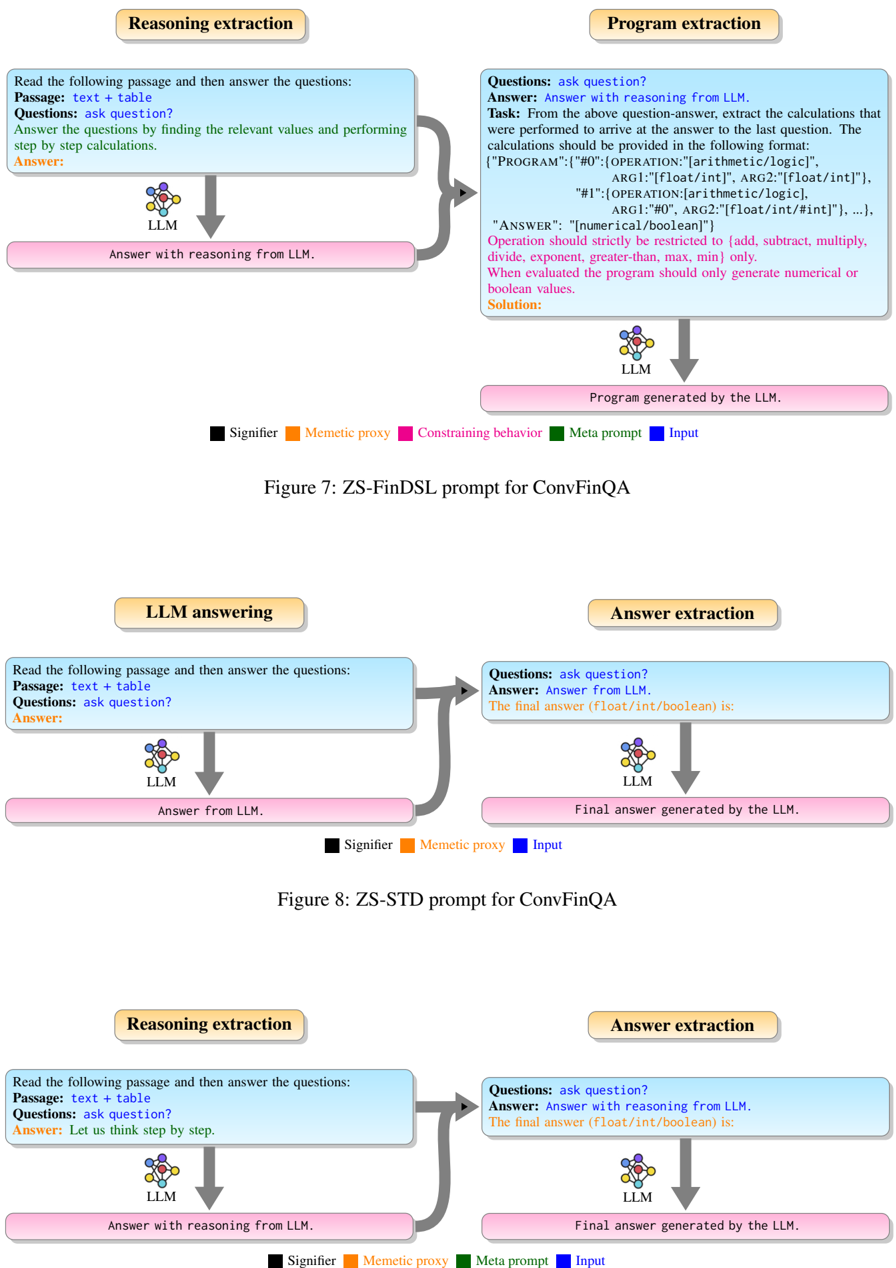

## Diagram Set: LLM Prompting Strategies for ConvFinQA

### Overview

The image displays three separate flow diagrams, each illustrating a different prompting strategy for applying Large Language Models (LLMs) to the ConvFinQA task. Every diagram shows a two-stage process: an initial prompt is sent to an LLM, and the LLM's output is then inserted into a second prompt. Two of the diagrams are labeled Figure 7 and Figure 8; the third is unlabeled. Each diagram carries a color-coded legend that identifies the function of the different text elements in its prompts.

### Components/Axes

The image is composed of three distinct diagrams arranged vertically.

**Common Elements Across Diagrams:**

* **Process Boxes:** Each diagram has two main process boxes (e.g., "Reasoning extraction", "Program extraction") with a beige background and rounded corners.

* **Prompt Boxes:** Within each process box, there is a light blue box containing the prompt text sent to the LLM.

* **LLM Icon:** A stylized brain/chip icon labeled "LLM" represents the language model.

* **Output Boxes:** Pink boxes show the output generated by the LLM.

* **Arrows:** Grey arrows indicate the flow of information from input prompts to the LLM and then to the output.

* **Legends:** Each diagram has a legend at the bottom explaining the color-coding of text within the prompt boxes.

**Legend Key (from the diagrams):**

* **Black Square:** Signifier

* **Orange Square:** Memetic proxy

* **Pink Square:** Constraining behavior (Only in Figure 7)

* **Green Square:** Meta prompt

* **Blue Square:** Input

### Detailed Analysis

#### **Figure 7: ZS-FinDSL prompt for ConvFinQA (Top Diagram)**

* **Left Process - "Reasoning extraction":**

    * **Prompt Text:** "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer the questions by finding the relevant values and performing step by step calculations. Answer:"

    * **LLM Output:** "Answer with reasoning from LLM."

* **Right Process - "Program extraction":**

    * **Prompt Text:** "Questions: ask question? Answer: Answer with reasoning from LLM. Task: From the above question-answer, extract the calculations that were performed to arrive at the answer to the last question. The calculations should be provided in the following format: {"PROGRAM":{"#0":{"OPERATION":["arithmetic/logic"], "ARG1":["float/int"], "ARG2":["float/int"]}, "#1":{"OPERATION":["arithmetic/logic"], "ARG1":"#0", "ARG2":["float/int/#int"]}, ...}, "ANSWER": ["numerical/boolean"]} Operation should strictly be restricted to {add, subtract, multiply, divide, exponent, greater-than, max, min} only. When evaluated the program should only generate numerical or boolean values. Solution:"

    * **LLM Output:** "Program generated by the LLM."

* **Flow:** The reasoning from the first stage is fed as input into the second stage, which instructs the LLM to convert that reasoning into a structured program (FinDSL format).
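The two-stage flow in Figure 7 can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `llm` callable, the function names, and the line breaks inside the prompts are assumptions, while the prompt wording is copied from the diagram.

```python
from typing import Callable, Tuple

# Output format shown in the Figure 7 prompt, kept verbatim.
FORMAT_SPEC = (
    '{"PROGRAM":{"#0":{"OPERATION":["arithmetic/logic"], '
    '"ARG1":["float/int"], "ARG2":["float/int"]}, '
    '"#1":{"OPERATION":["arithmetic/logic"], "ARG1":"#0", '
    '"ARG2":["float/int/#int"]}, ...}, "ANSWER": ["numerical/boolean"]}'
)


def reasoning_prompt(passage: str, questions: str) -> str:
    """Stage 1: ask for an answer with step-by-step calculations."""
    return (
        "Read the following passage and then answer the questions:\n"
        f"Passage: {passage}\n"
        f"Questions: {questions}\n"
        "Answer the questions by finding the relevant values and "
        "performing step by step calculations.\n"
        "Answer:"
    )


def program_prompt(questions: str, reasoning: str) -> str:
    """Stage 2: ask for the calculations as a structured program."""
    return (
        f"Questions: {questions}\n"
        f"Answer: {reasoning}\n"
        "Task: From the above question-answer, extract the calculations "
        "that were performed to arrive at the answer to the last question. "
        "The calculations should be provided in the following format: "
        f"{FORMAT_SPEC} "
        "Operation should strictly be restricted to {add, subtract, "
        "multiply, divide, exponent, greater-than, max, min} only. "
        "When evaluated the program should only generate numerical or "
        "boolean values.\n"
        "Solution:"
    )


def zs_findsl(llm: Callable[[str], str], passage: str, questions: str) -> Tuple[str, str]:
    """Chain the two calls; `llm` is a hypothetical text-in, text-out function."""
    reasoning = llm(reasoning_prompt(passage, questions))
    program = llm(program_prompt(questions, reasoning))
    return reasoning, program
```

Passing the model in as a plain callable keeps the sketch independent of any particular client library; any function that maps a prompt string to a completion string would fit.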

#### **Figure 8: ZS-STD prompt for ConvFinQA (Middle Diagram)**

* **Left Process - "LLM answering":**

    * **Prompt Text:** "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer:"

    * **LLM Output:** "Answer from LLM."

* **Right Process - "Answer extraction":**

    * **Prompt Text:** "Questions: ask question? Answer: Answer from LLM. The final answer (float/int/boolean) is:"

    * **LLM Output:** "Final answer generated by the LLM."

* **Flow:** The initial answer from the LLM is fed into a second prompt that explicitly asks for the final answer in a specific data type (float/int/boolean). This is a simpler, two-step extraction process.
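Because the second prompt asks for a float, int, or boolean, the returned text still has to be converted into a typed value before it can be scored. The diagram does not show that step, so the helper below is only a sketch under stated assumptions: the function name, the yes/no mapping, and the comma handling are all illustrative choices.

```python
import re
from typing import Union


def parse_final_answer(text: str) -> Union[bool, int, float]:
    """Convert the second-stage completion into a bool, int, or float."""
    cleaned = text.strip().lower()
    # Assumption: boolean answers start with yes/no or true/false.
    if re.match(r"^(yes|true)\b", cleaned):
        return True
    if re.match(r"^(no|false)\b", cleaned):
        return False
    # Take the first number, allowing thousands separators and a decimal part.
    match = re.search(r"-?\d[\d,]*(?:\.\d+)?", cleaned)
    if match is None:
        raise ValueError(f"no numeric or boolean answer found in: {text!r}")
    number = match.group().replace(",", "")
    return float(number) if "." in number else int(number)
```

The sketch ignores units and percent signs; a real evaluation would need to decide how to treat them.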

#### **Unlabeled Diagram (Bottom Diagram)**

* **Left Process - "Reasoning extraction":**

    * **Prompt Text:** "Read the following passage and then answer the questions: Passage: text + table Questions: ask question? Answer: Let us think step by step."

    * **LLM Output:** "Answer with reasoning from LLM."

* **Right Process - "Answer extraction":**

    * **Prompt Text:** "Questions: ask question? Answer: Answer with reasoning from LLM. The final answer (float/int/boolean) is:"

    * **LLM Output:** "Final answer generated by the LLM."

* **Flow:** Similar to Figure 8, but the first-stage prompt explicitly includes the chain-of-thought directive "Let us think step by step." The reasoned answer is then passed to the second stage for final answer extraction.
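As the prompt texts show, the bottom diagram and Figure 8 differ only in the trailing directive of the first-stage prompt. A small sketch makes that explicit; the function names, the `use_cot` flag, and the line breaks are assumptions, while the prompt wording follows the diagrams.

```python
COT_TRIGGER = "Let us think step by step."


def first_stage_prompt(passage: str, questions: str, use_cot: bool = False) -> str:
    """Build the first-stage prompt; use_cot appends the step-by-step directive."""
    prompt = (
        "Read the following passage and then answer the questions:\n"
        f"Passage: {passage}\n"
        f"Questions: {questions}\n"
        "Answer:"
    )
    return f"{prompt} {COT_TRIGGER}" if use_cot else prompt


def answer_extraction_prompt(questions: str, first_stage_answer: str) -> str:
    """Build the second-stage prompt, which is identical in both diagrams."""
    return (
        f"Questions: {questions}\n"
        f"Answer: {first_stage_answer}\n"
        "The final answer (float/int/boolean) is:"
    )
```

Calling `first_stage_prompt(passage, questions)` gives the Figure 8 variant, and adding `use_cot=True` gives the bottom-diagram variant.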

### Key Observations

1. **Progression of Complexity:** The diagrams show a progression from a simple answer extraction pipeline (Figure 8) to one that incorporates explicit step-by-step reasoning (Bottom Diagram), and finally to a complex pipeline that converts natural language reasoning into a formal, executable program (Figure 7).

2. **Structured Output:** Figure 7 is unique in requiring a highly structured JSON-like output ("PROGRAM") with defined operations and arguments, moving beyond free-text answers.

3. **Prompt Engineering Techniques:** The diagrams illustrate different prompt engineering techniques:

    * **Zero-Shot (ZS):** Implied by the "ZS" prefix in the titles of Figures 7 and 8.

    * **Chain-of-Thought (CoT):** Explicitly used in the bottom diagram ("Let us think step by step").

    * **Two-Stage Prompting:** All diagrams use a two-stage process where the output of one LLM call becomes the input for the next.

4. **Legend Consistency:** The color-coding for "Signifier" (black), "Memetic proxy" (orange), and "Input" (blue) is consistent across all three legends. Figure 7 includes additional categories ("Constraining behavior" in pink, "Meta prompt" in green) not present in the other two.

### Interpretation

These diagrams represent different methodological approaches to solving complex financial question-answering (ConvFinQA) using LLMs. The core challenge is to move from raw text and tables to precise numerical or boolean answers.

* **Figure 8 (ZS-STD)** represents a baseline approach: ask the model for an answer, then ask it again to format that answer. It relies on the LLM's inherent reasoning but doesn't explicitly guide or verify the process.

* **The Bottom Diagram** introduces a **chain-of-thought** prompt, encouraging the model to externalize its reasoning process before the final extraction. This is a common technique to improve accuracy on multi-step problems.

* **Figure 7 (ZS-FinDSL)** represents the most sophisticated approach. It doesn't just seek an answer or reasoning; it seeks to **extract and formalize the computational logic** behind the reasoning into a domain-specific language (FinDSL). This has significant implications:

    * **Interpretability:** The generated program makes the model's calculation steps explicit and auditable.

    * **Verifiability:** The structured program could potentially be executed or verified by a separate system, reducing reliance on the LLM's internal, opaque computation.

    * **Generalization:** It frames the problem as one of "program synthesis" from natural language, which is a powerful paradigm for handling complex, multi-step tasks.
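To illustrate the verifiability point, a program in the Figure 7 format could be executed outside the LLM with a small interpreter. The sketch below assumes that a concrete program replaces the bracketed type placeholders with plain JSON scalars and uses `"#k"` strings to refer to earlier steps; the diagram does not confirm this, and the example values are made up.

```python
import json
import operator
from typing import Dict, Union

Value = Union[float, bool]

# The eight operations permitted by the Figure 7 prompt.
OPERATIONS = {
    "add": operator.add,
    "subtract": operator.sub,
    "multiply": operator.mul,
    "divide": operator.truediv,
    "exponent": operator.pow,
    "greater-than": operator.gt,
    "max": max,
    "min": min,
}


def run_program(raw: str) -> Value:
    """Evaluate the PROGRAM steps in index order and return the last result."""
    steps = json.loads(raw)["PROGRAM"]
    if not steps:
        raise ValueError("PROGRAM has no steps")
    results: Dict[str, Value] = {}

    def resolve(arg):
        # "#k" refers to the result of an earlier step; anything else is a literal.
        if isinstance(arg, str) and arg.startswith("#"):
            return results[arg]
        return float(arg)

    ordered = sorted(steps, key=lambda name: int(name[1:]))
    for name in ordered:
        step = steps[name]
        operation = OPERATIONS[step["OPERATION"]]
        results[name] = operation(resolve(step["ARG1"]), resolve(step["ARG2"]))
    return results[ordered[-1]]


# Hypothetical program: relative change from 100 to 120.
example = (
    '{"PROGRAM": {"#0": {"OPERATION": "subtract", "ARG1": 120, "ARG2": 100}, '
    '"#1": {"OPERATION": "divide", "ARG1": "#0", "ARG2": 100}}, "ANSWER": 0.2}'
)
print(run_program(example))
```

Comparing the interpreter's result with the `"ANSWER"` field is one way a separate system might flag programs whose stated answer does not follow from their own steps.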

The progression suggests a research direction focused on increasing the reliability, transparency, and formal rigor of LLM outputs for financial analysis, moving from black-box answers to transparent, structured computations. The absence of the "Constraining behavior" and "Meta prompt" elements in the simpler diagrams highlights that the FinDSL approach requires more carefully engineered prompts to guide the model toward the desired structured output format.
