## Diagram: ZS-FinDSL and ZS-STD Prompts for ConvFinQA

### Overview

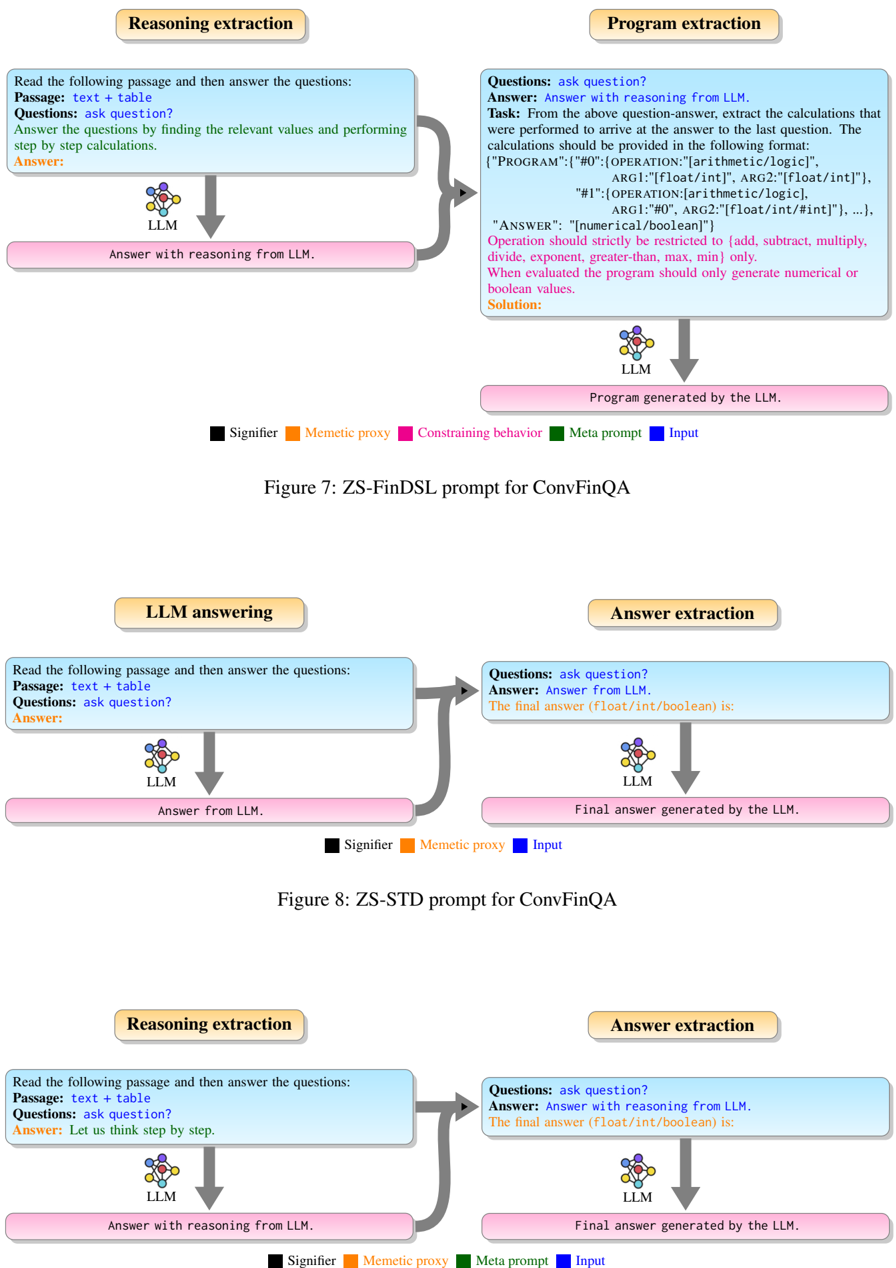

The image contains three diagrams (Figures 7, 8, and 9) illustrating prompting workflows for ConvFinQA. Each diagram shows a two-stage prompt pipeline. Figure 7 pairs **Reasoning extraction** with **Program extraction**, Figure 8 pairs **LLM answering** with **Answer extraction**, and Figure 9 pairs **Reasoning extraction** with **Answer extraction**. Prompt segments are color-coded by role (Signifier, Memetic proxy, Constraining behavior, Meta prompt, Input), and directional arrows indicate process flow.

---

### Components/Axes

#### Figure 7: ZS-FinDSL Prompt

- **Components**:
  - **Reasoning extraction**: "Read the following passage and then answer the questions: Passage: text + table. Questions: ask question? Answer: Answer with reasoning from LLM."
  - **Program extraction**: "From the above question-answer, extract last calculations... Operation should strictly be restricted to [add, subtract, multiply, divide, exponent, greater-than, max, min] only."
  - **LLM**: Arrows connect to "Answer with reasoning from LLM" and "Program generated by the LLM."
- **Legend**:
  - **Signifier** (black), **Memetic proxy** (orange), **Constraining behavior** (pink), **Meta prompt** (green), **Input** (blue).
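The program-extraction prompt quoted above limits the LLM to eight named operations. The sketch below shows one way such a whitelist could be enforced when the generated program is executed. It is not the paper's executor: the step syntax (`op(arg1, arg2)` steps separated by commas, with `#k` referring to the result of step `k`) is borrowed from FinQA-style programs as an assumption, treating `max`/`min` as binary is an assumption, and `run_program`, `ALLOWED_OPS`, and `STEP_RE` are hypothetical names.

```python
import re
from typing import Union

Number = Union[float, bool]

# Operation whitelist quoted in the Figure 7 program-extraction prompt.
# Treating every operation (including max/min) as binary is an assumption.
ALLOWED_OPS = {
    "add": lambda a, b: a + b,
    "subtract": lambda a, b: a - b,
    "multiply": lambda a, b: a * b,
    "divide": lambda a, b: a / b,
    "exponent": lambda a, b: a ** b,
    "greater-than": lambda a, b: a > b,
    "max": max,
    "min": min,
}

# Hypothetical FinQA-style step syntax: op(arg1, arg2), steps separated by commas;
# "#k" refers to the result of step k. The paper's actual output format may differ.
STEP_RE = re.compile(r"\s*([\w-]+)\(([^()]*)\)\s*(?:,|$)")


def _resolve(token: str, results: list) -> Number:
    """Turn an argument into a value: a step reference (#k) or a numeric literal."""
    token = token.strip()
    if token.startswith("#"):
        return results[int(token[1:])]
    return float(token)  # assumes no thousands separators or units


def run_program(program: str) -> Number:
    """Execute a program step by step, rejecting any operation outside ALLOWED_OPS."""
    results: list = []
    pos = 0
    while pos < len(program):
        m = STEP_RE.match(program, pos)
        if m is None:
            raise ValueError(f"malformed program near position {pos}")
        op, arg_text = m.group(1), m.group(2)
        if op not in ALLOWED_OPS:
            raise ValueError(f"operation not allowed: {op}")
        args = arg_text.split(",")
        if len(args) != 2:
            raise ValueError(f"{op} expects exactly two arguments")
        a, b = (_resolve(arg, results) for arg in args)
        results.append(ALLOWED_OPS[op](a, b))
        pos = m.end()
    if not results:
        raise ValueError("empty program")
    return results[-1]
```

With made-up values, `run_program("subtract(5829, 5735), divide(#0, 5735)")` returns 94 / 5735 (about 0.0164), while a program that calls anything outside the whitelist, such as `sqrt`, raises `ValueError` instead of being evaluated.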

#### Figure 8: ZS-STD Prompt

- **Components**:
  - **LLM answering**: "Read the following passage and then answer the questions: Passage: text + table. Questions: ask question? Answer: Answer from LLM."
  - **Answer extraction**: "The final answer (float/int/boolean) is: [answer]."
  - **LLM**: Arrows connect to "Answer from LLM" and "Final answer generated by the LLM."
- **Legend**:
  - **Signifier** (black), **Memetic proxy** (orange), **Input** (blue).
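The answer-extraction prompt ends with "The final answer (float/int/boolean) is:", so the LLM's continuation still has to be coerced into one of those three types before it can be scored. A minimal sketch of that coercion follows; the function name `parse_final_answer` is hypothetical, and the cleanup heuristics (first token only, stripping `$`, `,`, a trailing `.` or `%`, mapping yes/no to booleans) are assumptions rather than anything stated in the figures.

```python
from typing import Optional, Union

Answer = Union[int, float, bool]


def parse_final_answer(continuation: str) -> Optional[Answer]:
    """Coerce the text an LLM writes after 'The final answer (float/int/boolean) is:'.

    Heuristics (assumptions, not from the source): only the first token is used,
    '$' and ',' are removed, a trailing '.' or '%' is dropped (percent scale is
    left to the evaluator), and yes/no map to booleans.
    """
    tokens = continuation.split()
    if not tokens:
        return None
    token = tokens[0].rstrip(".").rstrip("%").replace(",", "").replace("$", "")
    lowered = token.lower()
    if lowered in ("true", "yes"):
        return True
    if lowered in ("false", "no"):
        return False
    # Prefer int, fall back to float; anything else is unparseable.
    for cast in (int, float):
        try:
            return cast(token)
        except ValueError:
            pass
    return None
```

Returning `None` for unparseable text, rather than raising, keeps a batch evaluation running and makes failed extractions easy to count.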

#### Figure 9: ZS-STD Prompt (Alternative)

- **Components**:
  - **Reasoning extraction**: "Read the following passage and then answer the questions: Passage: text + table. Questions: ask question? Answer: Let us think step by step."
  - **Answer extraction**: "The final answer (float/int/boolean) is: [answer]."
  - **LLM**: Arrows connect to "Answer with reasoning from LLM" and "Final answer generated by the LLM."
- **Legend**:
  - **Signifier** (black), **Memetic proxy** (orange), **Meta prompt** (green), **Input** (blue).

---

### Detailed Analysis

#### Figure 7: ZS-FinDSL Prompt

- **Textual Content**:
  - **Reasoning extraction** asks the LLM to answer the question with explicit reasoning.
  - **Program extraction** restricts the program to the listed operations (add, subtract, multiply, divide, exponent, greater-than, max, min); no other functions are permitted.
  - The **LLM** produces two outputs: the answer with reasoning and the program.
- **Flow**:
  - Input (text + table) → Reasoning extraction prompt → LLM → answer with reasoning → Program extraction prompt → LLM → program.
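The Figure 7 flow can be sketched as two calls to the same model, with the first answer fed back into the second prompt. This is an illustration under assumptions, not the authors' code: `zs_findsl` and the `llm` callable are hypothetical, the line breaks in the templates are guesses, and the program-extraction instruction is copied in the abbreviated form given in the figure description (its "..." marks elided wording, which is left unfilled).

```python
from typing import Callable, Tuple

# Stage-1 template built from the prompt text quoted in Figure 7;
# the exact line breaks are an assumption.
REASONING_TEMPLATE = (
    "Read the following passage and then answer the questions:\n"
    "Passage: {passage}\n"
    "Questions: {question}\n"
    "Answer:"
)

# Quoted as abbreviated in the figure description; the "..." marks wording
# that is elided there, so this string is a placeholder, not the real prompt.
PROGRAM_INSTRUCTION = (
    "From the above question-answer, extract last calculations... "
    "Operation should strictly be restricted to "
    "[add, subtract, multiply, divide, exponent, greater-than, max, min] only."
)


def zs_findsl(passage: str, question: str,
              llm: Callable[[str], str]) -> Tuple[str, str]:
    """Two LLM calls: reasoning extraction, then program extraction."""
    # Stage 1: the LLM answers with reasoning.
    stage1 = REASONING_TEMPLATE.format(passage=passage, question=question)
    reasoning = llm(stage1)
    # Stage 2: the stage-1 question-answer is fed back together with the
    # program-extraction instruction, and the LLM returns a program.
    stage2 = f"{stage1} {reasoning}\n{PROGRAM_INSTRUCTION}"
    program = llm(stage2)
    return reasoning, program
```

Passing the model in as a plain `prompt -> completion` callable keeps the sketch independent of any particular LLM client and lets a stub stand in for testing.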

#### Figure 8: ZS-STD Prompt

- **Textual Content**:
  - **LLM answering** asks for a direct answer without explicit reasoning.
  - **Answer extraction** isolates the final numerical or boolean result.
- **Flow**:
  - Input (text + table) → LLM answering prompt → LLM → answer → Answer extraction prompt → LLM → final answer.

#### Figure 9: ZS-STD Prompt (Alternative)

- **Textual Content**:
  - **Reasoning extraction** elicits step-by-step thinking ("Let us think step by step").
  - **Answer extraction** uses the same prompt as Figure 8, but it is applied to an LLM output that already contains step-by-step reasoning.
- **Flow**:
  - Input (text + table) → Reasoning extraction prompt → LLM → answer with reasoning → Answer extraction prompt → LLM → final answer.
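Figures 8 and 9 share the same two-stage shape and differ only in the text that follows "Answer:" in the first prompt, so both can be sketched with one function and an optional trigger. As before, `two_stage_answer`, the `llm` callable, and the exact spacing and line breaks are hypothetical; the function returns the raw continuation, which would still need to be coerced to a float, int, or boolean.

```python
from typing import Callable

QA_TEMPLATE = (
    "Read the following passage and then answer the questions:\n"
    "Passage: {passage}\n"
    "Questions: {question}\n"
    "Answer:{trigger}"
)
EXTRACTION_PROMPT = "The final answer (float/int/boolean) is:"
COT_TRIGGER = " Let us think step by step."  # Figure 9; Figure 8 uses no trigger


def two_stage_answer(passage: str, question: str, llm: Callable[[str], str],
                     trigger: str = "") -> str:
    """Stage 1 elicits an answer (with reasoning if a trigger is given);
    stage 2 appends the answer-extraction prompt and returns the raw continuation."""
    stage1 = QA_TEMPLATE.format(passage=passage, question=question, trigger=trigger)
    answer_text = llm(stage1)
    stage2 = f"{stage1} {answer_text}\n{EXTRACTION_PROMPT}"
    return llm(stage2)
```

Calling it with `trigger=""` mirrors Figure 8, and `trigger=COT_TRIGGER` mirrors Figure 9; keeping the trigger as a parameter makes the single difference between the two prompts explicit.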

---

### Key Observations

1. **Legend Consistency**:
   - **Signifier** (black), **Memetic proxy** (orange), and **Input** (blue) appear in all three figures.
   - **Meta prompt** (green) appears in Figures 7 and 9, the two prompts that elicit reasoning before the final output.
   - **Constraining behavior** (pink) is unique to Figure 7, where it presumably marks the operation restriction in the program-extraction prompt.

2. **Process Differences**:
   - **Figure 7** turns the LLM's reasoning into a program, while **Figures 8 and 9** extract a final answer directly.
   - **Figure 9** adds a step-by-step reasoning trigger ("Let us think step by step") that the other figures lack.

3. **Color-Coding**:
   - **Signifier** (black) likely marks critical elements (e.g., questions, answers).
   - **Memetic proxy** (orange) may denote contextual or secondary information.
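The legend claims above reduce to simple set operations over the three legends. The sketch below encodes the legend entries listed for Figures 7, 8, and 9 (colors omitted) and checks which figures use each role; `LEGENDS`, `figures_using`, and `SHARED_ROLES` are hypothetical names chosen for illustration.

```python
# Legend entries as listed for each figure (colors omitted).
LEGENDS = {
    7: {"Signifier", "Memetic proxy", "Constraining behavior", "Meta prompt", "Input"},
    8: {"Signifier", "Memetic proxy", "Input"},
    9: {"Signifier", "Memetic proxy", "Meta prompt", "Input"},
}


def figures_using(role: str) -> list:
    """Figure numbers whose legend includes the given role."""
    return sorted(fig for fig, roles in LEGENDS.items() if role in roles)


# Roles common to every figure.
SHARED_ROLES = set.intersection(*LEGENDS.values())

for role in ("Meta prompt", "Constraining behavior"):
    print(role, figures_using(role))
# prints:
# Meta prompt [7, 9]
# Constraining behavior [7]
```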

---

### Interpretation

The diagrams illustrate two workflows for ConvFinQA:

1. **ZS-FinDSL (Figure 7)**: Designed for tasks requiring programmatic reasoning (e.g., financial calculations). The LLM first answers with reasoning, then converts that answer into a program whose operations are restricted to a fixed set.

2. **ZS-STD (Figures 8 and 9)**: Focuses on direct answer extraction, with variations in prompting (e.g., "step-by-step" reasoning in Figure 9).

**Notable Trends**:

- **Program extraction** (Figure 7) is the more tightly constrained step: it restricts the program to eight named operations, whereas **answer extraction** (Figures 8/9) only restricts the final answer's type (float, int, or boolean).

- **Meta prompt** (green) appears in Figures 7 and 9, the two workflows that elicit reasoning; in Figure 9 it plausibly corresponds to the "Let us think step by step" trigger.

**Implications**:

- The figures contrast two prompting strategies: structured program generation (ZS-FinDSL) vs. direct answer extraction (ZS-STD).

- Color-coded elements (e.g., **Memetic proxy**) likely distinguish contextual or auxiliary prompt text from the core inputs.

---

**Note**: No numerical data or charts are present; the diagrams focus on process flow and textual instructions.