
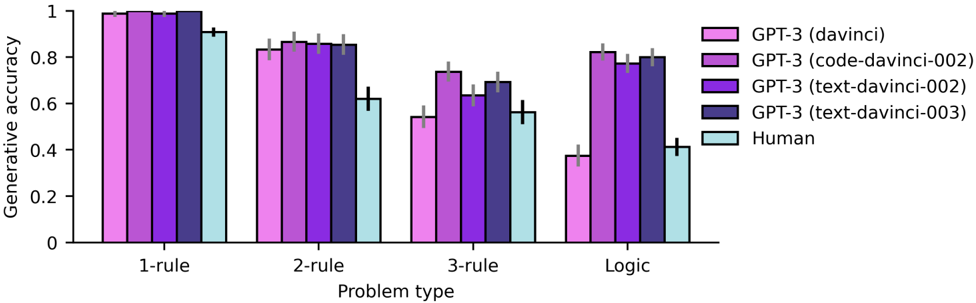

## Bar Chart: Generative Accuracy vs. Problem Type

### Overview

This bar chart compares the generative accuracy of different GPT-3 models (davinci, code-davinci-002, text-davinci-002, text-davinci-003) and humans across four problem types: 1-rule, 2-rule, 3-rule, and Logic. Each bar represents the average generative accuracy for a specific model and problem type, with error bars indicating the variability.

### Components/Axes

* **X-axis:** Problem type (1-rule, 2-rule, 3-rule, Logic).

* **Y-axis:** Generative accuracy (ranging from 0 to 1).

* **Legend:**

  * GPT-3 (davinci): Pink

  * GPT-3 (code-davinci-002): Light Gray

  * GPT-3 (text-davinci-002): Purple

  * GPT-3 (text-davinci-003): Light Blue

  * Human: Black

### Detailed Analysis

The chart consists of four groups of bars, one for each problem type. Each group contains five bars: one for each of the four GPT-3 models and one for humans. Every bar has an error bar on top; the values below give each bar's approximate height and the upper end of its error bar.

**1-rule Problem Type:**

* GPT-3 (davinci): Approximately 0.98, with an error bar extending to approximately 1.02.

* GPT-3 (code-davinci-002): Approximately 0.96, with an error bar extending to approximately 0.99.

* GPT-3 (text-davinci-002): Approximately 0.97, with an error bar extending to approximately 1.00.

* GPT-3 (text-davinci-003): Approximately 0.97, with an error bar extending to approximately 1.00.

* Human: Approximately 0.97, with an error bar extending to approximately 1.00.

**2-rule Problem Type:**

* GPT-3 (davinci): Approximately 0.82, with an error bar extending to approximately 0.86.

* GPT-3 (code-davinci-002): Approximately 0.84, with an error bar extending to approximately 0.87.

* GPT-3 (text-davinci-002): Approximately 0.85, with an error bar extending to approximately 0.88.

* GPT-3 (text-davinci-003): Approximately 0.85, with an error bar extending to approximately 0.88.

* Human: Approximately 0.84, with an error bar extending to approximately 0.87.

**3-rule Problem Type:**

* GPT-3 (davinci): Approximately 0.73, with an error bar extending to approximately 0.76.

* GPT-3 (code-davinci-002): Approximately 0.66, with an error bar extending to approximately 0.69.

* GPT-3 (text-davinci-002): Approximately 0.71, with an error bar extending to approximately 0.74.

* GPT-3 (text-davinci-003): Approximately 0.72, with an error bar extending to approximately 0.75.

* Human: Approximately 0.72, with an error bar extending to approximately 0.75.

**Logic Problem Type:**

* GPT-3 (davinci): Approximately 0.36, with an error bar extending to approximately 0.40.

* GPT-3 (code-davinci-002): Approximately 0.34, with an error bar extending to approximately 0.38.

* GPT-3 (text-davinci-002): Approximately 0.82, with an error bar extending to approximately 0.85.

* GPT-3 (text-davinci-003): Approximately 0.83, with an error bar extending to approximately 0.86.

* Human: Approximately 0.44, with an error bar extending to approximately 0.48.
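The approximate bar heights listed above can be collected into a small lookup table, which makes it easier to compare solvers within a problem type and to check how each one changes across problem types. The following is a minimal sketch: the names `PROBLEM_TYPES`, `MEAN_ACCURACY`, `ranking`, and `is_strictly_decreasing` are illustrative, and the numbers are the approximate readings given in this description, not exact data.

```python
# Approximate bar heights as listed in this description (not exact data).
PROBLEM_TYPES = ["1-rule", "2-rule", "3-rule", "Logic"]

MEAN_ACCURACY = {
    "davinci":          [0.98, 0.82, 0.73, 0.36],
    "code-davinci-002": [0.96, 0.84, 0.66, 0.34],
    "text-davinci-002": [0.97, 0.85, 0.71, 0.82],
    "text-davinci-003": [0.97, 0.85, 0.72, 0.83],
    "Human":            [0.97, 0.84, 0.72, 0.44],
}


def ranking(problem_type):
    """Return solver names sorted from highest to lowest accuracy."""
    i = PROBLEM_TYPES.index(problem_type)
    return sorted(MEAN_ACCURACY, key=lambda name: MEAN_ACCURACY[name][i], reverse=True)


def is_strictly_decreasing(name):
    """True if accuracy falls at every step from 1-rule through Logic."""
    values = MEAN_ACCURACY[name]
    return all(a > b for a, b in zip(values, values[1:]))


if __name__ == "__main__":
    for problem_type in PROBLEM_TYPES:
        print(problem_type, ranking(problem_type))
    for name in MEAN_ACCURACY:
        print(name, "strictly decreasing:", is_strictly_decreasing(name))
```

Because several values differ by only 0.01, rankings within the rule-based groups should be read as approximate.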

### Key Observations

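* Accuracy decreases from 1-rule to 3-rule problems for every model and for humans. On Logic problems, davinci, code-davinci-002, and humans drop further (to approximately 0.34 to 0.44), while text-davinci-002 and text-davinci-003 recover to approximately 0.82 to 0.83.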

* GPT-3 (davinci) declines steadily from approximately 0.98 on 1-rule problems to 0.73 on 3-rule problems, where it has the highest accuracy in its group, and then drops sharply to approximately 0.36 on Logic problems.

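* GPT-3 (code-davinci-002) has the lowest accuracy among the GPT-3 models on 1-rule, 3-rule, and Logic problems. On 2-rule problems it scores approximately 0.84, above davinci (0.82) and just below the text-davinci models (0.85).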

* GPT-3 (text-davinci-002) and GPT-3 (text-davinci-003) exhibit the highest accuracy on the Logic problem type, surpassing human performance.

* Human accuracy declines steadily across 1-rule, 2-rule, and 3-rule problems (approximately 0.97, 0.84, and 0.72) and drops further to approximately 0.44 on Logic problems.

### Interpretation

The data suggest that text-davinci-002 and text-davinci-003 solve Logic problems far more accurately than humans (approximately 0.82 to 0.83 versus 0.44). On the rule-based problems, all four GPT-3 models stay close to human accuracy, mostly within about 0.02; the largest gap is code-davinci-002 on 3-rule problems (0.66 versus 0.72). Accuracy falls for every model and for humans as the number of rules increases, so that decline is not specific to davinci and code-davinci-002. What sets those two models apart is their low accuracy on Logic problems (approximately 0.36 and 0.34), which is below the human value. The error bars extend only about 0.03 to 0.04 above each bar, which suggests consistent results, though the measure of variability they show is not specified. The superior performance of the text-davinci models on Logic problems could be attributed to differences in their training, but the chart itself does not establish the cause. Overall, the chart shows that the GPT-3 models differ little on rule-based problems and diverge sharply on Logic problems.
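One rough way to compare two bars is to check whether their error bars overlap. The sketch below does this for two pairs of values from the chart. It rests on a labeled assumption: only the upper end of each error bar is reported, so the lower end is assumed to lie the same distance below the mean. The function name `error_bars_overlap` is illustrative, and non-overlap is only a visual heuristic, not a statistical test.

```python
def error_bars_overlap(mean_a, upper_a, mean_b, upper_b):
    """Check whether two error bars overlap.

    Assumption (not stated in the chart description): each error bar is
    symmetric, so its lower end is as far below the mean as the reported
    upper end is above it.
    """
    lower_a = mean_a - (upper_a - mean_a)
    lower_b = mean_b - (upper_b - mean_b)
    return lower_a <= upper_b and lower_b <= upper_a


# (mean, upper end of error bar): approximate values from this description.
logic_text_davinci_003 = (0.83, 0.86)
logic_human = (0.44, 0.48)
two_rule_text_davinci_003 = (0.85, 0.88)
two_rule_human = (0.84, 0.87)

if __name__ == "__main__":
    print("Logic, text-davinci-003 vs. Human, overlap:",
          error_bars_overlap(*logic_text_davinci_003, *logic_human))
    print("2-rule, text-davinci-003 vs. Human, overlap:",
          error_bars_overlap(*two_rule_text_davinci_003, *two_rule_human))
```

Under the symmetry assumption, the Logic bars for text-davinci-003 and humans are clearly separated, while the corresponding 2-rule bars overlap, which is consistent with the reading that the models and humans are comparable on rule-based problems.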