TECHNICAL ASSET FINGERPRINT

0ef8e334f040ee425b96d3f0



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

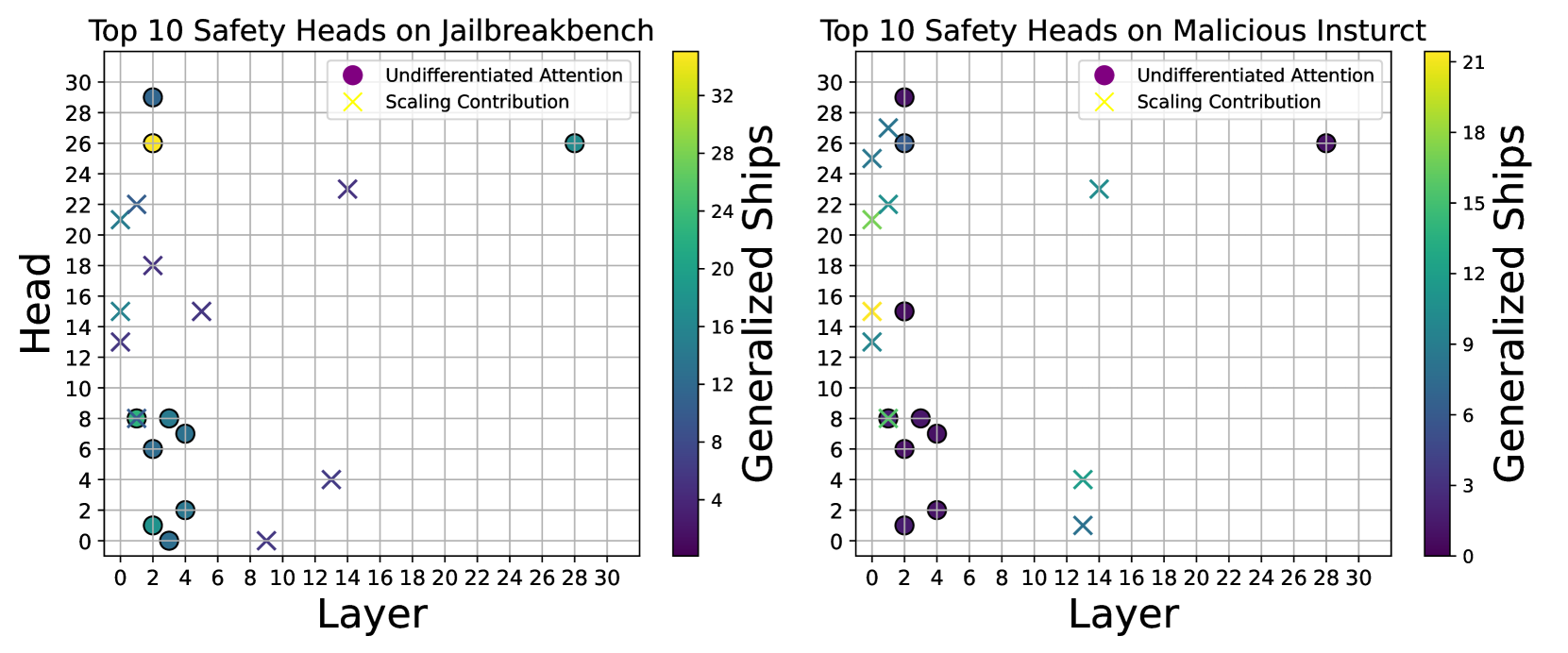

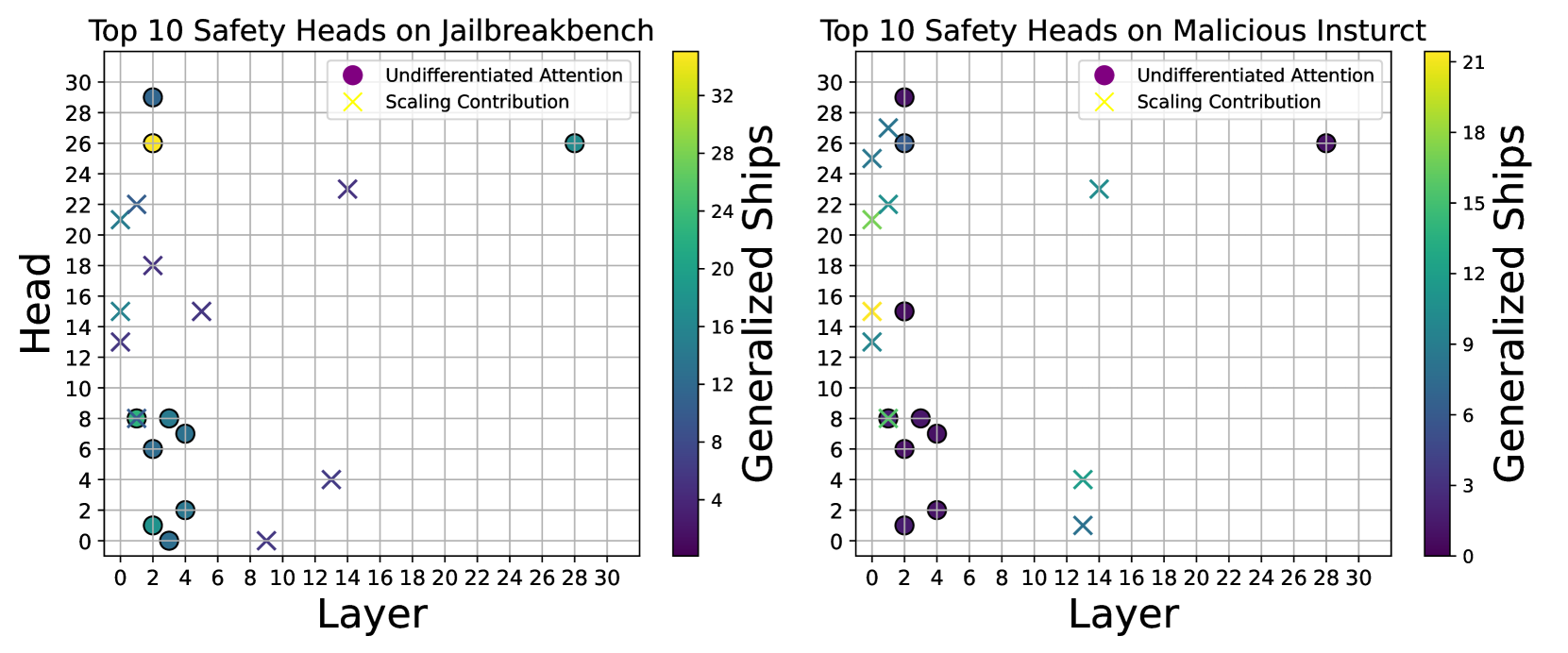

## Scatter Plots: Top 10 Safety Heads on Jailbreakbench and Malicious Instruct

### Overview

The image contains two scatter plots of the top 10 safety heads on "Jailbreakbench" (left) and "Malicious Instruct" (right; spelled "Insturct" in the figure title). Each plot places "Head" on the y-axis and "Layer" on the x-axis, with data points colored by "Generalized Ships" along a color gradient. Two marker types appear: "Undifferentiated Attention" (circles) and "Scaling Contribution" (crosses).

### Components/Axes

**Left Plot (Jailbreakbench):**

* **Title:** Top 10 Safety Heads on Jailbreakbench

* **X-axis:** Layer, ranging from 0 to 30 in increments of 2.

* **Y-axis:** Head, ranging from 0 to 30 in increments of 2.

* **Color Bar (Right Side):** Generalized Ships, ranging from approximately 0 to 32. The color gradient goes from dark purple (0) to yellow (32).

* **Legend (Top-Right):**

    * Purple circle: Undifferentiated Attention

    * Yellow cross: Scaling Contribution

**Right Plot (Malicious Instruct):**

* **Title:** Top 10 Safety Heads on Malicious Insturct

* **X-axis:** Layer, ranging from 0 to 30 in increments of 2.

* **Y-axis:** Head, ranging from 0 to 30 in increments of 2.

* **Color Bar (Right Side):** Generalized Ships, ranging from approximately 0 to 21. The color gradient goes from dark purple (0) to yellow (21).

* **Legend (Top-Right):**

    * Purple circle: Undifferentiated Attention

    * Yellow cross: Scaling Contribution

### Detailed Analysis

**Left Plot (Jailbreakbench):**

* **Undifferentiated Attention (Circles):**

* A cluster of points with Head values between 0 and 8, and Layer values between 0 and 6. These points have Generalized Ships values ranging from approximately 0 to 8 (dark purple to green).

* One point at approximately (2, 2), Generalized Ships ~ 2 (dark green).

* One point at approximately (2, 6), Generalized Ships ~ 6 (green).

* One point at approximately (2, 8), Generalized Ships ~ 8 (green).

* One point at approximately (4, 6), Generalized Ships ~ 6 (green).

* One point at approximately (4, 8), Generalized Ships ~ 8 (green).

* One point at approximately (2, 0), Generalized Ships ~ 0 (dark purple).

* One point at approximately (2, 2), Generalized Ships ~ 2 (dark purple).

* One point at approximately (28, 28), Generalized Ships ~ 28 (yellow).

* **Scaling Contribution (Crosses):**

* One point at approximately (0, 14), Generalized Ships ~ 14 (light blue).

* One point at approximately (0, 16), Generalized Ships ~ 16 (light blue).

* One point at approximately (0, 18), Generalized Ships ~ 18 (light blue).

* One point at approximately (0, 20), Generalized Ships ~ 20 (light blue).

* One point at approximately (0, 12), Generalized Ships ~ 12 (light blue).

* One point at approximately (14, 16), Generalized Ships ~ 16 (light blue).

* One point at approximately (16, 24), Generalized Ships ~ 24 (light blue).

* One point at approximately (10, 4), Generalized Ships ~ 4 (dark purple).

* One point at approximately (8, 0), Generalized Ships ~ 0 (dark purple).

**Right Plot (Malicious Instruct):**

* **Undifferentiated Attention (Circles):**

* A cluster of points with Head values between 6 and 8, and Layer values between 0 and 6. These points have Generalized Ships values ranging from approximately 0 to 6 (dark purple to green).

* One point at approximately (0, 6), Generalized Ships ~ 6 (green).

* One point at approximately (0, 8), Generalized Ships ~ 6 (green).

* One point at approximately (2, 6), Generalized Ships ~ 6 (green).

* One point at approximately (4, 8), Generalized Ships ~ 6 (green).

* One point at approximately (2, 2), Generalized Ships ~ 2 (dark purple).

* One point at approximately (2, 0), Generalized Ships ~ 0 (dark purple).

* One point at approximately (2, 16), Generalized Ships ~ 3 (dark purple).

* One point at approximately (4, 16), Generalized Ships ~ 3 (dark purple).

* One point at approximately (28, 26), Generalized Ships ~ 18 (yellow).

* **Scaling Contribution (Crosses):**

* One point at approximately (0, 14), Generalized Ships ~ 12 (light blue).

* One point at approximately (0, 22), Generalized Ships ~ 15 (light blue).

* One point at approximately (0, 26), Generalized Ships ~ 15 (light blue).

* One point at approximately (0, 28), Generalized Ships ~ 15 (light blue).

* One point at approximately (14, 24), Generalized Ships ~ 15 (light blue).

* One point at approximately (14, 4), Generalized Ships ~ 3 (dark purple).

* One point at approximately (14, 2), Generalized Ships ~ 3 (dark purple).

### Key Observations

* Both plots show a concentration of "Undifferentiated Attention" heads (circles) at lower layer and head values (bottom-left).

* "Scaling Contribution" heads (crosses) are more scattered across the layer and head space.

* The "Generalized Ships" values vary significantly across the data points, indicated by the color gradient.

* The range of "Generalized Ships" differs between the two plots (0-32 for Jailbreakbench, 0-21 for Malicious Instruct).
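Because the two color bars span different ranges, raw "Generalized Ships" readings are not directly comparable across panels. The following is a minimal sketch, assuming plain min-max rescaling is an acceptable way to put both panels on a common 0-1 scale. The helper name `rescale` is hypothetical, and the inputs are the approximate readings listed above for the late-layer circle, not exact data.

```python
def rescale(value, vmin, vmax):
    """Map a color-bar reading onto [0, 1] with min-max scaling."""
    if vmax <= vmin:
        raise ValueError("vmax must be greater than vmin")
    return (value - vmin) / (vmax - vmin)


# Color-bar ranges as read from the two panels (approximate).
JAILBREAKBENCH_RANGE = (0, 32)
MALICIOUS_INSTRUCT_RANGE = (0, 21)

# The late-layer circle is read as ~28 on the left and ~18 on the right.
left = rescale(28, *JAILBREAKBENCH_RANGE)
right = rescale(18, *MALICIOUS_INSTRUCT_RANGE)

print(f"left={left:.3f} right={right:.3f}")
```

On this rescaled view the two readings land close together, which suggests the differing raw ranges may reflect the scale of each benchmark rather than a change in which heads rank highest.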

### Interpretation

The plots visualize the distribution of safety heads in two different scenarios: "Jailbreakbench" and "Malicious Instruct." The concentration of "Undifferentiated Attention" heads at lower layers and head values suggests that these heads might be more relevant in the initial stages of processing or represent more fundamental features. The scattered distribution of "Scaling Contribution" heads indicates that these heads might be involved in more complex or specialized computations across different layers. The "Generalized Ships" values likely represent a measure of the importance or contribution of each head to the overall safety performance. The different ranges of "Generalized Ships" between the two scenarios suggest that the safety heads might have different levels of effectiveness or relevance depending on the specific task or dataset.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Heatmap: Top 10 Safety Heads on Jailbreakbench & Malicious Instruct

### Overview

The image presents two heatmaps side-by-side. Both plots visualize the relationship between "Layer" (x-axis) and "Head" (y-axis) with color representing "Generalized Ships". The left heatmap focuses on "Top 10 Safety Heads on jailbreakbench", while the right heatmap focuses on "Top 10 Safety Heads on Malicious Instruct". Each heatmap displays two data series: "Undifferentiated Attention" (purple circles) and "Scaling Contribution" (light blue crosses). The color scale on each heatmap indicates the value of "Generalized Ships", ranging from approximately 0 to 32 for the jailbreakbench plot and 0 to 21 for the malicious instruct plot.

### Components/Axes

* **X-axis (Both Plots):** "Layer", ranging from 0 to 30.

* **Y-axis (Both Plots):** "Head", ranging from 0 to 30.

* **Color Scale (Both Plots):** "Generalized Ships", with a gradient from dark blue (low values) to dark red (high values).

* **Left Plot Legend (Top-Right):**

    * Purple Circle: "Undifferentiated Attention"

    * Light Blue Cross: "Scaling Contribution"

* **Right Plot Legend (Top-Right):**

    * Purple Circle: "Undifferentiated Attention"

    * Light Blue Cross: "Scaling Contribution"

* **Title (Left Plot):** "Top 10 Safety Heads on jailbreakbench"

* **Title (Right Plot):** "Top 10 Safety Heads on Malicious Instruct"

### Detailed Analysis or Content Details

**Left Plot (jailbreakbench):**

* **Undifferentiated Attention (Purple Circles):**

* At Layer 0, Head ≈ 28, Generalized Ships ≈ 32.

* At Layer 2, Head ≈ 24, Generalized Ships ≈ 28.

* At Layer 4, Head ≈ 16, Generalized Ships ≈ 16.

* At Layer 6, Head ≈ 8, Generalized Ships ≈ 8.

* At Layer 8, Head ≈ 4, Generalized Ships ≈ 4.

* At Layer 10, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 12, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 14, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 16, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 18, Head ≈ 2, Generalized Ships ≈ 2.

* **Scaling Contribution (Light Blue Crosses):**

* At Layer 0, Head ≈ 22, Generalized Ships ≈ 24.

* At Layer 2, Head ≈ 18, Generalized Ships ≈ 18.

* At Layer 4, Head ≈ 14, Generalized Ships ≈ 14.

* At Layer 6, Head ≈ 10, Generalized Ships ≈ 10.

* At Layer 8, Head ≈ 6, Generalized Ships ≈ 6.

* At Layer 10, Head ≈ 4, Generalized Ships ≈ 4.

* At Layer 12, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 14, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 16, Head ≈ 2, Generalized Ships ≈ 2.

* At Layer 18, Head ≈ 2, Generalized Ships ≈ 2.

**Right Plot (Malicious Instruct):**

* **Undifferentiated Attention (Purple Circles):**

* At Layer 0, Head ≈ 28, Generalized Ships ≈ 21.

* At Layer 2, Head ≈ 24, Generalized Ships ≈ 18.

* At Layer 4, Head ≈ 16, Generalized Ships ≈ 15.

* At Layer 6, Head ≈ 8, Generalized Ships ≈ 9.

* At Layer 8, Head ≈ 6, Generalized Ships ≈ 6.

* At Layer 10, Head ≈ 4, Generalized Ships ≈ 3.

* At Layer 12, Head ≈ 4, Generalized Ships ≈ 3.

* At Layer 14, Head ≈ 4, Generalized Ships ≈ 3.

* At Layer 16, Head ≈ 4, Generalized Ships ≈ 3.

* **Scaling Contribution (Light Blue Crosses):**

* At Layer 0, Head ≈ 26, Generalized Ships ≈ 18.

* At Layer 2, Head ≈ 22, Generalized Ships ≈ 15.

* At Layer 4, Head ≈ 18, Generalized Ships ≈ 12.

* At Layer 6, Head ≈ 12, Generalized Ships ≈ 9.

* At Layer 8, Head ≈ 8, Generalized Ships ≈ 6.

* At Layer 10, Head ≈ 6, Generalized Ships ≈ 6.

* At Layer 12, Head ≈ 4, Generalized Ships ≈ 3.

* At Layer 14, Head ≈ 4, Generalized Ships ≈ 3.

* At Layer 16, Head ≈ 4, Generalized Ships ≈ 3.

### Key Observations

* In both plots, both data series (Undifferentiated Attention and Scaling Contribution) generally decrease in "Generalized Ships" as the "Layer" increases.

* The "jailbreakbench" plot exhibits higher "Generalized Ships" values overall compared to the "Malicious Instruct" plot.

* The initial values for both data series are relatively high at lower layers (0-4) and then rapidly decline.

* Beyond Layer 10, both data series converge towards very low "Generalized Ships" values in both plots.

* The "Undifferentiated Attention" series consistently shows slightly higher "Generalized Ships" values than the "Scaling Contribution" series at lower layers in both plots.
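The "decreases with layer" observation can be checked mechanically against the readings listed in this section. The sketch below takes those approximate readings at face value (they are visual estimates, not raw data) and uses two hypothetical helpers, `is_non_increasing` and `first_layer_at_or_below`, to test the trend and locate where the series flattens.

```python
# Approximate (layer, value) readings for the "Undifferentiated Attention"
# series in the left plot, copied from the list in this section.
readings = [(0, 32), (2, 28), (4, 16), (6, 8), (8, 4),
            (10, 2), (12, 2), (14, 2), (16, 2), (18, 2)]


def is_non_increasing(pairs):
    """True if values never rise as the layer index grows."""
    ordered = [value for _, value in sorted(pairs)]
    return all(a >= b for a, b in zip(ordered, ordered[1:]))


def first_layer_at_or_below(pairs, threshold):
    """Return the smallest layer whose value is <= threshold, or None."""
    for layer, value in sorted(pairs):
        if value <= threshold:
            return layer
    return None


print(is_non_increasing(readings))
print(first_layer_at_or_below(readings, 2))
```

A check like this only confirms that the listed numbers are self-consistent with the stated trend; it says nothing about whether the readings match the underlying figure.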

### Interpretation

These heatmaps likely represent the effectiveness of different safety heads ("Undifferentiated Attention" and "Scaling Contribution") at mitigating risks in language models when exposed to jailbreakbench prompts (designed to bypass safety mechanisms) and malicious instructions. The "Layer" likely refers to the depth of the neural network.

The decreasing trend in "Generalized Ships" with increasing "Layer" suggests that the safety heads become less effective as the input propagates deeper into the model. This could be due to the model learning to circumvent the safety mechanisms at higher layers. The higher values observed in the "jailbreakbench" plot indicate that the safety heads are more effective at defending against jailbreak prompts compared to malicious instructions.

The convergence of both data series at higher layers suggests a potential saturation point where the safety heads offer minimal additional protection. The initial higher performance of "Undifferentiated Attention" might indicate that this approach is more robust in the early stages of processing, but its effectiveness diminishes as the input moves through the network.

The data suggests that focusing on improving safety mechanisms at lower layers might be more effective than solely relying on deeper layers. Further investigation is needed to understand why the safety heads become less effective at higher layers and to explore strategies for maintaining their effectiveness throughout the entire network.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot with Color Mapping: Top 10 Safety Heads on Jailbreakbench and Malicious Instruct

### Overview

The image displays two side-by-side scatter plots. Each plot visualizes the "Top 10 Safety Heads" identified on a specific benchmark. The left plot is for "Jailbreakbench," and the right plot is for "Malicious Instruct" (note: the title contains a typo, "Insturct"). Each plot maps individual "Heads" (y-axis) across different "Layers" (x-axis) of a model. Data points are categorized by two metrics ("Undifferentiated Attention" and "Scaling Contribution") and are color-coded by a third metric, "Generalized Ships," with a corresponding color bar.

### Components/Axes

**Common Elements for Both Plots:**

* **X-axis:** Label: "Layer". Scale: Linear, from 0 to 30, with major ticks every 2 units.

* **Y-axis:** Label: "Head". Scale: Linear, from 0 to 30, with major ticks every 2 units.

* **Legend:** Located in the top-right corner of each plot area.

    * Purple Circle (●): "Undifferentiated Attention"

    * Yellow X (✕): "Scaling Contribution"

* **Color Bar:** Located to the right of each plot, labeled "Generalized Ships". It maps point color to a numerical value.

**Left Plot Specifics:**

* **Title:** "Top 10 Safety Heads on Jailbreakbench"

* **Color Bar Scale:** Ranges from approximately 4 (dark purple) to 32 (bright yellow). Ticks at 4, 8, 12, 16, 20, 24, 28, 32.

**Right Plot Specifics:**

* **Title:** "Top 10 Safety Heads on Malicious Insturct"

* **Color Bar Scale:** Ranges from 0 (dark purple) to 21 (bright yellow). Ticks at 0, 3, 6, 9, 12, 15, 18, 21.

### Detailed Analysis

**Left Plot: Jailbreakbench**

* **Data Points (Approximate Layer, Head, Generalized Ships Value, Category):**

* (Layer ~1, Head ~21, Ships ~22, Scaling Contribution - X)

* (Layer ~1, Head ~22, Ships ~24, Scaling Contribution - X)

* (Layer ~1, Head ~13, Ships ~16, Scaling Contribution - X)

* (Layer ~1, Head ~15, Ships ~18, Scaling Contribution - X)

* (Layer ~2, Head ~1, Ships ~8, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~6, Ships ~10, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~8, Ships ~12, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~18, Ships ~20, Scaling Contribution - X)

* (Layer ~3, Head ~0, Ships ~6, Undifferentiated Attention - Circle)

* (Layer ~3, Head ~2, Ships ~10, Undifferentiated Attention - Circle)

* (Layer ~3, Head ~7, Ships ~12, Undifferentiated Attention - Circle)

* (Layer ~3, Head ~8, Ships ~14, Undifferentiated Attention - Circle)

* (Layer ~4, Head ~2, Ships ~10, Undifferentiated Attention - Circle)

* (Layer ~4, Head ~7, Ships ~12, Undifferentiated Attention - Circle)

* (Layer ~5, Head ~15, Ships ~18, Scaling Contribution - X)

* (Layer ~9, Head ~0, Ships ~4, Scaling Contribution - X)

* (Layer ~13, Head ~4, Ships ~8, Scaling Contribution - X)

* (Layer ~13, Head ~23, Ships ~22, Scaling Contribution - X)

* (Layer ~28, Head ~26, Ships ~26, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~26, Ships ~32, Undifferentiated Attention - Circle) *[Highest value on this plot]*

**Right Plot: Malicious Instruct**

* **Data Points (Approximate Layer, Head, Generalized Ships Value, Category):**

* (Layer ~1, Head ~21, Ships ~15, Scaling Contribution - X)

* (Layer ~1, Head ~22, Ships ~16, Scaling Contribution - X)

* (Layer ~1, Head ~13, Ships ~9, Scaling Contribution - X)

* (Layer ~1, Head ~15, Ships ~12, Scaling Contribution - X)

* (Layer ~2, Head ~1, Ships ~3, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~6, Ships ~6, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~8, Ships ~9, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~15, Ships ~12, Undifferentiated Attention - Circle)

* (Layer ~2, Head ~25, Ships ~18, Scaling Contribution - X)

* (Layer ~2, Head ~27, Ships ~21, Scaling Contribution - X)

* (Layer ~3, Head ~0, Ships ~3, Undifferentiated Attention - Circle)

* (Layer ~3, Head ~2, Ships ~6, Undifferentiated Attention - Circle)

* (Layer ~3, Head ~7, Ships ~9, Undifferentiated Attention - Circle)

* (Layer ~3, Head ~8, Ships ~9, Undifferentiated Attention - Circle)

* (Layer ~4, Head ~2, Ships ~6, Undifferentiated Attention - Circle)

* (Layer ~4, Head ~7, Ships ~9, Undifferentiated Attention - Circle)

* (Layer ~13, Head ~1, Ships ~6, Scaling Contribution - X)

* (Layer ~13, Head ~4, Ships ~9, Scaling Contribution - X)

* (Layer ~13, Head ~23, Ships ~15, Scaling Contribution - X)

* (Layer ~28, Head ~26, Ships ~18, Undifferentiated Attention - Circle)

### Key Observations

1. **Spatial Distribution:** In both plots, the majority of identified "Safety Heads" are clustered in the very early layers (Layers 0-5). There is a significant sparse region between layers ~6 and ~12, with only a few isolated points in later layers (e.g., Layer 13, Layer 28).

2. **Category Distribution:** The "Undifferentiated Attention" heads (circles) are predominantly found in the early-layer cluster. The "Scaling Contribution" heads (X's) are more spread out, appearing in the early cluster and the mid-layer (Layer 13). The single late-layer point (Layer 28) is listed above as an "Undifferentiated Attention" circle.

3. **Metric Comparison ("Generalized Ships"):**

    * The color scale for "Jailbreakbench" (4-32) has a higher maximum and wider range than for "Malicious Instruct" (0-21).

    * The single highest "Generalized Ships" value (32) appears in the Jailbreakbench plot at (Layer 2, Head 26).

    * For corresponding head positions (e.g., the early-layer cluster), the "Generalized Ships" values are consistently higher in the Jailbreakbench plot than in the Malicious Instruct plot.

4. **Trend Verification:** There is no simple linear trend (e.g., "ships increase with layer"). Instead, the data shows that high-importance heads (as measured by "Generalized Ships") are not uniformly distributed but are concentrated in specific layers, with the most critical ones appearing very early in the network.
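The early-layer concentration can be quantified from the approximate layer readings listed for the Jailbreakbench plot. This is a minimal sketch: the band boundaries (0-5, 6-12, 13+) follow the observations above, while the function name `layer_band` and the band labels are hypothetical.

```python
from collections import Counter

# Approximate layer of each listed Jailbreakbench point, in list order.
left_layers = [1, 1, 1, 1, 2, 2, 2, 2, 3, 3, 3, 3, 4, 4, 5, 9, 13, 13, 28, 2]


def layer_band(layer):
    """Assign a layer index to one of three coarse bands."""
    if layer <= 5:
        return "early (0-5)"
    if layer <= 12:
        return "sparse (6-12)"
    return "late (13+)"


counts = Counter(layer_band(layer) for layer in left_layers)
early_share = counts["early (0-5)"] / len(left_layers)

for band, n in sorted(counts.items()):
    print(f"{band}: {n}")
print(f"early share: {early_share:.0%}")
```

With these readings, 16 of the 20 listed points fall in layers 0-5, one in the sparse band, and three in later layers.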

### Interpretation

This visualization analyzes which attention heads within a large language model are most important for safety-related behaviors across two different adversarial benchmarks. The "Generalized Ships" metric likely quantifies the contribution or importance of each head.

The key finding is that **safety-relevant information is processed very early in the model's architecture**. The dense cluster of high-importance heads in layers 0-5 suggests that foundational pattern recognition or initial content filtering related to safety occurs at the beginning of the processing pipeline. The presence of important heads in later layers (13, 28) indicates that some safety processing or refinement also happens after the initial processing stages.

The difference in the "Generalized Ships" scale between the two plots suggests that the "Jailbreakbench" task may elicit stronger or more concentrated activation of these safety heads compared to the "Malicious Instruct" task. The consistent spatial pattern across both benchmarks, however, implies a common underlying mechanism or location for safety processing within the model, regardless of the specific adversarial trigger. This has implications for model interpretability and safety alignment, pointing to specific, early layers as critical targets for analysis or intervention.
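One way to put a number on the "consistent spatial pattern" claim is to compare the sets of (layer, head) positions listed for each benchmark. The sketch below uses the approximate coordinates from the Detailed Analysis lists and the Jaccard index as the overlap measure. Choosing Jaccard is an assumption made here for illustration, not something the figure or its source specifies.

```python
# Approximate (layer, head) positions as listed for each plot.
jailbreakbench = {
    (1, 21), (1, 22), (1, 13), (1, 15), (2, 1), (2, 6), (2, 8), (2, 18),
    (3, 0), (3, 2), (3, 7), (3, 8), (4, 2), (4, 7), (5, 15), (9, 0),
    (13, 4), (13, 23), (28, 26), (2, 26),
}
malicious_instruct = {
    (1, 21), (1, 22), (1, 13), (1, 15), (2, 1), (2, 6), (2, 8), (2, 15),
    (2, 25), (2, 27), (3, 0), (3, 2), (3, 7), (3, 8), (4, 2), (4, 7),
    (13, 1), (13, 4), (13, 23), (28, 26),
}

shared = jailbreakbench & malicious_instruct
combined = jailbreakbench | malicious_instruct

# Jaccard index: size of the intersection divided by size of the union.
jaccard = len(shared) / len(combined)

print(f"shared positions: {len(shared)} of {len(combined)}")
print(f"Jaccard overlap: {jaccard:.2f}")
```

On these readings 16 positions appear in both lists, which is in line with the interpretation that the two benchmarks largely surface the same heads.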


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plots: Top 10 Safety Heads on Jailbreakbench and Malicious Instruct

### Overview

The image contains two side-by-side scatter plots comparing safety head metrics across layers for two datasets: "Jailbreakbench" (left) and "Malicious Instruct" (right). Each plot uses color-coded markers (purple circles for "Undifferentiated Attention" and yellow crosses for "Scaling Contribution") and a color scale for "Generalized Ships." The plots reveal spatial distributions of data points, with notable outliers and trends.

---

### Components/Axes

- **Left Plot (Jailbreakbench):**

    - **X-axis (Layer):** 0 to 30 (integer increments).

    - **Y-axis (Head):** 0 to 30 (integer increments).

    - **Legend:** Top-right corner, with purple circles labeled "Undifferentiated Attention" and yellow crosses labeled "Scaling Contribution."

    - **Color Scale:** Right side, labeled "Generalized Ships" (0–32), with a gradient from dark purple (low) to yellow (high).

- **Right Plot (Malicious Instruct):**

    - **X-axis (Layer):** 0 to 30 (integer increments).

    - **Y-axis (Head):** 0 to 28 (integer increments).

    - **Legend:** Top-right corner, same labels as the left plot.

    - **Color Scale:** Right side, labeled "Generalized Ships" (0–21), with a gradient from dark purple (low) to yellow (high).

---

### Detailed Analysis

#### Left Plot (Jailbreakbench)

- **Data Points:**

    - **Highest Head Value:** 29 at Layer 3 (purple circle).

    - **Notable Outlier:** Yellow cross at Layer 3, Head 26 (highest Scaling Contribution).

    - **Trend:** Head values generally decrease as Layer increases, with a cluster of low Head values (0–8) at Layers 0–4.

    - **Color Scale:** The yellow cross at Layer 3 has the highest Generalized Ships (32), while most points cluster in the 8–16 range.

- **Spatial Grounding:**

    - Purple circles (Undifferentiated Attention) dominate the lower-left quadrant (Layers 0–10, Heads 0–10).

    - Yellow crosses (Scaling Contribution) are scattered, with the highest value at Layer 3.

#### Right Plot (Malicious Instruct)

- **Data Points:**

    - **Highest Head Value:** 29 at Layer 2 (purple circle).

    - **Notable Outlier:** Yellow cross at Layer 14, Head 22 (highest Scaling Contribution).

    - **Trend:** Head values decrease with Layer, but with a cluster of low Head values (0–8) at Layers 0–6.

    - **Color Scale:** The yellow cross at Layer 14 has the highest Generalized Ships (21), while most points cluster in the 3–12 range.

- **Spatial Grounding:**

    - Purple circles (Undifferentiated Attention) are concentrated in the lower-left quadrant (Layers 0–6, Heads 0–8).

    - Yellow crosses (Scaling Contribution) are sparse, with the highest value at Layer 14.

---

### Key Observations

1. **Outliers:**

    - Jailbreakbench: A yellow cross at Layer 3 (Head 26) stands out as the highest Scaling Contribution.

    - Malicious Instruct: A yellow cross at Layer 14 (Head 22) is the highest Scaling Contribution.

2. **Trends:**

    - Both plots show a general decline in Head values as Layer increases, but with exceptions (e.g., Layer 3 in Jailbreakbench, Layer 2 in Malicious Instruct).

    - The color scale suggests that higher Generalized Ships correlate with specific layers (e.g., Layer 3 in Jailbreakbench, Layer 14 in Malicious Instruct).

3. **Legend Consistency:**

    - Purple circles (Undifferentiated Attention) and yellow crosses (Scaling Contribution) are consistently mapped across both plots.

---

### Interpretation

- **Data Implications:**

    - The "Undifferentiated Attention" (purple circles) dominates lower layers, suggesting a focus on foundational patterns in early layers.

    - "Scaling Contribution" (yellow crosses) appears in specific layers, indicating targeted optimization for safety in those regions.

    - The color scale (Generalized Ships) highlights layers where safety mechanisms are most generalized, with Jailbreakbench showing higher values (up to 32) compared to Malicious Instruct (up to 21).

- **Anomalies:**

    - The yellow cross at Layer 3 (Jailbreakbench) and Layer 14 (Malicious Instruct) may represent critical layers where safety scaling is prioritized, despite lower Head values in surrounding layers.

- **Broader Context:**

    - The plots likely reflect model architecture design choices, where certain layers are optimized for safety through attention mechanisms or scaling strategies. The disparity in Generalized Ships between datasets suggests differing safety requirements or model configurations.

---

### Final Notes

- **Language:** All text is in English. No non-English content is present.

- **Data Completeness:** All axis labels, legends, and color scales are explicitly described. No data tables or embedded text beyond the legends and axis titles are visible.

- **Uncertainty:** Approximate values are provided based on grid alignment (e.g., Head 29 at Layer 3 in Jailbreakbench). Exact numerical precision cannot be confirmed without raw data.
