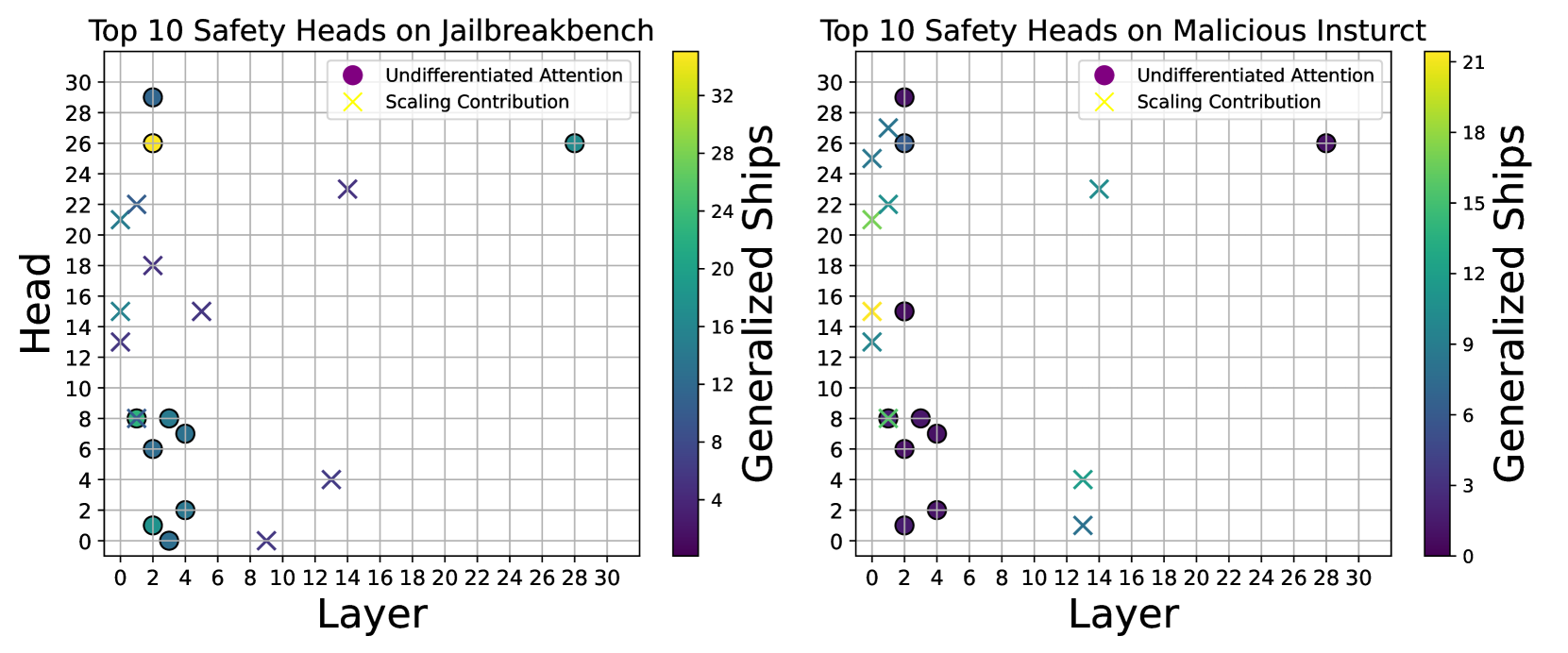

## Heatmap: Top 10 Safety Heads on Jailbreakbench & Malicious Instruct

### Overview

The image presents two heatmaps side by side. In both, the x-axis is "Layer", the y-axis is "Head", and color encodes "Generalized Ships". The left heatmap is titled "Top 10 Safety Heads on jailbreakbench" and the right heatmap "Top 10 Safety Heads on Malicious Instruct". Each heatmap overlays two marker series: "Undifferentiated Attention" (purple circles) and "Scaling Contribution" (light blue crosses). The color scale runs from approximately 0 to 32 in the jailbreakbench plot and from approximately 0 to 21 in the Malicious Instruct plot.

### Components/Axes

* **X-axis (Both Plots):** "Layer", ranging from 0 to 30.

* **Y-axis (Both Plots):** "Head", ranging from 0 to 30.

* **Color Scale (Both Plots):** "Generalized Ships", with a gradient from dark blue (low values) to dark red (high values).

* **Legend (Both Plots, Top-Right):**

  * Purple Circle: "Undifferentiated Attention"

  * Light Blue Cross: "Scaling Contribution"

* **Title (Left Plot):** "Top 10 Safety Heads on jailbreakbench"

* **Title (Right Plot):** "Top 10 Safety Heads on Malicious Instruct"
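Because the two plots use different color ranges (roughly 0 to 32 on the left and 0 to 21 on the right), the same color does not stand for the same "Generalized Ships" value in both. The following is a minimal sketch of that arithmetic, assuming a linear color mapping. The function name `color_position` and the linear mapping are illustrative assumptions, not something the figure states.

```python
def color_position(value: float, vmin: float, vmax: float) -> float:
    """Return where `value` falls on a linear color scale: 0.0 is the low end, 1.0 the high end."""
    if vmax <= vmin:
        raise ValueError("vmax must be greater than vmin")
    # Clamp so that out-of-range values map to the ends of the scale.
    clamped = min(max(value, vmin), vmax)
    return (clamped - vmin) / (vmax - vmin)


# Approximate ranges read from the two color bars (assumed, not exact).
LEFT_RANGE = (0.0, 32.0)   # jailbreakbench
RIGHT_RANGE = (0.0, 21.0)  # Malicious Instruct

left = color_position(21.0, *LEFT_RANGE)
right = color_position(21.0, *RIGHT_RANGE)
print(f"value 21 -> left plot {left:.2f}, right plot {right:.2f}")
# value 21 -> left plot 0.66, right plot 1.00
```

Under this assumption, a value of 21 sits at the very top of the right plot's scale but only about two thirds of the way up the left plot's scale, so cross-plot comparisons are safer on values than on colors.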

### Detailed Analysis

**Left Plot (jailbreakbench):**

* **Undifferentiated Attention (Purple Circles):**

  * At Layer 0, Head ≈ 28, Generalized Ships ≈ 32.

  * At Layer 2, Head ≈ 24, Generalized Ships ≈ 28.

  * At Layer 4, Head ≈ 16, Generalized Ships ≈ 16.

  * At Layer 6, Head ≈ 8, Generalized Ships ≈ 8.

  * At Layer 8, Head ≈ 4, Generalized Ships ≈ 4.

  * At Layer 10, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 12, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 14, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 16, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 18, Head ≈ 2, Generalized Ships ≈ 2.

* **Scaling Contribution (Light Blue Crosses):**

  * At Layer 0, Head ≈ 22, Generalized Ships ≈ 24.

  * At Layer 2, Head ≈ 18, Generalized Ships ≈ 18.

  * At Layer 4, Head ≈ 14, Generalized Ships ≈ 14.

  * At Layer 6, Head ≈ 10, Generalized Ships ≈ 10.

  * At Layer 8, Head ≈ 6, Generalized Ships ≈ 6.

  * At Layer 10, Head ≈ 4, Generalized Ships ≈ 4.

  * At Layer 12, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 14, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 16, Head ≈ 2, Generalized Ships ≈ 2.

  * At Layer 18, Head ≈ 2, Generalized Ships ≈ 2.

**Right Plot (Malicious Instruct):**

* **Undifferentiated Attention (Purple Circles):**

  * At Layer 0, Head ≈ 28, Generalized Ships ≈ 21.

  * At Layer 2, Head ≈ 24, Generalized Ships ≈ 18.

  * At Layer 4, Head ≈ 16, Generalized Ships ≈ 15.

  * At Layer 6, Head ≈ 8, Generalized Ships ≈ 9.

  * At Layer 8, Head ≈ 6, Generalized Ships ≈ 6.

  * At Layer 10, Head ≈ 4, Generalized Ships ≈ 3.

  * At Layer 12, Head ≈ 4, Generalized Ships ≈ 3.

  * At Layer 14, Head ≈ 4, Generalized Ships ≈ 3.

  * At Layer 16, Head ≈ 4, Generalized Ships ≈ 3.

* **Scaling Contribution (Light Blue Crosses):**

  * At Layer 0, Head ≈ 26, Generalized Ships ≈ 18.

  * At Layer 2, Head ≈ 22, Generalized Ships ≈ 15.

  * At Layer 4, Head ≈ 18, Generalized Ships ≈ 12.

  * At Layer 6, Head ≈ 12, Generalized Ships ≈ 9.

  * At Layer 8, Head ≈ 8, Generalized Ships ≈ 6.

  * At Layer 10, Head ≈ 6, Generalized Ships ≈ 6.

  * At Layer 12, Head ≈ 4, Generalized Ships ≈ 3.

  * At Layer 14, Head ≈ 4, Generalized Ships ≈ 3.

  * At Layer 16, Head ≈ 4, Generalized Ships ≈ 3.

### Key Observations

* In both plots, both data series (Undifferentiated Attention and Scaling Contribution) generally decrease in "Generalized Ships" as the "Layer" increases.

* The "jailbreakbench" plot exhibits higher "Generalized Ships" values overall compared to the "Malicious Instruct" plot.

* Values are highest at the lowest layers (0-4) and then decline rapidly. For example, "Undifferentiated Attention" on jailbreakbench falls from about 32 at Layer 0 to about 4 at Layer 8.

* Beyond Layer 10, both data series settle at the same low "Generalized Ships" value in each plot: about 2 on jailbreakbench and about 3 on Malicious Instruct.

* The "Undifferentiated Attention" series shows higher "Generalized Ships" values than the "Scaling Contribution" series at Layers 0-4 in both plots. From Layer 6 to Layer 10 the ordering no longer holds: the "Scaling Contribution" values are equal or slightly higher.
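The observations above can be checked against the approximate readings listed for the left plot. The sketch below transcribes the jailbreakbench values into plain Python lists and tests two of the claims. The variable and function names are illustrative, and the numbers are the approximate readings from this description, not source data.

```python
# Approximate "Generalized Ships" readings for the jailbreakbench plot,
# transcribed from the lists above (Layers 0, 2, ..., 18). Not source data.
LAYERS = list(range(0, 20, 2))
UNDIFFERENTIATED = [32, 28, 16, 8, 4, 2, 2, 2, 2, 2]
SCALING = [24, 18, 14, 10, 6, 4, 2, 2, 2, 2]


def is_non_increasing(values: list[int]) -> bool:
    """True if each value is less than or equal to the one before it."""
    return all(later <= earlier for earlier, later in zip(values, values[1:]))


def layers_where_first_is_higher(first: list[int], second: list[int]) -> list[int]:
    """Layers at which `first` strictly exceeds `second`."""
    return [layer for layer, a, b in zip(LAYERS, first, second) if a > b]


print(is_non_increasing(UNDIFFERENTIATED))  # True
print(is_non_increasing(SCALING))           # True
print(layers_where_first_is_higher(UNDIFFERENTIATED, SCALING))  # [0, 2, 4]
print(layers_where_first_is_higher(SCALING, UNDIFFERENTIATED))  # [6, 8, 10]
```

On these readings, both series never rise with depth, "Undifferentiated Attention" is higher only at Layers 0 to 4, and "Scaling Contribution" is higher at Layers 6 to 10 before both settle at about 2.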

### Interpretation

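These heatmaps likely show which attention heads matter most for safety when a language model is exposed to jailbreakbench prompts (designed to bypass safety mechanisms) and to Malicious Instruct prompts. "Undifferentiated Attention" and "Scaling Contribution" appear to label two ways of selecting or scoring those heads rather than two kinds of head, since the legend treats them as marker series over the same Layer/Head grid. "Layer" likely refers to the depth of the neural network, and "Head" to the index of an attention head within a layer.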

The decreasing trend in "Generalized Ships" with increasing "Layer" suggests that the safety heads become less effective as the input propagates deeper into the model. One possible cause is that the model learns to circumvent the safety mechanisms at higher layers, though the figure alone cannot confirm this. The higher values in the "jailbreakbench" plot may indicate that the safety heads are more effective against jailbreak prompts than against malicious instructions. Because the two plots use different color ranges (about 0 to 32 versus about 0 to 21), this comparison should rest on the values and not on color alone.

The convergence of both data series at higher layers suggests a potential saturation point where the safety heads offer minimal additional protection. The initial higher performance of "Undifferentiated Attention" might indicate that this approach is more robust in the early stages of processing, but its effectiveness diminishes as the input moves through the network.

The data suggests that focusing on improving safety mechanisms at lower layers might be more effective than solely relying on deeper layers. Further investigation is needed to understand why the safety heads become less effective at higher layers and to explore strategies for maintaining their effectiveness throughout the entire network.