# Technical Data Extraction: Model Accuracy vs. Context Length

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A 2D line graph plotted on a Cartesian coordinate system with a light-gray dashed grid.

* **Legend:** Located in the center-right of the plot area.

* **Axes:** Y-axis (left) representing "Accuracy" and X-axis (bottom) representing "Context length".

---

## 2. Axis Labels and Markers

### Y-Axis (Vertical)

* **Label:** Accuracy

* **Scale:** Linear, ranging from 0.0 to 1.0.

* **Major Tick Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

### X-Axis (Horizontal)

* **Label:** Context length

* **Scale:** Linear, ranging from approximately 500 to 5500.

* **Major Tick Markers:** 1000, 2000, 3000, 4000, 5000.

---

## 3. Legend and Data Series Identification

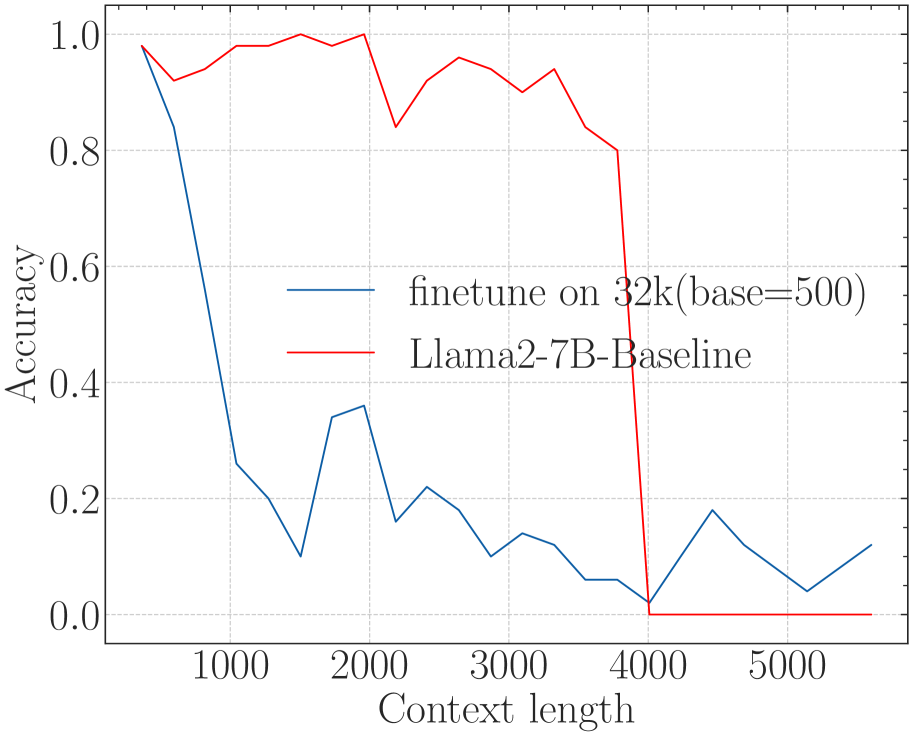

The legend contains two entries, which correspond to the two lines plotted:

1. **Blue Line (Dark Blue/Teal):** `finetune on 32k(base=500)`

2. **Red Line:** `Llama2-7B-Baseline`

---

## 4. Trend Verification and Data Extraction

### Series 1: Llama2-7B-Baseline (Red Line)

* **Visual Trend:** The line starts at near-perfect accuracy (~0.98) at the shortest context length. It maintains high performance (fluctuating between roughly 0.80 and 1.0) until a context length of approximately 3800. Between 3800 and 4000, the line exhibits a catastrophic "cliff-edge" drop to 0.0 and remains at 0.0 for all subsequent context lengths.

* **Key Data Points (Estimated):**

| Context Length | Accuracy |
| :--- | :--- |
| ~500 | 0.98 |
| 1000 | 0.98 |
| 2000 | 1.0 |
| 2200 | 0.84 |
| 3800 | 0.80 |
| 4000 | 0.0 |
| 5000+ | 0.0 |
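
The cliff-edge drop described above can be located programmatically from the estimated points. The following is a minimal sketch, not part of the original analysis: the helper `find_cliff` and its `min_drop` threshold are hypothetical names chosen for illustration, and the "5000+" row is represented by a single point at 5000.

```python
# Estimated (context_length, accuracy) pairs read off the chart for the baseline.
# Assumption: the "5000+" table row is represented by one point at 5000.
baseline = [(500, 0.98), (1000, 0.98), (2000, 1.0), (2200, 0.84),
            (3800, 0.80), (4000, 0.0), (5000, 0.0)]


def find_cliff(points, min_drop=0.5):
    """Return (prev_len, next_len, drop) for the first adjacent pair whose
    accuracy falls by at least min_drop, or None if no such pair exists.

    min_drop is an illustrative threshold, not a value from the chart.
    """
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 - y1 >= min_drop:
            return x0, x1, round(y0 - y1, 2)
    return None


print(find_cliff(baseline))  # (3800, 4000, 0.8)
```

With these estimates, the only adjacent pair that loses at least 0.5 accuracy is 3800 to 4000, which matches the visual reading of the chart. Because the points are sparse, this only brackets where the drop happens and does not pin down the exact context length.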

### Series 2: finetune on 32k(base=500) (Blue Line)

* **Visual Trend:** This line starts high (~0.98) but immediately begins a steep decline as context length increases. By a context length of 1000, accuracy has already dropped to roughly 0.26. It continues to trend downward with high volatility (zig-zagging) between 0.0 and 0.4. Unlike the baseline, it does not hit a hard zero at 4000, but its overall performance is significantly lower than the baseline in the 1000-3800 range.

* **Key Data Points (Estimated):**

| Context Length | Accuracy |
| :--- | :--- |
| ~500 | 0.98 |
| 1000 | 0.26 |
| 1500 | 0.10 |
| 2000 | 0.36 |
| 3000 | 0.10 |
| 4000 | 0.02 |
| 4500 | 0.18 |
| 5500 | 0.12 |
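
To put numbers on the "low and volatile" description, the estimated points can be summarized over a chosen context-length range. This is a rough sketch under the assumption that the table values above are adequate stand-ins for the plotted line. The helper `summarize` is a hypothetical name, and the statistics are only as reliable as the visual estimates.

```python
from statistics import mean

# Estimated (context_length, accuracy) pairs read off the chart for the
# finetuned series; these are visual estimates, not measured values.
finetuned = [(500, 0.98), (1000, 0.26), (1500, 0.10), (2000, 0.36),
             (3000, 0.10), (4000, 0.02), (4500, 0.18), (5500, 0.12)]


def summarize(points, lo, hi):
    """Return (min, max, mean) accuracy for points with lo <= length <= hi."""
    ys = [y for x, y in points if lo <= x <= hi]
    return min(ys), max(ys), round(mean(ys), 3)


# Everything after the initial ~500 point.
print(summarize(finetuned, 1000, 5500))  # (0.02, 0.36, 0.163)
# Only the range at and beyond the baseline's 4000 cliff.
print(summarize(finetuned, 4000, 5500))  # (0.02, 0.18, 0.107)
```

The spread between minimum and maximum is large relative to the mean in both ranges, which is one simple way to express the zig-zag pattern numerically.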

---

## 5. Summary of Findings

The chart compares the performance of a baseline Llama2-7B model against a version finetuned on 32k context with a base of 500.

* **The Baseline (Red)** is highly effective within its native context window (up to ~3800-4000 tokens) but fails completely and immediately once that limit is exceeded.

* **The Finetuned Model (Blue)** shows a significant degradation in accuracy even at relatively short context lengths (starting at 1000). While it technically "survives" past the 4000-token limit where the baseline fails, its accuracy remains very low (generally below 0.2) and unstable across the entire extended range.
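
The comparison in this summary can be checked directly at the context lengths where both series have an estimated point. The sketch below is illustrative only: the dictionaries restate the estimated values from Section 4, the variable names are hypothetical, and the baseline's "5000+" row is left out because the finetuned series has no estimate at exactly 5000.

```python
# Estimated accuracies keyed by context length (visual estimates from the chart).
baseline = {500: 0.98, 1000: 0.98, 2000: 1.0, 2200: 0.84, 3800: 0.80, 4000: 0.0}
finetuned = {500: 0.98, 1000: 0.26, 1500: 0.10, 2000: 0.36,
             3000: 0.10, 4000: 0.02, 4500: 0.18, 5500: 0.12}

# Context lengths present in both series.
shared = sorted(baseline.keys() & finetuned.keys())

# Positive gap: baseline ahead. Negative gap: finetuned ahead.
gaps = {x: round(baseline[x] - finetuned[x], 2) for x in shared}
print(gaps)  # {500: 0.0, 1000: 0.72, 2000: 0.64, 4000: -0.02}
```

On these estimates the two series tie at ~500, the baseline leads by a wide margin at 1000 and 2000, and the finetuned series is only marginally ahead at 4000, where the baseline has already collapsed to 0.0.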