
## Bar Chart: Speaker Selections by Model and Clause Type

### Overview

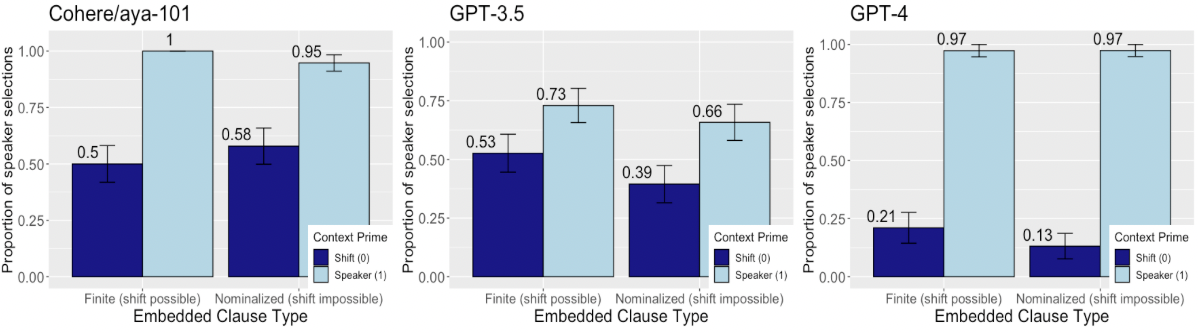

The image presents three bar charts comparing the proportion of speaker selections across different language models (Cohere/aya-101, GPT-3.5, and GPT-4) and two types of embedded clauses: "Finite (shift possible)" and "Nominalized (shift impossible)". Each chart displays two data series: "Context Prime" and "Speaker (1)", represented by different colors. Error bars are included for each data point.

### Components/Axes

* **X-axis:** "Embedded Clause Type" with two categories: "Finite (shift possible)" and "Nominalized (shift impossible)".

* **Y-axis:** "Proportion of speaker selections", ranging from 0.00 to 1.00.

* **Models:** Three separate charts are presented, one for each model: "Cohere/aya-101", "GPT-3.5", and "GPT-4". These titles are positioned above each chart.

* **Legend:** Located in the bottom-right corner of each chart, the legend identifies the two data series:

  * "Context Prime" (light blue)

  * "Speaker (1)" (dark blue)

* **Error Bars:** Vertical lines extending above and below each bar, indicating either the standard error or a confidence interval (the figure does not specify which).
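
Because the figure reports neither the sample size nor the interval type, the following is only a sketch of the two usual options for a proportion: the standard error sqrt(p(1 - p) / n) and a normal-approximation (Wald) 95% interval. The trial count `n = 40` and the helper names are hypothetical, chosen for illustration rather than taken from the figure.

```python
import math


def proportion_se(p: float, n: int) -> float:
    """Standard error of a sample proportion p estimated from n trials."""
    return math.sqrt(p * (1.0 - p) / n)


def wald_ci(p: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Normal-approximation (Wald) confidence interval, clipped to [0, 1]."""
    half_width = z * proportion_se(p, n)
    return max(0.0, p - half_width), min(1.0, p + half_width)


# Hypothetical example: p = 0.50 with an ASSUMED n = 40 trials (the figure does not report n).
p, n = 0.50, 40
print(f"SE = {proportion_se(p, n):.3f}")
lo, hi = wald_ci(p, n)
print(f"95% Wald CI = ({lo:.3f}, {hi:.3f})")
```

Near 0 or 1, as with the bars that touch 1.00, the Wald interval behaves poorly and must be clipped; a Wilson score interval is the usual alternative there.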

### Detailed Analysis

**Chart 1: Cohere/aya-101**

* **Context Prime:**

  * "Finite (shift possible)": Approximately 0.50, with error bars extending from roughly 0.35 to 0.65.

  * "Nominalized (shift impossible)": Approximately 0.95, with error bars extending from roughly 0.85 to 1.00.

* **Speaker (1):**

  * "Finite (shift possible)": Approximately 0.57, with error bars extending from roughly 0.45 to 0.70.

  * "Nominalized (shift impossible)": Approximately 0.58, with error bars extending from roughly 0.45 to 0.70.

**Chart 2: GPT-3.5**

* **Context Prime:**

  * "Finite (shift possible)": Approximately 0.53, with error bars extending from roughly 0.40 to 0.65.

  * "Nominalized (shift impossible)": Approximately 0.73, with error bars extending from roughly 0.60 to 0.85.

* **Speaker (1):**

  * "Finite (shift possible)": Approximately 0.39, with error bars extending from roughly 0.25 to 0.55.

  * "Nominalized (shift impossible)": Approximately 0.66, with error bars extending from roughly 0.50 to 0.80.

**Chart 3: GPT-4**

* **Context Prime:**

  * "Finite (shift possible)": Approximately 0.21, with error bars extending from roughly 0.10 to 0.35.

  * "Nominalized (shift impossible)": Approximately 0.97, with error bars extending from roughly 0.90 to 1.00.

* **Speaker (1):**

  * "Finite (shift possible)": Approximately 0.13, with error bars extending from roughly 0.05 to 0.25.

  * "Nominalized (shift impossible)": Approximately 0.97, with error bars extending from roughly 0.90 to 1.00.
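
The approximate readings above can be collected into a small table and differenced to measure how strongly each model responds to clause type. This is a sketch only: the values are visual estimates from the charts, and `READINGS`, `clause_type_gaps`, and `mean_gap` are hypothetical names introduced for illustration.

```python
# Approximate bar heights read off the three charts (visual estimates, not the underlying data).
# Each tuple is (Finite (shift possible), Nominalized (shift impossible)).
READINGS = {
    "Cohere/aya-101": {"Context Prime": (0.50, 0.95), "Speaker (1)": (0.57, 0.58)},
    "GPT-3.5": {"Context Prime": (0.53, 0.73), "Speaker (1)": (0.39, 0.66)},
    "GPT-4": {"Context Prime": (0.21, 0.97), "Speaker (1)": (0.13, 0.97)},
}


def clause_type_gaps(readings):
    """Hypothetical helper: {model: {series: nominalized - finite}}, rounded to two decimals."""
    return {
        model: {series: round(nom - fin, 2) for series, (fin, nom) in by_series.items()}
        for model, by_series in readings.items()
    }


def mean_gap(gaps_for_model):
    """Hypothetical helper: average gap across the two data series of one model."""
    return round(sum(gaps_for_model.values()) / len(gaps_for_model), 3)


gaps = clause_type_gaps(READINGS)
for model, by_series in gaps.items():
    print(model, by_series, "mean gap:", mean_gap(by_series))
```

With these readings, GPT-4's mean gap (about 0.80) far exceeds those of Cohere/aya-101 (0.23) and GPT-3.5 (0.235). Cohere/aya-101's average hides a large Context Prime gap (0.45) alongside a near-zero Speaker (1) gap (0.01).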

### Key Observations

* **Clause Type Effect:** In almost every panel and series, the "Nominalized (shift impossible)" clause type yields higher proportions of speaker selections than the "Finite (shift possible)" clause type. The one exception is Cohere/aya-101's "Speaker (1)" series, where the two values are essentially equal (about 0.57 and 0.58).

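* **Model Differences:** The models differ substantially in how much clause type matters. GPT-4 shows the largest gap between clause types in both series (about 0.76 for "Context Prime" and 0.84 for "Speaker (1)"). The smallest gaps are Cohere/aya-101's "Speaker (1)" series (about 0.01) and GPT-3.5's "Context Prime" series (about 0.20).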

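* **Finite Clauses:** GPT-4 has the lowest finite-clause proportions in both series (about 0.21 and 0.13). GPT-3.5's "Speaker (1)" value for finite clauses (about 0.39) is lower than Cohere/aya-101's (about 0.57) but well above GPT-4's, so GPT-3.5 is not the outlier here.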

* **Nominalized Clauses:** Proportions approach the ceiling (about 0.95 to 0.97) only for GPT-4 in both series and for Cohere/aya-101's "Context Prime" series. GPT-3.5 reaches about 0.73 and 0.66, and Cohere/aya-101's "Speaker (1)" series stays near 0.58.

### Interpretation

The data suggest that embedded-clause type strongly influences speaker selection in these language models, most clearly for GPT-4. Nominalized clauses, where a shift is impossible, are generally more often associated with speaker selection than finite clauses, where a shift is possible. This is the pattern the clause labels lead one to expect: if a shift is impossible, the unshifted, speaker-referring reading is the only one available, whereas a finite clause also permits a shifted reading.

The differences between the models indicate varying levels of sensitivity to this linguistic feature. GPT-4 appears to be the most sensitive, with the largest gap between clause types in both series. Cohere/aya-101 shows a large gap only in the "Context Prime" series, and GPT-3.5 shows moderate gaps in both. A low speaker-selection rate on finite clauses should not be read as weak performance: because a shift is possible there, a lower rate may simply reflect more shifted readings.

The near-ceiling proportions on nominalized clauses for GPT-4 (and for Cohere/aya-101's "Context Prime" series) might indicate that these models treat the nominalized construction as a strong cue for the speaker reading. GPT-3.5 and Cohere/aya-101's "Speaker (1)" series do not show this pattern. Away from the ceiling, most error bars span roughly 0.25 to 0.30, so smaller differences, such as Cohere/aya-101's near-zero "Speaker (1)" gap, fall well within the estimated uncertainty. Further investigation could explore the impact of context, speaker identity, and other linguistic features on these models' behavior.
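
One rough way to see which clause-type differences stand out from the uncertainty is to check whether the finite and nominalized error bars overlap. The sketch below uses the approximate ranges read off the charts; `ERROR_BARS` and `intervals_overlap` are hypothetical names. Overlap of error bars is only a visual heuristic, not a significance test, especially since the figure does not say whether the bars show standard errors or confidence intervals.

```python
# Approximate error-bar ranges read off the charts (visual estimates).
# Value: ((finite_low, finite_high), (nominalized_low, nominalized_high)).
ERROR_BARS = {
    ("Cohere/aya-101", "Context Prime"): ((0.35, 0.65), (0.85, 1.00)),
    ("Cohere/aya-101", "Speaker (1)"): ((0.45, 0.70), (0.45, 0.70)),
    ("GPT-3.5", "Context Prime"): ((0.40, 0.65), (0.60, 0.85)),
    ("GPT-3.5", "Speaker (1)"): ((0.25, 0.55), (0.50, 0.80)),
    ("GPT-4", "Context Prime"): ((0.10, 0.35), (0.90, 1.00)),
    ("GPT-4", "Speaker (1)"): ((0.05, 0.25), (0.90, 1.00)),
}


def intervals_overlap(a, b):
    """True if closed intervals a = (lo, hi) and b = (lo, hi) share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


for (model, series), (finite, nominalized) in ERROR_BARS.items():
    status = "overlap" if intervals_overlap(finite, nominalized) else "separated"
    print(f"{model:15} {series:14} {status}")
```

With these readings, only Cohere/aya-101's "Context Prime" series and both GPT-4 series show clearly separated bars; GPT-3.5's bars overlap in both series, as do Cohere/aya-101's "Speaker (1)" bars.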