## Heatmap/Attention Matrix: Token Alignment in a Bilingual Context

### Overview

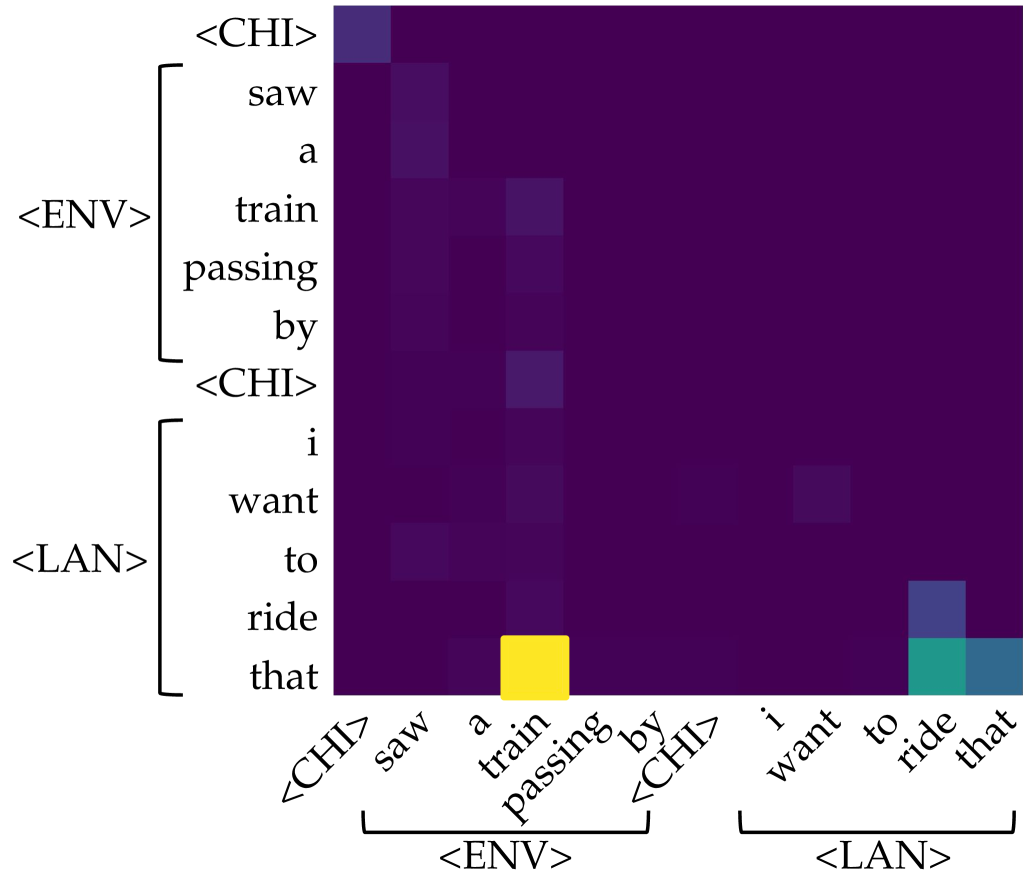

The image is a heatmap, likely an attention matrix or alignment visualization from a neural machine translation or cross-lingual model. The same token sequence labels both the vertical (y) and horizontal (x) axes, and each cell's color encodes the strength of the relationship between its row token and its column token: bright yellow marks the highest value and dark purple the lowest (near zero).

### Components/Axes

* **Chart Type:** Square heatmap/attention matrix.

* **Y-Axis (Rows):** A sequence of tokens, grouped by brackets and labels.

  * Top Group (labeled `<ENV>`): `<CHI>`, `saw`, `a`, `train`, `passing`, `by`, `<CHI>`

  * Bottom Group (labeled `<LAN>`): `i`, `want`, `to`, `ride`, `that`

* **X-Axis (Columns):** The same sequence of tokens, in the same order as on the y-axis, also grouped.

  * Left Group (labeled `<ENV>`): `<CHI>`, `saw`, `a`, `train`, `passing`, `by`, `<CHI>`

  * Right Group (labeled `<LAN>`): `i`, `want`, `to`, `ride`, `that`

* **Color Scale/Legend:** No explicit legend is present. The scale is inferred visually:

  * **Bright Yellow:** Highest value/attention (approx. 1.0 or maximum).

  * **Teal/Green:** Medium-high value.

  * **Blue/Purple:** Low to very low value.

  * **Dark Purple/Black:** Near-zero value.

* **Spatial Grounding:** The most prominent feature is a single bright yellow cell at the intersection of the row labeled `that` (the last token in the `<LAN>` group) and the column labeled `train` (the fourth token in the `<ENV>` group). Because `that` is the bottom row and `train` sits in the left half of the columns, this cell falls in the lower-left quadrant of the matrix.
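The axis layout described above can be mirrored in a small lookup, sketched here in Python. The token lists are transcribed from the figure; the numeric weights are placeholders invented for illustration (the figure has no legend, so no values can be read off it), and `group_of` and `peak_cell` are hypothetical helper names.

```python
# Token sequence as transcribed from both axes of the figure.
ENV = ["<CHI>", "saw", "a", "train", "passing", "by", "<CHI>"]
LAN = ["i", "want", "to", "ride", "that"]
TOKENS = ENV + LAN


def group_of(index):
    """Return the segment label for a position in the 12-token sequence."""
    return "<ENV>" if index < len(ENV) else "<LAN>"


def peak_cell(weights):
    """Return (row, col) of the largest entry in a square list-of-lists."""
    n = len(weights)
    return max(((r, c) for r in range(n) for c in range(n)),
               key=lambda rc: weights[rc[0]][rc[1]])


# Placeholder weights, NOT read from the figure: near-zero everywhere,
# with the bright yellow cell set to an arbitrary high value.
n = len(TOKENS)
weights = [[0.01] * n for _ in range(n)]
weights[TOKENS.index("that")][TOKENS.index("train")] = 0.90

row, col = peak_cell(weights)
print(TOKENS[row], group_of(row), "->", TOKENS[col], group_of(col))
```

Positions are used instead of labels for indexing because `<CHI>` appears twice in the sequence, so a label alone does not identify a unique row or column.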

### Detailed Analysis

**Token Sequence (Transcribed):**

The full sequence, read from top-to-bottom on the y-axis and left-to-right on the x-axis, is:

`<CHI> saw a train passing by <CHI> i want to ride that`

**Language Declaration:** The token `<CHI>` appears twice, bracketing the `<ENV>` text. It may be a special token marking Chinese-language content, but the figure does not define it; it could equally be a speaker tag, since CHAT-format transcripts such as those in CHILDES use `CHI` for the target child. **No actual Chinese characters are visible in the image itself**; the visible text is entirely in English. The token is therefore transcribed directly, and any implied meaning is noted as unconfirmed.

**Heatmap Content & Trends:**

1. **Overall Pattern:** The matrix is overwhelmingly dark purple, indicating very low attention or alignment between most token pairs. The relationships are highly sparse.

2. **Primary Alignment (Strongest Signal):** There is one exceptionally strong alignment (bright yellow) between the English word `that` (row) and the English word `train` (column). This suggests the model is strongly associating the demonstrative pronoun "that" with the noun "train" from the preceding clause.

3. **Secondary Alignments (Weaker Signals):**

   * A medium-intensity (teal/green) cell aligns the row `that` with the column `ride`.

   * A low-intensity (blue) cell aligns the row `ride` with the column `that`.

   * Very faint, low-intensity (dark blue/purple) alignments are visible between other tokens, such as `saw` and `train`, or `want` and `train`, but these are barely distinguishable from the background.

4. **Diagonal:** The main diagonal (where row and column tokens are identical, e.g., `saw`-`saw`, `train`-`train`) does **not** show high values. Because both axes carry the same token sequence, this is atypical for a standard self-attention matrix, where tokens usually place at least some weight on themselves. The plot may instead show a specialized alignment score, for example one between the `<ENV>` and `<LAN>` segments or between two different representations of the same sequence.
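One way to make the diagonal observation checkable is to measure how much of the total weight lies on the main diagonal. The following is a minimal sketch: `diagonal_share` is a hypothetical helper, and both toy matrices are invented for illustration rather than taken from the figure.

```python
def diagonal_share(weights):
    """Fraction of the total weight that lies on the main diagonal."""
    total = sum(sum(row) for row in weights)
    diagonal = sum(weights[i][i] for i in range(len(weights)))
    return diagonal / total if total else 0.0


# Toy 3x3 matrices, invented for illustration only.
# Each token mostly attends to itself:
self_focused = [[0.8, 0.1, 0.1],
                [0.1, 0.8, 0.1],
                [0.1, 0.1, 0.8]]
# No weight on the diagonal, one dominant off-diagonal cell:
off_diagonal = [[0.0, 0.1, 0.1],
                [0.1, 0.0, 0.1],
                [0.9, 0.1, 0.0]]

print(round(diagonal_share(self_focused), 2))
print(diagonal_share(off_diagonal))
```

A share near zero, as in the second matrix, matches the pattern described for this heatmap; what counts as "low" for a real model is a judgment call that this sketch does not settle.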

### Key Observations

* **Extreme Sparsity:** The model's attention or alignment is focused almost exclusively on one specific pair (`that` -> `train`).

* **Asymmetry:** The alignment is not symmetric. The `that`->`train` link is very strong, while the reverse `train`->`that` link is extremely weak (dark purple).

* **Contextual Grouping:** The tokens are explicitly grouped into `<ENV>` (likely "Environment" or context) and `<LAN>` (likely "Language" or target utterance) segments. The strongest link bridges these two groups.

* **Lack of Self-Alignment:** The absence of a strong diagonal suggests this is not a visualization of standard intra-sequence self-attention.
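The sparsity, asymmetry, and grouping observations above can each be expressed as a simple check. The sketch below assumes placeholder weights that only imitate the described pattern (they are not measured from the figure), an arbitrary threshold of 0.05, and hypothetical helper names `sparsity`, `asymmetry`, and `crosses_groups`.

```python
ENV = ["<CHI>", "saw", "a", "train", "passing", "by", "<CHI>"]
LAN = ["i", "want", "to", "ride", "that"]
TOKENS = ENV + LAN
n = len(TOKENS)

# Placeholder weights, NOT measured from the figure: a near-zero background
# plus the three cells the description singles out, with made-up values.
weights = [[0.01] * n for _ in range(n)]
that, train, ride = (TOKENS.index(t) for t in ("that", "train", "ride"))
weights[that][train] = 0.90  # bright yellow
weights[that][ride] = 0.50   # teal/green
weights[ride][that] = 0.20   # blue


def sparsity(weights, threshold=0.05):
    """Fraction of cells whose weight falls below the threshold."""
    cells = [w for row in weights for w in row]
    return sum(w < threshold for w in cells) / len(cells)


def asymmetry(weights, i, j):
    """Difference between the i->j weight and the reverse j->i weight."""
    return weights[i][j] - weights[j][i]


def crosses_groups(i, j, boundary=len(ENV)):
    """True when row i and column j fall in different segments."""
    return (i < boundary) != (j < boundary)


print(sparsity(weights))
print(asymmetry(weights, that, train))
print(crosses_groups(that, train), crosses_groups(that, ride))
```

With these placeholder values, the `that`/`train` pair crosses the `<ENV>`/`<LAN>` boundary while the `that`/`ride` pair stays inside `<LAN>`, which is the distinction the grouping bullet draws.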

### Interpretation

This heatmap likely visualizes the **cross-lingual or cross-modal alignment** learned by a model. The `<ENV>` group (`<CHI> saw a train passing by <CHI>`) appears to be a context or input (possibly a description in Chinese, denoted by `<CHI>` tokens, though the text shown is English). The `<LAN>` group (`i want to ride that`) is the target language output or query.

The heatmap suggests that the model has learned a **highly specific and strong semantic link** between "train" in the environmental context and "that" in the language utterance. This is consistent with the model resolving "that" to the "train" mentioned earlier. The weaker link between "that" and "ride" is also semantically coherent, as one rides a train.

The sparsity suggests the model is very decisive in its alignment, ignoring most other potential word pairs. The lack of self-alignment on the diagonal is consistent with a plot that emphasizes *cross-segment* relationships over intra-sequence dependencies, although the weaker `that`/`ride` links sit within the `<LAN>` segment. The primary takeaway is that the model appears to ground the pronoun "that" in its antecedent "train" across what may be different modalities or languages.