## Bar Chart: Memorization Rate of Language Models

### Overview

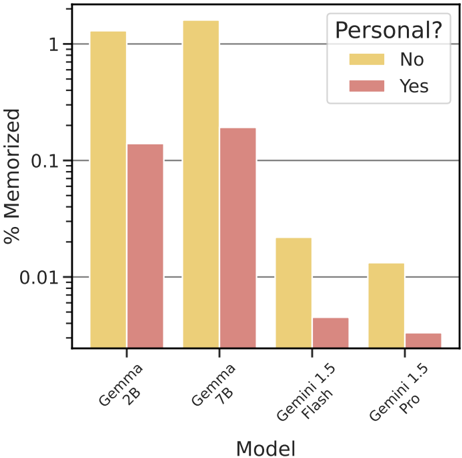

This bar chart compares the percentage of content memorized by four language models (Gemma 2B, Gemma 7B, Gemini 1.5 Flash, and Gemini 1.5 Pro), split into personal and non-personal data. The y-axis shows the percentage memorized on a logarithmic scale, and the x-axis shows the model name. Each model has two bars: one labeled "No" (non-personal data) and one labeled "Yes" (personal data).

### Components/Axes

* **X-axis:** "Model" with categories: Gemma 2B, Gemma 7B, Gemini 1.5 Flash, Gemini 1.5 Pro.

* **Y-axis:** "% Memorized" - Logarithmic scale. Markers are approximately at 0.001, 0.01, 0.1, and 1.

* **Legend:** Located in the top-right corner.

  * "No" - Represented by a yellow color.

  * "Yes" - Represented by a reddish-brown color.

### Detailed Analysis

The chart consists of eight bars, two for each model.

* **Gemma 2B:**

  * "No" (Yellow): Approximately 1.1. The bar extends to just above the '1' marker on the y-axis.

  * "Yes" (Reddish-brown): Approximately 0.12. The bar extends to between the 0.1 and 1 markers.

* **Gemma 7B:**

  * "No" (Yellow): Approximately 1.2. The bar extends slightly above the '1' marker.

  * "Yes" (Reddish-brown): Approximately 0.15. The bar extends between the 0.1 and 1 markers.

* **Gemini 1.5 Flash:**

  * "No" (Yellow): Approximately 0.05. The bar extends to between the 0.01 and 0.1 markers.

  * "Yes" (Reddish-brown): Approximately 0.005. The bar extends to just above the 0.001 marker.

* **Gemini 1.5 Pro:**

  * "No" (Yellow): Approximately 0.02. The bar extends to between the 0.01 and 0.1 markers.

  * "Yes" (Reddish-brown): Approximately 0.002. The bar extends to just above the 0.001 marker.

The "No" bars (yellow) are higher than the "Yes" bars (reddish-brown) for every model, by a factor of roughly 8 to 10 based on the approximate readings above. The Gemma models show memorization rates roughly 20 to 75 times higher than the Gemini models in both categories; in absolute terms, the gap is largest for non-personal data.
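The ratios implied by these readings can be checked with a short script. This is a minimal sketch: the numbers are the approximate bar heights listed above (visual estimates, not exact published values), and the names `estimates` and `no_to_yes_ratio` are illustrative.

```python
# Approximate bar heights (% memorized) as read off the chart.
# These are visual estimates from the description, not exact figures.
estimates = {
    "Gemma 2B": {"No": 1.1, "Yes": 0.12},
    "Gemma 7B": {"No": 1.2, "Yes": 0.15},
    "Gemini 1.5 Flash": {"No": 0.05, "Yes": 0.005},
    "Gemini 1.5 Pro": {"No": 0.02, "Yes": 0.002},
}


def no_to_yes_ratio(values):
    """How many times higher the non-personal rate is than the personal rate."""
    return values["No"] / values["Yes"]


ratios = {model: no_to_yes_ratio(v) for model, v in estimates.items()}

for model, ratio in ratios.items():
    print(f"{model}: non-personal / personal is about {ratio:.1f}x")
```

Because the inputs are read from a logarithmic axis by eye, the resulting ratios should be treated as order-of-magnitude indications only.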

### Key Observations

* Gemma 2B and 7B show memorization rates more than an order of magnitude higher than Gemini 1.5 Flash and Pro, in both data categories.

* Memorization rates are roughly an order of magnitude higher for non-personal data ("No") than for personal data ("Yes") in every model.

* The Gemini models show very low memorization rates: approximately 0.05 or below for non-personal data, and approximately 0.002 to 0.005 for personal data.

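* The y-axis is logarithmic, so equal vertical distances represent equal ratios rather than equal absolute differences. This keeps the small Gemini values readable, but it visually compresses the absolute gap between the Gemma and Gemini bars.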
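To illustrate how a logarithmic axis treats ratios and absolute differences, the sketch below compares two pairs of bars using the approximate readings from the chart. The helper name `decades_apart` is illustrative, and the inputs are visual estimates rather than exact values.

```python
import math


def decades_apart(a, b):
    """Vertical distance between two values on a log10 axis, in decades."""
    return abs(math.log10(a) - math.log10(b))


# Approximate readings (% memorized) taken from the chart description.
gemma_2b_no = 1.1
flash_no = 0.05
flash_yes = 0.005

pairs = [
    ("Gemma 2B 'No' vs Gemini 1.5 Flash 'No'", gemma_2b_no, flash_no),
    ("Gemini 1.5 Flash 'No' vs 'Yes'", flash_no, flash_yes),
]

for label, high, low in pairs:
    print(
        f"{label}: absolute gap = {high - low:.3f}, "
        f"log-axis gap = {decades_apart(high, low):.2f} decades"
    )
```

The two pairs sit a similar distance apart on the log axis (a little over one decade versus one decade), even though their absolute gaps differ by more than a factor of 20.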

### Interpretation

The data suggests that the Gemma models (2B and 7B) are more prone to memorizing training data than the Gemini models (1.5 Flash and Pro). Within every model, the rate for "Yes" (personal) data is roughly a tenth of the rate for "No" (non-personal) data, indicating that these models are less likely to memorize personal information, which is a desirable characteristic for privacy reasons. However, the comparatively high memorization rates of the Gemma models, particularly for non-personal data, could raise concerns about potential data leakage or unintended reproduction of training data.

Because the y-axis is logarithmic, the very low memorization rates of the Gemini models remain readable, but the visual gap between the Gemma and Gemini bars understates the absolute difference between them. The gap between the two model families could be due to differences in model architecture, training data, or regularization techniques employed during training. The chart alone does not distinguish between these explanations, and further investigation would be needed to determine the underlying causes.