## Bar Chart: Model Memorization Rates by Data Type

### Overview

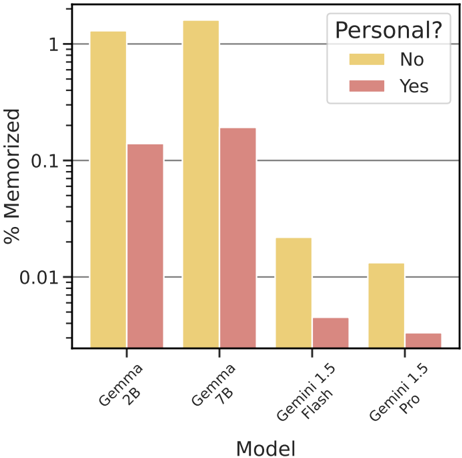

This is a grouped bar chart comparing the percentage of memorization across four different AI models, categorized by whether the memorized data is personal ("Yes") or not ("No"). The chart uses a logarithmic scale on the y-axis to display a wide range of values.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **X-Axis (Horizontal):**

    * **Label:** "Model"

    * **Categories (from left to right):** "Gemma 2B", "Gemma 7B", "Gemini 1.5 Flash", "Gemini 1.5 Pro".

* **Y-Axis (Vertical):**

    * **Label:** "% Memorized"

    * **Scale:** Logarithmic (base 10).

    * **Major Tick Marks:** 0.01, 0.1, 1.

    * **Minor Tick Marks:** Present between major ticks, indicating intermediate values (e.g., 0.02, 0.05, 0.2, 0.5).

* **Legend:**

    * **Title:** "Personal?"

    * **Location:** Top-right corner of the chart area.

    * **Categories:**

        * "No" - Represented by a yellow/gold bar.

        * "Yes" - Represented by a red/salmon bar.
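Because the y-axis is logarithmic, intermediate values are not spaced evenly between major ticks, which matters when estimating bar heights by eye. The following is a minimal sketch of that relationship, assuming a base-10 axis as described above; the helper name `decade_fraction` is illustrative and not part of any plotting library.

```python
import math

def decade_fraction(value: float) -> float:
    """Fraction of the way `value` sits between the two major ticks
    (powers of 10) that bracket it on a base-10 log axis."""
    log_v = math.log10(value)
    return log_v - math.floor(log_v)

# Minor tick values quoted in the axis description above.
for tick in (0.02, 0.05, 0.2, 0.5):
    print(f"{tick}: {decade_fraction(tick):.2f} of the way up its decade")
```

A value of 0.02 sits only about 30% of the way from 0.01 to 0.1, so a bar that looks "a third of the way up" a decade is roughly double the lower tick, not a third of the way to the upper one.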

### Detailed Analysis

The chart displays two bars for each model, representing the "% Memorized" for non-personal ("No", yellow) and personal ("Yes", red) data.

**1. Gemma 2B:**

* **Non-Personal (Yellow):** The bar extends slightly above the 1.0 mark. **Approximate Value:** ~1.1%.

* **Personal (Red):** The bar is between 0.1 and 0.2. **Approximate Value:** ~0.15%.

* **Trend:** Memorization of non-personal data is roughly 7 times the personal rate (~1.1% vs. ~0.15%), just under an order of magnitude.

**2. Gemma 7B:**

* **Non-Personal (Yellow):** The tallest bar in the chart, extending above the 1.0 mark. **Approximate Value:** ~1.3%.

* **Personal (Red):** The bar lies in the 0.1 to 0.2 range, near its upper end, and is slightly taller than the corresponding bar for Gemma 2B. **Approximate Value:** ~0.2%.

* **Trend:** Similar to Gemma 2B, non-personal memorization is much higher. This model shows the highest overall memorization rates in the chart.

**3. Gemini 1.5 Flash:**

* **Non-Personal (Yellow):** The bar is between 0.01 and 0.1. **Approximate Value:** ~0.025%.

* **Personal (Red):** The bar is below the 0.01 mark. **Approximate Value:** ~0.004%.

* **Trend:** Both memorization rates are substantially lower than the Gemma models. The non-personal rate is still notably higher than the personal rate.

**4. Gemini 1.5 Pro:**

* **Non-Personal (Yellow):** The bar is just above the 0.01 mark. **Approximate Value:** ~0.012%.

* **Personal (Red):** The bar is the shortest in the chart, well below 0.01. **Approximate Value:** ~0.002%.

* **Trend:** This model exhibits the lowest memorization rates overall. The pattern of higher non-personal memorization persists.
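The per-model comparisons above can be made concrete with a short calculation. This is a sketch only: the numbers are the approximate bar heights read off the chart in this section, not published measurements, and the names `memorized_pct` and `non_personal_to_personal_ratio` are illustrative.

```python
# Approximate bar heights in percent, eyeballed from the chart (not exact data).
memorized_pct = {
    "Gemma 2B": {"No": 1.1, "Yes": 0.15},
    "Gemma 7B": {"No": 1.3, "Yes": 0.2},
    "Gemini 1.5 Flash": {"No": 0.025, "Yes": 0.004},
    "Gemini 1.5 Pro": {"No": 0.012, "Yes": 0.002},
}

def non_personal_to_personal_ratio(rates: dict) -> float:
    """How many times higher the non-personal rate is than the personal rate."""
    return rates["No"] / rates["Yes"]

ratios = {
    model: non_personal_to_personal_ratio(rates)
    for model, rates in memorized_pct.items()
}

for model, ratio in ratios.items():
    print(f"{model}: non-personal is about {ratio:.1f}x personal")
```

With these estimates, every model lands between about 6x and 7.3x. Since each input is a visual estimate from a log-scaled axis, small differences between the ratios should not be over-interpreted.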

### Key Observations

1. **Consistent Pattern:** For all four models, the percentage memorized is several times higher for non-personal data ("No", yellow bars) than for personal data ("Yes", red bars).

2. **Model Comparison:** The Gemma models (2B and 7B) demonstrate much higher memorization rates (on the order of 0.1% to >1%) compared to the Gemini models (Flash and Pro), which are in the range of 0.002% to 0.025%.

3. **Scale Impact:** The logarithmic y-axis is essential for visualizing the data, as the values span nearly three orders of magnitude (from ~0.002% to ~1.3%, a factor of about 650).

4. **Relative Difference:** The *ratio* between non-personal and personal memorization appears roughly similar across models: based on the approximate values above, non-personal rates are about 6 to 7 times higher than personal rates.
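The size of the overall range, and of the gap between the two model families, can be expressed in orders of magnitude with a base-10 logarithm. The sketch below uses the approximate chart readings from the analysis above; `orders_of_magnitude` is a hypothetical helper name.

```python
import math

def orders_of_magnitude(larger: float, smaller: float) -> float:
    """Base-10 log of the ratio between two positive values."""
    return math.log10(larger / smaller)

# Approximate extremes read off the chart (percent).
highest_pct = 1.3    # Gemma 7B, non-personal
lowest_pct = 0.002   # Gemini 1.5 Pro, personal
full_span = orders_of_magnitude(highest_pct, lowest_pct)

# Family gap on non-personal data: Gemma 7B vs. Gemini 1.5 Pro.
family_gap = orders_of_magnitude(1.3, 0.012)

print(f"Full range: {full_span:.2f} orders of magnitude")
print(f"Gemma 7B vs. Gemini 1.5 Pro (non-personal): {family_gap:.2f}")
```

On these estimates the full range is about 2.8 orders of magnitude, and the gap between the highest Gemma bar and the corresponding Gemini 1.5 Pro bar is about 2.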

### Interpretation

This chart provides a technical comparison of how different large language models retain or "memorize" information from their training data, with a specific focus on the sensitivity of that data.

* **Data Sensitivity & Memorization:** The consistent trend suggests that all evaluated models memorize data flagged as "personal" at a lower rate than other data. This could be an intended outcome of safety training or data filtering, or an emergent property of how models process different data types. The pattern is consistent with a built-in or learned mechanism that reduces retention of personally identifiable information (PII), but the chart alone cannot distinguish between these explanations.

* **Model Architecture/Scale Effects:** The stark difference between the Gemma and Gemini families suggests that model architecture, training methodology, or data curation practices strongly affect memorization behavior. The Gemini models, particularly "Pro," show memorization rates roughly two orders of magnitude lower than the Gemma models, which may reflect different design priorities regarding privacy and data security.

* **Privacy Implications:** From a privacy and security perspective, the lower memorization of personal data is a positive indicator. However, the non-zero rates, especially for the Gemma models, highlight that memorization remains a potential risk that needs to be managed. The data suggests that model choice is a critical factor in mitigating this risk.

* **Limitations:** The chart does not define what counts as "personal" data (the "Personal?" legend), which is a critical parameter. The absolute percentages are low, but their practical significance depends on the volume of data processed. The reasoning in this section is also abductive, in Peirce's sense: it moves from an observed pattern (lower personal memorization) to a plausible cause (training or architecture choices that prioritize privacy) and then to a practical implication (model selection for sensitive applications), so the inferred cause remains a hypothesis.
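To illustrate the volume caveat, the sketch below converts a percentage into an expected count. The sample volume is purely hypothetical, since the chart reports no sample counts, and `expected_memorized` is an illustrative helper rather than a method taken from the source.

```python
def expected_memorized(rate_pct: float, n_samples: int) -> float:
    """Expected number of memorized samples for a rate given in percent."""
    return rate_pct / 100.0 * n_samples

# Hypothetical volume, chosen only for illustration; not from the chart.
HYPOTHETICAL_SAMPLES = 1_000_000

# Approximate rates read off the chart (percent).
for label, rate_pct in [
    ("Gemini 1.5 Pro, personal", 0.002),
    ("Gemma 7B, non-personal", 1.3),
]:
    count = expected_memorized(rate_pct, HYPOTHETICAL_SAMPLES)
    print(f"{label}: ~{count:,.0f} of {HYPOTHETICAL_SAMPLES:,} samples")
```

At that assumed volume, a 0.002% rate corresponds to about 20 samples and a 1.3% rate to about 13,000, which is why a small percentage does not by itself indicate a negligible absolute number.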