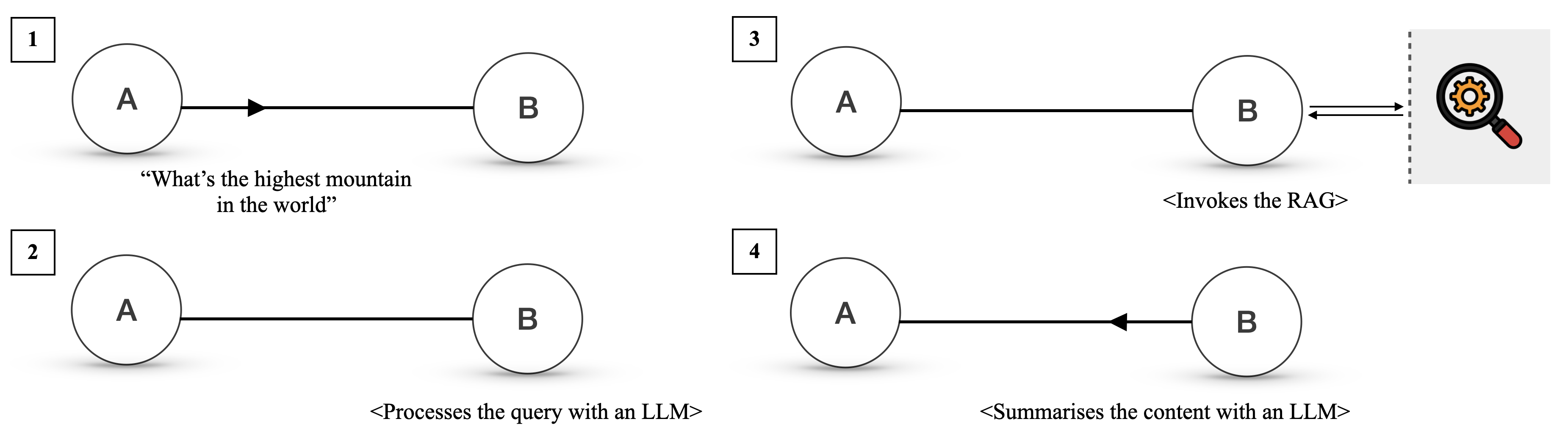

## Flowchart: Multi-Step AI Query Processing System

### Overview

The diagram illustrates a four-step process for answering the query "What's the highest mountain in the world?" using AI components. Each step shows an interaction between two entities (A and B) with a specific action: query submission (step 1), LLM processing (step 2), RAG retrieval drawn as a search icon (step 3), and LLM summarization (step 4).

### Components

1. **Nodes**:

- Two labeled circles (A and B) in each step

- Magnifying glass icon with gear (step 3)

2. **Arrows**:

- Directional flow indicators between nodes

3. **Text Labels**:

- Step numbers (1-4) in top-left corners

- Descriptive text under each step

4. **Interface Element**:

- Search interface with magnifying glass (step 3)

### Detailed Analysis

1. **Step 1**:

- Query "What's the highest mountain in the world?" originates from node A

- Transmitted to node B via rightward arrow

2. **Step 2**:

- Node A processes the query using an LLM (Large Language Model)

- Output flows to node B via bidirectional arrow

3. **Step 3**:

- Node A invokes a RAG (Retrieval-Augmented Generation) system

- Visualized by magnifying glass with gear icon

- Output returns to node B via bidirectional arrow

4. **Step 4**:

- Final summarization occurs at node A using an LLM

- Result transmitted to node B via rightward arrow
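
The four steps above can be sketched as a small pipeline. This is a minimal illustration, not the system shown in the diagram: the function names (`tokenize`, `retrieve`, `answer`), the in-memory `CORPUS`, and the keyword-overlap scoring are all assumptions made for the example, and the two LLM steps are replaced by simple stand-ins so the code runs without any external service.

```python
# Hypothetical in-memory corpus standing in for the external knowledge source.
CORPUS = [
    "Mount Everest is the highest mountain in the world above sea level.",
    "K2 is the second-highest mountain in the world.",
    "The Nile is a major river in Africa.",
]

# Small illustrative stopword list; a real system would not rely on this.
STOPWORDS = {"what", "whats", "is", "the", "in", "a", "of", "at"}


def tokenize(text: str) -> set[str]:
    """Step 2 stand-in: reduce text to keywords (an LLM would do this in practice)."""
    cleaned = (w.strip("?.,!\"'").lower().replace("'", "") for w in text.split())
    return {w for w in cleaned if w and w not in STOPWORDS}


def retrieve(keywords: set[str], top_k: int = 1) -> list[str]:
    """Step 3 stand-in: rank passages by keyword overlap and keep matching ones."""
    ranked = sorted(CORPUS, key=lambda doc: len(keywords & tokenize(doc)), reverse=True)
    return [doc for doc in ranked[:top_k] if keywords & tokenize(doc)]


def answer(query: str) -> str:
    """Run the flow: query (step 1), interpret (2), retrieve (3), summarize (4)."""
    keywords = tokenize(query)
    passages = retrieve(keywords)
    if not passages:
        return "No supporting passage found."
    # Step 4 stand-in: a real system would ask an LLM to summarize the passages.
    return f"Based on retrieved context: {passages[0]}"


if __name__ == "__main__":
    print(answer("What's the highest mountain in the world?"))
```

Keeping retrieval separate from the final summarization step mirrors the diagram's split between steps 3 and 4: the final answer is built only from what the retrieval step returned.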

### Key Observations

- Bidirectional arrows in steps 2 and 3 suggest iterative processing

- Magnifying glass icon represents external data retrieval (RAG)

- All four steps use the same node labels (A and B); steps 1 and 4 show a one-way A→B flow, while steps 2 and 3 are bidirectional

- No numerical data present; purely procedural diagram
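
The observations about arrow direction can be made concrete by encoding the diagram as data. The sketch below is only one possible representation: the `Arrow` and `Step` types and the short action labels are assumptions introduced for illustration, populated from the step descriptions in this document.

```python
from dataclasses import dataclass
from enum import Enum


class Arrow(Enum):
    RIGHTWARD = "A -> B"
    BIDIRECTIONAL = "A <-> B"


@dataclass(frozen=True)
class Step:
    number: int
    action: str
    arrow: Arrow


# Steps as described in the Detailed Analysis section.
STEPS = [
    Step(1, "query submitted", Arrow.RIGHTWARD),
    Step(2, "LLM processing", Arrow.BIDIRECTIONAL),
    Step(3, "RAG retrieval", Arrow.BIDIRECTIONAL),
    Step(4, "LLM summarization", Arrow.RIGHTWARD),
]


def steps_with(arrow: Arrow) -> list[int]:
    """Return the numbers of the steps drawn with the given arrow type."""
    return [step.number for step in STEPS if step.arrow is arrow]


if __name__ == "__main__":
    print(steps_with(Arrow.BIDIRECTIONAL))
    print(steps_with(Arrow.RIGHTWARD))
```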

### Interpretation

This flowchart demonstrates a hybrid AI workflow combining:

1. **Natural Language Processing** (LLM for query understanding)

2. **Knowledge Retrieval** (RAG for retrieving supporting facts from external data)

3. **Content Synthesis** (LLM for final summarization)

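The bidirectional arrows between nodes A and B in steps 2-3 suggest a back-and-forth exchange rather than a one-way handoff, possibly a feedback loop in which LLM output and retrieved information are refined before the final answer. The magnifying glass icon visually reinforces the RAG component's role in information retrieval. The A→B direction in steps 1 and 4 may indicate that B is a central unit receiving inputs from an initiating entity (A), possibly representing a user-interface interaction, although the LLM and RAG actions in steps 2-4 are attributed to node A, so the exact roles of the two nodes remain ambiguous.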

The diagram emphasizes the integration of two AI paradigms, pure language modeling (LLM) and retrieval-augmented generation (RAG), to handle factual queries more effectively than either method alone.