## Diagram: Knowledge Graph (KG) Integration with LLMs for Question Answering

### Overview

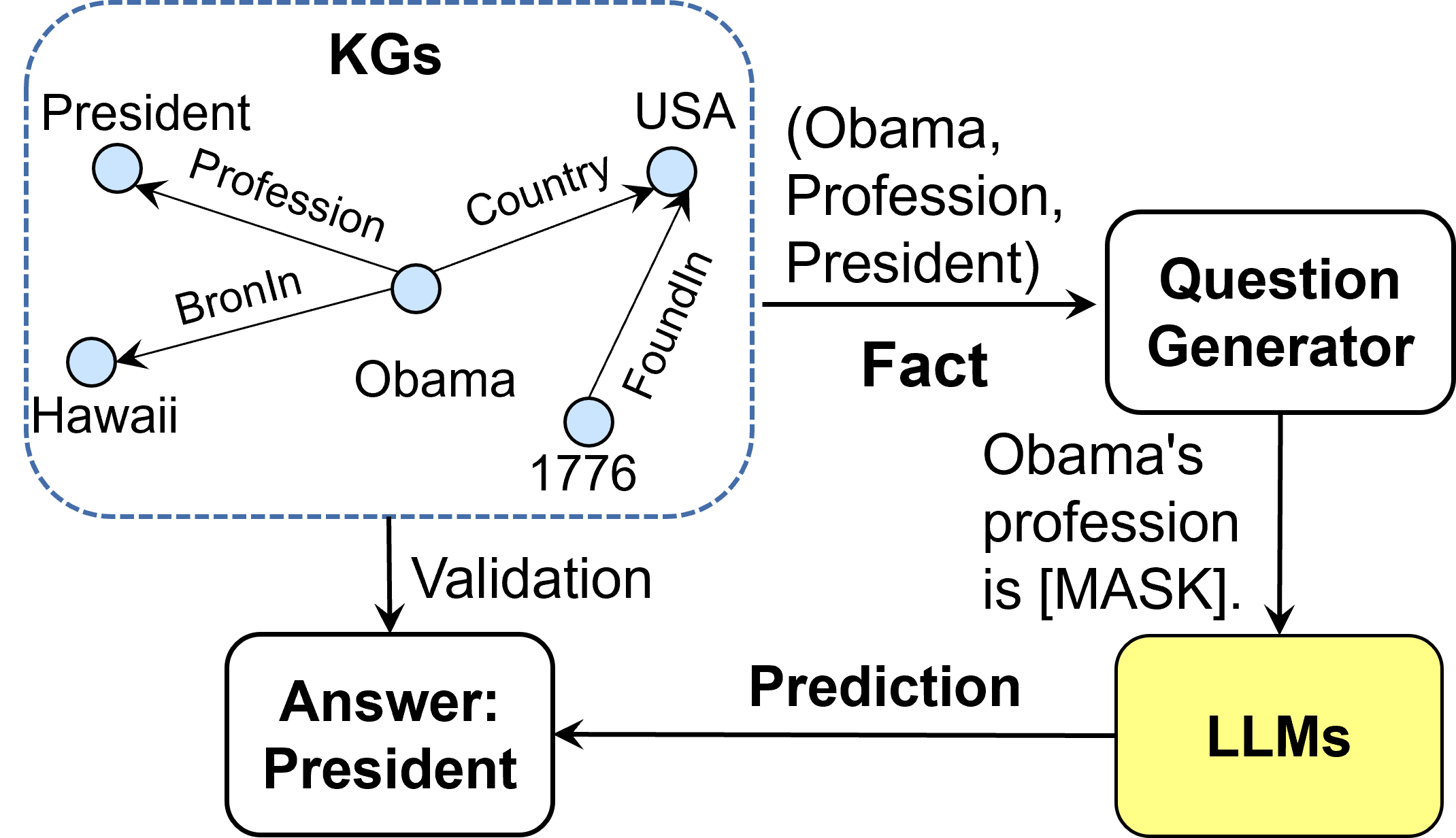

The image is a technical diagram of a process flow in which a Knowledge Graph (KG) is used to generate factual questions that are then answered by Large Language Models (LLMs). The process ends with a validation step that checks the LLM's prediction against the original KG fact. The diagram consists of a KG subgraph connected by labeled arrows to three processing blocks: a Question Generator, LLMs, and an Answer block.

### Components

The diagram is segmented into four primary regions:

1. **Top-Left (Dashed Box):** A Knowledge Graph (KG) subgraph.

2. **Top-Right:** A "Question Generator" block.

3. **Bottom-Right:** A yellow "LLMs" block.

4. **Bottom-Left:** An "Answer: President" block.

**Labels and Text Elements:**

* **KGs Box Title:** "KGs"

* **KG Nodes (Circles):** "President", "Obama", "Hawaii", "USA", "1776"

* **KG Edges (Arrows with Labels):**
  * From "Obama" to "President": "Profession"
  * From "Obama" to "Hawaii": "BronIn" (likely a typo for "BornIn")
  * From "Obama" to "USA": "Country"
  * From "1776" to "USA": "FoundedIn"

* **Flow Labels:**
  * Arrow from KG to Question Generator: "Fact", with the text "(Obama, Profession, President)" above it
  * Arrow from Question Generator to LLMs: "Obama's profession is [MASK]."
  * Arrow from LLMs to Answer block: "Prediction"
  * Arrow from KG to Answer block: "Validation"

* **Block Labels:**
  * "Question Generator"
  * "LLMs"
  * "Answer: President"

### Detailed Analysis

**1. Knowledge Graph (KG) Subgraph:**

* **Central Entity:** "Obama" is the central node.

* **Relationships:**
  * `Obama --(Profession)--> President`: States Obama's profession.
  * `Obama --(BronIn)--> Hawaii`: States Obama's birthplace (the label is likely a typo for "BornIn").
  * `Obama --(Country)--> USA`: States Obama's country.
  * `1776 --(FoundedIn)--> USA`: States the founding year of the USA.

* **Spatial Layout:** The KG is enclosed in a blue dashed rectangle in the top-left quadrant. "Obama" is centrally located within this box. "President" is top-left of Obama, "Hawaii" is bottom-left, "USA" is top-right, and "1776" is bottom-right.
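The subgraph can be held in memory as a list of (head, relation, tail) triples. The sketch below is a minimal illustration under that assumption; the names `KG_TRIPLES`, `build_index`, and `facts_about` are hypothetical and do not appear in the diagram. It also assumes "BronIn" is a typo and stores it as "BornIn", while keeping the "FoundedIn" edge in the direction drawn (1776 to USA).

```python
from collections import defaultdict

# Edges as drawn in the diagram, stored as (head, relation, tail) triples.
# Assumption: the diagram's "BronIn" label is a typo for "BornIn".
KG_TRIPLES = [
    ("Obama", "Profession", "President"),
    ("Obama", "BornIn", "Hawaii"),
    ("Obama", "Country", "USA"),
    ("1776", "FoundedIn", "USA"),  # direction kept as drawn: 1776 -> USA
]


def build_index(triples):
    """Group (relation, tail) pairs by head entity for fast edge lookup."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[head].append((relation, tail))
    return index


def facts_about(index, entity):
    """Return every (entity, relation, tail) triple whose head is `entity`."""
    # dict.get does not trigger the defaultdict factory, so lookups of
    # unknown entities do not insert empty entries into the index.
    return [(entity, relation, tail) for relation, tail in index.get(entity, [])]


index = build_index(KG_TRIPLES)
for fact in facts_about(index, "Obama"):
    print(fact)
```

Indexing by head entity makes "all facts about Obama" a single lookup, which is the kind of query a fact-extraction step would need before handing a triple to the Question Generator.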

**2. Process Flow:**

* **Step 1 - Fact Extraction:** A specific fact, represented as the triple `(Obama, Profession, President)`, is extracted from the KG. This is indicated by the arrow labeled "Fact" pointing from the KG box to the "Question Generator".

* **Step 2 - Question Generation:** The "Question Generator" block processes the fact to create a natural language question with a mask: `"Obama's profession is [MASK]."`

* **Step 3 - LLM Prediction:** The masked question is fed into the "LLMs" block (highlighted in yellow). The LLM generates a prediction for the masked token.

* **Step 4 - Validation:** The LLM's output travels along the "Prediction" arrow to the answer block, while the "Validation" arrow from the KG supplies the original fact. The prediction is checked against the fact's object, "President". The answer block reads "Answer: President", indicating that the LLM's prediction matched the KG fact.
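The four steps can be sketched end to end as follows. This is a minimal illustration, not the diagram's actual implementation: the relation-to-template mapping, the helper names, and `stub_llm` (a canned lookup standing in for a real LLM) are all hypothetical, and only the `Profession` template is taken from the diagram. Validation is assumed to be a case-insensitive exact match against the fact's tail, which is one plausible reading of the "Validation" arrow.

```python
# Hypothetical relation -> cloze template mapping.
# Only the "Profession" template is shown in the diagram.
TEMPLATES = {
    "Profession": "{head}'s profession is [MASK].",
    "BornIn": "{head} was born in [MASK].",
}


def generate_question(fact):
    """Step 2: turn a (head, relation, tail) triple into a masked question."""
    head, relation, _tail = fact
    return TEMPLATES[relation].format(head=head)


def stub_llm(question):
    """Step 3 stand-in for an LLM: returns a canned filler for [MASK]."""
    canned = {"Obama's profession is [MASK].": "president"}
    return canned.get(question, "")


def validate(prediction, fact):
    """Step 4: compare the prediction with the fact's tail, ignoring case."""
    return prediction.strip().lower() == fact[2].strip().lower()


fact = ("Obama", "Profession", "President")  # Step 1: fact taken from the KG
question = generate_question(fact)
prediction = stub_llm(question)
print(question, "->", prediction, "| valid:", validate(prediction, fact))
```

Swapping `stub_llm` for a real masked or generative model would leave the rest of the loop unchanged, since the KG triple is both the source of the question and the reference answer.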

### Key Observations

* **Typo in Diagram:** The edge label "BronIn" is almost certainly a typographical error for "BornIn".

* **Closed-Loop System:** The diagram depicts a closed-loop evaluation or training system. The KG provides ground truth, which is transformed into a testable format (masked question) for the LLM, and the result is validated against the original source.

* **Specific Example:** The entire process is demonstrated using a single, concrete example about Barack Obama's profession, making the abstract process easy to follow.

* **Visual Emphasis:** The "LLMs" block is the only one filled with color (yellow), drawing attention to it as the core component being tested or utilized.

### Interpretation

This diagram illustrates a methodology for **evaluating or training LLMs on factual knowledge** using structured Knowledge Graphs. It shows how a language model, whose knowledge is stored implicitly in its parameters, can be tested against a structured, verifiable knowledge base.

* **Purpose:** The system generates precise, fact-based questions from a KG to probe an LLM's knowledge. The `[MASK]` token follows the cloze-style format of masked language modeling (as in BERT), a format commonly used both in training and in probing what a model knows.

* **Relationships:** The KG acts as the authoritative source of truth. The Question Generator acts as a translator, converting structured triples into natural language. The LLM is the system under test, and the validation step closes the loop by comparing the model's output to the KG's fact.

* **Significance:** This approach addresses a key challenge with LLMs: their tendency to generate plausible but factually incorrect information ("hallucination"). Validating against a KG does not make the model's answers correct, but it lets each answer be checked automatically and yields a clear performance metric, such as the share of predictions that match the KG. The specific example shows the system correctly identifying "President" as the profession, confirming that the LLM's prediction aligns with the structured fact `(Obama, Profession, President)`.

* **Underlying Concept:** The diagram reflects the idea of **neuro-symbolic AI**, in which neural networks (LLMs) are combined with symbolic knowledge representations (KGs) to achieve more reliable and interpretable results.
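A performance metric of the kind described under "Significance" can be as simple as exact-match accuracy over many KG-generated questions. The sketch below assumes predictions are compared to KG answers after lowercasing and trimming whitespace; the function name is hypothetical, the gold answers are the tails of the diagram's Obama triples, and the predictions are invented solely to exercise the computation.

```python
def normalize(text):
    """Lowercase and trim so trivial formatting differences are ignored."""
    return text.strip().lower()


def exact_match_accuracy(predictions, gold_answers):
    """Fraction of predictions that equal the KG answer after normalization."""
    if len(predictions) != len(gold_answers):
        raise ValueError("predictions and gold_answers must have the same length")
    if not gold_answers:
        return 0.0
    correct = sum(
        normalize(pred) == normalize(gold)
        for pred, gold in zip(predictions, gold_answers)
    )
    return correct / len(gold_answers)


# Gold answers: tails of the diagram's Obama triples.
# Predictions: made up purely to illustrate the calculation.
gold = ["President", "Hawaii", "USA"]
preds = ["president", "Chicago", "USA"]
print(exact_match_accuracy(preds, gold))
```

Exact match is strict: a correct paraphrase such as "U.S." for "USA" would be scored as wrong, so a practical evaluation may need alias lists or a softer matching rule.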