## Flowchart: Knowledge Graph (KG) Integration with Large Language Models (LLMs) for Question Answering

### Overview

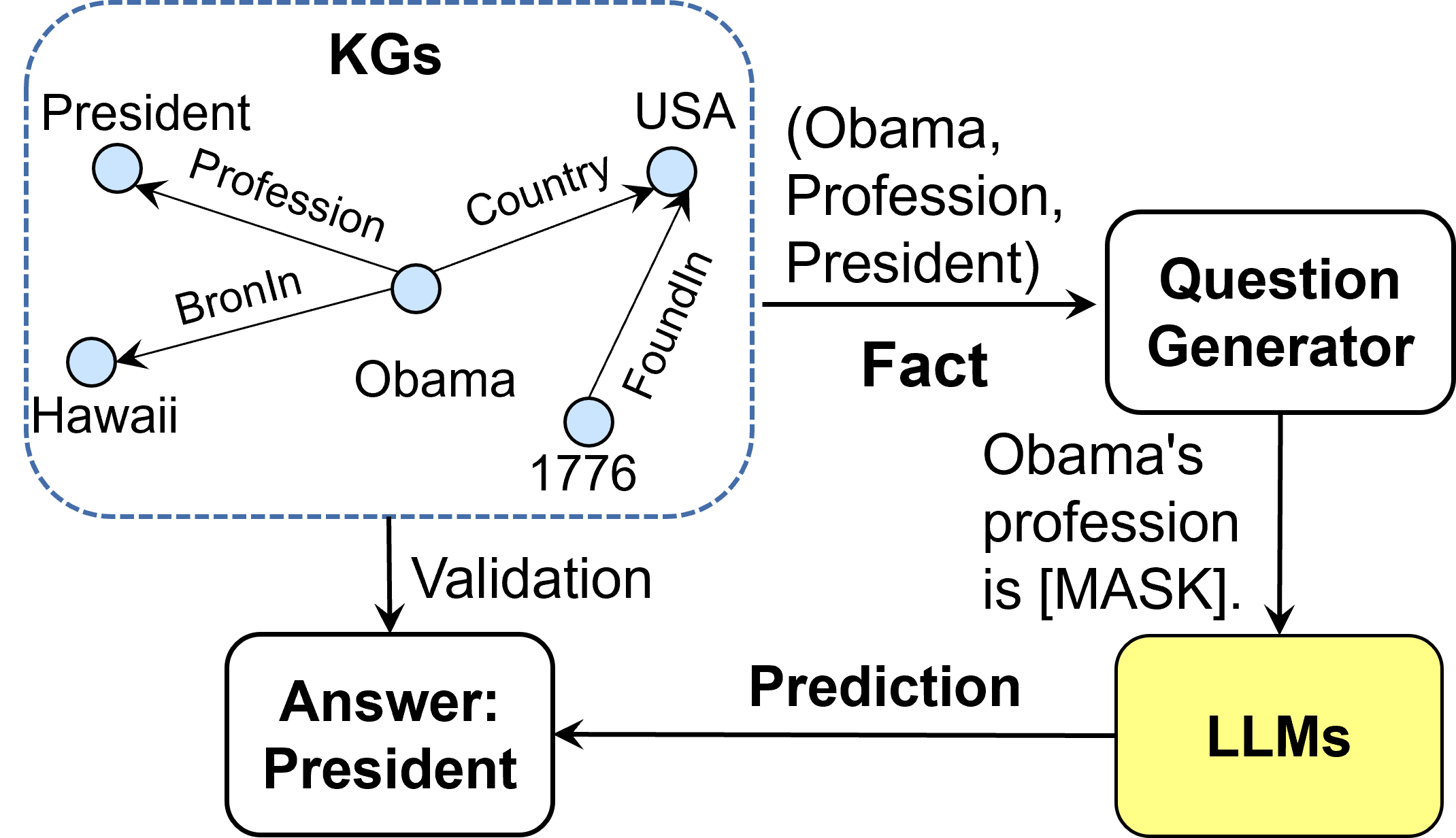

The diagram illustrates a system architecture that integrates a Knowledge Graph (KG) with Large Language Models (LLMs) for question answering. Facts stored in the KG are turned into masked questions, and the LLM's predictions are then checked against the KG to assess their factual accuracy.

### Components

1. **KG Section (Left Box)**:

   - **Nodes**:

     - President

     - USA

     - Profession

     - Country

     - Obama

     - BornIn

     - Hawaii

     - 1776

   - **Edges**:

     - "Profession" (connects President → Profession)

     - "Country" (connects President → USA)

     - "BornIn" (connects President → Hawaii)

     - "FoundedIn" (connects President → 1776)

   - **Structure**: Hierarchical relationships between entities and attributes.

2. **Process Flow (Right Side)**:

   - **Question Generator**:

     - Input: `(Obama, Profession, President)`

     - Output: `Obama's profession is [MASK].`

   - **LLMs**:

     - Receives the masked question and generates a prediction.

   - **Validation**:

     - Compares the LLM prediction (`Answer: President`) against the KG.
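The Question Generator step can be sketched in a few lines of Python. This is a minimal illustration rather than the diagram's actual implementation: `TRIPLES`, `TEMPLATES`, and `generate_question` are hypothetical names, and the one-template-per-relation mapping is an assumption. Only the triple `(Obama, Profession, President)` and the masked sentence come from the diagram.

```python
MASK = "[MASK]"

# A KG stored as (head, relation, tail) triples. Only this triple is taken
# from the diagram's Question Generator input.
TRIPLES = [("Obama", "Profession", "President")]

# Hypothetical mapping from relation name to a question template (an assumption).
TEMPLATES = {"Profession": "{head}'s profession is {mask}."}


def generate_question(triple):
    """Turn a KG triple into a masked question plus its expected answer."""
    head, relation, tail = triple
    template = TEMPLATES[relation]  # raises KeyError for relations without a template
    return template.format(head=head, mask=MASK), tail


question, expected = generate_question(TRIPLES[0])
print(question)  # Obama's profession is [MASK].
print(expected)  # President
```

The tail of the triple is held back as the expected answer, so the same fact both produces the question and later serves as the reference for validation.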

### Detailed Analysis

- **KG Relationships**:

  - `President` is linked to `Profession`, `Country` (USA), `BornIn` (Hawaii), and `FoundedIn` (1776).

  - Entities like `Obama` are implicitly connected to these attributes via the KG structure.

- **Process Workflow**:

  1. The KG provides structured facts as triples (e.g., `(Obama, Profession, President)`).

  2. The Question Generator formulates a query with a masked slot (`[MASK]`).

  3. The LLM predicts the missing value (e.g., "President").

  4. Validation checks whether the prediction matches the answer stored in the KG.
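The four workflow steps can be strung together as a small loop. The sketch below makes several assumptions: `stub_llm` is a stand-in that returns canned text instead of calling a real model, the `Answer:` prefix handling mirrors the format shown in the diagram, and the case-insensitive exact match in `validate` is only one possible comparison rule. All function names are hypothetical.

```python
def make_question(head, relation):
    # Hypothetical template covering only the relation used in the diagram.
    if relation != "Profession":
        raise ValueError(f"no template for relation {relation!r}")
    return f"{head}'s profession is [MASK]."


def stub_llm(question):
    """Stand-in for a real LLM call; returns canned text in the diagram's format."""
    canned = {"Obama's profession is [MASK].": "Answer: President"}
    return canned.get(question, "Answer: unknown")


def validate(raw_prediction, expected):
    """Drop an optional 'Answer:' prefix, then compare case-insensitively to the KG tail."""
    prediction = raw_prediction.split(":", 1)[-1].strip()
    return prediction.casefold() == expected.casefold()


def run(triples, llm):
    """Steps 1-4: read a fact, build the question, query the model, validate."""
    results = []
    for head, relation, tail in triples:
        question = make_question(head, relation)
        results.append((question, validate(llm(question), tail)))
    return results


results = run([("Obama", "Profession", "President")], stub_llm)
print(results)  # [("Obama's profession is [MASK].", True)]
```

Passing the model in as a plain callable keeps the loop independent of any particular LLM client, so a real API call could replace `stub_llm` without changing `run`.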

### Key Observations

- The KG acts as a factual anchor for the LLM's predictions.

- The system explicitly tests the LLM's ability to retrieve structured knowledge (e.g., Obama's profession).

- The validation step checks whether the LLM's output agrees with the facts stored in the KG.

### Interpretation

This architecture pairs a structured knowledge graph with a generative model. Because the KG is used to validate LLM outputs, predictions that contradict stored facts can be flagged, which helps mitigate hallucination risks. The Obama example shows the pipeline turning a KG triple into a question and cross-referencing the LLM's answer against the same triple. The spatial separation of the KG and LLM components in the diagram suggests a modular design in which either side could be updated independently without disrupting the overall workflow.
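One way to picture that modularity is to place the KG and the model behind two small interfaces, so either can be swapped without touching the other. The sketch below is an assumption about how such a design might look, not something the diagram specifies: `KnowledgeSource`, `Predictor`, `InMemoryKG`, `CannedPredictor`, and `check` are all hypothetical names, and the generic question template is invented for illustration.

```python
from typing import Protocol


class KnowledgeSource(Protocol):
    def facts(self) -> list[tuple[str, str, str]]: ...


class Predictor(Protocol):
    def predict(self, question: str) -> str: ...


class InMemoryKG:
    """Hypothetical KG backend holding (head, relation, tail) triples in memory."""

    def __init__(self, triples):
        self._triples = list(triples)

    def facts(self):
        return list(self._triples)


class CannedPredictor:
    """Hypothetical model backend that answers from a fixed lookup table."""

    def __init__(self, answers):
        self._answers = dict(answers)

    def predict(self, question):
        return self._answers.get(question, "")


def check(kg: KnowledgeSource, model: Predictor) -> dict[str, bool]:
    """Ask one masked question per fact and record whether the model matches the KG."""
    report = {}
    for head, relation, tail in kg.facts():
        question = f"{head}'s {relation.lower()} is [MASK]."  # illustrative template
        report[question] = model.predict(question) == tail
    return report


kg = InMemoryKG([("Obama", "Profession", "President")])
good = CannedPredictor({"Obama's profession is [MASK].": "President"})
empty = CannedPredictor({})
print(check(kg, good))   # {"Obama's profession is [MASK].": True}
print(check(kg, empty))  # {"Obama's profession is [MASK].": False}
```

Here `check` depends only on the two protocols, so replacing `CannedPredictor` with a different model wrapper, or `InMemoryKG` with a different store, would leave the validation logic unchanged.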