TECHNICAL ASSET FINGERPRINT

0f9ec4a57499e456a0914184

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

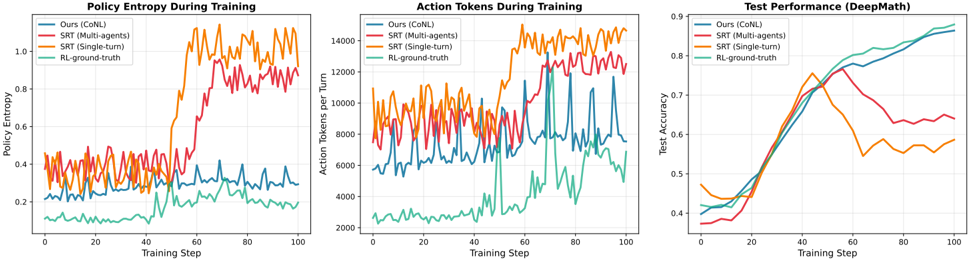

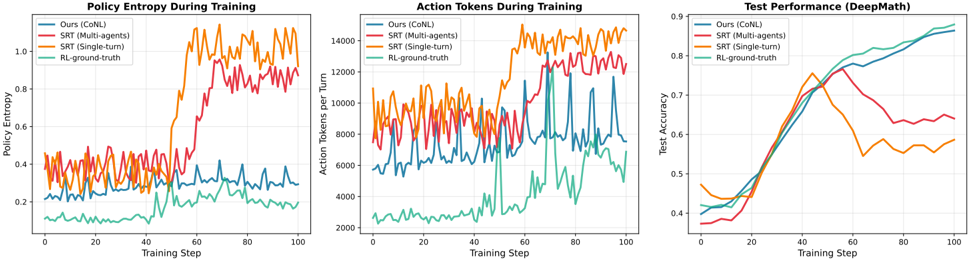

## Chart Type: Multiple Line Graphs Comparing Training Performance

### Overview

The image presents three line graphs comparing four training methods: "Ours (CoNL)", "SRT (Multi-agents)", "SRT (Single-turn)", and "RL-ground-truth". The graphs plot "Policy Entropy During Training", "Action Tokens During Training", and "Test Performance (DeepMath)" against training step.

### Components/Axes

**Graph 1: Policy Entropy During Training**

* **Title:** Policy Entropy During Training

* **Y-axis:** Policy Entropy, ranging from 0.0 to 1.0 in increments of 0.2.

* **X-axis:** Training Step, ranging from 0 to 100 in increments of 20.

* **Legend (Top-Left):**

    * Blue: Ours (CoNL)

    * Red: SRT (Multi-agents)

    * Orange: SRT (Single-turn)

    * Teal: RL-ground-truth

**Graph 2: Action Tokens During Training**

* **Title:** Action Tokens During Training

* **Y-axis:** Action Tokens per Turn, ranging from 2000 to 14000 in increments of 2000.

* **X-axis:** Training Step, ranging from 0 to 100 in increments of 20.

* **Legend (Top-Left):**

    * Blue: Ours (CoNL)

    * Red: SRT (Multi-agents)

    * Orange: SRT (Single-turn)

    * Teal: RL-ground-truth

**Graph 3: Test Performance (DeepMath)**

* **Title:** Test Performance (DeepMath)

* **Y-axis:** Test Accuracy, ranging from 0.4 to 0.9 in increments of 0.1.

* **X-axis:** Training Step, ranging from 0 to 100 in increments of 20.

* **Legend (Top-Left):**

    * Blue: Ours (CoNL)

    * Red: SRT (Multi-agents)

    * Orange: SRT (Single-turn)

    * Teal: RL-ground-truth

### Detailed Analysis

**Graph 1: Policy Entropy During Training**

* **Ours (CoNL) (Blue):** Stays relatively constant around 0.3.

* **SRT (Multi-agents) (Red):** Starts around 0.35, rises to approximately 0.5 around step 50 and to approximately 0.8 around step 70, then fluctuates between 0.8 and 0.9.

* **SRT (Single-turn) (Orange):** Starts around 0.3, increases sharply around step 50 to approximately 1.0, then fluctuates between 0.9 and 1.1.

* **RL-ground-truth (Teal):** Starts around 0.1, increases gradually to approximately 0.25 by step 100.

**Graph 2: Action Tokens During Training**

* **Ours (CoNL) (Blue):** Fluctuates between approximately 4000 and 8000.

* **SRT (Multi-agents) (Red):** Fluctuates between approximately 8000 and 12000.

* **SRT (Single-turn) (Orange):** Fluctuates between approximately 10000 and 14000.

* **RL-ground-truth (Teal):** Fluctuates between approximately 2000 and 4000.

**Graph 3: Test Performance (DeepMath)**

* **Ours (CoNL) (Blue):** Increases steadily from approximately 0.4 to 0.85.

* **SRT (Multi-agents) (Red):** Increases from approximately 0.38 to a peak of approximately 0.75 around step 50, then decreases and fluctuates between 0.6 and 0.65.

* **SRT (Single-turn) (Orange):** Increases from approximately 0.4 to a peak of approximately 0.78 around step 40, then decreases and fluctuates between 0.5 and 0.6.

* **RL-ground-truth (Teal):** Increases steadily from approximately 0.4 to 0.9.

### Key Observations

* In the Policy Entropy graph, SRT (Single-turn) and SRT (Multi-agents) show a significant increase in entropy during training, while Ours (CoNL) remains relatively stable. RL-ground-truth shows a gradual increase.

* In the Action Tokens graph, SRT (Single-turn) consistently uses the most action tokens, followed by SRT (Multi-agents), Ours (CoNL), and RL-ground-truth.

* In the Test Performance graph, RL-ground-truth and Ours (CoNL) achieve the highest test accuracy, while SRT (Single-turn) and SRT (Multi-agents) peak earlier and then plateau or decrease.

### Interpretation

The graphs suggest that while SRT (Single-turn) and SRT (Multi-agents) initially perform well, their performance plateaus or decreases in terms of test accuracy, possibly due to increased entropy and a higher number of action tokens used. Ours (CoNL) and RL-ground-truth, on the other hand, show more stable and ultimately better test performance, indicating a more effective learning process for the DeepMath task. The higher action tokens used by SRT methods might indicate less efficient strategies. The policy entropy results suggest that the SRT methods explore a wider range of actions, which might lead to instability in the long run.
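The description does not say how the plotted policy entropy is computed. A common convention, assumed here, is the Shannon entropy of the policy's next-token distribution, averaged over the generated tokens. The function names and toy distributions in this sketch are illustrative and are not taken from the figure.

```python
import math
from typing import Sequence


def token_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def mean_policy_entropy(distributions: Sequence[Sequence[float]]) -> float:
    """Average per-token entropy over a generated sequence."""
    return sum(token_entropy(d) for d in distributions) / len(distributions)


# Toy distributions, assumed for illustration only.
peaked = [0.97, 0.01, 0.01, 0.01]  # confident policy: low entropy
flat = [0.25, 0.25, 0.25, 0.25]    # uncertain policy: entropy equals ln(4)

print(round(token_entropy(peaked), 3))
print(round(token_entropy(flat), 3))
print(round(mean_policy_entropy([peaked, flat]), 3))
```

Under this convention, a rising entropy curve means the policy is spreading probability over more candidate tokens, which matches the reading of the SRT curves as increasingly exploratory.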

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Charts: Training Performance Comparison

### Overview

The image presents three line charts displayed side-by-side, comparing the performance of four different reinforcement learning algorithms during training. The charts track Policy Entropy, Action Tokens per Turn, and Test Performance (DeepMath) over 100 training steps. Each chart uses the same color scheme to represent the four algorithms: Ours (CoNL), SRT (Multi-agents), SRT (Single-turn), and RL-ground-truth.

### Components/Axes

Each chart shares the following components:

* **X-axis:** Training Step (ranging from 0 to 100, with increments of 10)

* **Y-axis:** Varies depending on the chart:

    * Chart 1: Policy Entropy (ranging from approximately 0.1 to 0.9, with increments of 0.1)

    * Chart 2: Action Tokens per Turn (ranging from approximately 2000 to 14000, with increments of 2000)

    * Chart 3: Test Accuracy (ranging from approximately 0.35 to 0.9, with increments of 0.05)

* **Legend:** Located at the top-left of each chart, identifying the algorithms by color:

    * Ours (CoNL) - Teal

    * SRT (Multi-agents) - Blue

    * SRT (Single-turn) - Red

    * RL-ground-truth - Orange

### Detailed Analysis

**Chart 1: Policy Entropy During Training**

* **Ours (CoNL) - Teal:** The line fluctuates around a low value, approximately 0.2, with minor oscillations.

* **SRT (Multi-agents) - Blue:** The line starts at approximately 0.4 and remains relatively stable, with slight variations.

* **SRT (Single-turn) - Red:** The line begins at approximately 0.5 and exhibits a significant upward trend, reaching a peak of around 0.85 at step 60, then decreasing slightly to approximately 0.75 at step 100.

* **RL-ground-truth - Orange:** The line starts at approximately 0.6 and shows a strong upward trend, peaking at around 0.9 at step 60, then decreasing to approximately 0.8 at step 100.

**Chart 2: Action Tokens During Training**

* **Ours (CoNL) - Teal:** The line fluctuates around a value of approximately 3000, with oscillations.

* **SRT (Multi-agents) - Blue:** The line starts at approximately 4000 and remains relatively stable, with minor variations.

* **SRT (Single-turn) - Red:** The line begins at approximately 6000 and exhibits a fluctuating pattern, generally staying between 6000 and 10000.

* **RL-ground-truth - Orange:** The line starts at approximately 8000 and shows a fluctuating pattern, generally staying between 8000 and 14000.

**Chart 3: Test Performance (DeepMath)**

* **Ours (CoNL) - Teal:** The line starts at approximately 0.4 and exhibits a steady upward trend, reaching approximately 0.75 at step 100.

* **SRT (Multi-agents) - Blue:** The line starts at approximately 0.4 and shows a rapid upward trend, reaching approximately 0.85 at step 60, then leveling off slightly to approximately 0.8 at step 100.

* **SRT (Single-turn) - Red:** The line begins at approximately 0.4 and exhibits a slow upward trend, reaching approximately 0.6 at step 100.

* **RL-ground-truth - Orange:** The line starts at approximately 0.4 and shows a rapid upward trend, peaking at approximately 0.9 at step 40, then decreasing to approximately 0.65 at step 100.

### Key Observations

* The "RL-ground-truth" algorithm consistently demonstrates the highest policy entropy and action tokens per turn, indicating a more exploratory behavior.

* "SRT (Multi-agents)" and "Ours (CoNL)" show relatively stable policy entropy and action tokens per turn.

* "SRT (Single-turn)" exhibits the most significant increase in policy entropy during training.

* In terms of test accuracy, "SRT (Multi-agents)" initially outperforms the other algorithms, but its performance plateaus after step 60. "Ours (CoNL)" shows a consistent improvement in test accuracy throughout the training process.

* "RL-ground-truth" achieves high test accuracy initially but experiences a decline in performance towards the end of the training process.

### Interpretation

The charts suggest that the "Ours (CoNL)" algorithm demonstrates a balanced approach to exploration and exploitation, as evidenced by its moderate policy entropy and consistent improvement in test accuracy. The "SRT (Multi-agents)" algorithm achieves high initial test accuracy but may suffer from overfitting or a lack of continued learning. The "RL-ground-truth" algorithm, while exhibiting high exploration, may not generalize well to unseen data, as indicated by its declining test accuracy. The "SRT (Single-turn)" algorithm's increasing policy entropy suggests it is becoming more exploratory, but its test accuracy remains relatively low.

The relationship between the charts is evident: higher policy entropy and action tokens per turn often correlate with increased exploration, which can lead to higher initial test accuracy but may also result in decreased generalization performance. The optimal algorithm appears to be one that balances exploration and exploitation to achieve both high test accuracy and good generalization capabilities. The decline in "RL-ground-truth" performance suggests that excessive exploration without sufficient exploitation can be detrimental to long-term performance. The consistent improvement of "Ours (CoNL)" suggests a more robust learning strategy.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Composite Training Performance Charts: Policy Entropy, Action Tokens, and Test Accuracy

### Overview

The image displays three side-by-side line charts comparing the training dynamics and final performance of four different methods or models. The charts track metrics over 100 training steps. The overall purpose is to demonstrate the behavior and effectiveness of a proposed method ("Ours (CoNL)") against three baselines: "SRT (Multi-agents)", "SRT (Single-agent)", and "RL-ground-truth".

### Components/Axes

**Common Elements Across All Charts:**

* **X-Axis:** Labeled "Training Step". Scale runs from 0 to 100 with major ticks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Legend:** Located in the top-left corner of each chart. Contains four entries with corresponding line colors:

    * `Ours (CoNL)`: Blue line

    * `SRT (Multi-agents)`: Orange line

    * `SRT (Single-agent)`: Red line

    * `RL-ground-truth`: Green line

* **Grid:** Light gray horizontal and vertical grid lines are present.

**Chart 1 (Left): Policy Entropy During Training**

* **Title:** "Policy Entropy During Training"

* **Y-Axis:** Labeled "Policy Entropy". Scale runs from 0.0 to 1.0 with major ticks at 0.2 intervals.

**Chart 2 (Center): Action Tokens During Training**

* **Title:** "Action Tokens During Training"

* **Y-Axis:** Labeled "Action Tokens per Turn". Scale runs from 2000 to 14000 with major ticks at 2000 intervals.

**Chart 3 (Right): Test Performance (DeepMath)**

* **Title:** "Test Performance (DeepMath)"

* **Y-Axis:** Labeled "Test Accuracy". Scale runs from 0.4 to 0.9 with major ticks at 0.1 intervals.

### Detailed Analysis

**Chart 1: Policy Entropy During Training**

* **Trend Verification:**

    * **Ours (CoNL) [Blue]:** Shows a relatively stable, low-entropy trend with minor fluctuations. It stays below both SRT lines for most of the training.

    * **SRT (Multi-agents) [Orange]:** Exhibits high volatility and a general upward trend, especially after step 40. It becomes the highest-entropy line from step ~50 onward.

    * **SRT (Single-agent) [Red]:** Also volatile, with a significant upward trend starting around step 40, closely following but generally below the multi-agent version.

    * **RL-ground-truth [Green]:** Shows the lowest and most stable entropy, with a slight downward trend over time.

* **Approximate Data Points (Estimated from grid):**

    * At Step 0: All methods start between ~0.2 and ~0.4.

    * At Step 50: Blue ~0.3, Green ~0.2, Red ~0.6, Orange ~0.8.

    * At Step 100: Blue ~0.3, Green ~0.2, Red ~0.9, Orange ~1.0.

**Chart 2: Action Tokens During Training**

* **Trend Verification:**

    * **Ours (CoNL) [Blue]:** Displays a moderately volatile but generally stable trend, oscillating between ~6000 and ~10000 tokens.

    * **SRT (Multi-agents) [Orange]:** Shows a strong upward trend with high volatility, rising from ~8000 to over 14000 tokens.

    * **SRT (Single-agent) [Red]:** Follows a similar upward and volatile pattern to the multi-agent version, but at a slightly lower magnitude.

    * **RL-ground-truth [Green]:** Starts very low (~2500), remains low until step ~60, then exhibits a sharp, volatile increase, peaking near 12000 before dropping again.

* **Approximate Data Points (Estimated from grid):**

    * At Step 0: Green ~2500, Blue ~6000, Red ~8000, Orange ~8000.

    * At Step 60: Green ~3000, Blue ~8000, Red ~10000, Orange ~12000.

    * At Step 100: Green ~6000, Blue ~8000, Red ~12000, Orange ~14000.

**Chart 3: Test Performance (DeepMath)**

* **Trend Verification:**

    * **Ours (CoNL) [Blue]:** Shows a smooth, steady, and strong upward trend, achieving the highest final accuracy.

    * **SRT (Multi-agents) [Orange]:** Increases rapidly until step ~40, then experiences a sharp decline, followed by a partial recovery.

    * **SRT (Single-agent) [Red]:** Follows a similar initial rise to the multi-agent version, peaks around step 50, then declines and stabilizes at a lower level.

    * **RL-ground-truth [Green]:** Rises steadily, closely tracking the blue line until step ~40, then continues a smooth ascent to become the second-best performer.

* **Approximate Data Points (Estimated from grid):**

    * At Step 0: All methods start between ~0.40 and ~0.45.

    * At Step 40: All methods are clustered between ~0.65 and ~0.70.

    * At Step 100: Blue ~0.88, Green ~0.85, Red ~0.65, Orange ~0.58.
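The step-100 readings can be turned into an explicit ranking. The sketch below copies the approximate values from the list above, using this description's color-to-method legend. The variable names are illustrative, and the numbers are chart estimates rather than exact results.

```python
# Step-100 test-accuracy readings copied from the list above (approximate,
# estimated from the chart grid). Colors follow this description's legend.
final_accuracy = {
    "Ours (CoNL)": 0.88,         # blue
    "RL-ground-truth": 0.85,     # green
    "SRT (Single-agent)": 0.65,  # red
    "SRT (Multi-agents)": 0.58,  # orange
}

# Sort methods from highest to lowest final accuracy.
ranking = sorted(final_accuracy.items(), key=lambda kv: kv[1], reverse=True)
best_name, best_acc = ranking[0]

for name, acc in ranking:
    gap = best_acc - acc
    print(f"{name:<20} {acc:.2f}  (gap to best: {gap:.2f})")
```

Because the readings are eyeballed from grid lines, the 0.03 gap between the top two methods should be treated as within reading error.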

### Key Observations

1. **Stability vs. Volatility:** The proposed method (`Ours (CoNL)`) demonstrates significantly more stable training dynamics (lower policy entropy variance, moderate action token usage) compared to the highly volatile SRT methods.

2. **Performance Divergence:** While all methods improve initially on the test task, a major divergence occurs after ~40-50 training steps. The SRT methods (especially multi-agent) suffer a performance collapse, whereas `Ours (CoNL)` and `RL-ground-truth` continue to improve steadily.

3. **Entropy and Token Correlation:** The rise in policy entropy for SRT methods (Chart 1) correlates with a dramatic increase in action tokens per turn (Chart 2), suggesting their policies become more random and verbose without gaining effectiveness.

4. **Ground Truth Benchmark:** The `RL-ground-truth` line serves as a high-performance baseline. `Ours (CoNL)` matches or slightly exceeds its final test accuracy while using more action tokens but maintaining lower policy entropy.
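As a rough check on observation 3, the step-100 readings from the "Approximate Data Points" lists can be compared across methods. This sketch computes a Pearson correlation over those four pairs. It is a cross-method comparison at a single step with only four points, so it is weaker evidence than the within-run trend the observation describes, and the helper name `pearson` is illustrative.

```python
import math

# Step-100 readings from the "Approximate Data Points" lists, ordered as:
# Ours (CoNL), RL-ground-truth, SRT (Single-agent), SRT (Multi-agents).
entropy_at_100 = [0.3, 0.2, 0.9, 1.0]
tokens_at_100 = [8000, 6000, 12000, 14000]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


r = pearson(entropy_at_100, tokens_at_100)
print(f"Pearson r across methods at step 100: {r:.2f}")
```

With these readings the coefficient comes out close to 1, consistent with the claim that higher-entropy policies also produce longer outputs.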

### Interpretation

The data suggests that the `Ours (CoNL)` method achieves a superior balance between exploration (measured by policy entropy) and efficient, goal-directed behavior (measured by test accuracy and action token usage). The SRT methods, particularly the multi-agent variant, appear to suffer from an instability or "collapse" during training. Their policies become increasingly random (high entropy) and generate excessively long action sequences without improving, and in fact harming, final task performance. This could indicate issues with credit assignment, non-stationarity, or reward hacking in those multi-agent setups. The charts effectively argue that the proposed CoNL method is more robust and sample-efficient, converging to a high-performing policy without the pathological behaviors exhibited by the baselines. The `RL-ground-truth` performance validates that high accuracy is achievable, and CoNL meets this benchmark.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Training Metrics Comparison

### Overview

The image contains three line graphs comparing training metrics across four methods: CoNL (Ours), SRT (Multi-agents), SRT (Single-turn), and RL-ground-truth. Each graph tracks a different metric over 100 training steps: Policy Entropy, Action Tokens per Turn, and Test Accuracy (DeepMath).

---

### Components/Axes

1. **Policy Entropy During Training**

    - **X-axis**: Training Step (0–100)

    - **Y-axis**: Policy Entropy (0.0–1.0)

    - **Legend**:

        - Blue: CoNL (Ours)

        - Red: SRT (Multi-agents)

        - Orange: SRT (Single-turn)

        - Green: RL-ground-truth

2. **Action Tokens During Training**

    - **X-axis**: Training Step (0–100)

    - **Y-axis**: Action Tokens per Turn (0–14,000)

    - **Legend**: Same as above.

3. **Test Performance (DeepMath)**

    - **X-axis**: Training Step (0–100)

    - **Y-axis**: Test Accuracy (0.0–0.9)

    - **Legend**: Same as above.

---

### Detailed Analysis

#### Policy Entropy During Training

- **CoNL (Blue)**: Starts at ~0.25, fluctuates between 0.2–0.4, stabilizes near 0.35 by step 100.

- **SRT (Multi-agents) (Red)**: Peaks at ~0.9 at step 60, drops to ~0.6 by step 100.

- **SRT (Single-turn) (Orange)**: Peaks at ~0.85 at step 40, declines to ~0.6 by step 100.

- **RL-ground-truth (Green)**: Smoothly increases from ~0.1 to ~0.25.

#### Action Tokens During Training

- **CoNL (Blue)**: Mostly ranges 5,000–10,000, with a peak of ~12,000 at step 60.

- **SRT (Multi-agents) (Red)**: Peaks at ~10,000 at step 40, drops to ~6,000 by step 100.

- **SRT (Single-turn) (Orange)**: Peaks at ~13,000 at step 60, declines to ~8,000 by step 100.

- **RL-ground-truth (Green)**: Stable at ~3,000.

#### Test Performance (DeepMath)

- **CoNL (Blue)**: Steady rise from ~0.4 to ~0.85.

- **SRT (Multi-agents) (Red)**: Peaks at ~0.75 at step 60, drops to ~0.6 by step 100.

- **SRT (Single-turn) (Orange)**: Peaks at ~0.7 at step 40, declines to ~0.5 by step 100.

- **RL-ground-truth (Green)**: Smoothly increases from ~0.4 to ~0.88.
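The peak-then-decline pattern can be quantified as the fraction of peak accuracy lost by step 100. The sketch below uses the approximate readings from the list above. For CoNL and RL-ground-truth it assumes the peak equals the final value, since both are described as rising throughout. The helper `relative_drop` is an illustrative name.

```python
# (peak accuracy, final accuracy) pairs as reported in the list above.
# CoNL and RL-ground-truth are described as rising throughout, so their
# peak is assumed to equal their final value.
readings = {
    "CoNL (Ours)": (0.85, 0.85),
    "SRT (Multi-agents)": (0.75, 0.60),
    "SRT (Single-turn)": (0.70, 0.50),
    "RL-ground-truth": (0.88, 0.88),
}


def relative_drop(peak: float, final: float) -> float:
    """Fraction of peak accuracy lost by the end of training."""
    return (peak - final) / peak


drops = {name: relative_drop(p, f) for name, (p, f) in readings.items()}
for name, d in drops.items():
    print(f"{name:<20} {d:.1%}")
```

On these readings, SRT (Single-turn) loses a larger share of its peak accuracy than SRT (Multi-agents), while the other two methods show no drop.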

---

### Key Observations

1. **RL-ground-truth** consistently outperforms other methods in test accuracy and maintains lower policy entropy.

2. **SRT (Multi-agents)** shows the highest policy entropy spikes but underperforms in test accuracy after step 60.

3. **SRT (Single-turn)** has the highest action tokens but exhibits declining test performance after step 40.

4. **CoNL** demonstrates stable learning with moderate entropy and action tokens, achieving the second-highest test accuracy.

---

### Interpretation

- **RL-ground-truth** likely represents an idealized or benchmark model, showing optimal balance between entropy, action efficiency, and accuracy.

- **SRT methods** (Multi-agents/Single-turn) exhibit higher variability, suggesting potential instability in multi-agent coordination or overfitting in single-turn scenarios.

- **CoNL**'s steady improvement indicates robust training dynamics, though it lags behind RL-ground-truth in final performance.

- The divergence between action tokens and test accuracy (e.g., SRT Single-turn's high tokens but low final accuracy) implies that token volume alone does not guarantee performance.

The data highlights trade-offs between exploration (entropy), computational efficiency (tokens), and generalization (test accuracy), with RL-ground-truth emerging as the most effective approach.
