## Diagram: Recurrent Neural Network with KV Cache Illustration

### Overview

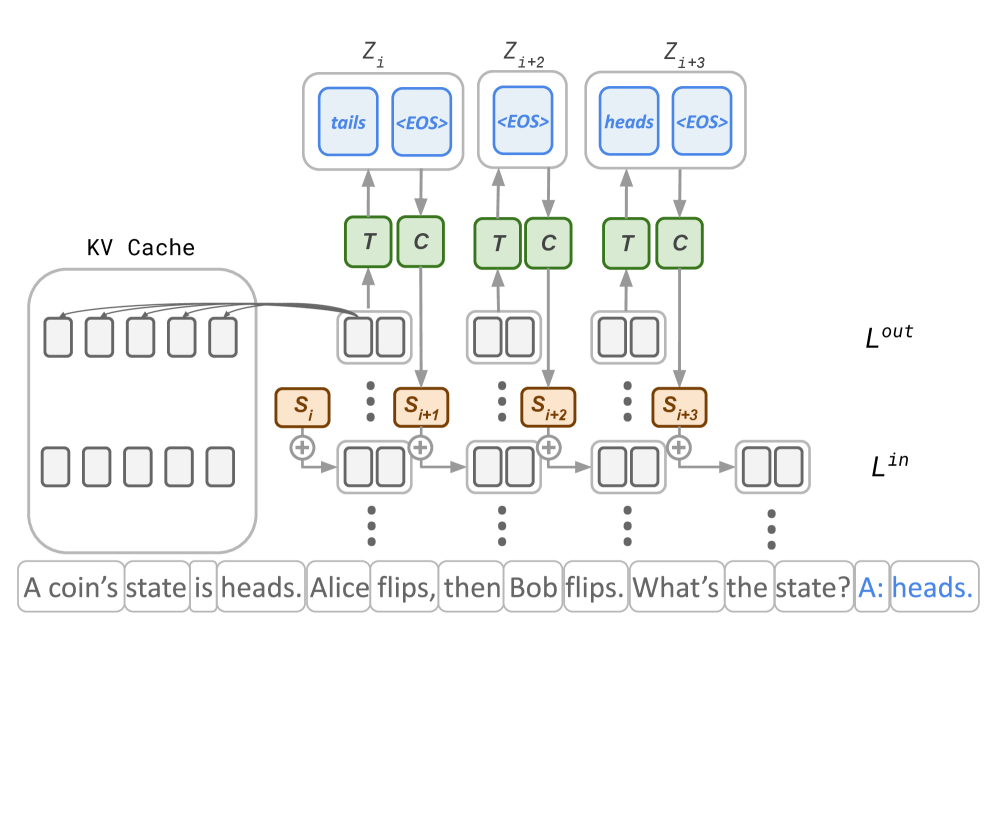

The diagram illustrates a recurrent neural network architecture that incorporates a key-value (KV) cache. It shows how information flows through the network, with emphasis on how past states are stored in and retrieved from the cache. A short coin-flip text example accompanies the diagram.

### Components

The diagram consists of several key components:

* **L<sub>in</sub>**: Represents the input layer.

* **L<sub>out</sub>**: Represents the output layer.

* **S<sub>i</sub>, S<sub>i+1</sub>, S<sub>i+2</sub>, S<sub>i+3</sub>**: Represent the hidden states at different time steps. The "+" symbol indicates an addition operation.

* **KV Cache**: A storage mechanism for past key-value pairs.

* **T**: Represents a "Transformer" block within the KV cache.

* **C**: Represents a "Cache" block within the KV cache.

* **Z<sub>i</sub>, Z<sub>i+1</sub>, Z<sub>i+2</sub>, Z<sub>i+3</sub>**: Represent the output tokens at different time steps.

* **Textual Example**: "A coin's state is heads. Alice flips, then Bob flips. What's the state? A: heads."

### Detailed Analysis

The diagram shows a sequential flow of information.

1. **Input Layer (L<sub>in</sub>)**: The input layer consists of a series of unlabeled boxes, representing the initial input sequence.

2. **Hidden States**: The input is processed through a series of hidden states (S<sub>i</sub> to S<sub>i+3</sub>). Each hidden state is obtained by adding an input-dependent term to the previous hidden state, as marked by the "+" symbols.

3. **KV Cache**: The KV cache stores key-value pairs derived from the hidden states. The cache is structured with "Transformer" (T) and "Cache" (C) blocks. The arrows indicate that the hidden states are used to populate the KV cache.

4. **Output Layer (L<sub>out</sub>)**: The output layer receives information from both the current hidden state and the KV cache. The KV cache provides context from previous time steps.

5. **Output Tokens**: The output layer generates output tokens (Z<sub>i</sub> to Z<sub>i+3</sub>).

* Z<sub>i</sub> contains the text "tails" and `<EOS>`.

* Z<sub>i+1</sub> contains the text `<EOS>`.

* Z<sub>i+2</sub> contains the text "heads" and `<EOS>`.
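The flow in steps 2 and 3 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the architecture shown in the figure: the names `KVCache` and `step` are hypothetical, the update vectors are made-up numbers, and using the hidden state itself as both key and value is a simplification chosen only to keep the example short.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Append-only store holding one (key, value) pair per time step."""
    keys: list = field(default_factory=list)
    values: list = field(default_factory=list)

    def append(self, key, value):
        self.keys.append(key)
        self.values.append(value)


def step(state, update, cache):
    """Additive update S_{i+1} = S_i + update, followed by a cache write."""
    new_state = [s + u for s, u in zip(state, update)]
    # Assumption: the state serves as both key and value. A real model
    # would typically apply separate learned projections here.
    cache.append(list(new_state), list(new_state))
    return new_state


cache = KVCache()
state = [0.0, 0.0]
for update in ([1.0, 0.0], [0.0, 2.0], [1.0, 1.0]):
    state = step(state, update, cache)

print(state)            # final hidden state after three additive updates
print(len(cache.keys))  # one cached entry per time step
```

Because the cache is append-only, earlier entries are never recomputed; each new step only adds one pair.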

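The textual example at the bottom of the diagram reads: "A coin's state is heads. Alice flips, then Bob flips. What's the state? A: heads." If each flip is read as turning the coin over, two flips return it to its starting face, which matches the answer given. The example likely illustrates how the network can track state changes over time.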
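The state tracking that the coin example calls for can be written out directly. The sketch below assumes that "flips" means turning the coin over; the function name `track_coin` is hypothetical and is not taken from the diagram.

```python
def track_coin(initial, flippers):
    """Return the coin's face after each person in `flippers` turns it over.

    The result is the full trace of states, starting with the initial one.
    """
    other_face = {"heads": "tails", "tails": "heads"}
    trace = [initial]
    for _ in flippers:
        trace.append(other_face[trace[-1]])
    return trace


trace = track_coin("heads", ["Alice", "Bob"])
print(trace)      # ['heads', 'tails', 'heads']
print(trace[-1])  # heads
```

The intermediate state after Alice's flip is "tails", and the final state after Bob's flip is "heads", in agreement with the answer in the example.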

### Key Observations

* The KV cache is crucial for maintaining context across time steps.

* The diagram suggests a recurrent process where the hidden state at each time step depends on the previous hidden state and the current input.

* The `<EOS>` token likely signifies the end of a sequence.

* The separate Transformer (T) and Cache (C) blocks suggest that the KV cache has internal structure rather than being a single flat store.

### Interpretation

This diagram illustrates a recurrent neural network architecture designed to handle sequential data. The KV cache is the key component: it lets the network access information from past time steps, which matters for tasks where context is crucial, such as natural language processing or time series analysis. The "Transformer" blocks within the KV cache hint at attention mechanisms, which would let the network focus on the most relevant past information. Caching in this way is also a technique for improving the efficiency and performance of such models.

The coin flip example shows the kind of task this supports: tracking a state as it changes over time and predicting its value from past observations. The diagram is a simplified representation of a more complex system, but it conveys the core principles of recurrent processing and the role of the KV cache in maintaining context.
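To make the attention reading concrete, here is a minimal sketch of how a query could retrieve context from cached key-value pairs. The diagram does not specify the retrieval rule, so scaled dot-product attention is an assumption here, and the function name `attend` and all numeric values are illustrative only.

```python
import math


def attend(query, keys, values):
    """Return a softmax-weighted average of `values` and the weights used.

    Each weight comes from the scaled dot product of `query` with one key.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    # Subtract the maximum score before exponentiating for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(values[0])
    context = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return context, weights


# Illustrative cache contents: three past time steps.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]

context, weights = attend([4.0, 0.0], keys, values)
print(weights)  # the first and third keys align with the query and dominate
print(context)
```

The query points along the first dimension, so the second cached entry, whose key is orthogonal to it, receives the smallest weight and contributes little to the returned context.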