
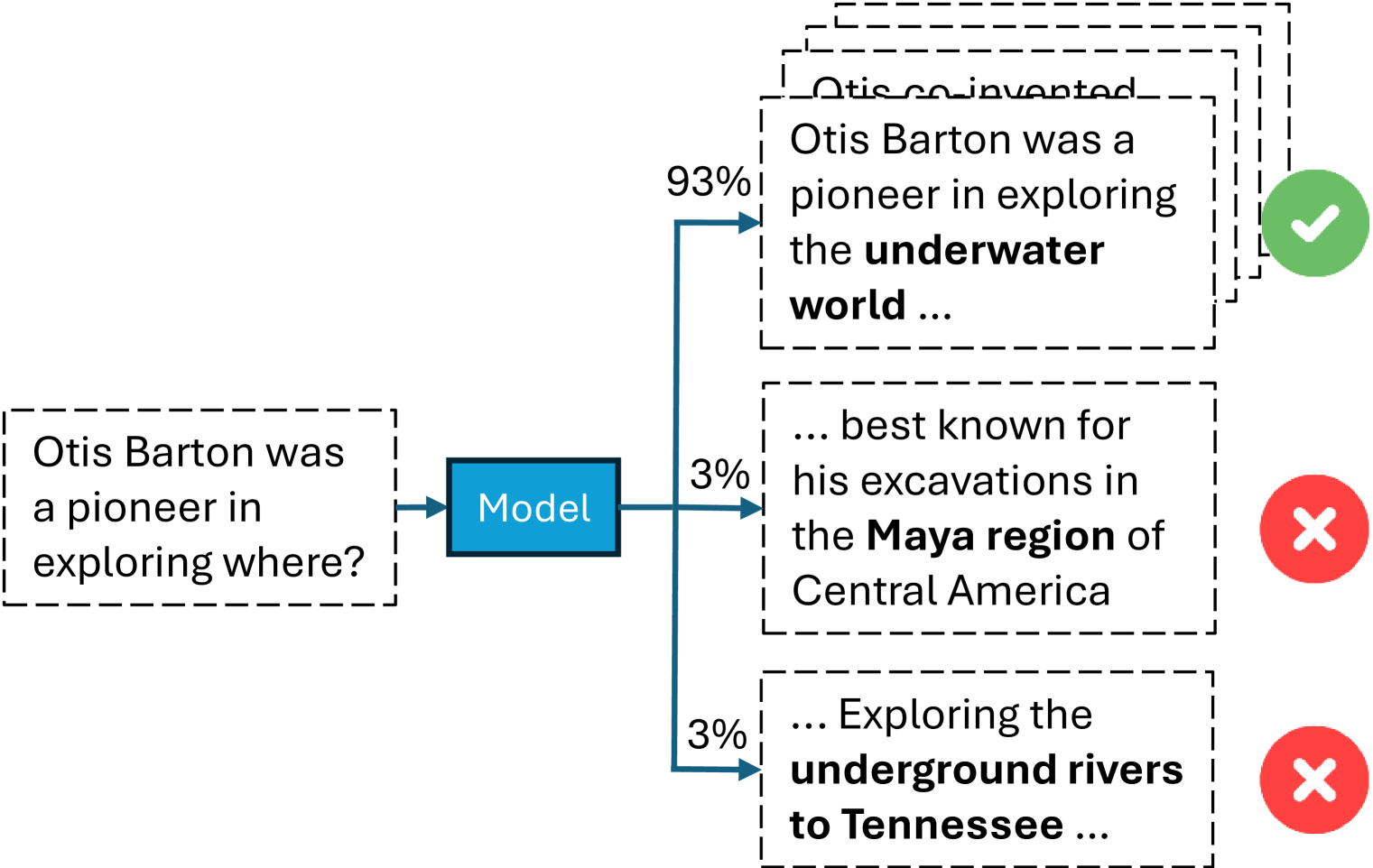

## Diagram: Model Output for a Factual Question

### Overview

The image is a flowchart showing how a machine learning model handles a factual question: it produces several candidate answers, each with a confidence score and a correctness indicator. The layout separates the input, the model, and the candidate outputs.

### Components/Axes

The diagram is structured from left to right:

1. **Input (Left):** A dashed-line box containing the question.

2. **Processing (Center):** A solid blue rectangle labeled "Model".

3. **Outputs (Right):** Three dashed-line boxes stacked vertically, each containing a possible answer. Arrows connect the "Model" to each output box.

4. **Confidence Scores:** Percentage values are placed on the arrows leading to each output.

5. **Correctness Indicators:** Icons placed to the right of each output box.

### Detailed Analysis

**1. Input Question:**

* **Text:** "Otis Barton was a pioneer in exploring where?"

* **Location:** Left side of the diagram, enclosed in a dashed-line box.

**2. Model:**

* **Label:** "Model"

* **Location:** Center, represented by a solid blue rectangle. An arrow points from the input question to this box.

**3. Outputs (from top to bottom):**

* **Output 1 (Top):**

    * **Text:** "Otis Barton was a pioneer in exploring the **underwater world** ..."

    * **Confidence Score:** 93% (displayed on the arrow leading to this box).

    * **Correctness Indicator:** A green circle with a white checkmark (✓), positioned to the right of the text box. This indicates a correct answer.

    * **Spatial Note:** This is the topmost output box. The text "underwater world" is in bold.

* **Output 2 (Middle):**

    * **Text:** "... best known for his excavations in the **Maya region** of Central America"

    * **Confidence Score:** 3% (displayed on the arrow leading to this box).

    * **Correctness Indicator:** A red circle with a white 'X', positioned to the right of the text box. This indicates an incorrect answer.

    * **Spatial Note:** This is the middle output box. The text "Maya region" is in bold.

* **Output 3 (Bottom):**

    * **Text:** "... Exploring the **underground rivers to Tennessee** ..."

    * **Confidence Score:** 3% (displayed on the arrow leading to this box).

    * **Correctness Indicator:** A red circle with a white 'X', positioned to the right of the text box. This indicates an incorrect answer.

    * **Spatial Note:** This is the bottom output box. The text "underground rivers to Tennessee" is in bold.

### Key Observations

1. **Confidence Distribution:** The model assigns a very high confidence (93%) to one answer and an equal, very low confidence (3% each) to the other two. The three displayed scores sum to 99%; the diagram does not say where the remaining 1% goes (it may be rounding, or it may belong to outputs that are not shown).

2. **Correctness Correlation:** The answer with the highest confidence score (93%) is the only one marked as correct (green checkmark). The two low-confidence answers are both marked as incorrect (red X).

3. **Text Formatting:** Key phrases within the answers ("underwater world", "Maya region", "underground rivers to Tennessee") are bolded, likely to highlight the core subject of each proposed answer.

4. **Diagram Semantics:** The use of dashed-line boxes for inputs and outputs versus a solid box for the "Model" may visually distinguish between data and the processing unit.
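The first two observations can be checked mechanically. The sketch below is only an illustration: the `Output` record and its field names are hypothetical and do not come from the diagram, and the values are transcribed from the three output boxes. It picks the highest-confidence answer, confirms that it is the one marked correct, and computes how much of the 100% is not covered by the displayed scores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Output:
    """One output box from the diagram (hypothetical record type)."""
    key_phrase: str   # the bolded phrase in the answer text
    confidence: int   # percentage shown on the arrow
    correct: bool     # True for the green checkmark, False for the red X


# Values transcribed from the diagram, top to bottom.
outputs = [
    Output("underwater world", 93, True),
    Output("Maya region", 3, False),
    Output("underground rivers to Tennessee", 3, False),
]

# Highest-confidence answer and whether it is the correct one.
top = max(outputs, key=lambda o: o.confidence)

# Share of confidence accounted for by the displayed answers.
shown_mass = sum(o.confidence for o in outputs)
unshown_mass = 100 - shown_mass

print(f"Top answer: {top.key_phrase} ({top.confidence}%), correct={top.correct}")
print(f"Displayed scores sum to {shown_mass}%, leaving {unshown_mass}% unaccounted for")
```

Integer percentages are used on purpose so the sum is exact and not subject to floating-point rounding.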

### Interpretation

This diagram serves as a clear visualization of a model's inference process for a factual query. It demonstrates a scenario where the model is highly confident in a single, correct answer and assigns minimal, equal probability to incorrect distractors.

In this example, the model strongly and correctly associates the entity "Otis Barton" with "underwater world" exploration. The incorrect answers reference plausible but wrong domains (archaeology in the Maya region, speleology in Tennessee), and the model assigns them only 3% each. A single question cannot show how well the model separates these fields in general, but here it clearly favors the correct one.

The 93% vs. 3% confidence split shows that the model's scoring is decisive in this case and leaves little ambiguity. This could reflect either a well-trained model with clear factual boundaries or a test case chosen to showcase high-confidence correct retrieval; the diagram alone does not say which. The diagram communicates not just *what* the model answered, but also its *certainty* and the *validity* of that answer.