TECHNICAL ASSET FINGERPRINT

1022bd6e0128b0b1bfc8f923


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


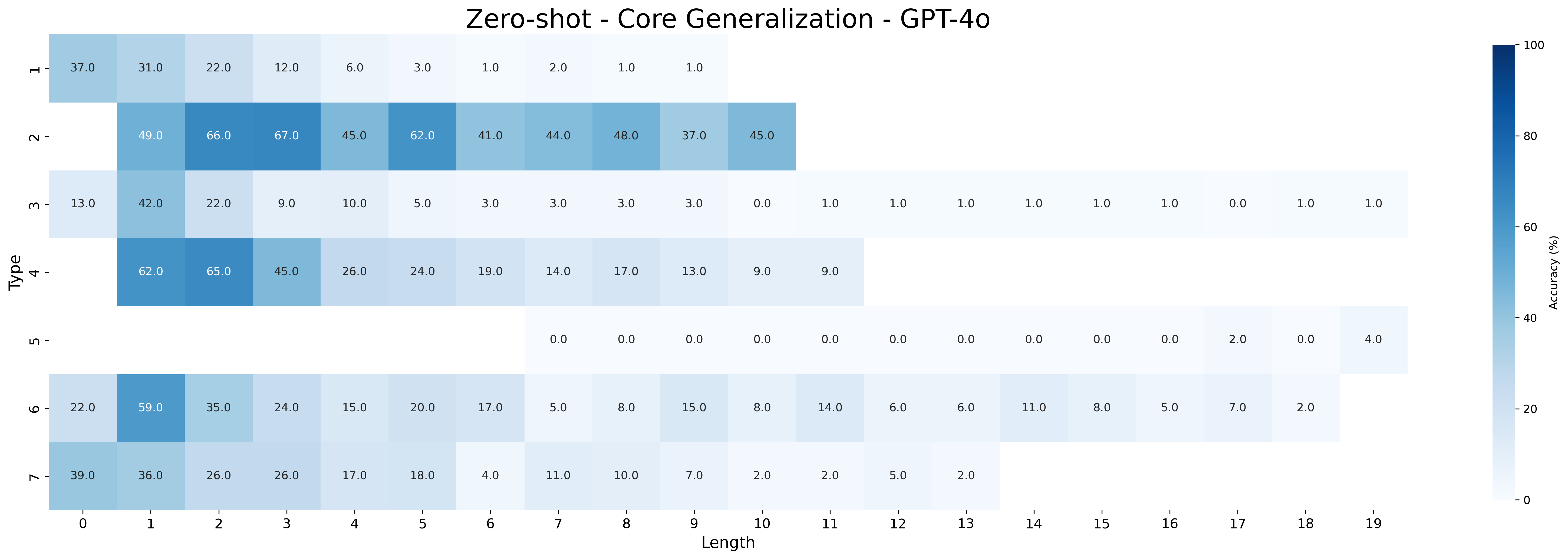

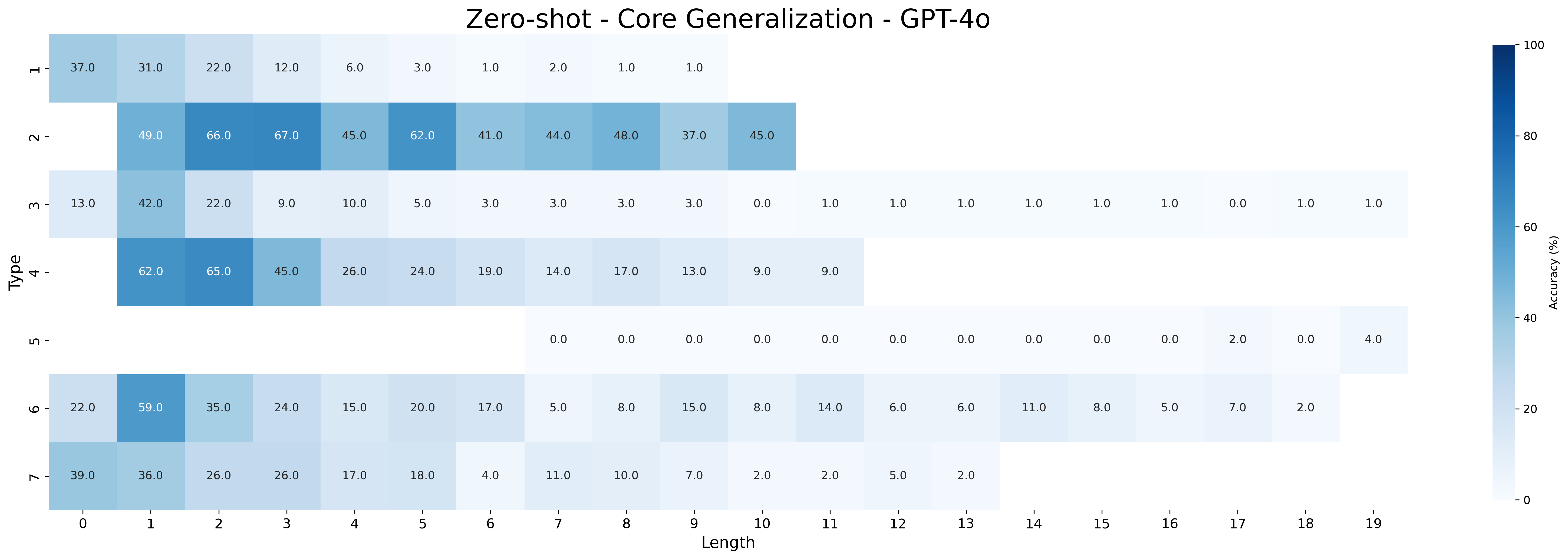

## Heatmap: Zero-shot - Core Generalization - GPT-4o

### Overview

The image is a heatmap visualizing the zero-shot core generalization performance of GPT-4o. The heatmap displays accuracy percentages for different "Types" across varying "Lengths." The color intensity corresponds to the accuracy, with darker blue shades indicating higher accuracy and lighter shades indicating lower accuracy.

### Components/Axes

* **Title:** Zero-shot - Core Generalization - GPT-4o

* **X-axis:** Length (ranging from 0 to 19)

* **Y-axis:** Type (categorical, labeled 1 through 7)

* **Color Scale (Legend):** Accuracy (%) ranging from 0% (lightest shade) to 100% (darkest shade of blue). The color bar is vertically oriented on the right side of the heatmap.
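The color encoding described above can be sketched as a linear interpolation between a light endpoint at 0% and a dark-blue endpoint at 100%. The RGB endpoints below are assumptions chosen for illustration. They are not colors sampled from the figure, and the figure's actual colormap may not be linear.

```python
# Hypothetical RGB endpoints: near-white for 0% and dark blue for 100%.
# These are illustrative assumptions, not colors sampled from the figure.
LIGHT = (247, 251, 255)
DARK = (8, 48, 107)


def accuracy_to_rgb(accuracy, low=LIGHT, high=DARK):
    """Map an accuracy in percent to an RGB triple by linear interpolation."""
    # Clamp to the colorbar's 0-100 range before interpolating.
    t = min(max(accuracy / 100.0, 0.0), 1.0)
    return tuple(round(lo + t * (hi - lo)) for lo, hi in zip(low, high))


if __name__ == "__main__":
    for value in (0.0, 37.0, 67.0, 100.0):
        print(f"{value:5.1f}% -> {accuracy_to_rgb(value)}")
```

Every channel of the dark endpoint is lower than the light one, so higher accuracy always yields a darker cell. This matches the legend's direction.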

### Detailed Analysis

The heatmap presents accuracy values for each combination of "Type" and "Length." Here's a breakdown of the data:

* **Type 1:**
  * Length 0: 37.0%
  * Length 1: 31.0%
  * Length 2: 22.0%
  * Length 3: 12.0%
  * Length 4: 6.0%
  * Length 5: 3.0%
  * Length 6: 1.0%
  * Length 7: 2.0%
  * Length 8: 1.0%
  * Length 9: 1.0%

* **Type 2:**
  * Length 0: 49.0%
  * Length 1: 66.0%
  * Length 2: 67.0%
  * Length 3: 45.0%
  * Length 4: 62.0%
  * Length 5: 41.0%
  * Length 6: 44.0%
  * Length 7: 48.0%
  * Length 8: 37.0%
  * Length 9: 45.0%

* **Type 3:**
  * Length 0: 13.0%
  * Length 1: 42.0%
  * Length 2: 22.0%
  * Length 3: 9.0%
  * Length 4: 10.0%
  * Length 5: 5.0%
  * Length 6: 3.0%
  * Length 7: 3.0%
  * Length 8: 3.0%
  * Length 9: 3.0%
  * Length 10: 0.0%
  * Length 11: 1.0%
  * Length 12: 1.0%
  * Length 13: 1.0%
  * Length 14: 1.0%
  * Length 15: 1.0%
  * Length 16: 1.0%
  * Length 17: 0.0%
  * Length 18: 1.0%
  * Length 19: 1.0%

* **Type 4:**
  * Length 1: 62.0%
  * Length 2: 65.0%
  * Length 3: 45.0%
  * Length 4: 26.0%
  * Length 5: 24.0%
  * Length 6: 19.0%
  * Length 7: 14.0%
  * Length 8: 17.0%
  * Length 9: 13.0%
  * Length 10: 9.0%
  * Length 11: 9.0%

* **Type 5:**
  * Length 7: 0.0%
  * Length 8: 0.0%
  * Length 9: 0.0%
  * Length 10: 0.0%
  * Length 11: 0.0%
  * Length 12: 0.0%
  * Length 13: 0.0%
  * Length 14: 0.0%
  * Length 15: 0.0%
  * Length 16: 0.0%
  * Length 17: 2.0%
  * Length 18: 0.0%
  * Length 19: 4.0%

* **Type 6:**
  * Length 0: 22.0%
  * Length 1: 59.0%
  * Length 2: 35.0%
  * Length 3: 24.0%
  * Length 4: 15.0%
  * Length 5: 20.0%
  * Length 6: 17.0%
  * Length 7: 5.0%
  * Length 8: 8.0%
  * Length 9: 15.0%
  * Length 10: 8.0%
  * Length 11: 14.0%
  * Length 12: 6.0%
  * Length 13: 6.0%
  * Length 14: 11.0%
  * Length 15: 8.0%
  * Length 16: 5.0%
  * Length 17: 7.0%
  * Length 18: 2.0%

* **Type 7:**
  * Length 0: 39.0%
  * Length 1: 36.0%
  * Length 2: 26.0%
  * Length 3: 26.0%
  * Length 4: 17.0%
  * Length 5: 18.0%
  * Length 6: 4.0%
  * Length 7: 11.0%
  * Length 8: 10.0%
  * Length 9: 7.0%
  * Length 10: 2.0%
  * Length 11: 2.0%
  * Length 12: 5.0%
  * Length 13: 2.0%

### Key Observations

* Types 2 and 4 generally exhibit higher accuracy compared to other types.

* Type 5 has very low accuracy across all lengths, with most values at 0%.

* For most types, accuracy tends to decrease as the length increases.

* There are variations in accuracy across different types for the same length, indicating that the model's performance is type-dependent.
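The first and third observations can be checked against the transcribed values. The sketch below assumes the Detailed Analysis list is an accurate transcription. It ranks Types by mean accuracy over their measured lengths and fits an ordinary least-squares slope of accuracy against length for each Type. Types are measured over different length ranges, so a per-Type mean is only a rough summary.

```python
from statistics import mean

# Accuracy (%) by Type, keyed by Length, copied from the Detailed Analysis list.
ROWS = {
    1: dict(zip(range(0, 10), [37, 31, 22, 12, 6, 3, 1, 2, 1, 1])),
    2: dict(zip(range(0, 10), [49, 66, 67, 45, 62, 41, 44, 48, 37, 45])),
    3: dict(zip(range(0, 20), [13, 42, 22, 9, 10, 5, 3, 3, 3, 3,
                               0, 1, 1, 1, 1, 1, 1, 0, 1, 1])),
    4: dict(zip(range(1, 12), [62, 65, 45, 26, 24, 19, 14, 17, 13, 9, 9])),
    5: dict(zip(range(7, 20), [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 2, 0, 4])),
    6: dict(zip(range(0, 19), [22, 59, 35, 24, 15, 20, 17, 5, 8, 15,
                               8, 14, 6, 6, 11, 8, 5, 7, 2])),
    7: dict(zip(range(0, 14), [39, 36, 26, 26, 17, 18, 4, 11, 10, 7, 2, 2, 5, 2])),
}


def ols_slope(row):
    """Least-squares slope of accuracy against length (percentage points per length step)."""
    xs, ys = list(row.keys()), list(row.values())
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def summarize(rows):
    """Return (type, mean accuracy, slope) tuples sorted by mean accuracy, highest first."""
    stats = [(t, mean(row.values()), ols_slope(row)) for t, row in rows.items()]
    return sorted(stats, key=lambda s: s[1], reverse=True)


if __name__ == "__main__":
    for type_id, avg, slope in summarize(ROWS):
        print(f"Type {type_id}: mean {avg:5.1f}%, slope {slope:+.2f} points per length step")
```

A negative slope indicates that accuracy falls as length grows. On these values, Type 5 is the only row with a positive slope. That slope comes entirely from its two small non-zero cells at Lengths 17 and 19.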

### Interpretation

The heatmap provides insights into the zero-shot core generalization capabilities of the GPT-4o model. The model's performance varies significantly depending on the "Type" and "Length" of the input. The higher accuracy values for Types 2 and 4 suggest that the model is better at generalizing for these specific types. The decreasing accuracy with increasing length indicates that the model's performance degrades as the input sequence becomes longer, which is a common challenge in sequence modeling. The near-zero accuracy for Type 5 suggests a significant limitation in the model's ability to generalize to this particular type. These findings can be used to identify areas where the model excels and areas where further improvements are needed to enhance its generalization capabilities.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it



## Heatmap: Zero-shot - Core Generalization - GPT-4o

### Overview

This image presents a heatmap visualizing the accuracy of GPT-4o across different 'Type' and 'Length' combinations. The heatmap uses a color gradient to represent accuracy percentages, ranging from approximately 0% (lightest shade) to 100% (darkest shade). The heatmap is structured as a grid, with 'Type' on the vertical axis and 'Length' on the horizontal axis.

### Components/Axes

* **Title:** "Zero-shot - Core Generalization - GPT-4o" (positioned at the top-center)

* **Vertical Axis (Type):** Labels are: "H", "Z", "M", "4", "U", "O", "7".

* **Horizontal Axis (Length):** Labels are integers from 0 to 19, representing length.

* **Color Scale:** A gradient scale on the right side indicates accuracy percentage, ranging from 0% (lightest color) to 100% (darkest color).

* **Data Cells:** Each cell in the grid represents the accuracy percentage for a specific 'Type' and 'Length' combination. The values are displayed within each cell.

### Detailed Analysis

The heatmap displays accuracy percentages for each combination of 'Type' and 'Length'. Here's a breakdown of the data, reading row by row:

* **Type H:**
  * Length 0: 37.0%
  * Length 1: 31.0%
  * Length 2: 22.0%
  * Length 3: 6.0%
  * Length 4: 3.0%
  * Length 5: 2.0%
  * Length 6: 1.0%
  * Length 7: 1.0%
  * Length 8: 1.0%
  * Length 9: 1.0%
  * Length 10: 1.0%
  * Length 11: 0.0%
  * Length 12: 0.0%
  * Length 13: 0.0%
  * Length 14: 0.0%
  * Length 15: 0.0%
  * Length 16: 0.0%
  * Length 17: 2.0%
  * Length 18: 0.0%
  * Length 19: 4.0%

* **Type Z:**
  * Length 0: 49.0%
  * Length 1: 66.0%
  * Length 2: 67.0%
  * Length 3: 45.0%
  * Length 4: 62.0%
  * Length 5: 44.0%
  * Length 6: 48.0%
  * Length 7: 37.0%
  * Length 8: 45.0%

* **Type M:**
  * Length 0: 42.0%
  * Length 1: 22.0%
  * Length 2: 9.0%
  * Length 3: 10.0%
  * Length 4: 5.0%
  * Length 5: 3.0%
  * Length 6: 3.0%
  * Length 7: 3.0%
  * Length 8: 1.0%
  * Length 9: 1.0%
  * Length 10: 1.0%
  * Length 11: 1.0%
  * Length 12: 1.0%
  * Length 13: 1.0%
  * Length 14: 1.0%
  * Length 15: 1.0%
  * Length 16: 1.0%
  * Length 17: 1.0%
  * Length 18: 1.0%
  * Length 19: 1.0%

* **Type 4:**
  * Length 0: 62.0%
  * Length 1: 65.0%
  * Length 2: 45.0%
  * Length 3: 26.0%
  * Length 4: 24.0%
  * Length 5: 19.0%
  * Length 6: 17.0%
  * Length 7: 13.0%
  * Length 8: 9.0%

* **Type U:**
  * Length 0: 0.0%
  * Length 1: 0.0%
  * Length 2: 0.0%
  * Length 3: 0.0%
  * Length 4: 0.0%
  * Length 5: 0.0%
  * Length 6: 0.0%
  * Length 7: 0.0%
  * Length 8: 0.0%
  * Length 9: 0.0%
  * Length 10: 0.0%
  * Length 11: 0.0%
  * Length 12: 0.0%
  * Length 13: 2.0%
  * Length 14: 0.0%
  * Length 15: 0.0%
  * Length 16: 0.0%
  * Length 17: 0.0%
  * Length 18: 0.0%
  * Length 19: 4.0%

* **Type O:**
  * Length 0: 22.0%
  * Length 1: 59.0%
  * Length 2: 35.0%
  * Length 3: 24.0%
  * Length 4: 20.0%
  * Length 5: 17.0%
  * Length 6: 8.0%
  * Length 7: 15.0%
  * Length 8: 14.0%

* **Type 7:**
  * Length 0: 39.0%
  * Length 1: 36.0%
  * Length 2: 26.0%
  * Length 3: 17.0%
  * Length 4: 18.0%
  * Length 5: 11.0%
  * Length 6: 10.0%
  * Length 7: 2.0%
  * Length 8: 5.0%
  * Length 9: 2.0%

### Key Observations

* **Type Z** generally exhibits higher accuracy percentages, particularly at lengths 1 and 2, peaking at 67.0%.

* **Type U** consistently shows very low accuracy, near 0% at most lengths, with slight increases at lengths 13 (2.0%) and 19 (4.0%).

* Accuracy tends to decrease as 'Length' increases for most 'Type' values.

* The highest accuracy value is 67.0% (Type Z, Length 2).

* The lowest accuracy value is 0.0%. Type U records it at Lengths 0–12 and 14–18, and Type H at Lengths 11–16 and 18.
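Claims about the highest and lowest values can be verified mechanically. For brevity, this sketch covers only two rows of the list above, Type Z and Type U. Adding the remaining Types to `ROWS` would check the whole grid. The function reports every cell that reaches the extreme value, which matters here because 0.0% occurs at many lengths.

```python
# Two rows from the Type-by-Length list above (Type Z and Type U), keyed by Length.
ROWS = {
    "Z": dict(zip(range(0, 9), [49, 66, 67, 45, 62, 44, 48, 37, 45])),
    "U": dict(zip(range(0, 20), [0] * 13 + [2] + [0] * 5 + [4])),
}


def extremes(rows):
    """Return ((max_value, cells), (min_value, cells)); cells are (type, length) pairs."""
    cells = [(t, length, v) for t, row in rows.items() for length, v in row.items()]
    hi = max(v for _, _, v in cells)
    lo = min(v for _, _, v in cells)

    def cells_at(target):
        # Every (type, length) cell whose value equals the target.
        return [(t, length) for t, length, v in cells if v == target]

    return (hi, cells_at(hi)), (lo, cells_at(lo))


if __name__ == "__main__":
    (hi, hi_cells), (lo, lo_cells) = extremes(ROWS)
    print(f"max {hi}% at {hi_cells}")
    print(f"min {lo}% at {len(lo_cells)} cells")
```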

### Interpretation

The heatmap demonstrates the performance of GPT-4o in a zero-shot core generalization setting, evaluating its accuracy across different input 'Types' and 'Lengths'. The significant variation in accuracy based on 'Type' suggests that the model performs better on certain types of tasks or inputs than others. The decline in accuracy with increasing 'Length' indicates a potential challenge in maintaining performance with longer inputs. The extremely low accuracy for 'Type U' suggests this type of input is particularly difficult for the model to handle in a zero-shot setting. This data could be used to identify areas where the model needs improvement, such as focusing on enhancing its performance on 'Type U' inputs or improving its ability to handle longer sequences. The heatmap provides a clear visual representation of the model's strengths and weaknesses, enabling targeted development efforts.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Heatmap: Zero-shot Core Generalization Performance of GPT-4o

### Overview

This image is a heatmap visualizing the zero-shot accuracy (in percentage) of a model identified as "GPT-4o" on a "Core Generalization" task. The performance is broken down across two dimensions: "Type" (vertical axis, categories 1-7) and "Length" (horizontal axis, values 0-19). The color intensity represents accuracy, with a scale from 0% (lightest) to 100% (darkest blue).

### Components/Axes

* **Title:** "Zero-shot - Core Generalization - GPT-4o"

* **Vertical Axis (Y-axis):** Labeled "Type". Contains 7 discrete categories, numbered 1 through 7 from top to bottom.

* **Horizontal Axis (X-axis):** Labeled "Length". Contains 20 discrete values, numbered 0 through 19 from left to right.

* **Color Bar/Legend:** Located on the far right. It is a vertical gradient bar labeled "Accuracy (%)". The scale runs from 0 at the bottom to 100 at the top, with tick marks at 0, 20, 40, 60, 80, and 100. The color transitions from very light blue/white (0%) to dark blue (100%).

* **Data Grid:** The main body of the chart is a grid of cells. Each cell corresponds to a specific (Type, Length) pair. The cell's background color and the numerical value printed within it indicate the accuracy percentage. Some cells are blank, indicating no data or a value of 0 that is not displayed numerically.

### Detailed Analysis

The following table reconstructs the accuracy data for each Type across the available Lengths. Values are transcribed directly from the image. Blank cells are noted as "N/A".

| Type | Length 0 | Length 1 | Length 2 | Length 3 | Length 4 | Length 5 | Length 6 | Length 7 | Length 8 | Length 9 | Length 10 | Length 11 | Length 12 | Length 13 | Length 14 | Length 15 | Length 16 | Length 17 | Length 18 | Length 19 |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| **1** | 37.0 | 31.0 | 22.0 | 12.0 | 6.0 | 3.0 | 1.0 | 2.0 | 1.0 | 1.0 | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| **2** | N/A | 49.0 | 66.0 | 67.0 | 45.0 | 62.0 | 41.0 | 44.0 | 48.0 | 37.0 | 45.0 | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| **3** | 13.0 | 42.0 | 22.0 | 9.0 | 10.0 | 5.0 | 3.0 | 3.0 | 3.0 | 3.0 | 0.0 | 1.0 | 1.0 | 1.0 | 1.0 | 1.0 | 1.0 | 0.0 | 1.0 | 1.0 |
| **4** | N/A | 62.0 | 65.0 | 45.0 | 26.0 | 24.0 | 19.0 | 14.0 | 17.0 | 13.0 | 9.0 | 9.0 | N/A | N/A | N/A | N/A | N/A | N/A | N/A | N/A |
| **5** | N/A | N/A | N/A | N/A | N/A | N/A | N/A | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 2.0 | 0.0 | 4.0 |
| **6** | 22.0 | 59.0 | 35.0 | 24.0 | 15.0 | 20.0 | 17.0 | 5.0 | 8.0 | 15.0 | 8.0 | 14.0 | 6.0 | 6.0 | 11.0 | 8.0 | 5.0 | 7.0 | 2.0 | N/A |
| **7** | 39.0 | 36.0 | 26.0 | 26.0 | 17.0 | 18.0 | 4.0 | 11.0 | 10.0 | 7.0 | 2.0 | 2.0 | 5.0 | 2.0 | N/A | N/A | N/A | N/A | N/A | N/A |
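To reuse the table above programmatically, a small parser can convert it into a nested mapping. This is a sketch that assumes the layout used here: the first column holds the Type, the remaining headers carry the Length, and "N/A" or an empty cell marks missing data. `SAMPLE` is a three-column excerpt of the table, not the full grid. Blank lines between rows are tolerated.

```python
import re
from typing import Dict, Optional


def parse_heatmap_table(markdown: str) -> Dict[str, Dict[int, Optional[float]]]:
    """Parse a Type-by-Length markdown table into {type: {length: accuracy}}.

    Blank cells and "N/A" become None; blank lines between rows are ignored.
    """
    lines = [ln.strip() for ln in markdown.splitlines() if ln.strip().startswith("|")]
    header = [cell.strip() for cell in lines[0].strip("|").split("|")]
    # Column headers may read "Length 3" or just "3"; keep the integer only.
    lengths = [int(re.search(r"\d+", h).group()) for h in header[1:]]
    table: Dict[str, Dict[int, Optional[float]]] = {}
    for line in lines[1:]:
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if all(set(cell) <= set(":-") for cell in cells):
            continue  # alignment row such as | :--- | :--- |
        label = cells[0].strip("*")  # "**1**" -> "1"
        table[label] = {
            length: None if cell in ("", "N/A") else float(cell)
            for length, cell in zip(lengths, cells[1:])
        }
    return table


SAMPLE = """
| Type | Length 0 | Length 1 | Length 2 |

| :--- | :--- | :--- | :--- |

| **1** | 37.0 | 31.0 | 22.0 |

| **2** | N/A | 49.0 | 66.0 |
"""

if __name__ == "__main__":
    print(parse_heatmap_table(SAMPLE))
```

The same function also handles tables whose length headers are bare integers and whose missing cells are left empty.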

### Key Observations

1. **Performance Decay with Length:** For most Types (especially 1, 4, 6, 7), accuracy shows a clear downward trend as "Length" increases. The highest values are typically found at the shortest lengths (0-3).

2. **Type-Specific Performance:**
   * **Type 2** demonstrates the strongest and most consistent performance, maintaining accuracies between 37% and 67% across its measured lengths (1-10).
   * **Type 5** shows near-total failure, with accuracies of 0% for almost all lengths (7-19), except for minor blips of 2.0% and 4.0% at lengths 17 and 19.
   * **Type 3** has a wide range of lengths (0-19) but generally low accuracy, peaking at 42.0% at Length 1 and frequently dropping to 0-1%.

3. **Data Coverage:** The heatmap is not a complete rectangle. Different Types have data for different ranges of Lengths. Type 3 has the broadest coverage (Lengths 0-19), while Type 2 and Type 4 have narrower ranges.

4. **Peak Values:** The highest accuracy recorded is **67.0%** for **Type 2 at Length 3**. The second highest is **66.0%** for **Type 2 at Length 2**.

### Interpretation

This heatmap provides a diagnostic view of GPT-4o's zero-shot generalization capabilities on a specific core task. The data suggests the following:

* **Task Difficulty Scales with Length:** The predominant trend of decreasing accuracy with increasing "Length" indicates that the core generalization task becomes significantly harder for the model as the sequence or problem length grows. This is a common challenge in language model evaluation.

* **Heterogeneous Task Types:** The "Type" axis likely represents different sub-tasks or problem formats within the core generalization benchmark. The stark performance differences between types (e.g., Type 2 vs. Type 5) reveal that the model's zero-shot capability is highly dependent on the specific structure or nature of the problem. Type 2 appears to be a format the model handles well, while Type 5 is almost completely intractable for it in a zero-shot setting.

* **Zero-Shot Limitations:** The overall low-to-moderate accuracy values (mostly below 50%) for longer lengths and certain types highlight the limitations of zero-shot prompting for complex generalization. The model struggles to infer the correct pattern or solution without examples, especially as problem complexity (length) increases.

* **Benchmark Design:** The structure of the heatmap, with its varying data ranges per type, suggests the benchmark itself may have different length distributions for different problem types, or that some types are only defined for certain lengths.

In summary, the image reveals that GPT-4o's zero-shot core generalization is highly uneven: it is moderately effective for short problems of certain types (notably Type 2) but degrades rapidly with length and fails almost completely on other problem types (notably Type 5). This points to specific areas where the model's reasoning or pattern-matching abilities are robust and where they are critically lacking.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


# Technical Document: Zero-shot - Core Generalization - GPT-4o Heatmap Analysis

## 1. Axis Labels and Titles

- **Title**: "Zero-shot - Core Generalization - GPT-4o" (centered at top)

- **X-axis**: "Length" (horizontal axis, values 0–19)

- **Y-axis**: "Type" (vertical axis, categories 1–7)

- **Colorbar**: "Accuracy (%)" (right side; gradient from light blue at 0% to dark blue at 100%)

## 2. Categories and Sub-Categories

- **Y-axis Categories (Types)**:
  - Type 1
  - Type 2
  - Type 3
  - Type 4
  - Type 5
  - Type 6
  - Type 7

- **X-axis Sub-Categories (Lengths)**:
  - 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19

## 3. Data Table Reconstruction

| Type \ Length | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 |
|---------------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|
| **1** | 37.0 | 31.0 | 22.0 | 12.0 | 6.0 | 3.0 | 1.0 | 2.0 | 1.0 | 1.0 | | | | | | | | | | |
| **2** | | 49.0 | 66.0 | 67.0 | 45.0 | 62.0 | 41.0 | 44.0 | 48.0 | 37.0 | 45.0 | | | | | | | | | |
| **3** | 13.0 | 42.0 | 22.0 | 9.0 | 10.0 | 5.0 | 3.0 | 3.0 | 3.0 | 3.0 | 0.0 | 1.0 | 1.0 | 1.0 | 1.0 | 1.0 | 1.0 | 1.0 | 0.0 | 1.0 |
| **4** | 62.0 | 65.0 | 45.0 | 26.0 | 24.0 | 19.0 | 14.0 | 17.0 | 13.0 | 9.0 | | | | | | | | | | |
| **5** | | | | | | | | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 2.0 | 0.0 | 4.0 |
| **6** | 22.0 | 59.0 | 35.0 | 24.0 | 15.0 | 20.0 | 17.0 | 5.0 | 8.0 | 15.0 | 8.0 | 14.0 | 6.0 | 6.0 | 11.0 | 8.0 | 5.0 | 7.0 | 2.0 | |
| **7** | 39.0 | 36.0 | 26.0 | 26.0 | 17.0 | 18.0 | 4.0 | 11.0 | 10.0 | 7.0 | 2.0 | 2.0 | 5.0 | 2.0 | | | | | | |
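This page collects several independent transcriptions of the same chart, so it is worth checking cell-level disagreements between them before reusing the numbers. The minimal sketch below compares the Type 3 row of this table with the Type 3 row of the healer-alpha table earlier on the page. The choice of row is only an illustration.

```python
# Type 3 as transcribed in two tables on this page, keyed by Length.
HEALER_TYPE_3 = dict(zip(range(20), [13, 42, 22, 9, 10, 5, 3, 3, 3, 3,
                                     0, 1, 1, 1, 1, 1, 1, 0, 1, 1]))
NEMOTRON_TYPE_3 = dict(zip(range(20), [13, 42, 22, 9, 10, 5, 3, 3, 3, 3,
                                       0, 1, 1, 1, 1, 1, 1, 1, 0, 1]))


def diff_rows(a, b):
    """List (length, a_value, b_value) for every length where two rows disagree.

    A length missing from one row is reported with None on that side.
    """
    return [
        (length, a.get(length), b.get(length))
        for length in sorted(set(a) | set(b))
        if a.get(length) != b.get(length)
    ]


if __name__ == "__main__":
    for length, left, right in diff_rows(HEALER_TYPE_3, NEMOTRON_TYPE_3):
        print(f"Length {length}: {left} vs {right}")
```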

## 4. Key Trends and Observations

- **General Pattern**: Accuracy decreases as length increases for most types.

- **Type 2**:
  - Highest accuracy at Length 2 (66%) and Length 3 (67%).
  - Drops to 45% at Length 4, then fluctuates between 37% and 62% through Length 10.

- **Type 5**:
  - 0% at every recorded length (7–19) except Length 17 (2%) and Length 19 (4%).

- **Type 7**:
  - Overall decline from 39% (Length 0) to 2% (Length 13), though not strictly monotonic (e.g., it rises from 4% at Length 6 to 11% at Length 7).

- **Color Consistency**:
  - Darker blues (e.g., 66%, 67%) align with the colorbar's high-accuracy range.
  - Light blues (e.g., 1%, 2%) match the low-accuracy range.
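To tell whether a decline is strictly monotonic, list the lengths at which accuracy goes up rather than down. The short sketch below runs over the Type 7 row of the table above. An empty result would mean the row never increases.

```python
# Type 7 as transcribed in the table above (Lengths 0-13).
TYPE_7 = dict(zip(range(14), [39, 36, 26, 26, 17, 18, 4, 11, 10, 7, 2, 2, 5, 2]))


def rises(row):
    """Return the lengths at which accuracy is higher than at the previous measured length."""
    lengths = sorted(row)
    return [cur for prev, cur in zip(lengths, lengths[1:]) if row[cur] > row[prev]]


if __name__ == "__main__":
    print(rises(TYPE_7))  # [5, 7, 12]
```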

## 5. Spatial Grounding

- **Legend Position**: Colorbar on the right, vertical orientation.

- **Title Position**: Centered at the top of the chart.

- **Cell Placement**:
  - Rows correspond to Types (1–7).
  - Columns correspond to Lengths (0–19).

## 6. Component Isolation

- **Header**: Title and axis labels.

- **Main Chart**: Heatmap grid with numerical values.

- **Legend**: Colorbar with accuracy scale (right side).

## 7. Transcribed Embedded Text

All numerical values in the heatmap cells are transcribed above. No non-English text detected.

## 8. Verification Notes

- All legend colors match the heatmap's color intensity.

- Trends (e.g., Type 2's peak at Length 3) align with numerical data.

- No omitted labels or axis markers.
